What Is the “Human Diet”?

3000 words

Is there one diet (or one diet with slight modifications) that all humans should be eating? I’m skeptical of such claims. Both vegans (those who do not eat or use animal products) and carnivores (those who eat only animal products) have, in my opinion, some warped views on diet and human evolution. Both positions are extreme, with little to no support; both have wrong ideas about diet throughout our evolution, though both get some things right. While it is hard to pinpoint what the “human diet” is, there were clearly certain things that we ate throughout our evolutionary niches in ancestral Africa that we “should” be eating today (in good quantities).

Although it is difficult to reconstruct the diet of early hominids due to a lack of specimens (Luca, Perry, and Rienzo, 2014), by studying the eating behavior of our closest evolutionary relatives, the chimpanzees, we can get an idea of what our last common ancestor (LCA) ate and how it fed (Ulijaszek, Mann, and Elton, 2013). Humans have lived in nearly every niche we possibly could have and, therefore, have come across the most common foods in each ecology. If animal A is in ecosystem E with foods X, Y, and Z, then animal A eats foods X, Y, and Z, since animals consume what is in their ecosystem. Knowing this much, the niches our ancestors lived in had to have a mix of both game and plants; therefore, that was our diet (in differing amounts, obviously). But it is more complicated than that.

According to Ulijaszek, Mann, and Elton (2013: 35), “Mammalian comparisons may be more useful than ‘Stone Age’ perspectives, as many of the attributes of hominin diets and the behaviour associated with obtaining them were probably established well before the Pleistocene, the time stone agers were around (Foley 1995; Ulijaszek 2002; Elton 2008a).” Humans eat monocots (flowering plants whose seeds have a single embryonic leaf, such as grasses), which is not common in our order. The advent of farming was us “expanding our dietary niche”, which began “the widespread adoption of agriculture [which] is an obvious point of transition to a ‘monocot world’” (Ulijaszek, Mann, and Elton, 2013). Although these foodstuffs dominate our diet, there is seasonality in what types of those foods we consume.

Since humans tend not to graze throughout the day but instead eat discrete meals (it is worth noting that the idea that one should have “three square meals a day” is a myth; see Mattson et al, 2014), we need to eat a lot when we do eat. And since we are large-bodied primates with high energy needs (our large brains account for about 20 percent of our daily caloric consumption), we need higher-quality energy. The overall quality and energy density of our diets are due to meat-eating, which folivorous/frugivorous primates do not engage in. We have a shorter gut tract, which is “often attributed to the greater reliance on faunivory in humans”, though “humans are not confined to ‘browse’ vegetation … and make extensive use of grasses and their animal consumers” (Ulijaszek, Mann, and Elton, 2013: 58). As a result, we show amazing dietary flexibility and adaptability: we can eat a wide range of foodstuffs in almost any environment we find ourselves in.

“It is difficult to pinpoint what the human diet actually is … Nonetheless, humans are frequently described as omnivores” (Ulijaszek, Mann, and Elton, 2013: 59). Omnivores normally feed at two or more trophic levels, though others define omnivory as just consuming plants and animals (Chubaty et al, 2014). Trophic level one is taken up by plants; level two by herbivores (primary consumers); level three by predators that feed on the herbivores; levels four and five by apex predators or carnivores; and a final level by detritivores, which feed on waste. Though, of course, “omnivory” is a continuum and not a category in and of itself. Humans eat primary producers (plants) and primary consumers (herbivores) and some secondary consumers (like fish), “although human omnivory may only be possible because of technological processing” (Ulijaszek, Mann, and Elton, 2013: 59). Other animals described as “omnivorous” eat only foods from one trophic level and only consume food from another level when needed.

Humans—as a species—rely on meat consumption. Fonseca-Azevedo and Herculano-Houzel (2012) showed that the energetic cost of a brain is directly related to the number of neurons in the brain. So, there were metabolic limitations in regard to brain and body size. The number of hours available to feed along with the low caloric yield of plant foods explains why great apes have such large bodies and small brains—which was probably overcome by erectus, who probably started cooking food around 1.5 mya. If we consumed only a diet of raw foods, then it would have taken us around 9 h/day to consume the calories we would need to power our brains—which is just not feasible. So it is unlikely that erectus—who was the first to have the human body plan and therefore the ability to run, which implies he would have needed higher quality energy—would have survived on a diet of raw plant foods since it would take so many hours to consume enough food to power their growing brains.

We can see that we are adapted to eating meat by looking at our intestines. Our small intestines are relatively long, whereas our large intestines are relatively short, which indicates that we became adapted to eating meat. Our “ability to eat significant quantities of meat and fish is a significant departure from the dietary norm of the haplorhine primates, especially for animals in the larger size classes.” Though “Humans share many features of their gut morphology with other primates, particularly the great apes, and have a gut structure that reflects their evolutionary heritage as plant, specifically ripe fruit, eaters” (Ulijaszek, Mann, and Elton, 2013: 63). Chimpanzees are not physiologically adapted to meat eating, which can be seen in their development of hypercholesterolemia along with vascular disease, even when fed controlled diets in captivity (Ford and Stanford, 2004).

When consuming a lot of protein, though, “rabbit starvation” needs to be kept in mind. Rabbit starvation is a type of malnutrition that arises from eating little to no fat and high amounts of protein. Since protein intake is physiologically demanding (it takes the most energy to process out of the three macros), Ben-Dor et al (2011) suggest a caloric ceiling of about 35 percent of kcal coming from protein. So erectus’ protein ceiling was 3.9 g per kg of body weight per day, whereas for Homo sapiens it was 4.0 g per kg of body weight per day. Ben-Dor et al (2011) show that erectus’ DEE (daily energy expenditure) was about 2704 kcal, with “a maximum long-term plant protein ceiling of 1014 calories”, implying that erectus was, indeed, an omnivore. So, of course, the consumption of both protein and raw plants is physiologically limited. Since erectus’ ceiling on protein intake was 947 kcal and his ceiling on raw plant intake was 1014 kcal, then, according to the model proposed by Ben-Dor et al (2011), erectus would have needed to consume about 744 kcal from fat, which is about 27 percent of his overall caloric intake and 44 percent of animal product intake.
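The arithmetic behind those percentages can be checked directly. The following is a minimal sketch that uses only the three kcal figures quoted above; the variable and function names are mine, and treating "animal product intake" as protein plus fat is a simplifying assumption rather than something spelled out in the source.

```python
# Figures for Homo erectus as quoted above from Ben-Dor et al (2011).
DEE_KCAL = 2704              # daily energy expenditure
PROTEIN_CEILING_KCAL = 947   # ceiling on protein intake
PLANT_CEILING_KCAL = 1014    # ceiling on raw plant intake


def fat_requirement(dee: int, protein_ceiling: int, plant_ceiling: int) -> int:
    """Energy left to be covered by fat once protein and plants hit their ceilings."""
    return dee - protein_ceiling - plant_ceiling


fat_kcal = fat_requirement(DEE_KCAL, PROTEIN_CEILING_KCAL, PLANT_CEILING_KCAL)

# Assumption: animal-derived energy is approximated as protein + fat.
animal_kcal = PROTEIN_CEILING_KCAL + fat_kcal

fat_share_of_total = fat_kcal / DEE_KCAL
fat_share_of_animal = fat_kcal / animal_kcal
protein_share_of_total = PROTEIN_CEILING_KCAL / DEE_KCAL

print(f"fat needed: {fat_kcal} kcal")
print(f"fat as share of total intake: {fat_share_of_total:.1%}")
print(f"fat as share of animal intake: {fat_share_of_animal:.1%}")
print(f"protein as share of total intake: {protein_share_of_total:.1%}")
```

Plain subtraction gives 743 kcal, one kcal below the "about 744" quoted above, presumably a rounding difference. The protein share also comes out at roughly 35 percent, which matches the ceiling mentioned earlier.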

Neanderthals would have consumed between 74 and 85 percent of their daily caloric energy during glacial winters from fat, with the rest coming from protein (Ben-Dor, Gopher, and Barkai, 2016), while consuming between 3,360 and 4,480 kcal per day (Steegmann, Cerny, and Holliday, 2002). (See more on Neanderthal diet here.) Neanderthals consumed a large amount of protein, about 292 grams per day (Ben-Dor, Gopher, and Barkai, 2016: 370). Since our close evolutionary cousins (Neanderthals and erectus) ate large amounts of protein and fat, they were well-acclimated, physiologically speaking, to their high-protein diets. Their diets were not so high in protein that rabbit starvation would occur, though: the animals that Neanderthals hunted and killed supplied fat in sufficient amounts. But since rabbit starvation is a huge problem for our species, “It is therefore unlikely that humans could be true carnivores in the way felids are” (Ulijaszek, Mann, and Elton, 2013: 66).

We consume a diet that is both omnivorous and eclectic, which is determined by our phylogeny through the form of our guts; we have nutritional diversity in our evolutionary history. We needed to colonize new lands and, since animals can only consume what is in their ecosystem, the foods that are edible in said ecosystem will be what is consumed by that animal. Being eclectic feeders made the migration out of Africa possible.

But humans are not true carnivores, contrary to some claims. “Meat-eating has allowed humans to colonize high latitudes and very open landscapes. However, bearing in mind the phylogenetic constraints that prevent humans from being true carnivores, such expansion was probably not accomplished through meat-eating alone. Instead, humans have used their ability to technologically harvest, produce, and consume a very wide range of foods to help exploit all major biomes” (Ulijaszek, Mann, and Elton, 2013: 67).

Humans, though, lack the gut specialization and dentition to process grasses efficiently. This means that our ancestors ate animals that ate grasses, and the C4 carbon those animals consumed is what elevated the C4 levels in the hominin fossils we have discovered. Information like this implies that our ancestors ate across a wide variety of trophic levels and had substantial dietary diversity throughout evolutionary history.

“Hominins lack the specialized dentition found in carnivorans (the group of animals that includes the cat and dog families) and other habitual meat and bone eaters, so must have pre-processed at least some of the meat in their diet” (Ulijaszek, Mann, and Elton, 2013: 81). This is where stone tools come into play (Zink and Lieberman, 2016). “Processing” food can be anything from taking out nutrients to changing how the food looks. We can look at “food processing” as a form of pre-digestion before consumption. The use of stone tools, and cooking, was imperative for us to begin the processing of meat and other foods. This gave us the ability to “pre-digest” our food before consumption, which increases the available energy in any food that is cooked/processed. For example, cooking denatures protein strands, breaks down cell walls, and gelatinizes the collagen in meat, which allows for easier chewing and digestion. Carmody et al (2016) showed that adaptation to a cooked diet began around 275 kya.

In his book Catching Fire, Wrangham (2009: 17-18) writes:

Raw-foodists are dedicated to eating 100 percent of their diets raw, or as close to 100 percent as they can manage. There are only three studies of their body weight, and all find that people who eat raw tend to be thin. The most extensive is the Giessen Raw Food study, conducted by nutritionist Corinna Koebnick and her colleagues in Germany, who used questionnaires to study 513 raw-foodists who ate from 70 to 100 percent of their diet raw. They chose to eat raw to be healthy, to prevent illness, to have a long life, or to live naturally. Raw food included not only uncooked vegetables and occasional meat, but also cold-pressed oil and honey, and some items were lightly heated such as dried fruits, dried meat, and dried fish. Body mass index (BMI), which measures weight in relation to the square of the height, was used as a measure of fatness. As the proportion of food eaten raw rose, BMI fell. The average weight loss when shifting from a cooked to a raw food diet was 26.5 pounds (12 kilograms) for women and 21.8 pounds (9.9 kilograms) for men. Among those eating a purely raw diet (31 percent), the body weights of almost a third indicated chronic energy deficiency. The scientists’ conclusion was unambiguous: “a strict raw food diet cannot guarantee an adequate energy supply.”

Also, vegetarians and meat-eaters who cook their food have similar body weights. This implies that cooking food, no matter the type, gives the body more caloric energy to use, and that raw-foodists are fighting a losing battle with biology by consuming raw foods in quantities our guts are not built for. As can be seen above in the citation from Fonseca-Azevedo and Herculano-Houzel (2012), great apes that eat nothing but raw food have the large guts and bodies needed to process the raw plant foods they eat. We cannot thrive on such a diet because it is neither calorically nor nutritionally viable for us, most importantly due to the size of our brains and their caloric requirements.

Carmody, Weintraub, and Wrangham (2011) show that modern raw-foodists who subsist on raw meat and plants have nutrient deficiencies and chronic energy deficiencies, and that females experience low fecundity, even though these raw-foodists process their foods in other ways (cooking is a form of processing, as is cutting, mashing, pounding, etc). Thus, the cooking of food seems to be needed for normal biological functioning; we have clearly evolved past consuming all raw foods. So it is clear that cooking, along with meat-eating, was imperative to our evolution. (This does not mean that humans only ate meat or that eating meat and only meat is part of our evolutionary history.) Cooking gelatinized the collagen in food and denatured its protein, leading to easier mastication: the food was not as hard after cooking, so less bite force was needed. Over time this led to smaller teeth, as seen in erectus (Zink and Lieberman, 2016). Tool use contributed as well: tools reduced food to smaller particles, which required less force per bite and eventually led to smaller teeth in our lineage.

Finally, humans are said to be “facultative carnivores.” A facultative carnivore is an animal that does best on a carnivorous diet but can survive (not thrive) on other foodstuffs when meat is not available. Applied to humans, though, this doesn’t make sense. Humans are eclectic feeders, omnivorous in nature. Yes, we began cooking about 1.5 mya; yes, meat-eating (and the cooking of said meat) is huge in the evolution of our species; yes, without meat and cooking we would not have met the energy requirements needed to split off from chimpanzees/great apes. But this does not mean that we do “best” on a carnivorous diet. There are about 7,105 ethnic groups in the world (Spencer, 2014: 1029), so to say that all of these ethnies would do the same or similar, physiologically speaking, on an all-meat diet is crazy talk. The claim that we subsisted on one type of food over the other throughout our evolutionary history is a bold one, with no basis in evolutionary history.

Marlene Zuk (2013: 103-104), author of Paleofantasy, writes:

Another implication of the importance Marlowe attaches to bow hunting is that, rather than starting out as exclusively carnivorous and then adding starches and other plant material to the diet, ancient humans have been able to increase the proportion of meat only after newer technology had come about, a mere 30,000 years ago. Other anthropologists concur that the amount of meat in the human diet grew as we diverged from our other primate ancestors. All of this means that, first, contrary to the claims of many paleo-diet proponents, the earliest humans did not have an exclusively meat-based diet that we are best adapted to eat; and second, our ancestors’ diets clearly changed dramatically and repeatedly over the last tens, not to mention hundreds, of thousands of years, even before the advent of agriculture.

The assumption that we were fully (or even mostly) carnivorous and then added plant foods/carbs is clearly false. “Fantasies” like this are “just-so stories”; they are nice-sounding stories, but reality is clearly more nuanced than people’s evolutionary and Stone Age imaginations. This makes sense, though, since we evolved from an LCA (last common ancestor) with chimpanzees some 6.3 mya (Patterson et al, 2006). Why would we then, ultimately, subsist only on an all-meat diet if our LCA with chimpanzees was most likely a forager who lived in the trees (Lieberman, 2013)?

One thing I’m sure everyone agrees on, though, is that the environments we have constructed for ourselves in the first world are maladaptive, what is termed an “evolutionary mismatch” (Lieberman, 2013; Genne-Bacon, 2014). The mismatch arises from the high-carb food environments we have constructed, with cheap foodstuffs that are loaded with sugar, salt, and fat, a combination that is much more addictive than any of the three on its own (see Kessler, 2010). This makes food more palatable, and people then want to eat more of it. Foods like this, obviously, were not in our OEE (original evolutionary environment), and therefore cause us huge problems in our modern-day environments. Evolutionary mismatches occur when technological advancement outpaces the rate at which the genome can adapt. This can clearly be seen in our societies and the explosion of obesity over the past few decades (Fung, 2016, 2018).

We did not evolve eating highly processed carbohydrates loaded with salt and sugar. That much everyone can agree on.

Conclusion

It is clear that both claims from vegans/vegetarians and carnivores are false: there is no one “human diet” that we “should” be eating. Individual variation in different physiologic processes implies that there is no one “human diet”, no matter what type of food is being pushed as “what we should be” eating. Humans are eclectic feeders; we will eat anything since “Humans show remarkable dietary flexibility and adaptability”. Furthermore, we also “have a relatively unspecialized gut, with a colon that is shorter relative to overall size than in other apes; this is often attributed to the greater reliance on faunivory in humans (Chivers and Langer 1994)” (Ulijaszek, Mann, and Elton, 2013: 58). Our dietary eclecticism can be traced back to our Australopithecine ancestors. The claim that we are either “vegetarian/vegan or carnivore” throughout our evolution is false.

Humans aren’t “natural carnivores” or “natural vegans/vegetarians.” Humans are eclectic feeders. Animals eat whatever is in their ecosystem. Ergo, humans are omnivores. Though we can’t pinpoint what the “human diet” is, since there is great variability in it due to culture/ecology, we know one thing: we did not subsist mainly on one food; we had a large variety of foods, including fallback foods, to consume throughout our evolutionary history. So claims that we evolved to eat a certain way (as vegans/vegetarians and carnivores claim) are false. (Note I am not saying that high carb diets are good; I’ve railed hard on them.)

Do Genes and Polymorphisms Explain the Differences between Eastern and Western Societies?

2150 words

In 2012, biologist Hippokratis Kiaris published a book titled Genes, Polymorphisms, and the Making of Societies: How Genetic Behavioral Traits Influence Human Cultures. His main point is that “the presence of different genes in the corresponding people has actually dictated the acquisition of these distinct cultural and historical lines, and that an alternative outcome might be unlikely” (Kiaris, 2012: 9). This is a book that I have not seen discussed in any HBD blog, and based on the premise of the book (how it purports to explain behavioral/societal outcomes between Eastern and Western society) you would think it would be. The book is short, and he speaks with a lot of determinist language. (It’s worth noting he does not discuss IQ at all.)

In the book, he discusses how genes “affect” and “dictate” behavior which then affects “collective decisions and actions” while also stating that it is “conceivable” that history, and what affects human decision-making and reactions, are also “affected by the genetic identity of the people involved” (Kiaris, 2012: 11). Kiaris argues that genetic differences between Easterners and Westerners are driven by “specific environmental conditions that apparently drove the selection of specific alleles in certain populations, which in turn developed particular cultural attitudes and norms” (Kiaris, 2012: 91).

Kiaris attempts to explain the societal differences between the peoples who adopted Platonic thought and those who adopted Confucian thought. He argues that differences between Eastern and Western societies “are not random and stochastic” but are “dictated—or if this is too strong an argument, they are influenced considerably—by the genes that these people carry.” So, Kiaris says, “what we view as a choice is rather the complex and collective outcome of the influence of people’s specific genes combined with the effects of their specific environment … [which] makes the probability for rendering a certain choice distinct between different populations” (Kiaris, 2012: 50).

The first thing that Kiaris discusses (behavior-wise) is DRD4. Alleles of this gene have been associated with miles migrated from Africa (with a correlation of .85) along with novelty-seeking and hyperactivity (which may cause the association found between DRD4 frequency and miles migrated from Africa) (Chen et al, 1999). Kiaris notes, of course, that the DRD4 alleles are unevenly distributed across the globe, with people who have migrated further from Africa having a higher frequency of these alleles. Europeans were more likely to have the “novelty-seeking” 7-repeat DRD4 allele compared to Asian populations (Chang et al, 1996). But, Kiaris (2012: 68) wisely writes (emphasis mine):

Whether these differences [in DRD alleles] represent the collective and cumulative result of selective pressure or they are due to founder effects related to the genetic composition of the early populations that inhabited the corresponding areas remains elusive and is actually impossible to prove or disprove with certainty.

Kiaris then discusses differences between Eastern and Western societies and how we might understand these differences between societies as regards novelty-seeking and the DRD4-7 distribution across the globe. Westerners are more individualistic and this concept of individuality is actually a cornerstone of Western civilization. The “increased excitability and attraction to extravagance” of Westerners, according to Kiaris, is linked to this novelty-seeking behavior which is also related to individualism “and the tendency to constantly seek for means to obtain satisfaction” (Kiaris, 2012: 68). We know that Westerners do not shy away from exploration; after all, the West discovered the East and not vice versa.

Easterners, on the other hand, are more passive and have “an attitude that reflects a certain degree of stoicism and makes life within larger—and likely collectivistic—groups of people more convenient“. Easterners, compared to Westerners, take things “the way they are” which “probably reflects their belief that there is not much one can or should do to change them. This is probably the reason that these people appear rigid against life and loyal, a fact that is also reflected historically in their relatively high political stability” (Kiaris, 2012: 68-69).

Kiaris describes DRD4 as a “prototype Westerner’s gene” (pg 83), stating that the 7R allele of this gene is found more frequently in Europeans compared to Asians. The gene has been associated with increased novelty-seeking, exploratory activity and human migrations, along with liberal ideology. These, of course, are cornerstones of Western civilization and thought, and so Kiaris argues that the higher frequency of this allele in Europeans, in part, explains certain societal differences between the East and West. Kiaris (2012: 83) then makes a bold claim:

All these features [novelty-seeking, exploratory activity and migration] indeed tend to characterize Westerners and the cultural norms they developed, posing the intriguing possibility that DRD4 can actually represent a single gene that can “predispose” for what we understand as the stereotypic Western-type behavior. Thus, we could imagine that an individual bearing the 7-repeat allele functions more efficiently in Western society while the one without this allele would probably be better suited to a society with Eastern-like structure. Alternatively, we could propose that a society with more individuals bearing the 7-repeat allele is more likely to have followed historical lines and choices more typical of a Western society, while a population with a lower number (or deficient as it is the actual case with Easterners) of individuals with the 7-repeat allele would more likely attend to the collective historical outcome of Easterners.

Kiaris (2012: 84) is, importantly, skeptical that having a high number of “novelty-seekers” and “explorers” would lead to higher scientific achievement. This is because “attempts to extrapolate from individual characteristics to those of a group of people and societies possess certain dangers and conceptual limitations.”

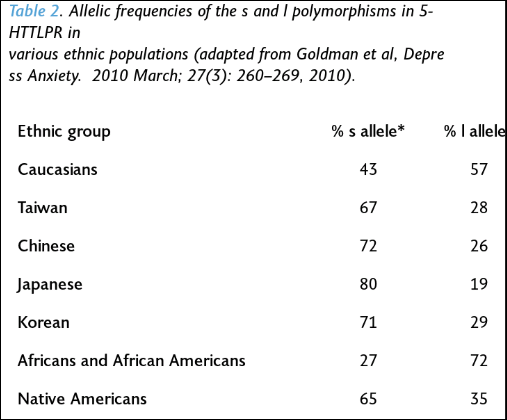

Kiaris (2012: 86) says that “collectivistic behavior … is related to the activity of serotonin.” He then goes on to cite a few instances of other polymorphisms which are associated with collective behavior as well. Goldman et al (2010) show ethnic differences in the l and s alleles (from Kiaris, 2012: 86):

It should also be noted that populations (Easterners) that had a higher frequency of the s allele had a lower prevalence of depression than Westerners. So Western societies are more likely to “suffer more frequently from various manifestations of depression and general mood disorders than those of Eastern cultures (Chiao & Blizinsky, 2010)” (Kiaris, 2012: 89).

As can be seen from the table above, Westerners are more likely to have the l allele than Easterners, which should subsequently predict higher levels of happiness in Western compared to Eastern populations. However, “happiness” is, in many ways, subjective; so how would one find an objective way to measure “happiness” cross-culturally? Kiaris (2012: 94), for his part, writes: “Intuitively speaking, though, I have to admit that I would rather expect Asians to be happier, in general, than Westerners. I cannot support this by specific arguments, but I think the reason for that is related to the individualistic approach of life that the people possess in Western societies: By operating under individualistic norms, it is unavoidably stressful, a condition that operates at the expense of the perception of individuals’ happiness.”

Kiaris discusses catechol-O-methyltransferase (COMT), which is an enzyme responsible for the inactivation of catecholamines. Catecholamines are the hormones dopamine, adrenaline, and noradrenaline. These hormones regulate the “fight or flight” function (Goldstein, 2011). So since catecholamines play a regulatory role in the “fight or flight” mechanism, increased COMT activity results in lower dopamine levels, which is then associated with better performance.

“Warriors” and “worriers” are intrinsically linked to the “fight or flight” mechanism. A “warrior” is someone who performs better under stress, achieves maximal performance despite threat and pain, and is more likely to act efficiently in a threatening environment. A “worrier” is “someone that has an advantage in memory and attention tasks, is more exploratory and efficient in complex environments, but who exhibits worse performance under stressful conditions (Stein et al., 2006)” (Kiaris, 2012: 102).

Kiaris (2012: 107) states that “at the level of society, it can be argued that the specific Met-bearing COMT allele contributes to the buildup of Western individualism. Opposed to this, Easterners’ increased frequency of the Val-bearing “altruistic” allele fits quite well with the construction of a collectivistic society: You have to be an altruist at some degree in order to understand the benefits of collectivism. By being a pure individualist, you only understand “good” as defined and reflected by your sole existence.”

So, Kiaris’ whole point is this: polymorphic genes are unevenly distributed between Easterners and Westerners, and differences in these polymorphisms (DRD4, HTT, MAOA, and COMT) explain behavioral differences between Eastern and Western societies. The genetic polymorphisms associated with “Western behavior” (DRD4) are associated with increased novelty-seeking, a tendency for financial risk-taking, distance of OoA migration, and liberal ideology. Numerous different MAOA and 5-HTT polymorphisms are associated with collectivism (e.g., Way and Lieberman, 2006 for MAOA and collectivism). The polymorphism in COMT more likely to be found in Westerners predisposes for “worrier’s behavior”. Furthermore, certain polymorphisms of the CHRNB3 gene are more common in all of the populations that migrated out of Africa, which predisposed for leader (and not follower) behavior.

| Trait | Gene | Allele frequency |
| --- | --- | --- |
| Novelty seeking | DRD4 | 7-repeat novelty seeking allele more common in the West |
| Migration | DRD4 | 7-repeat allele is associated with distance from Africa migration |
| Nomads/settlers | DRD4 | 7-repeat allele is associated with nomadic life |
| Political ideology | DRD4 | 7-repeat allele is more common in liberals |
| Financial risk taking | DRD4 | 7-repeat allele is more common in risk takers |
| Individualism/Collectivism | HTT | s allele (collectivistic) of 5-HTT is more common in the East |
| Happiness | HTT | l allele has higher prevalence in individuals happy with their life |
| Individualism/Collectivism | MAOA | 3-repeat allele (collectivistic) more common in the East |
| Warrior/Worrier | COMT | A-allele (worrier) more common in the West |
| Altruism | COMT | G-allele (warrior) associated with altruism |
| Leader/Follower | CHRNB3 | A-allele (leader) more common in populations Out-of-Africa |

The table above is from Kiaris (2012: 117) who lays out the genes/polymorphisms discussed in his book—what supposedly shows how and why Eastern and Western societies are so different.

Kiaris (2012: 141) then makes a bold claim: “Since we know now that at least a fraction (and likely more than that) of our behavior is due to our genes”. Actually, “we” don’t “know” this “now”.

The takeaways from the book are: (1) populations differ genetically; (2) since populations differ genetically, then genetic differences correlated with behavior should show frequency differences between populations; (3) since these populations show both behavioral/societal differences and they also differ in genetic polymorphisms which are then associated with that behavior, then those polymorphisms are, in part, a cause of that society and the behavior found in it; (4) therefore, differences in Eastern and Western societies are explained by (some) of these polymorphisms discussed.

Now for a simple rebuttal of the book:

The book’s argument needs one of two claims to be true: “B iff G” (behavior B is possible if and only if a specific genotype G is instantiated) or “if G, then necessarily B” (genotype G is a sufficient cause for behavior B). Both claims are false; genes are neither a sufficient nor a necessary cause for any behavior. Genes are, of course, a necessary pre-condition for behavior, but they are not needed for a specific behavior to be instantiated; genes can be said to be difference makers (Sterelny and Kitcher, 1988) (but see Godfrey-Smith and Lewontin, 1993 for a response). These claims cannot be substantiated; therefore, the claims that “if G, then necessarily B” and “B iff G” are false. It cannot be shown that genes are difference makers in regard to behavior, nor can it be shown that particular genes cause particular behaviors.
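What each claim would require can be spelled out as a check over observations. The sketch below is only an illustration of the logic: the `Observation` type, the function names, and the four toy cases are hypothetical and are not drawn from Kiaris or from any dataset.

```python
from typing import List, Tuple

# Hypothetical record: (carries genotype G, shows behavior B).
Observation = Tuple[bool, bool]


def is_sufficient(observations: List[Observation]) -> bool:
    """'If G, then necessarily B': no case may have G without B.

    Note: returns True vacuously if no observation carries G.
    """
    return all(b for g, b in observations if g)


def is_necessary(observations: List[Observation]) -> bool:
    """'B only if G': no case may show B without G.

    Note: returns True vacuously if no observation shows B.
    """
    return all(g for g, b in observations if b)


def is_iff(observations: List[Observation]) -> bool:
    """'B iff G': G must be both necessary and sufficient for B."""
    return is_necessary(observations) and is_sufficient(observations)


# Toy cases (made up): one carrier without the behavior and one
# non-carrier with the behavior are enough to break both claims.
toy_cases: List[Observation] = [
    (True, True),
    (True, False),   # G without B: G is not sufficient
    (False, True),   # B without G: G is not necessary
    (False, False),
]

print(is_sufficient(toy_cases), is_necessary(toy_cases), is_iff(toy_cases))
```

A single counterexample of either kind is all it takes to falsify the corresponding claim, which is why the strong forms of both claims are so hard to defend.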

Conclusion

I’m surprised that I have not come across a book like this sooner; you would expect that there would be a lot more written on this. The book is short and discusses some good resources, but the conclusions that Kiaris draws, in my opinion, will not come to pass, because genes are neither a necessary nor a sufficient cause of any type of behavior, nor can it be shown that genes are causes of any behavior B. Behavioral differences between Eastern and Western societies, logically, cannot come down to differences in genes, since they are neither necessary nor sufficient causes of behavior (genes are necessary pre-conditions for behavior, since without genes there is no organism, but genes cannot explain behavior).

Kiaris attempts to show how and why Eastern and Western societies became so different, how and why Western societies are dominated by “Aristotle’s reason and logic”, while Eastern thought “has been dominated by Confucious’s harmony, collectivism, and context dependency” (Kiaris, 2012: 9). While the book is well-written and researched (he talks about nothing new if you’re familiar with the literature), Kiaris fails to prove his ultimate point: that differences in genetic polymorphisms between individuals in different societies explain how and why the societies in question are so different. It is not logically possible for genes to be either a necessary or a sufficient cause for any behavior. Kiaris talks like a determinist, since he says that “the presence of different genes in the corresponding people has actually dictated the acquisition of these distinct cultural and historical lines, and that an alternative outcome might be unlikely” (Kiaris, 2012: 9), though that is just wishful thinking: if we were able to start history over again, things would occur differently, “the presence of different genes in the corresponding people” be damned, since genes do not cause behavior.

Racial Differences in Physical Functioning

1350 words

Assessing physical functioning is important. Simple tests, such as walk, stand, and sit tests, can predict numerous things. “Testing” defines one’s abilities after being given a set of instructions. Racial differences exist and, of course, both genetic and environmental factors play a part in health disparities between ethnies in America. Minorities report lower levels of physical activity (PA) than whites. This, most often, leads to negative outcomes, but due to their (average) physiology, they can get away with doing “less” than other ethnies. In this article, I will look at studies which talk about racial differences in physical functioning, what they mean, and what can be done about them.

Racial differences in physical functioning

Racial differences in self-rated health at similar levels of health exist (Spencer et al, 2009). Does being optimistic or pessimistic about health affect one’s outcomes? Using 2,929 HABC (Health, Aging, and Body Composition) participants, Spencer et al (2009) examined the relationship between self-rated health (SRH) and race, while controlling for demographic, psychosocial and physical health factors. They found that whites were 3.7 times more likely than blacks to report good SRH.

Elderly blacks were more likely to be less educated, reported lower satisfaction with social support, and also had lower scores on a physical battery test than whites. Further, black men and women were less likely to report that walking a quarter mile was “easy”, implying that they have (1) less endurance and (2) weaker leg muscles.

Blacks were also more likely to report higher personal mastery:

Participants were asked whether they agreed or disagreed with the following statements: “I often feel helpless in dealing with the problems of life” and “I can do just about anything I really set my mind to do,” with response categories of disagree strongly, disagree somewhat, agree somewhat, and agree strongly. (Spencer et al, 2009: 90)

Blacks were also more likely to report higher BMI and more chronic health conditions than whites. White men, though, were more likely to report higher global pain, but were older than black men in the sample. When whites and blacks of similar physical functioning were compared, whites were more likely to report higher SRH. Health pessimists were found to be at higher risk of poor health.

Vazquez et al (2018) showed that ‘Hispanics’ were less likely to report having mobility limitations than whites and blacks even after adjustment for age, gender, and education. Blacks, compared to non-‘Hispanic’ whites, were more likely to have limitations on activities of daily living (ADL) and instrumental activities of daily living (IADL). ADL limitations were assessed with questions like “Do participant receive help or supervision with personal care such as bathing, dressing, or getting around the house because of an impairment or a physical or mental health problem?” and IADL limitations with questions like “Does participant receive help or supervision using the telephone, paying bills, taking medications, preparing light meals, doing laundry, or going shopping?” (Vazquez et al, 2018: 4). They also discuss the so-called “Hispanic paradox” (which I discussed), but could not come to a conclusion on the data they acquired. Nonetheless, ‘Hispanic’ participants were less likely to report mobility issues; blacks were more likely than whites to report significant difficulties with normal activities of daily living.

Araujo et al (2010) devised a lower-extremities chair test: how quickly one can stand and sit in a chair; along with a walking test: the time it takes to walk 50 feet. Those who could not complete the chair test were given a score of ‘0’. Overall, the composite physical function (CPF) score for blacks was 3.45, for ‘Hispanics’ it was 3.66, and for whites, it was 4.30. This shows that older whites were stronger—in the devised tests—and that into older age whites are more likely to not need assistance for everyday activities.

This is important because differences in physical functioning between blacks and whites can explain differences in outcomes one year after having a stroke (Roth et al, 2018). This makes sense, knowing what we know about stroke, cognitive ability and exercise into old age.

Shih et al (2005) conclude:

a nationally representative study of the US population, indicate that among older adults with arthritis: (1) racial disparities found in rates of onset of ADL [activities of daily living] limitations are explained by differences in health needs, health behaviors, and economic resources; (2) there are race-specific differences in risk factors for the onset of ADL limitations; and (3) physical limitations are the most important risk factor for onset of ADL limitations in all racial and ethnic groups.

Safo (2012) showed that out of whites, blacks and ‘Hispanics’, blacks reported the most (low back) pain and had the worst role functioning and overall physical functioning scores. Lavernia et al (2011) also found that racial/ethnic minorities were more likely to report pain and have lower physical functioning after having a total knee arthroplasty (TKA) and total hip arthroplasty (THA). They found that blacks and ‘Hispanics’ were more likely to report pain, decreased well-being, and have a lower physical functioning score, which was magnified specifically in blacks. Blacks were more likely to report higher levels of pain than whites (Edwards et al, 2001; Campbell and Edwards, 2013), while Kim et al (2017) showed that blacks had lower pain tolerance and higher pain ratings. (Read Pain and Ethnicity by Ronald Wyatt.)

Sarcopenia is the loss of muscle tissue, which is a natural part of the aging process. Sarcopenia and sarcopenic obesity (obesity brought on by muscle loss due to aging) show racial/ethnic/gender differences, too. ‘Hispanics’ were the most likely to have sarcopenia and sarcopenic obesity and blacks were least likely to acquire those two maladies (Du et al, 2018). They explain why sarcopenic obesity may be higher in ‘Hispanic’ populations:

One possibility to explain the higher rates of sarcopenia and SO in the Hispanic population could be the higher prevalence of poorly controlled chronic disease, particularly diabetes, and other health conditions.

[…]

We were surprised to find that Hispanic adults had higher rates of sarcopenia and SO [sarcopenic obesity]. One possible explanation could be the disparity in mortality rates among ethnic populations. Populations that have greater survival rates may live longer even with poorer health and thus have greater chance of developing sarcopenia. Alternatively, populations which have lower survival rates may not live long enough to develop sarcopenia and thus may identify with lower prevalence of sarcopenia. This explanation appears to be supported by the results of our study and current mortality statistics; NH Blacks have the highest mortality rate, followed by NH Whites, and lastly Hispanics.

Differences in physical activity could, of course, lead to differences in sarcopenic obesity. Physical activity leads to an increase in testosterone in lifelong sedentary men (Hayes et al, 2017), and men with high physical activity were more likely to have high testosterone than men with low physical activity, a difference that was not observed between the groups that were on a calorie-restricted diet (Kumagai et al, 2016). Kumagai et al (2018) also showed that vigorous physical exercise leads to increases in testosterone in obese men:

We demonstrated that a 12-week aerobic exercise intervention increased serum total testosterone, free testosterone, and bioavailable testosterone levels in overweight/obese men. We suggest that an increase in vigorous physical activity increased circulating testosterone levels in overweight/obese men.

(Though see Hawkins et al, 2008, who show that only SHBG and DHT increased, with no increase in testosterone.)

So, clearly, since exercise can increase testosterone levels in obese subjects, and since higher levels of testosterone are associated with lower levels of adipose tissue (adequate levels of steroid hormones are needed for lower levels of adipose tissue; Mammi et al, 2012), if physical activity is increased, then levels of obesity and sarcopenic obesity should decrease in those populations.

Conclusion

Racial differences in physical functioning exist, and they have grave consequences for certain events, especially after a stroke. Differences in physical functioning/activity cause differences in sarcopenia/sarcopenic obesity in different ethnies. This can be ameliorated by targeting at-risk groups with certain outreach. This type of research shows how differences in lifestyle between ethnies cause differences in physical activity between ethnies as the years progress.

(Also read Evolving Human Nutrition: Implications for Public Health, specifically Chapter 8 on socioeconomic status and health disparities for more information on how and why differences like this persist between ethnies in America.)