Category Archives: IQ

People Should Stop Thinking IQ Measures ‘Intelligence’: A Response to Grey Enlightenment

1700 words

I’ve had a few discussions with Grey Enlightenment on this blog, regarding construct validity. He has now published a response piece on his blog to the arguments put forth in my article, though unfortunately it’s kind of sophomoric.

People Should Stop Saying Silly Things About IQ

He calls himself a ‘race realist’ yet echoes the same arguments used by those who oppose such realism.

1) One doesn’t have to believe in racial differences in mental traits to be a race realist as I have argued twice before in my articles You Don’t Need Genes to Delineate Race and Differing Race Concepts and the Existence of Race: Biologically Scientific Definitions of Race. It’s perfectly possible to be a race realist—believe in the reality of race—without believing there are differences in mental traits—‘intelligence’, for instance (whatever that is).

2) That I strongly question the usefulness and utility of IQ due to its construction doesn’t mean that I’m not a race realist.

3) I’ve even put forth an analogous argument about an ‘athletic abilities test’: a hypothetical test that wasn’t a true test of athletic ability because it was constructed on the basis of who is or is not athletic, per the constructors’ presuppositions. In this hypothetical scenario, am I really denying that athletic differences exist between races and individuals? No. I’d just be pointing out flaws in a shitty test.

Just because I question the usefulness and (nonexistent) validity of IQ doesn’t mean that I’m not a race realist, nor that I believe groups or individuals are ‘the same’ in ‘intelligence’ (whatever that may be). That seems to be a common strawman aimed at those who don’t bow to the altar of IQ.

Blood alcohol concentration is very specific and simple; human intelligence by comparison is not. Intelligence is polygenic (as opposed to just a single compound) and is not as easy to delineate, as, say, the concentration of ethanol in the blood.

It’s irrelevant how ‘simple’ blood alcohol concentration is. The point of bringing it up is that it’s a construct-valid measure, calibrated against an accepted theoretical biological model. The additive gene assumption (that is, that genes act independently of the environment, each giving ‘positive charges’, as Robert Plomin believes) is false.

He says IQ tests are biased because they require some implicit understanding of social constructs, like what 1+1 equals or how to read a word problem, but how is a test that is as simple as digit recall or pattern recognition possibly a social construct.

What is it that allows some individuals to be better than others on digit recall or pattern recognition (and what kind of pattern recognition)? The point of my 1+1 statement is that the item is construct valid regarding one’s knowledge of that math problem. The word problem, on the other hand, was a quoted example showing how, if the question isn’t worded correctly, it could be indirectly testing something else.

He’s invoking a postmodernist argument that IQ tests do not measure an innate, intrinsic intelligence, but rather a subjective one that is construct of the test creators and society.

I could do without the buzzword (postmodernist) though he is correct. IQ tests test what their constructors assume is ‘intelligence’ and through item analysis they get the results they want, as I’ve shown previously.

If IQ tests are biased, how is then [sic] that Asians and Jews are able to score better than Whiles [sic] on such tests; surely, they should be at a disadvantage due to implicit biases of a test that is created by Whites.

If I had a dollar for every time I’ve heard this ‘argument’… We can just go back to the test construction argument: we can construct a test on which, say, blacks and women score higher than whites and men, respectively. How well would that ‘predict’ anything then, if the test constructors had a different set of assumptions?

It’s not so much that IQ tests are ‘biased’ as that lower-class people aren’t as prepared to take these tests as people in higher classes (which East Asians and Jews are in). IQ tests score enculturation to the middle class. Even the Flynn effect can be explained by the rise of the middle class, lending credence to the aforementioned hypothesis (Richardson, 2002).

Regarding the common objection by the left that IQ tests don’t measures [sic] anything useful or that IQ isn’t correlated with success at life, on a practical level, how else can one explain obvious differences in learning speed, income or educational attainment among otherwise homogeneous groups? Why is it in class some kids learn so much faster than others, and many of these fast-learners go to university and get good-paying jobs, while those who learn slowly tend to not go to college, or if they do, drop out and are either permanently unemployed or stuck in low-paying, low-status jobs? In a family with many siblings, is it not evident that some children are smarter than others (and because it’s a shared environment, environmental differences cannot be blamed).

1) I’m not a leftist.

2) I never stated that IQ tests don’t correlate with success in life. They correlate with success in life since achievement tests and IQ tests are different versions of the same test. This, of course, goes back to our good friend test construction. IQ is correlated with income at .4, meaning 16 percent of the variance is explained by IQ. And since you shouldn’t attribute causation to correlations (lest you commit the cum hoc, ergo propter hoc fallacy), we cannot even truthfully say that 16 percent of the variation between individuals is due to IQ.

3) Pupils who do well in school tend not to be high-achieving adults, whereas children who were not good pupils end up having good success in life (see the paper Natural Learning in Higher Education by Armstrong, 2011). Furthermore, the role of test motivation could account for low-paying, low-status jobs (Duckworth et al, 2011; though I disagree with their conclusion that IQ tests test ‘intelligence’ [whatever that is], they show good evidence that in low scorers, incentives can raise scores, implying that they weren’t as motivated as the high scorers). Lastly, do individuals within the same family experience the same environment in the same way, or differently?
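The 16 percent figure in point 2 above is simply the squared correlation coefficient. A minimal Python sketch of that arithmetic follows; the function name `variance_explained` is made up for illustration and does not come from any of the cited papers.

```python
def variance_explained(r: float) -> float:
    """Share of variance statistically accounted for by a linear
    correlation r (the coefficient of determination, r squared)."""
    if not -1.0 <= r <= 1.0:
        raise ValueError("a correlation coefficient must lie in [-1, 1]")
    return r * r


# The IQ-income correlation cited in the post.
iq_income_r = 0.4
share = variance_explained(iq_income_r)
print(f"r = {iq_income_r} -> {share:.0%} of the variance")
```

Note that this is a purely statistical quantity: the calculation says nothing about which variable, if either, causes the other.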

As teachers can attest, some students are just ‘slow’ and cannot grasp the material despite many repetitions; others learn much more quickly.

This is evidence of the uselessness of IQ tests, for if teachers can accurately predict student success then why should we waste time and money to give a kid some test that supposedly ‘predicts’ his success in life (which as I’ve argued is self-fulfilling)? Richardson (1998: 117) quotes Layzer (1973: 238) who writes:

Admirers of IQ tests usually lay great stress on their predictive power. They marvel that a one-hour test administered to a child at the age of eight can predict with considerable accuracy whether he will finish college. But as Burt and his associates have clearly demonstrated, teachers’ subjective assessments afford even more reliable predictors. This is almost a truism.

Because IQ tests test for the skills that are required for learning, such as short term memory, someone who has a low IQ would find learning difficult and be unable to make correct inferences from existing knowledge.

Right, IQ tests test for skills that are required for learning. Though a lot of IQ test questions are general knowledge questions, so how is that testing anything innate if you’ve first got to learn the material, and if you have not you’ll score lower? Richardson (2002) discusses how people in lower classes are differentially prepared for IQ tests which then affects scores, along with psycho-social factors that do so as well. It’s more complicated than ‘low IQ > X’.

All of these sub-tests are positively correlated due to an underlying factor –called g–that accounts for 40-50% of the variation between IQ scores. This suggests that IQ tests measure a certain factor that every individual is endowed with, rather than just being a haphazard collection of questions that have nothing to do with each other. Race realists’ objection is that g is meaningless, but the literature disagrees “… The practical validity of g as a predictor of educational, economic, and social outcomes is more far-ranging and universal than that of any other known psychological variable. The validity of g is greater the complexity of the task.[57][58]”

I’ve covered this before. It correlates with the aforementioned variables due to test construction. It’s really that easy. If the test constructors have a different set of presuppositions before the test is constructed, then completely different outcomes can be had just by constructing a different test.

Then what about ‘g’? What would one say then? Nevertheless, I’ve heavily criticized ‘g’ and its supposed physiology. If physiologists did study this ‘variable’, and if it truly did exist, then 1) it would not be rank ordered, because physiologists don’t rank order traits; 2) physiologists don’t assume normal variations, nor do they estimate heritability and attempt to untangle genes from environment; 3) they don’t assume that normal variation is related to genetic variation (except in rare cases, like Down syndrome, for instance); and 4) they don’t assume that, within the normal range of physiological differences, a higher level is ‘better’ than a lower one. My go-to example here is BMR (basal metabolic rate). It has a similar heritability range to IQ (.4 to .8; which is most likely overestimated due to the use of the flawed twin method, just like the heritability of IQ), so is one with a higher BMR somehow ‘better’ than one with a lower BMR? This is what logically follows from assuming that ‘g’ is physiological and all of the assumptions that come along with it. It doesn’t make logical, physiological sense! (Jensen, 1998: 92 further notes that “g tells us little if anything about its contents”.)

All in all, I thank Grey Enlightenment for his response to my article, though it leaves a lot to be desired and if he responds to this article then I hope that it’s much more nuanced. IQ has no construct validity, and as I’ve shown, the attempts at giving it validity are circular, and done by correlating it with other IQ tests and achievement tests. That’s not construct validity.

IQ and Construct Validity

1550 words

The word ‘construct’ is defined as “an idea or theory containing various conceptual elements, typically one considered to be subjective and not based on empirical evidence.” Whereas the word ‘validity’ is defined as “the quality of being logically or factually sound; soundness or cogency.” Is there construct validity for IQ tests? Are IQ tests tested against an idea or theory containing various conceptual elements? No, they are not.

Cronbach and Meehl (1955) state that construct validity is “involved whenever a test is to be interpreted as a measure of some attribute or quality which is not “operationally defined.”” Construct validity for IQ tests, though, has eluded investigators. Why? Because there is no theory of individual IQ differences to test IQ tests on. It is even stated that “there is no accepted unit of measurement for constructs and even fairly well-known ones, such as IQ, are open to debate.” The ‘fairly well-known ones’ like IQ are ‘open to debate’ because no such validity exists. The only ‘validity’ that exists for IQ tests is correlations with other tests and attempted correlations with job performance, but I will show that that is not construct validity as it is classically defined.

Construct validity can be easily defined as the ability of a test to measure the concept or construct that it is intended to measure. We know two things about IQ tests: 1) they do not test ‘intelligence’ (but they supposedly do a ‘good enough job’ so that it does not matter) and 2) they do not even test the ‘construct’ that they are intended to measure. For example, the math problem ‘1+1’ is construct valid regarding one’s knowledge and application of that math problem. Construct validity can pretty much be summed up as the proof that a test is measuring what it is intended to measure… but where is this proof? It is non-existent.

Richardson (1998: 116) writes:

Psychometrists, in the absence of such theoretical description, simply reduce score differences, blindly to the hypothetical construct of ‘natural ability’. The absence of descriptive precision about those constructs has always made validity estimation difficult. Consequently the crucial construct validity is rarely mentioned in test manuals. Instead, test designers have sought other kinds of evidence about the validity of their tests.

The validity of new tests is sometimes claimed when performances on them correlate with performances on other, previously accepted, and currently used, tests. This is usually called the criterion validity of tests. The Stanford-Binet and the WISC are often used as the ‘standards’ in this respect. Whereas it may be reassuring to know that the new test appears to be measuring the same thing as an old favourite, the assumption here is that (construct) validity has already been demonstrated in the criterion test.

Some may attempt to say that, for instance, biological construct validity for IQ tests may be ‘brain size’, since brain size is correlated with IQ at .4 (meaning 16 percent of the variance in IQ is explained by brain size). However, for this to be true, someone with a larger brain would always have to be ‘more intelligent’ (whatever that means; score higher on an IQ test) than someone with a smaller brain. This is not true, so therefore brain size is not and should not be used as a measure of construct validity. Nisbett et al (2012: 144) address this:

Overall brain size does not plausibly account for differences in aspects of intelligence because all areas of the brain are not equally important for cognitive functioning.

For example, breathalyzer tests are construct valid. In 20 healthy male subjects given 1 ml/kg bodyweight of ethanol, the test-retest correlation was .93. Furthermore, when BAC was obtained through gas chromatography of venous blood, the two readings were highly correlated, at .94 and .95 (Landauer, 1972). Landauer (1972: 253) writes “the very high accuracy and validity of breath analysis as a correct estimate of the BAL is clearly shown.” Construct validity also exists for ad-libitum taste tests of alcohol in the laboratory (Jones et al, 2016).

There is a causal connection between how much one had to drink, what he breathes into the breathalyzer, and the BAC reading that comes out of it. For example, for a male at a bodyweight of 160 pounds, 4 drinks would have him at a BAC of .09, which would make him unfit to drive. (‘One drink’ being 12 oz of beer, 5 oz of wine, or 1.25 oz of 80 proof liquor.) He drinks more, his BAC reading goes up. Someone is more ‘intelligent’ (scores higher on an IQ test), then what? The correlates obtained from so-called ‘more intelligent people’, like glucose consumption, brain evoked potentials, reaction time, nerve conduction velocity, etc., have never been shown to determine a higher ‘ability’ to score higher on IQ tests. That, too, would not even be construct validation for IQ tests, since there needs to be a measure showing why person A scored higher than person B, which needs to hold one hundred percent of the time.
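The dose-response relationship described above (more drinks, higher reading) can be sketched with the Widmark formula, a common textbook approximation that this post does not itself use. The constants below are assumptions chosen for illustration: 14 g of ethanol per standard drink and a distribution ratio of 0.68 for men. The sketch ignores alcohol elimination over time, so it will not reproduce the exact chart value quoted above.

```python
GRAMS_ETHANOL_PER_DRINK = 14.0  # assumption: ethanol in one standard drink
GRAMS_PER_POUND = 453.592
WIDMARK_R_MALE = 0.68           # assumption: commonly used ratio for men


def estimated_peak_bac(drinks: float, weight_lb: float,
                       r: float = WIDMARK_R_MALE) -> float:
    """Rough peak BAC in percent, ignoring elimination over time."""
    if weight_lb <= 0 or r <= 0:
        raise ValueError("weight and distribution ratio must be positive")
    alcohol_g = drinks * GRAMS_ETHANOL_PER_DRINK
    body_g = weight_lb * GRAMS_PER_POUND
    return alcohol_g / (body_g * r) * 100.0


# The estimate rises with every additional drink for a 160 lb male.
for n in range(1, 6):
    print(n, "drinks ->", round(estimated_peak_bac(n, 160), 3))
```

The point of the sketch is only that the measured quantity tracks a known physical input in a lawful way, which is what calibration against a biological model means here.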

Another good example of the construct validity of an unseen construct is white blood cell count. White blood cell count was “associated with current smoking status and COPD severity, and a risk factor for poor lung function, and quality of life, especially in non-currently smoking COPD patients. The WBC count can be used, as an easily measurable COPD biomarker” (Koo et al, 2017). In fact, the PRISA II test has white blood cell count in it, which is a construct valid test. Even elevated white blood cell count strongly predicts all-cause and cardiovascular mortality (Johnson et al, 2005). It is also an independent risk factor for coronary artery disease (Twig et al, 2012).

A good example of tests supposedly testing one thing but testing another is found here:

As an example, think about a general knowledge test of basic algebra. If a test is designed to assess knowledge of facts concerning rate, time, distance, and their interrelationship with one another, but test questions are phrased in long and complex reading passages, then perhaps reading skills are inadvertently being measured instead of factual knowledge of basic algebra.

Numerous constructs have validity—but not IQ tests. It is assumed that they test ‘intelligence’ even though an operational definition of intelligence is hard to come by. This is important, as if there cannot be an agreement on what is being tested, how will there be construct validity for said construct in question?

Richardson (2002) writes that Detterman and Sternberg sent out a questionnaire to a group of theorists which was similar to another questionnaire sent out decades earlier to see if there was an agreement on what ‘intelligence’ is. Twenty-five attributes of intelligence were mentioned. Only 3 were mentioned by more than 25 percent of the respondents, with about half mentioning ‘higher level components’, one quarter mentioned ‘executive processes’ while 29 percent mentioned ‘that which is valued by culture’. About one-third of the attributes were mentioned by less than 10 percent of the respondents with 8 percent of them answering that intelligence is ‘the ability to learn’. So if there is hardly any consensus on what IQ tests measure or what ‘intelligence’ is, then construct validity for IQ seems to be very far in the distance, almost unseeable, because we cannot even define the word, nor actually test it with a test that’s not constructed to fit the constructors’ presupposed notions.

Now, explaining the non-existent validity of IQ tests is very simple: IQ tests are purported to measure ‘g’ (whatever that is) and individual differences in test scores supposedly reflect individual differences in ‘g’. However, we cannot say that it is differences in ‘g’ that cause differences in individual test scores since there is no agreed-upon model or description of ‘g’ (Richardson, 2017: 84). Richardson (2017: 84) writes:

In consequence, all claims about the validity of IQ tests have been based on the assumption that other criteria, such as social rank or educational or occupational achievement, are also, in effect, measures of intelligence. So tests have been constructed to replicate such ranks, as we have seen. Unfortunately, the logic is then reversed to declare that IQ tests must be measures of intelligence, because they predict school achievement or future occupational level. This is not proper scientific validation so much as a self-fulfilling ordinance.

Construct validity for IQ does not exist (Richardson and Norgate, 2015), unlike construct validity for breathalyzers (Landauer, 1972) or white blood cell count as a disease proxy (Wu et al, 2013; Shah et al, 2017). So, if construct validity is non-existent, then there is no measure of how well IQ tests measure what they are ‘purported to measure’, i.e., how ‘intelligent’ one is over another, because 1) ‘intelligence’ is ill-defined and 2) IQ tests are not validated against agreed-upon biological models; some attempts have been made, but the evidence is inconsistent (Richardson and Norgate, 2015). For there to be true validity, evidence cannot be inconsistent; a test needs to measure what it purports to measure 100 percent of the time. IQ tests are not calibrated against biological models, but against correlations with other tests that ‘purport’ to measure ‘intelligence’.

(Note: No, I am not saying that everyone is equal in ‘intelligence’ (whatever that is), nor am I stating that everyone has the same exact capacity. As I pointed out last week, just because I point out flaws in tests, it does not mean that I think that people have ‘equal ability’, and my example of an ‘athletic abilities’ test last week is apt to show that pointing out flawed tests does not mean that I deny individual differences in a ‘thing’ (though athletic abilities tests are much better with no assumptions like IQ tests have.))

Is Diet An IQ Test?

1350 words

Dr. James Thompson is a big proponent of ‘diet being an IQ test’ and has written quite a few articles on this matter. Though, the one he published today is perhaps the most misinformed.

He first briefly discusses the fact that 200 kcal drinks are being marketed as ‘cures’ for type II diabetes. People ‘beat’ the disease with only 200 kcal drinks. Sure, they lost weight, lost their disease. Now what? Continue drinking the drinks or now go back to old dietary habits? Type II diabetes is a lifestyle disease, and so can be ameliorated with lifestyle interventions. Though, Big Pharma wants you to believe that you can only overcome the disease with their medicines and ‘treatments’ along with the injection of insulin from your primary care doctor. Though, this would only exacerbate the disease, not cure it. The fact of the matter is this: these ‘treatments’ only ‘cure’ the proximate causes. The ULTIMATE CAUSES are left alone and this is why people fall back into old habits.

When speaking about diabetes and obesity, this is a very important distinction to make. Most doctors, when treating diabetics, only treat the proximate causes (weight, symptoms that come with weight, etc) but they never get to the root of the problem. The root of the problem is, of course, insulin. Only the proximate causes are ‘cured’ through interventions; the underlying cause of diabetes, and of obesity as well, is never taken care of by doctors. This, then, leads to a never-ending cycle of people losing a few pounds or whatnot and then, expectedly, gaining it back and having to re-do the regimen all over again. The patient never gets cured, and Big Pharma, hospitals et al get to make money off not curing a patient’s illness by only treating proximate and not ultimate causes.

Dr. Thompson then talks about a drink for anorexics, called ‘Complan’, and that he and another researcher gave this drink to anorexics, giving them about 3000 kcals per day of the drink, which was full of carbs, fat and vitamins and minerals (Bhanji and Thompson, 1974).

The total daily calorific intake was 2000-3000 calories, resulting in a mean weight gain of 12.39 kilos over 53 days, a daily gain of 234 grams, or 1.64 kilos (3.6 pounds) a week. That is in fact a reasonable estimate of the weight gains made by a totally sedentary person who eats a 3000 calorie diet. For a higher amount of calories, adjust upwards. Thermodynamics.

Thermodynamics? Take the first law. The first law of thermodynamics is irrelevant to human physiology (Taubes, 2007; Taubes, 2011; Fung, 2016). (Also watch Gary Taubes explain the laws of thermodynamics.) Now take the second law of thermodynamics which “states that the total entropy can never decrease over time for an isolated system, that is, a system in which neither energy nor matter can enter nor leave.” People may say that ‘a calorie is a calorie’ therefore it doesn’t matter whether all of your calories come from, say, sugar or a balanced high fat low carb diet, all weight gain or loss will be the same. Here’s the thing about that: it is fallacious. Stating that ‘a calorie is a calorie’ violates the second law of thermodynamics (Feinman and Fine, 2004). They write:

The second law of thermodynamics says that variation of efficiency for different metabolic pathways is to be expected. Thus, ironically the dictum that a “calorie is a calorie” violates the second law of thermodynamics, as a matter of principle.

So talk of thermodynamics when talking about the human physiological system does not make sense.
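Whatever one makes of the thermodynamics, the weight-gain figures in Dr. Thompson’s quote above are at least internally consistent. A quick Python check of that arithmetic, using only the numbers given in the quote (the pounds-per-kilogram conversion factor is the one value added here):

```python
total_gain_kg = 12.39  # mean weight gain reported in the quote
days = 53              # duration reported in the quote
LB_PER_KG = 2.20462    # standard conversion factor

daily_gain_g = total_gain_kg / days * 1000
weekly_gain_kg = total_gain_kg / days * 7
weekly_gain_lb = weekly_gain_kg * LB_PER_KG

print(round(daily_gain_g), "g per day")        # 234
print(round(weekly_gain_kg, 2), "kg per week")  # 1.64
print(round(weekly_gain_lb, 1), "lb per week")  # 3.6
```

This only confirms that the daily and weekly rates follow from the reported total; it says nothing about why the weight was gained.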

He then cites a new paper from Lean et al (2017) on weight management and type II diabetes. The authors write that “Type 2 diabetes is a chronic disorder that requires lifelong treatment. We aimed to assess whether intensive weight management within routine primary care would achieve remission of type 2 diabetes.” To which Dr. Thompson asks ‘How does one catch this illness?’ and ‘Is there some vaccination against this “chronic disorder”?’ The answer to how one ‘catches this illness’ is simple: the overconsumption of processed carbohydrates constantly spikes insulin, which leads to insulin resistance. Because the body is resistant, it produces even more insulin, which creates a vicious cycle that eventually ends in type II diabetes.

Dr. Thompson writes:

Patients had been put on Complan, or its equivalent, to break them from the bad habits of their habitual fattening diet. This is good news, and I am in favour of it. What irritates me is the evasion contained in this story, in that it does not mention that the “illness” of type 2 diabetes is merely a consequence of eating too much and becoming fat. What should the headline have been?

Trial shows that fat people who eat less become slimmer and healthier.

I hope this wonder treatment receives lots of publicity. If you wish to avoid hurting anyone’s feelings just don’t mention fatness. In extremis, you may talk about body fat around vital organs, but keep it brief, and generally evasive.

So you ‘break bad habits’ by introducing new bad habits? It’s not sustainable to drink these low kcal drinks and expect to be healthy. I hope this ‘wonder treatment’ does not receive a lot of publicity because it’s bullshit that will just line the pockets of Big Pharma et al, while making people sicker and, the ultimate goal, having them ‘need’ Big Pharma to care for their illness—when they can just as easily care for it themselves.

‘Trial shows that fat people who eat less become slimmer and healthier’. Or how about this? Fat people who eat well and exercise, up to 35 BMI, have no higher risk of early death than someone with a normal BMI who eats well and exercises (Barry et al, 2014). Neuroscientist Dr. Sandra Aamodt also compiles a wealth of solid information on this subject in her 2016 book “Why Diets Make Us Fat: The Unintended Consequences of Our Obsession with Weight Loss”.

Dr. Thompson writes:

I see little need to update the broad conclusion: if you want to lose weight you should eat less.

This is horrible advice. Most diets fail, and they fail because the ‘cures’ (eat less, move more; Caloric Reduction as Primary: CRaP) are garbage and don’t take human physiology into account. If you want to lose weight and put your diabetes into remission, then you must eat a low-carb (low carb or ketogenic, doesn’t matter) diet (Westman et al, 2008; Azar, Beydoun, and Albadri, 2016; Noakes and Windt, 2016; Saslow et al, 2017). Combine this with an intermittent fasting plan as pushed by Dr. Jason Fung, and you have a recipe to beat diabesity (diabetes and obesity) that does not involve lining the pockets of Big Pharma, nor does it involve one sacrificing their health for ‘quick-fix’ diet plans that never work.

In sum, diets are not ‘IQ tests’. Low kcal ‘drinks’ to ‘change habits’ of type II diabetics will eventually exacerbate the problem because when the body is in extended caloric restriction, the brain panics and releases hormones to stimulate appetite while suppressing the hormones that cause you to feel sated and stop eating. This is reality; the studies showing benefits from eating or drinking 800 kcal per day or whatnot rest on a huge flaw: the assumption that this could be sustainable for a large number of the population, which is not true. In fact, no matter how much ‘willpower’ you have, you will eventually give in because willpower is a finite resource (Mann, 2014).

There are easier ways to lose weight and combat diabetes, and they don’t involve handing money over to Big Pharma/Big Food. You only need to intermittently fast; you’ll lose weight and will not have problems with diabetes any longer (Fung, 2016). Most of these papers coming out recently on this disease are garbage. Real interventions exist, they’re easier and you don’t need to line the pockets of corporations to ‘get cured’ (which never happens, they don’t want to cure you!)

Athletic Ability and IQ

1150 words

Proponents of the usefulness of IQ tests may point to athletic competitions as an analogous test/competition that they believe may reinforce their belief that IQ tests test ‘intelligence’ (whatever that is). Though, there are a few flaws in their attempted comparison. Some may say that “Lebron James and Usain Bolt have X morphology/biochemistry and therefore that’s why they excel! The same goes for IQ tests!” People then go on to ask if I ‘deny human evolution’ because I deny the usefulness (that is built into the test by way of ‘item analysis’; Jensen, 1980: 137) of IQ tests and point out flaws in their construction.

People who accept the usefulness of IQ tests and attempt to defend their flaws may attempt to use sports competition, like, say, a 100m sprint, as an analogous argument. They may say that ‘X is better than Y, and the reason is ‘genetic’ in nature!’. Though, nature vs. nurture is a false dichotomy and irrelevant (Oyama, 1985, 2000; Oyama, 1999; Oyama, 2000; Moore, 2003). Behavior is neither ‘genetic’ nor ‘environmental’. With that out of the way, tests of athletic ability as mentioned above are completely different from IQ tests.

Tests of athletic ability do not involve the arbitrary judgments that IQ tests do in the construction and analysis of the items to be put on the test. It’s a simple, cut-and-dried explanation: on this instance in this test, runner X was better than runner Y. We can then test runner X and see what kind of differences he has in his physiology and somatotype, along with asking him what drives him to succeed. We can then do the same for the other athlete and discover that, as hypothesized, there are inherent differences in their physiology that make runner X better than runner Y, say the ability to take deeper breaths, to take longer strides due to longer legs, having thinner appendages so as to be faster, and so on. In regard to IQ, the tests are constructed on the prior basis of who is or is not intelligent. Basically, as is not the case with tests of athletic ability, the ‘winners and losers’, so to speak, are already chosen on the prior suppositions of who is or is not intelligent. Therefore, the comparison of athletic abilities tests and IQ tests is not a good one, because athletic abilities tests are not constructed on the basis of who the constructors believe is athletic, whereas IQ tests are constructed on the basis of who the testers believe is ‘intelligent’ or not.

Some people are so far up the IQ-tests-test-intelligence idea that due to the critiques I cite on IQ tests, I actually get asked if I ‘deny human evolution’. That’s ridiculous and I will explain why.

Imagine an ‘athletic abilities’ test existed. Imagine that this test was constructed on the basis of who the test constructor believed is or is not athletic. Imagine that he constructs the test to show that people who had low ability in past athletic abilities tests had ‘high athletic ability’ in this new test that he constructed. Then I discover the test. I read about it and I see how it is constructed and what the constructors did to get the results they wanted, because they believed that the lower-ability people in the previous tests had higher ability and therefore constructed an ‘athletic abilities’ test to show they were more ‘athletic’ than the former high performers. I then point out the huge flaws in the construction of such a test. By the logic of the people who claim that I deny human evolution because I blast the validity and construction of IQ tests, I would have to be denying athletic differences between groups and individuals, when in actuality I’m only pointing out huge flaws in the ‘athletic abilities’ test that was constructed. The athletic abilities example I’ve conjured up is analogous to the IQ test construction tirade I’ve been on recently. So, if a test of ‘athletic ability’ exists and I come and critique it, then no, I am not denying athletic differences between individuals; I am only pointing out flawed tests.

The basic structure of my ‘athletic abilities’ argument is this: the test that would be constructed would not test true ‘athletic abilities’, just like IQ tests don’t test ‘intelligence’ (Richardson, 2002). Pointing out huge flaws in tests does not mean that you’re a ‘blank slatist’ (whatever that is; it’s a strawman for people who don’t bow down to the IQ altar). Pointing out flaws in IQ tests does not mean that you believe that everyone and every group is ‘equal’ in a psychological and mental sense. Pointing out the flaws in IQ tests does not mean that one is a left-wing egalitarian who believes that all humans, individuals and groups alike, are equal and that the only cause of their differences comes down to the environment (whether SES or the epigenetic environment, etc). Pointing out flaws in these tests is needed, lest people truly think that they do test, say, ability for complex cognition (they don’t). Indeed, it seems that everyday life is more complicated than the hardest Raven’s item. Richardson and Norgate (2014) write:

Indeed, typical IQ test items seem remarkably un-complex in their cognitive demands compared with, say, the cognitive demands of ordinary social life and other everyday activities that the vast majority of children and adults can meet. (pg 3)

On the other hand abundant cognitive research suggests that everyday, “real life” problem solving, carried out by the vast majority of people, especially in social-cooperative situations, is a great deal more complex than that required by IQ test items, including those in the Raven. (pg 6)

Could it be possible that ‘real-life’ athletic ability, such as ‘walking’ or whatnot be more ‘complex’ than the analog of athletic ability? No, not at all. Because, as I previously noted, athletic abilities tests test who has the ‘better’ physiology or morphology for whichever competition they choose to compete in (and of course there will be considerable self-selection since people choose things they’re good at). It’s clear that there is absolutely no possibility of ‘real-life’ athletic ability possibly being more complex than tests of athletic ability.

In sum, no, I do not deny human evolution just because I critique IQ tests. My example of the ‘athletic test’ is a sound and logical analog to the IQ critiques that I cite. Just framing it as a false test of athletic ability and then pointing out the flaws is enough to show that I don’t deny human evolution. If such an ‘athletic abilities’ test did exist and I pointed out its flaws, I would not be denying differences between groups or individuals due to evolution; I’d simply be critiquing a shitty test, which is what I do with IQ tests. Actual tests of athletic ability are not analogous to IQ tests because tests of athletic ability are not ‘constructed’ in the way that IQ tests are.

IQ Test Construction

1550 words

No one really discusses how IQ tests are constructed; people just accept the numbers that are spit out and think that it shows one’s intelligence level relative to others who took the test. However, there are huge methodological flaws in regard to IQ tests—one of the largest, in my opinion, being that they are constructed to fit a normal curve and based on the ‘prior knowledge’ of who is or is not intelligent.

What people don’t understand about test construction is that the behavior genetic (BG) method must assume a normal distribution. IQ tests have been constructed to display this normal distribution, so we cannot say whether or not it exists in nature, though few human traits fall on the normal distribution. The fact of the matter is this: the normal curve is achieved by keeping relatively more items that about half of test-takers get right while keeping a smaller proportion of items that either most or very few of them get right. This forces the normal curve and all of the assumptions that come along with this so-called IQ bell curve.
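To make the item-selection point concrete, here is a minimal sketch in Python. It is not how any actual IQ test was built; it assumes, purely for illustration, that items are answered independently and that each item has a fixed pass rate. The pass rates and the names `draft_items` and `revised_items` are hypothetical. Under those assumptions the exact distribution of total scores can be computed, and its skewness shows how the shape of the curve depends on which items are kept.

```python
def score_distribution(pass_rates):
    """Exact distribution of the total score, assuming independent items.

    Returns a list where entry k is the probability of getting k items right.
    """
    dist = [1.0]  # with no items, a score of 0 is certain
    for p in pass_rates:
        new = [0.0] * (len(dist) + 1)
        for score, prob in enumerate(dist):
            new[score] += prob * (1 - p)   # item answered wrong
            new[score + 1] += prob * p     # item answered right
        dist = new
    return dist


def skewness(dist):
    """Standardized third moment of a score distribution (0 means symmetric)."""
    mean = sum(k * pr for k, pr in enumerate(dist))
    var = sum((k - mean) ** 2 * pr for k, pr in enumerate(dist))
    third = sum((k - mean) ** 3 * pr for k, pr in enumerate(dist))
    return third / var ** 1.5


# Hypothetical first draft: 20 items that 90 percent of people pass.
draft_items = [0.9] * 20

# Hypothetical revision: mostly items that about half pass,
# plus a few very easy and a few very hard items.
revised_items = [0.5] * 14 + [0.9] * 3 + [0.1] * 3

draft_skew = skewness(score_distribution(draft_items))
revised_skew = skewness(score_distribution(revised_items))

print(f"draft test skewness:   {draft_skew:.3f}")
print(f"revised test skewness: {revised_skew:.3f}")
```

With these made-up numbers, the draft test of mostly easy items gives a clearly left-skewed score distribution (skewness of about -0.6), while the revised test built around items that about half of people pass is symmetric. In this toy setup the bell shape follows from the choice of items, not from anything about the test-takers.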

Even then, the fact that the normal distribution is forced doesn’t matter as much as the assumptions and conclusions drawn from the forced curve. It is assumed that individual test score differences arise out of ‘biology’. However, given how test questions are manipulated to get the results that the test constructors want, we cannot know whether these distributions are ‘biological’ in nature.

The fact of the matter is, the tests are constructed based off of the prior knowledge of who is or is not intelligent. This means that we can ‘build the test’ to fit these preconceived notions. The problem of item selection was discussed by Richardson (1998) who discussed boys scoring a few points higher than girls, and wondering whether or not these differences should be ‘allowed to persist’ or not. Richardson (1998: 114) writes (12/26/17 Edit: I’ll also provide the quote that precedes this one):

“One who would construct a test for intellectual capacity has two possible methods of handling the problem of sex differences.

1 He may assume that all the sex differences yielded by his test items are about equally indicative of sex differences in native ability.

2 He may proceed on the hypothesis that large sex differences on items of the Binet type are likely to be factitious in the sense that they reflect sex differences in experience or training. To the extent that this assumption is valid, he will be justified in eliminating from his battery test items which yield large sex differences.

The authors of the New Revision have chosen the second of these alternatives and sought to avoid using test items showing large differences in percents passing.” (McNemar 1942:56)

This is, of course, a clear admission of the subjectivity of such assumptions: while ‘preferring’ to see sex differences as undesirable artefacts of test composition, other differences between groups or individuals, such as different social classes or, at various times, different ‘races’, are seen as ones ‘truly’ existing in nature. Yet these, too, could be eliminated or exaggerated by exactly the same process of assumption and manipulation of test composition.

And further writes on page 121:

Suffice it to say that investigators have simply made certain assumptions about ‘what to expect’ in the patterns of scores, and adjusted their analytical equations accordingly: not surprisingly, that pattern emerges!

The only ‘assumptions’ that the test constructors have are the biases they already hold about who is or is not ‘intelligent’; they then construct the test through item selection, excising items that don’t fit their desired distribution. Is that supposed to be scientific? You can ask a group of children a bunch of questions and then construct a test to get the conclusion you want based on item selection.

The BG method needs to assume that IQ test scores lie on a normal curve and that IQ is a quantitative trait that exhibits a normal distribution, though Micceri (1989) showed that normal distributions are the exception, rather than the rule, for numerous measurable traits. Richardson (1998: 113) further writes:

The same applies to many other ‘characteristics’ of IQ. For example, the ‘normal distribution, or bell-shaped curve, reflects (misleadingly as I have suggested in Chapters 1 to 3) key biological assumptions about the nature of cognitive abilities. It is also an assumption crucial to many statistical analyses done on test scores. But it is a property built into a test by the simple device of using relatively more items on which about half the testees pass, and relatively few items on which either many or only a few of them pass. Dangers arise, of course, when we try to pass this property off as something happening in nature instead of contrived by test constructors.

So with the knowledge of test construction, then there is something very obvious here: we can construct IQ tests that, say, show blacks scoring higher than whites and women scoring higher than men. We can then make the assumption that there are genes that are responsible for this distribution and then ‘find genes’ that supposedly cause these differences in test scores (which are constructed to show the differences!). What then? Let’s say that someone did do that, would the logical conclusion be that there are genes ‘driving’ the differences in IQ test scores?

Richardson (2017: 3) writes:

In summary, either directly or indirectly, IQ and related tests are calibrated against social class background, and score differences are inevitably consequences of that social stratification to some extent. Through that calibration, they will also correlate with any genetic cline within the social strata. Whether or not, and to what degree, the tests also measure “intelligence” remains debateable because test validity has been indirect and circular. … Such circularity is also reflected in correlations between IQ and adult occupational levels, income, wealth, and so on. As education largely determines the entry level to the job market, correlations between IQ and occupation are, again, at least partly, self-fullfilling. … CA [cognitive ability], as measured by IQ-type tests, is intrinsically inter-twined with social stratification, and its associated genetic background, by the very nature of the tests.

This, again, falls back on the non-existent construct validity that IQ tests have. Construct validity “defines how well a test or experiment measures up to its claims.” No such construct validity exists for IQ tests. If breathalyzers didn’t test someone’s fitness to drive, would they still be a good measure? If they had no construct validity, if there was no biological model to calibrate the breathalyzer against, would we still accept it as a realistic model to test people against and judge their fitness to drive? Yet another definition of construct validity comes from Strauss and Smith (2009), who write that psychological constructs are “validated by testing whether they relate to measures of other constructs as specified by theory.” No such biological model exists for IQ; why expect some type of biological model like this when there are other perfectly well-reasoned responses to how and why individuals differ in IQ test scores (Richardson, 2002)?

The normal distribution is forced, which IQ-ists claim to know. Richardson (1998) notes that Jensen “noted how ‘every item is carefully edited and selected on the basis of technical procedures known as “item analysis”, based on tryouts of the items on large samples and the test’s target population’ (1980:145).” These ‘tryouts’ are what force the normal curve, and no matter how ‘technical’ the procedures are, there are still huge biases, which then make people draw huge assumptions, again, based on who is or is not intelligent.

Simon (1997: 204) writes (emphasis mine):

There is another, and completely irrefutable, reason why the bell-shaped curve proves nothing at all in the context of H-M’s book: The makers of IQ tests consciously force the test into such a form that it produces this curve, for ease of statistical analysis. The first versions of such tests invariably produce odd-shaped distributions. The test-makers then subtract and add questions to find those that discriminate well between more-successful and less-successful test-takers. For this reason alone the bell-shaped IQ curve must be considered an artifact rather than a fact, and therefore tells us nothing about human nature or human society.

Simon (1997) rightly notes, as I have numerous times, how biased (against certain classes) the excision of test items during their analysis and selection is. This shows that both the so-called normal curve and the outcomes it supposedly shows aren’t “natural”, but are chosen and forced by the test constructors and their biases and presuppositions about what “intelligence” is. John Raven, for example, also stated in his personal notes how he used his “intuition” to rank-order items, while others further noted that there was no “underlying processing theory” to guide item difficulty and retain old items on newer versions of the test (Carpenter, Just, and Shell, 1990: 408).

In sum, IQ tests are constructed to fit a normal curve on the basis of an assumption of a normal distribution, and on the presupposed basis of who is or is not ‘intelligent’ (whatever that means). The BG method needs to assume that IQ is a quantitative trait which exhibits a normal distribution. IQ is assumed to be like height, or weight, but which physiological process in the body does it mimic? I have argued that there is no physiological basis to ‘IQ’ or what the tests test, and that the scores can be explained not by biology, but through test construction. I wonder what the distributions of IQ test scores would look like without forced normal distributions? Since it is assumed that IQ tests measure something directly measurable, like height and weight (the usual comparisons), the scores must fall on a normal distribution, which most other measurable psychological traits do not show (Micceri, 1989; Buzsaki and Mizuseki, 2014).

Some may argue that ‘they know this’ (they being psychometricians). However, ‘they’ must know that most of their assumptions and conclusions about ‘good and bad genes’ rest on the huge assumption of the normal distribution. IQ test scores do not naturally show a normal distribution; the tests were designed to create it. Saying that a normal distribution is forced because most psychological traits show a strong skew to one side is meaningless. The fact of the matter is that, given how the tests are constructed, we should be cautious about what these tests test and about the assumptions that we currently hold about them.

Find the Genes: Testosterone Version

1600 words

Testosterone has a similar heritability to IQ (between .4 and .6; Harris, Vernon, and Boomsma, 1998; Travison et al, 2014). To most, this would imply a significant effect of genes on the production of testosterone and therefore we should find a lot of SNPs that affect the production of testosterone. However, testosterone production is much more complicated than that. In this article, I will talk about testosterone production and discuss two studies which purport to show a few SNPs associated with testosterone. Now, this doesn’t mean that the SNPs cause high/low testosterone, just that they were associated. I will then speak briefly on the ‘IQ SNPs’ and compare them to ‘testosterone SNPs’.

Testosterone SNPs?

Complex traits are ‘controlled’ by many genes and environmental factors (Garland Jr., Zhao, and Saltzman, 2016). Testosterone is a complex trait, so along with the heritability of testosterone being .4 to .6, there must be many genes of small effect that influence testosterone, just like they supposedly do for IQ. This is obviously wrong for testosterone, which I will explain below.

Back in 2011 it was reported that genetic markers were discovered ‘for’ testosterone, estrogen, and SHBG production, and that genetic variants in the SHBG locus and on the X chromosome were associated with substantial testosterone variation and increased the risk of low testosterone (important to keep in mind) (Ohlsson et al, 2011). The study was done since low testosterone is linked to numerous maladies. Low testosterone is related to cardiovascular risk (Maggio and Basaria, 2009), insulin sensitivity (Pitteloud et al, 2005; Grossman et al, 2008), metabolic syndrome (Salam, Kshetrimayum, and Keisam, 2012; Tsuijimora et al, 2013), heart attack (Daka et al, 2015), elevated risk of dementia in older men (Carcaillon et al, 2014), muscle loss (Yuki et al, 2013), and stroke and ischemic attack (Yeap et al, 2009). So this is a very important study for understanding the genetic determinants of low serum testosterone.

Ohlsson et al (2011) conducted a meta-analysis of GWASs, using a sample of 14,429 ‘Caucasian’ men. To be brief, they discovered two SNPs associated with testosterone by performing a GWAS of serum testosterone concentrations on 2 million SNPs on over 8,000 ‘Caucasians’. The most strongly associated SNP discovered, rs12150660, was associated with low testosterone in this analysis, as well as in a study of Han Chinese, though it is rare; rs5934505 was also associated with an increased risk of low testosterone (Chen et al, 2016). Chen et al (2016) also caution that their results need replication (but I will show that it is meaningless due to how testosterone is produced in the body).

Ohlsson et al (2011) also found the same associations with the same two SNPs, along with rs6258, which affects how testosterone binds to SHBG. Ohlsson et al (2011) also validated their results: “To validate the independence of these two SNPs, conditional meta-analysis of the discovery cohorts including both rs12150660 and rs6258 in an additive genetic linear model adjusted for covariates was calculated.” Both SNPs were independently associated with low serum testosterone in men (less than 300ng/dl which is in the lower range of the new testosterone guidelines that just went into effect back in July). Men who had 3 or more of these SNPs were 6.5 times more likely to have lower testosterone.

Ohlsson et al (2011) conclude that they discovered genetic variants in the SHBG locus and X chromosome that significantly affect serum testosterone production in males (noting that it’s only on ‘Caucasians’ so this cannot be extrapolated to other races). It’s worth noting that, as can be seen, these SNPs are not really associated with variation in the normal range, but near the lower end of the normal range in which people would then need to seek medical help for a possible condition they may have.

In infant males, no SNPs were significantly associated with salivary testosterone levels, and the same was seen for infant females. Individual variation in salivary testosterone levels during mini-puberty (Kurtoglu and Bastug, 2014) was explained by environmental factors, not SNPs (Xia et al, 2014). They also replicated Carmaschi et al (2010), who also showed that environmental factors influence testosterone more than genetic factors in infancy. There is a direct correlation between salivary testosterone levels and free serum testosterone (Wang et al, 1981; Johnson, Joplin, and Burin, 1987), so free serum testosterone was indirectly tested.

This is interesting because, as I’ve noted here numerous times, testosterone is indirectly controlled by DNA, and it can be raised or lowered due to numerous environmental variables (Mazur and Booth, 1998; Mazur, 2016), such as marriage (Gray et al, 2002; Burnham et al, 2003; Gray, 2011; Pollet, Cobey, and van der Meij, 2013; Farrelly et al, 2015; Holmboe et al, 2017), having children (Gray et al, 2002; Gray et al, 2006; Gettler et al, 2011), and obesity (Palmer et al, 2012; Mazur et al, 2013; Fui, Dupuis, and Grossman, 2014; Jayaraman, Lent-Schochet, and Pike, 2014; Saxbe et al, 2017). Smoking is not clearly related to testosterone (Zhao et al, 2016), and high-carb diets decrease testosterone (Silva, 2014). Since most testosterone decline can be ameliorated with environmental interventions (Shi et al, 2013), it’s not a foregone conclusion that testosterone will sharply decrease around age 25-30.

Studies on ‘testosterone genes’ only show associations, not causes. Genes don’t directly cause testosterone production; it is indirectly controlled by DNA, as I will explain below. The studies on the numerous environmental variables that decrease testosterone are proof enough of the huge effects of environment on testosterone production and synthesis.

How testosterone is produced in the body

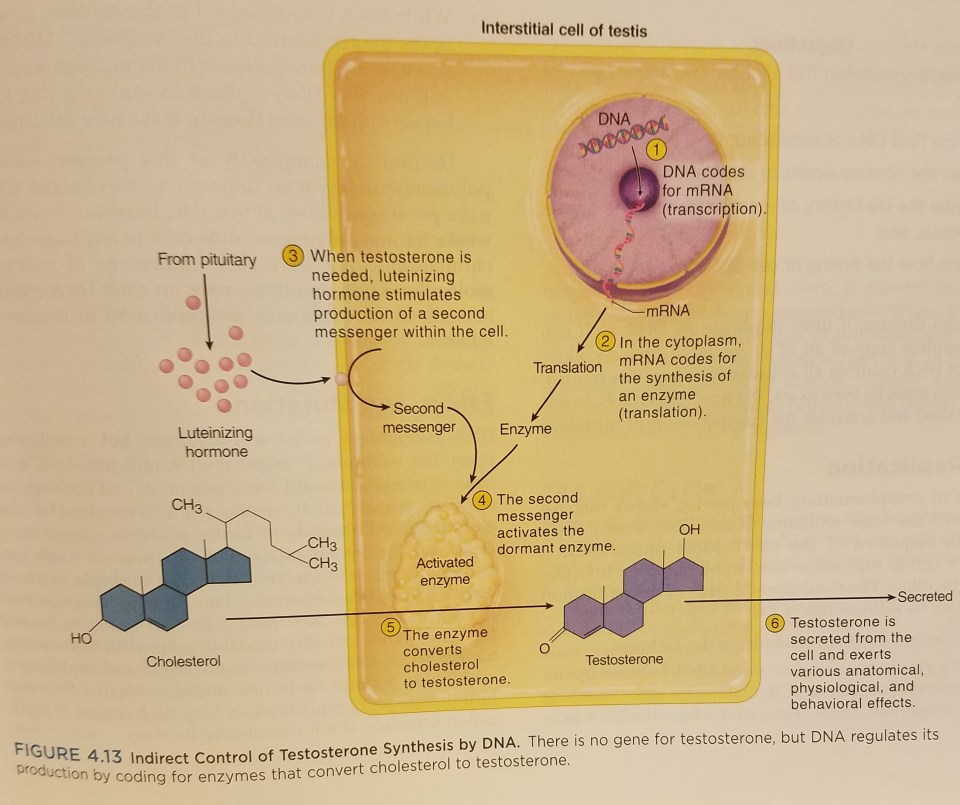

There are five simple steps to testosterone production: 1) DNA codes for mRNA; 2) mRNA codes for the synthesis of an enzyme in the cytoplasm; 3) luteinizing hormone stimulates the production of another messenger in the cell when testosterone is needed; 4) this second messenger activates the enzyme; 5) the enzyme then converts cholesterol to testosterone (Leydig cells produce testosterone in the presence of luteinizing hormone) (Saladin, 2010: 137). Testosterone is a steroid and so there are no ‘genes for’ testosterone.

Cells in the testes enzymatically convert cholesterol into the steroid hormone testosterone. Quoting Saladin (2010: 137):

But to make it [testosterone], a cell of the testis takes in cholesterol and enzymatically converts it to testosterone. This can occur only if the genes for the enzymes are active. Yet a further implication of this is that genes may greatly affect such complex outcomes as behavior, since testosterone strongly influences such behaviors as aggression and sex drive. [RR: Most may know that I strongly disagree with the fact that testosterone *causes* aggression, see Archer, Graham-Kevan and Davies, 2005.] In short, DNA codes only for RNA and protein synthesis, yet it indirectly controls the synthesis of a much wider range of substances concerned with all aspects of anatomy, physiology, and behavior.

(Figure from Saladin, 2010: 137; Anatomy and Physiology: The Unity of Form and Function)

Genes only code for RNA and protein synthesis, and thus genes do not *cause* testosterone production. This is a misconception most people have: if it’s a human trait, then it must be controlled by genes. As can be seen, and as is already known in biology, genes control such traits only ultimately (indirectly), not proximately. Genes, on their own, are not causes but passive templates (Noble, 2008; Noble, 2011; Krimsky, 2013; Noble, 2013; Also read Exploring Genetic Causation in Biology). This is something that people need to understand; genes on their own do nothing until they are activated by the system.

What does this have to do with ‘IQ genes’?

My logic here is very simple: 1) Testosterone has the same heritability range as IQ. 2) One would assume, as is done with IQ, that since testosterone is a complex trait it must be controlled by ‘many genes of small effect’. 3) Therefore, since I showed that there are no ‘genes for’ testosterone and only ‘associations’ (which could most probably be mediated by environmental interventions) with low testosterone, may the same hold true for ‘IQ genes/SNPs’? These testosterone SNPs I talked about from Ohlsson et al (2011) were associated with low testosterone. These ‘IQ SNP’ studies (Davies et al, 2017; Hill et al, 2017; Savage et al, 2017) are the same, except that we have an actual idea of how testosterone is produced in the body, we know that DNA is indirectly controlling its production, and, most importantly, there is/are no ‘gene[s] for’ testosterone.

Conclusion

Testosterone has the same heritability range as IQ, is a complex trait like IQ, but, unlike how IQ is purported to be, it [testosterone] is not controlled by genes; only indirectly. My reasoning for using this example is simple: something has a moderate to high heritability, and so most would assume that ‘numerous genes of small effect’ would have an influence on testosterone production. This, as I have shown, is false. It’s also important to note that Ohlsson et al (2011) showed associated SNPs in regards to low testosterone—not testosterone levels in the normal range. Of course, only when physiological values are outside of the normal range will we notice any difference between men, and only then will we find—however small—genetic differences between men with normal and low levels of testosterone (I wouldn’t be surprised if lifestyle factors explained the lower testosterone, but we’ll never know that in regards to this study).

Testosterone production is a real, measurable physiologic process, as is the hormone itself. This is unlike the so-called physiologic process that ‘g’ is supposed to be, which does not mimic any known physiologic process in the body and which is covered with unscientific metaphors like ‘power’ and ‘energy’ and so on. This example, in my opinion, is important for this debate. Sure, Ohlsson et al (2011) found a few SNPs associated with low testosterone. That’s beside the point. They are only associated with low testosterone; they do not cause low testosterone. So, I assert, these so-called associated SNPs do not cause differences in IQ test scores; just because they’re ‘associated’ doesn’t mean they ‘cause’ the differences in the trait in question. (See Noble, 2008; Noble, 2011; Krimsky, 2013; Noble, 2013.) The testosterone analogy that I made here buttresses my point due to the similarities (it is a complex trait with high heritability) with IQ.

IQ Test Construction, IQ Test Validity, and Raven’s Progressive Matrices Biases

2050 words

There are a lot of conceptual problems with IQ tests that I never see talked about. The main ones are how the tests are constructed (to fit a normal curve, no less); the fact that there is no construct validity to the tests (IQ tests aren’t calibrated against a biological model like breathalyzers are calibrated against a model of alcohol in the blood stream); and how the Raven’s Progressive Matrices test is actually biased despite being touted as the most culture-free test since all you’re doing is rotating abstract symbols to see what comes next in the sequence. These three problems have important implications for the ‘power’ of the IQ tests, the most important being the test construction and validity.

I) IQ test construction

IQ tests are constructed with the assumption that we know what IQ tests test (we don’t) and with the prior ‘knowledge’ of who is or is not intelligent. Test constructors construct the tests to reveal presumed differences between individuals.

It is assumed that IQ scores lie on a normal distribution (they don’t), even though few natural bio functions conform to this curve. Another problem with IQ test construction is the assumption that IQ increases with age and levels off after puberty. This, like the other things, has been built into the test by choosing items that an increasing proportion of children pass. You can, of course, reverse this effect by choosing items that older people do well on and younger people don’t.

Further, they keep relatively more items that about 50 percent of children get right while keeping a smaller proportion of items that most or very few children get right, which, in effect, presupposes who is or is not intelligent.

Though, you never see those who believe that IQ is a ‘good enough’ proxy for intelligence ever bringing this up. Why? This is very important for the validity of these tests. Because if the way the tests are constructed is wrong, and test scores are forced to fit a normal distribution when no normal distribution actually exists for most human mental (including IQ scores) and physiological traits, then the assumptions and conclusions drawn from them are wrong. IQ tests are constructed with the prior idea of who is or is not ‘intelligent’, and this is done through how the items are chosen: relatively more of the items that about 50 percent of people get right are kept, while a smaller proportion of the items that most people get right or wrong are kept. This is how this so-called ‘normal curve’ appears in IQ tests and is why the book The Bell Curve has the name it has. But bell curves don’t exist for a multitude of traits, including IQ!

Simon (1997: 204) writes (emphasis mine):

There is another, and completely irrefutable, reason why the bell-shaped curve proves nothing at all in the context of H-M’s book: The makers of IQ tests consciously force the test into such a form that it produces this curve, for ease of statistical analysis. The first versions of such tests invariably produce odd-shaped distributions. The test-makers then subtract and add questions to find those that discriminate well between more-successful and less-successful test-takers. For this reason alone the bell-shaped IQ curve must be considered an artifact rather than a fact, and therefore tells us nothing about human nature or human society.

The analysis and selection of items that go on the tests are biased since there is no cognitive theory on which the analysis and selection of items are based. Carpenter, Just and Shell (1990: 408) note how John Raven, the creator of the Raven’s Progressive Matrices, even discussed this in his personal notes, writing “He used his intuition and clinical experience to rank order the difficulty of the six problem types. Many years later, normative data from Forbes (1964), shown in Figure 3, became the basis for selecting problems for retention in newer versions of the test and for arranging the problems in order of increasing difficulty, without regard to any underlying processing theory.”

II) IQ test validity

Another problem with IQ tests is their validity. People attempt to ‘prove’ their validity by correlating job performance with IQ scores, though there are huge flaws in the studies purporting to show a .5 correlation between IQ and job performance (Richardson, 2002; Richardson and Norgate, 2015). IQ tests are not like, say, breathalyzers (which are calibrated against a model of blood alcohol) or white blood cell count (which is a proxy for disease in the body). Those two measures have a solid theoretical basis and underpinning: a higher blood alcohol reading means the individual has consumed more alcohol. The same is true for white blood cell count. The same is not true for IQ tests.

One of the biggest measures used in regard to job performance and IQ testing (people use job performance to attempt to validate IQ tests) is supervisor rating. However, supervisory ratings are hugely subjective, and a lot of the factors that lead a supervisor to call someone a ‘good worker’ are not variables that pertain just to that job.

The only ‘validity’ that IQ tests have is correlations with other IQ tests and tests like the SAT. This is not validity. Say the breathalyzer wasn’t calibrated against a model of blood alcohol in the body: would breathalyzers still be a valid tool to test people’s blood alcohol level? On that same note, let’s say that white blood cell count wasn’t construct valid. Would we be able to reliably use white blood cell count as a valid measure for disease in the body? These very same problems plague IQ tests, yet people accept them as ‘proxies’ for intelligence: supposedly they test ‘enough of intelligence’ to be able to say that one person is smarter than another because they scored higher on a test and therefore tap into this mystical ‘g’ that they have more of, which is like a ‘power’ or ‘energy’.

These tests, therefore, are constructed with the idea of who is or is not intelligent and you can see that by looking at how the items are chosen for the test. That’s not scientific. So a true test of ‘intelligence’ may not even exist since these tests have this type of construct bias already in them.

IQ tests have no validity like breathalyzers and white blood cell count, and the so-called ‘culture-free’ IQ test Raven’s Progressive Matrices is anything but.

III) Raven’s and culture bias

I specifically asked Dr. James Thompson about Raven’s being culture-fair. I said that I recall Linda Gottfredson saying that people say that Ravens is culture-fair only because Jensen said it:

Yes, Gottfredson made that remark, and I remember her doing it at an ISIR conference.

So that’s one thing about Ravens that crumbles. A quote from Ken Richardson’s book Genes, Brains, and Human Potential: The Science and Ideology of Intelligence:

It is well known that families and subcultures vary in their exposure to, and usage of, the tools of literacy, numeracy, and associated ways of thinking. Children will vary in these because of accidents of background. …that background experience with specific cultural tools like literacy and numeracy is reflected in changes in brain networks. This explains the importance of social class context to cognitive demands, but is says nothing about individual potential.

(This argument on social class is much more complex than ‘poor people are genetically predisposed to be dumb and poor’.

Consider a recent GCTA study by Plomin et al., who reported a SNP-based heritability estimate of 35% for “general cognitive ability” among UK 12 year olds (as compared to a twin heritability estimate of 46%) [8]. According to the Wellcome Trust “genetic map of Britain,” striking patterns of genetic clustering (i.e. population stratification) exist within different geographic regions of the UK, including distinct genetic clusterings comprised of the residents of the South, South-East and Midlands of England; Cumbria, Northumberland and the Scottish borders; Lancashire and Yorkshire; Cornwall; Devon; South Wales; the Welsh borders; Anglesey in North Wales; Scotland and Ireland; and the Orkney Islands [8]. Now consider the title of a study from the University and College Union: “Location, Location, Location – the widening education gap in Britain and how where you live determines your chances” [9]. This state of affairs (not at all unique to the UK), combined with widespread geographic population stratification, is fertile ground for spurious heritability estimates.

Still Chasing Ghosts: A New Genetic Methodology Will Not Find the “Missing Heritability”

I think this argument is interesting, and it throws a wrench into a lot of things, but more on that another day.)

Richardson continues:

In other words, items like those in the Raven contain hidden structure which makes them more, not less, culturally steeped than any other kind of intelligence testing items […] like the Raven, as somehow not knowledge-based, when all are clearly learning dependent. Ironically, such cultural-dependency testing is sometimes tacitly admitted by test users. For example, when testing children in Kuwait on the Raven in 2006, Ahmed Abdel-Khalek and John Raven transposed the items “to read from right to left following the custom of Arabic writings.” (Richardson, 2017: 99)

Finally, we have this dissertation which shows that urban peoples score better than hunter-gatherers (relevant to this present article):

Reading was the greatest predictor of performance Raven’s, despite controlling for age and sex. Attendance was also strongly correlated with Raven’s performance. These findings suggest that reading, or pattern recognition, could be fundamentally affecting the way an individual problem solves or learns to learn, and is somehow tapping into ‘g’. Presumably the only way to learn to read is through schooling. It is, therefore, essential that children are exposed to formal education, have the motivation to go/stay in school, and are exposed to consistent, quality training in order to develop the skills associated with improved performance. (pg. 83)

This is telling. It means that there is no such thing as a ‘culture-free’ IQ test; something in the test will always make it culturally unfair.

People may say ‘It’s only rotating pictures and shapes to get the final answer, how much schooling could you need?’ But as seen above with the Tsimane, schooling is very important to IQ tests, since they test learned skills. I’ve seen some people claim that IQ tests don’t test learned ability and that it’s all native, unlearned ability. That claim is incorrect.

So although the symbols in a test like the RPM are experience-free, the rules governing their changes across the matrix are certainly not, and they are more likely to be already represented in the minds of children from middle-class homes, less so in others. Performance on the Raven’s test, in other words, is a question not of inducing ‘rules’ from meaningless symbols, in a totally abstract fashion, but of recruiting ones that are already rooted in the activities of some cultures rather than others. Like so many problems in life, including fields as diverse as chess, science and mathematics (e.g. Chi & Glaser, 1985), each item on the Raven’s test is a recognition problem (matching the covariation structure in a stimulus array to ones in background knowledge) before it is a reasoning problem. The latter is rendered easy when the former has been achieved. Similar arguments can be made about other so-called ‘culture-free’ items like analogies and classifications (Richardson & Webster, 1996). (Richardson, 2002: pg 292-292)

Everyday life is also more complex than the hardest items on Raven’s Matrices; the test’s demands are not complex compared to tasks undertaken in everyday life (Carpenter, Just, and Shell, 1990). They conclude that differences in working memory cause the differences in performance, but working memory is an ill-defined concept in psychology. They do say, though, that “The processes that distinguish among individuals are primarily the ability to induce abstract relations and the ability to dynamically manage a large set of problem-solving goals in working memory.” So item complexity can’t be what makes Raven’s items harder for some people than for others, since everyday life is more complex.

I’ll end with a bit of physiology. What physiological process does IQ mimic in the body? If it is a physiological process, surely you’re aware that physiological processes *are not* static. IQ is said to be stable at adulthood; what a strange physiological process. Let’s say for argument’s sake that IQ really does test some intrinsic, biological process. Does it seem weird to you that a supposed real, stable, biological, bodily function of an individual would be different at different times?

Conclusion

There are a lot of assumptions about IQ tests that are never talked about. The most important is that the tests are constructed to fit a normal curve when most traits important for survival aren’t normally distributed. You can see this by looking at how the items are prepared for the test: they are chosen with a prior assumption of who is or isn’t intelligent, and they are chosen to produce a normal curve, because most of the tests’ assumptions and conclusions rest on the reality of the normal curve. IQ tests have no construct validity. They’re not like breathalyzers, which are calibrated against a model of blood alcohol, or white blood cell count, which is a proxy for disease in the body. Raven’s, despite what is commonly stated about the test, is not unbiased; it is perhaps the most biased IQ test of them all. These are problems with IQ tests that are rarely spoken about, and they should have you call into question the ‘power’ of a test which assumes who is or isn’t intelligent ahead of time.

Most Human Performance Traits Do Not Lie On a Bell Curve

1050 words

Steve Sailer published an article the other day titled Wieseltier vs. “The Bell Curve” and I left a comment saying that psychological traits are not normally distributed. Two people responded to me, and I replied, but Sailer didn’t approve my two comments. I have a blog, so I can post them here.

“We revisit a long-held assumption in human resource management, organizational behavior, and industrial and organizational psychology”

Maybe instead debunking allegedly long-held assumptions they should Notice that none of those disciplines actually exists.

They do actually exist.

“Human resource management: Human Resource Management (HRM) is the term used to describe formal systems devised for the management of people within an organization. The responsibilities of a human resource manager fall into three major areas: staffing, employee compensation and benefits, and defining/designing work.”

Organizational behavior: “the study of the way people interact within groups. Normally this study is applied in an attempt to create more efficient business organizations. The central idea of the study of organizational behavior is that a scientific approach can be applied to the management of workers.”

Industrial and organizational psychology: “This branch of psychology is the study of the workplace environment, organizations, and their employees. Technically, industrial and organizational psychology – sometimes referred to as I/O psychology or work psychology – actually focuses on two separate areas that are closely related.”

O’Boyle Jr and Aguinis (2012) write:

We conducted 5 studies involving 198 samples including 633,263 researchers, entertainers, politicians, and amateur and professional athletes. Results are remarkably consistent across industries, types of jobs, types of performance measures, and time frames and indicate that individual performance is not normally distributed—instead, it follows a Paretian (power law) distribution. Assuming normality of individual performance can lead to misspecified theories and misleading practices. Thus, our results have implications for all theories and applications that directly or indirectly address the performance of individual workers including performance measurement and management, utility analysis in preemployment testing and training and development, personnel selection, leadership, and the prediction of performance, among others.

Even most types of job performance and performance measures don’t fit a normal curve.
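To make the difference between a normal and a Paretian distribution concrete, here is a minimal sketch that computes what share of total output the top 20 percent of performers would account for under each. The parameter values (a normal with mean 100 and SD 15, a Pareto shape chosen so that the familiar 80/20 pattern holds) are my own illustrative assumptions, not figures from O’Boyle Jr and Aguinis (2012).

```python
from math import log
from statistics import NormalDist


def top_share_normal(mean, sd, top=0.2):
    """Share of the total held by the top `top` fraction under a normal distribution.

    Uses the truncated-normal mean: E[X | X > cutoff] = mean + sd * pdf(z) / top.
    Only sensible when mean is positive and negative values are negligible.
    """
    std = NormalDist()
    z = std.inv_cdf(1 - top)
    top_mean = mean + sd * std.pdf(z) / top
    return top * top_mean / mean


def top_share_pareto(alpha, top=0.2):
    """Share of the total held by the top `top` fraction under a Pareto (power law).

    Follows from the Pareto Lorenz curve; requires shape alpha > 1.
    """
    return top ** (1 - 1 / alpha)


# Hypothetical parameters, chosen only for illustration.
normal_share = top_share_normal(mean=100, sd=15)
pareto_share = top_share_pareto(alpha=log(5) / log(4))

print(f"Top 20% share, normal: {normal_share:.2f}")
print(f"Top 20% share, Pareto: {pareto_share:.2f}")
```

Under these assumed parameters the top fifth produces roughly a quarter of the output if performance is normal, and four fifths of it if performance is Paretian. Theories that assume normality will therefore understate how much the top performers contribute.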

You say, “…psychological traits aren’t normally distributed.”

But the abstract you linked says,

…individual performance is not normally distributed.

Yes, those of us who have had the misfortune to manage work groups know all about 80/20. This is performance, not “psychological traits.”

Psychological tests aren’t a measure of performance? Traits like IQ only show a normal distribution because the normal distribution is built into the tests (see below).

The other one linked says,

… at many physiological and anatomical levels in the brain, the distribution of numerous parameters is in fact strongly skewed . . .

Okay. The cylinders in the straight-six engine of my BMW lean over to one side, but that doesn’t seem to effect the horsepower. This is a physical trait.

So, what of those “psychological traits”? Like IQ? Granted, it is a kind of performance, one of taking IQ tests, but the results have a normal distribution, and it’s not the kind of performance being measured in the study referenced anyway. IQ, by definition, is a “psychological trait,” and it has a normal distribution.

IQ tests have been constructed so that the scores will exhibit a bell curve distribution. That is, the tests themselves are constructed to reveal differences that are already presumed. The scores are assumed to be normally distributed, but the normal distribution is built into the test through item selection: items that about 50 percent of the testees get right are kept in the greatest numbers, along with smaller proportions of items that very many or very few testees get right. (See Richardson, 2002 for more information.) Even most psychological constructs are not normally distributed. This is like g being supposedly physiological when, if it were, it wouldn’t mimic any known physiologic process in the body.
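A toy calculation can show how the shape of a total-score distribution depends on which items the constructor keeps. This is only a sketch under a strong simplifying assumption of my own: every item is answered independently, which real test items are not. The item pools and pass rates are hypothetical, not taken from any actual IQ test or from Richardson (2002).

```python
def score_distribution(pass_rates):
    """Exact distribution of the number of items passed.

    Assumes items are independent (a simplification); dist[k] is the
    probability of passing exactly k items.
    """
    dist = [1.0]
    for p in pass_rates:
        new = [0.0] * (len(dist) + 1)
        for k, prob in enumerate(dist):
            new[k] += prob * (1 - p)   # item failed
            new[k + 1] += prob * p     # item passed
        dist = new
    return dist


def skewness(dist):
    """Skewness of a discrete score distribution (0 means symmetric)."""
    mean = sum(k * pr for k, pr in enumerate(dist))
    var = sum((k - mean) ** 2 * pr for k, pr in enumerate(dist))
    third = sum((k - mean) ** 3 * pr for k, pr in enumerate(dist))
    return third / var ** 1.5


# Hypothetical pool A: mostly items that half of testees pass,
# plus a few easy and a few hard ones.
balanced_items = [0.5] * 30 + [0.8] * 5 + [0.2] * 5
# Hypothetical pool B: only hard items that 10 percent of testees pass.
hard_items = [0.1] * 40

balanced_skew = skewness(score_distribution(balanced_items))
hard_skew = skewness(score_distribution(hard_items))

print(f"Skewness with balanced items: {balanced_skew:.3f}")
print(f"Skewness with hard items:     {hard_skew:.3f}")
```

In this toy setup the balanced pool gives a symmetric, bell-shaped score distribution, while the all-hard pool gives a right-skewed one with the same number of items. The symmetry comes from the item selection, not from anything about the testees.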

Buzsaki and Mizuseki (2014) review data showing that sensory acuity, reaction time, memory, word usage and sentence lengths are not normally distributed. Basic physiologic processes, too, are not normally distributed, like visual acuity, resting heart rate, metabolic rate, etc. And this makes sense, because those traits are crucial to human survival and therefore need to be malleable. Hormones rise, for instance, during a life-or-death situation, and that is what is needed for survival. So, therefore, few physiological traits are normally distributed.

Honestly, I don’t know what your point is, but I don’t disagree with what you have shown me. I know it’s true, but I’m just too far left on your ski jump curve of losers to grasp why you responded to me that way.

Wieseltier was referring to The Bell Curve, in which results have a normal distribution.

Right, the IQ book. But tests are constructed with the assumption of a normal distribution, while psychological traits are not normally distributed. Read Mizuseki and Buzsaki’s work.

Burt (1967) writes:

A detailed analysis of test results obtained from a large sample of English children (4,665 in all), supplemented by a study of the meagre data already available, demonstrates beyond reasonable doubt that the distribution of individual differences in general intelligence by no means conforms with strict exactitude to the so-called normal curve.

In sum, IQ tests are constructed with the assumption that whatever is being tested lies on a bell curve. Clearly, since they are constructed in such a way, the results are forced to fit a normal distribution. But, as seen above, most traits that are critical to survival are not normally distributed, so why should intelligence/IQ be any different? The data from Buzsaki and Mizuseki (2014) show that “skewed … distributions are fundamental to structural and functional brain organization.”

(Also read The Myth of the Bell Curve and The Unicorn, The Normal Curve, and Other Improbable Creatures, where Micceri shows that achievement measures in “language arts, quantitative arts/logic, sciences, social studies/history, and skills such as study skills, grammar, and punctuation” are not normally distributed. Human performance does not follow a bell curve. Also read The Bell Curve Is A Myth — Most People Are Actually Underperformers, and The Bell Curve in Psychological Research and Practice: Myth or Reality?: “If IQ scores distribute normally, this does not mean that intelligence equally distribute normally in the population.” … “In this way, a normal distribution in summated test scores, for example, would be seen as the sign of the presence of an error sufficient to give scores the characteristic bell shape, not as the proof of a good measurement.”)

Microcephaly and Normal IQ

1400 words

In my last article on brain size and IQ, I showed how people with half of their brains removed and people with microcephaly can have IQs in the normal/above average range. There is a pretty large amount of data out there on microcephalics and normal intelligence—even a family showing normal intelligence in two generations despite having dominantly inherited microcephaly.