Is Racial Superiority in Sports a Myth? A Response to Kerr (2010)

Racial differences in sporting success are undeniable. The races are somewhat stratified across different sports, and we can trace the cause of this to differences in genes and where one’s ancestors were born. There is a relationship between the two: populations have certain traits which their ancestors also had, these traits correlate with geographic ancestry, and so we can explain how and why certain populations dominate certain sporting events (or would have the capacity to, based on body type and physiology). Critiques of Taboo: Why Black Athletes Dominate Sports and Why We’re Afraid to Talk About It are few and far between. The few that I am aware of are alright, but the one I will discuss today is not particularly good, because the author makes a lot of claims he could have easily verified himself.

In 2010, Ian Kerr published The Myth of Racial Superiority in Sports, in which he states that there is a “dark side” to sports and specifically sets his sights on Jon Entine’s (2000) book Taboo. In this article, Kerr (2010) makes a lot of, in my opinion, giant claims which require a lot of evidence and argument in order to show their validity. I will discuss Kerr’s views on race, biology, the “environment”, “genetic determinism”, and racial dominance in sports (with a focus on sprinting and distance running in this article).

Race

Since establishing the reality and validity of the concept of race is central to proving Entine’s (2000) argument on racial differences in sports, I must prove the reality of race (and rebut what Kerr 2010 writes about race). Kerr (2010: 20) writes:

First, it is important to note that Entine is not working in a vacuum; his assertions about race and sports are part of a larger ongoing argument about folk notions of race. Folk notions of race founded on the idea that deep, mutually exclusive biological categories dividing groups of people have scientific and cultural merit. This type of thinking is rooted in the notion that there are underlying, essential differences among people and that those observable physical differences among people are rooted in biology, in genetics (Ossorio, Duster, 2005: 2).

Dividing groups of people does have scientific, cultural and philosophical merit. The concept of “essences” has long been discarded by philosophers. There are, though, differences in both anatomy and physiology between people from different geographic locations, and these, at the extreme end, would be enough to cause the differences seen in elite sporting competition.

Either way, the argument for the existence of race is simple: 1) populations differ in physical attributes (facial, morphological) which then 2) correlate with geographic ancestry. Therefore, race has a biological basis, since the physical differences between these populations are biological in nature. Now that we have established that race exists using only physical features, it should be extremely simple to show how Kerr (2010) is in error with his strong claims regarding race and the so-called “mythology” of racial superiority in sports. Race is biological; the biological argument for race is sound (see Hardimon, 2017).

Genetic determinism

True genetic determinism, as it is commonly conceived, does not have any sound, logical basis (Resnik and Vorhaus, 2006), so Kerr’s (2010) claims in this section need to be dissected. The next quote is pretty much imperative to the soundness and validity of his whole article, and let’s just say that it’s easy to rebut, which invalidates his entire argument:

Vinay Harpalani is one of the most outspoken critics of using genetic determinism to validate notions of inferiority or the superiority of certain groups (in this case Black athletes). He argues that in order for any of Entine’s claims to be valid he must prove that: 1) there is a systematic way to define Black and White populations; 2) consistent and plausible genetic differences between the populations can be demonstrated; 3) a link between those genetic differences and athletic performance can be clearly shown (2004).

This is too easy to prove.

1) While I do agree that the terms ‘white’ and ‘black’ are extremely broad, population clusters that correspond to what we call ‘white’ and ‘black’ do exist, as can be seen in Rosenberg et al (2002), and they are a part of continental-level minimalist races. So is there a systematic way to define ‘Black’ and ‘White’ populations? Yes, there is: genetic testing will show where one’s ancestors recently came from, thereby proving point 1.

2) Consistent and plausible genetic differences between populations can be demonstrated. Sure, there is more variation within races than between them (Lewontin, 1972; Rosenberg et al, 2002; Witherspoon et al, 2007; Hunley, Cabana, and Long, 2016). But even these small between-continent/group differences would have huge effects at the tail end of the distribution.

3) I have compiled a good deal of data on genetic differences between African ethnies and European ethnies and how these genetic differences then cause differences in elite athletic performance. I have shown that Jamaicans, West Africans, Kenyans and Ethiopians (certain subgroups within the latter two countries) have genetic/somatotypic differences that then lead to differences in these sporting competitions. So we can say that race can predict traits important for certain athletic competitions.

To summarize: 1) the terms ‘White’ and ‘Black’ are broad, but we can still classify individuals along these lines; 2) consistent and plausible genetic differences between races and ethnies do exist; 3) a link between these genetic differences and athletic differences between groups can be found. Therefore Entine’s (2000) arguments are sound.

Kerr (2010) then makes a few comments on the West’s “obsession with superficial physical features such as skin color”. But under Hardimon’s minimalist race concept, skin color is a part of the argument for the existence and biological reality of race. Skin color is therefore not ‘superficial’, since it is also a tell of where one’s ancestors evolved in the recent past. Kerr (2010: 21) then writes:

Marks writes that Entine is saying one of three things: that the very best Black athletes have an inherent genetic advantage over the very best White athletes; that the average Black athlete has a genetic advantage over the average White athlete; that all Blacks have the genetic potential to be better athletes than all Whites. Clearly these three propositions are both unknowable and scientifically untenable. Marks writes that “the first statement is trivial, the secondly statistically intractable, and the third ridiculous for its racial essentialism” (Marks, 2000: 1077).

The first two, in my opinion (the very best black athletes have an inherent genetic advantage over the very best white athletes and the average black athlete has a genetic advantage over the average white athlete), are true, and I don’t know how you can deny this; especially if you’re talking about AVERAGES. The third statement is ridiculous, because it doesn’t work like that. Kerr (2010), of course, states that race is not a biological reality, but I’ve proven that it is so that statement is a non-factor.

Kerr (2010) then states that “demonstrating across the board genetic variations between populations — has in recent years been roundly debunked”, and also says “Differences in height, skin color, and hair texture are simply the result of climate-related variation.” This is one of the craziest things I’ve read all year! Differences in height would cause differences in elite sporting competition; differences in skin color can be conceptualized as one’s ancestors’ multi-generational adaptation to the climate they evolved in, as can hair texture. If only Kerr (2010) knew that this statement was the beginning of the end of his shitty argument on Entine’s book. Race is a social construct of a biological reality, and there are genetic differences between races, however small (Risch et al, 2002; Tang et al, 2005), but these small differences can mean big differences at the elite level.

The “environment” and biological variability

Kerr (2010) then shifts his focus from genetic differences to biological differences. He specifically discusses the Kenyans (the Kalenjin), stating that “height or weight, which play an instrumental role in helping define an individual’s athletic prowess, have not been proven to be exclusively rooted in biology or genetics.” While heritability estimates for BMI and height are high (both around .8), I think we can disregard those numbers since they came from highly flawed twin studies, and molecular genetic evidence shows lower heritabilities. Either way, surely height is strongly influenced by ‘genes’. Another important caveat is that Kenya has one of the lowest average BMIs in the world, 20.7 for Kenyan men, which is also part of the reason why certain African ethnies dominate running competitions.

I don’t disagree with Kerr (2010) here too much; many papers show that SES/cultural/social factors are very important to Kenyan runners (Onywera et al, 2006; Wilber and Pitsiladis, 2012; Tucker, Onywera, and Santos-Concejero, 2015). You can have all of the ‘physical gifts’ in the world, but if they are not combined with the will to do your best, along with cultural and social factors, you won’t succeed. Having an advantageous genotype and physique is useless without a strong mind (Lippi, Favaloro, and Guidi, 2008):

An advantageous physical genotype is not enough to build a top-class athlete, a champion capable of breaking Olympic records, if endurance elite performances (maximal rate of oxygen uptake, economy of movement, lactate/ventilatory threshold and, potentially, oxygen uptake kinetics) (Williams & Folland, 2008) are not supported by a strong mental background.

Dissecting this, though, is tougher. Being born at certain altitudes will cause certain advantageous traits, such as a larger lung capacity (which gives you an advantage when competing at lower altitudes), but certain subpopulations live in these high-altitude areas. So what is it? Genetic? Cultural? Environmental? All three? Nature vs nurture is a false dichotomy, so it is a mixture of the three.

How does one explain, then, the athlete who trains countless hours a day fine-tuning a jump shot, like LeBron James or shaving seconds off sub-four minute miles like Robert Kipkoech Cheruiyot, a four time Boston Marathon winner?

Literally no one denies that elite athletes put in insane amounts of practice; but even if everyone put in the same amount of practice, they would not all end up with the same abilities.

He also briefly brings up muscle fibers, stating:

These include studies on African fast twitch muscle fibers and development of motor skills. Entine includes these studies to demonstrate irrevocable proof of embedded genetic differences between populations but refuses to accept the fact that any differences may be due to environmental factors or training.

This, again, shows ignorance of the literature. An individual’s muscle fibers are formed during development from the fusion of several myoblasts, with differentiation being completed before birth. Muscle fiber typing is also set by age 6: no difference in skeletal muscle tissue was found when comparing 6-year-olds and adults (Bell et al, 1980). You can, of course, train type II fibers to have similar aerobic capacity to type I fibers, but they’ll never be fully similar. This is something that Kerr (2010) is obviously ignorant of because he’s not well-read on the literature, which causes him to make dumb statements like “any differences [in muscle fiber typing] may be due to environmental factors or training”.

Black domination in sports

Finally, Kerr (2010) discusses the fact that whites dominated certain running competitions in the Olympics and that, before the 1960s, a majority of distance-running gold medals went to white athletes. He then states that the 2008 Boston Marathon winner was Kenyan, but the next 4 behind him were not. Now, let’s check out the 2017 Boston Marathon results: Kenya, USA, and Japan took the top 3 spots, and 5 Kenyans/Ethiopians finished in the top 15. The same is true of the women’s race: a Kenyan winner, with Kenyans/Ethiopians taking 5 of the top 15 spots. The fact that whites used to do well in running sports is a non-factor; Jesse Owens blew away the competition in the Games in Germany, which showed how blacks would begin to dominate in the US decades later.

Kerr (2010) then ends the article with a ton of wild claims, the wildest one, in my opinion, being that “Kenyans are no more genetically different from any other African or European population on average”. Does anyone believe this? Because I have data to the contrary. Kenyans have a higher VO2 max, which of course is trainable but has a ‘genetic’ component (Larsen, 2003); other authors argue that genetic differences between populations account for differences in success in running competition (Vancini et al, 2014); and male and female Kenyan and Ethiopian runners are the fastest in the half and full marathon (Knechtle et al, 2016). There is a large amount of data out there on Kenyan/Ethiopian and others’ dominance in running; it seems Kerr (2010) just ignored it. I agree with Kerr that Kenyans show that humans can adapt to their environment, but his conclusion here:

The fact that runners coming from Kenya do so well in running events attests to the fact the combination of intense high altitude training, consumption of a low-fat, high protein diet, and a social and cultural expectation to succeed have created in recent decades an environment which is highly conducive to producing excellent long-distance runners.

is very strong, and while I don’t disagree at all with anything here, he’s disregarding how somatotype and genes differ between Kenyans and other populations that compete in these sports, differences which then lead to differences in elite sporting competition.

Elite sporting performance is influenced by myriad factors, including psychology, ‘environment’, and genetic factors. Something that Kerr (2010) doesn’t understand—because he’s not well-read on this literature—is that many genetic factors that influence sporting performance are known. The ability to become elite depends on one’s capacity for endurance, muscle performance, the ability of the tendons and ligaments to withstand stress and injury, and the attitude to train and push above and beyond what normal people can do (Lippi, Longo, and Maffulli, 2010). We can then extend this to human races; some are better-equipped to excel in running competitions than others.

On its face, Kerr’s (2010) claim that there are no inherent differences between races is wrong. Races differ in somatotype, which is due to evolution in different geographic locations for tens of thousands of years. The human body is perfectly adapted for long distance running (Murray and Costa, 2012). Since our capabilities for endurance running evolved in Africa, and Africans, theoretically, have a musculoskeletal structure similar to that of the Homo sapiens that left Africa around 70 kya, it’s only logical to state that Africans, on average, have an inherent ability in running competitions (West and East Africans, while North Africans fare very well in middle distance running, which, again, comes down to living in higher altitudes like Kenyans and Ethiopians).

Wagner and Heyward (2000) reviewed many studies on the physiological differences between blacks and whites. Blacks skew towards mesomorphy; black youths had smaller biiliac and bitrochanteric width (the widest measure of the pelvis at the outer edges and the flat process on the femur, respectively), and black infants had longer extremities than white infants (Wagner and Heyward, 2000). We have anatomic evidence that blacks are superior runners (in an American context). Mesomorphic athletes are more likely to be sprinters (Sands et al, 2005; this is also seen in prepubescent children: Marta et al, 2013). Kenyans are ecto-dominant (Vernillo et al, 2013), which helps to explain their success at long-distance running. So just by looking at the phenotype (a marker for race with geographic ancestry, proving the biological existence of race) of an individual or a population, we can confidently state how they will fare, on average, in certain competitions.

Conclusion

Kerr’s (2010) arguments leave a ton to be desired. Race exists and is a biological reality. I don’t know why this paper got published since it was so full of errors; his arguments were not sound and much of the literature contradicts his claims. What he states at the end about Kenyans is not wrong at all, but to not even bring up genetic/biologic differences as a factor influencing their performance is dishonest.

Of course, a whole slew of factors, be they biological, cultural, psychological, genetic, socioeconomic, anatomic, physiologic etc influence sporting performance, but certain traits are more likely to be found in certain populations, and in the year 2018 we have a good idea of what influences elite sporting performance and what does not. It just so happens that these traits are unevenly distributed between populations, and the cause is evolution in differing climates in differing geographic locations.

Race exists and is a biological reality. Biological anatomic/physiological differences between these races then manifest themselves in elite sporting competition. The races differ, on average, in traits important for success in certain competitions. Therefore, race explains some of the variance in elite sporting competition.

Does Playing Violent Video Games Lead to Violent Behavior?

President Trump was quoted the other day on this topic. “We have to look at the Internet because a lot of bad things are happening to young kids and young minds and their minds are being formed,” Trump said, according to a pool report, “and we have to do something about maybe what they’re seeing and how they’re seeing it. And also video games. I’m hearing more and more people say the level of violence on video games is really shaping young people’s thoughts.” But do broad assertions like this, that playing violent video games causes violent behavior, stack up against what the scientific literature says? In short, no, they do not. (A lot of publication bias exists in this debate, too.) Why do people think that violent video games cause violent behavior? Mostly due to the APA and their broad claims with little evidence.

Just doing a cursory Google search of ‘violence in video games pubmed‘ brings up 9 journal articles, so let’s take a look at a few of those.

The first article is titled The Effect of Online Violent Video Games on Levels of Aggression, by Hollingdale and Greitemeyer (2014). They took 101 participants and randomized them to one of four experimental conditions: neutral offline and neutral online (Little Big Planet 2), and violent offline and violent online (Call of Duty: Modern Warfare). After playing, participants answered a questionnaire, and the researchers then measured aggressive behavior using the hot sauce paradigm (Lieberman et al, 1999). Hollingdale and Greitemeyer (2014) conclude that “this study has identified that increases in aggression are not more pronounced when playing a violent video game online in comparison to playing a neutral video game online.”

Staude-Muller (2011) finds that “it was not the consumption of violent video games but rather an uncontrolled pattern of video game use that was associated with increasing aggressive tendencies.” Przybylski, Ryan, and Rigby (2009) found that enjoyment, value, and desire to play in the future were strongly related to competence in the game. Players who were high in trait aggression, though, were more likely to prefer violent games, even though it didn’t add to their enjoyment of the game, while violent content lent little overall variance to the satisfactions previously cited.

Tear and Nielsen (2013) failed to find evidence that violent video game playing leads to a decrease in pro-social behavior (Szycik et al, 2017 also show that video games do not affect empathy). Gentile et al (2014) show that “habitual violent VGP increases long-term AB [aggressive behavior] by producing general changes in ACs [aggressive cognitions], and this occurs regardless of sex, age, initial aggressiveness, and parental involvement. These robust effects support the long-term predictions of social-cognitive theories of aggression and confirm that these effects generalize across culture.” The APA (2015) even states that “scientific research has demonstrated an association between violent video game use and both increases in aggressive behavior, aggressive affect, aggressive cognitions and decreases in prosocial behavior, empathy, and moral engagement.” How true is all of this, though? Does playing violent video games truly increase aggression/aggressive behavior? Does it have an effect on violence in America and shootings overall?

No.

Whitney (2015) states that the video-games-cause-violence paradigm has “weak support” (p. 11) and that, pretty much, we should be cautious before taking this “weak support” as conclusive. He concludes that there is not enough evidence to establish a truly causal connection between violent video game playing and violent and aggressive behavior. Cunningham, Engelstatter, and Ward (2016) tracked the sale of violent video games and criminal offenses after those games were sold. They found that violent crime actually decreased in the weeks following the release of a violent game. Of course, this does not rule out any longer-term effects of violent game-playing, but in the short term, this is good evidence against the claim that violent games cause violence. (Also see the Psychology Today article on the matter.)

We seem to have a few problems here, though. How are we to untangle the effects of video games from those of movies and other forms of violent media that children consume? You can’t. So the researcher(s) must assume that video games and only video games cause this type of aggression. I don’t even see how one can logically state that, out of all types of media, it is violent video games (and not violent movies, cartoons, TV shows etc) that cause aggression/violent behavior.

Back in 2011, the Supreme Court, in Brown v. Entertainment Merchants Association, concluded that the effects on violent/aggressive behavior were small and couldn’t be untangled from the so-called effects of other violent types of media. Ferguson (2015) found that violent video game playing had little effect on children’s mood, aggression levels, pro-social behavior or grades. He also found publication bias in this literature (Ferguson, 2017). Contrary to those who say that video games cause violence/aggressive behavior, video game playing was associated with a decrease in youth crime (Ferguson, 2014; Markey, Markey, and French, 2015, which is in line with Cunningham, Engelstatter, and Ward, 2016). You can read more about this in Ferguson’s article for The Conversation, along with his and others’ responses to the APA, who state that violent video games cause violent behavior (the respondents argue that the APA is biased). (Also read a letter from 230 researchers on the bias in the APA’s Task Force on Violent Media.)

How would one actually untangle the effects of, say, violent video game playing and the effects of such other ‘problematic’ forms of media that also show aggression/aggressive acts towards others and actually pinpoint that violent video games are the culprit? That’s right, they can’t. How would you realistically control for the fact that the child grows up around—and consumes—so much ‘violent’ media, seeing others become violent around him etc; how can you logically state that the video games are the cause? Some may think it logical that someone who plays a game like, say, Call of Duty for hours on end a day would be more likely to be more violent/aggressive or more likely to commit such atrocities like school shootings. But none of these studies have ever come to the conclusion that violent video games may/will cause someone to kill or go on a shooting spree. It just doesn’t make sense. I can, of course, see the logic in believing that it would lead to aggressive behavior/lack of pro-social behavior (let’s say the kid played a lot of games and had little outside contact with people his age), but of course the literature on this subject should be enough to put claims like this to bed.

It’s just about impossible to untangle the so-called small effects of video games on violent/aggressive behavior from those of other types of media such as violent cartoons and violent movies. Who’s to say it’s just the violent video games, and not the violent movies and violent cartoons too, that ‘cause’ this type of behavior? It’s logically impossible to distinguish this, so the small relationship between video games and violent behavior can be safely ignored. The media seems to be getting this right, which is a surprise (though I bet that if Trump had said the opposite, that violent video games don’t cause violent behavior/shootings, these same people would be saying that they do), but a broken clock is right twice a day.

So Trump’s claim (even if he didn’t outright state it) is wrong, along with that of anyone else who would want to jump in and say that video games cause violence. In fact, the literature shows a decrease in violence after games are released (Ferguson, 2014; Markey, Markey, and French, 2015; Cunningham, Engelstatter, and Ward, 2016). The amount of publication bias in this field (Ferguson, 2017; also see Copenhaver and Ferguson, 2015, who show how the APA ignores bias and methodological problems in these studies) should lead one to question the body of data we currently have, since studies that find an effect are more likely to get published than studies that find no effect.
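
The selection effect described here can be illustrated with a small simulation. This is only a sketch under stated assumptions, not a model of the actual literature: the true effect size, the per-study sample size, and the rule that only significantly positive results get published are all hypothetical choices made for illustration.

```python
import random
import statistics


def simulate_publication_bias(true_effect=0.1, n_studies=2000,
                              n_per_study=30, z_cutoff=1.96, seed=0):
    """Compare the mean effect across all studies with the 'published' ones.

    All parameters are hypothetical; the publication rule (only significantly
    positive results are published) is an assumption for illustration.
    """
    rng = random.Random(seed)
    all_effects = []
    published_effects = []
    for _ in range(n_studies):
        # Each simulated study measures a noisy outcome around the true effect.
        sample = [rng.gauss(true_effect, 1.0) for _ in range(n_per_study)]
        estimate = statistics.fmean(sample)
        std_error = statistics.stdev(sample) / n_per_study ** 0.5
        all_effects.append(estimate)
        # Assumed filter: a study is published only if its estimate is
        # positive and exceeds the significance cutoff.
        if estimate / std_error > z_cutoff:
            published_effects.append(estimate)
    return {
        "mean_all": statistics.fmean(all_effects),
        "mean_published": statistics.fmean(published_effects),
        "n_published": len(published_effects),
    }


result = simulate_publication_bias()
print(result)
```

In this sketch the published-only average sits above the average of all simulated studies, which is why a literature filtered this way can overstate an effect.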

Video games do not cause violent/aggressive behavior/school shootings. There is literally no evidence that they are linked to the deaths of individuals, and with the small effects noted on violent/aggressive behavior due to violent video game playing, we can disregard those claims. (One thing video games are good for, though, is improving reaction time (Benoit et al, 2017). The literature is strong here; playing these so-called “violent video games” such as Call of Duty improved children’s reaction time, so wouldn’t you say that these ‘violent video games’ have some utility?)

Lead, Race, and Crime

Lead has many known effects on the brain (on the development of the brain and nervous system) that lead to deleterious health outcomes and negative outcomes in general. These include (but are not limited to) lower IQ, higher rates of crime, higher blood pressure and higher rates of kidney damage, and they are permanent and persistent (Stewart et al, 2007). Chronic lead exposure, too, can “also lead to decreased fertility, cataracts, nerve disorders, muscle and joint pain, and memory or concentration problems” (Sanders et al, 2009). Lead exposure in utero, in infancy, and in childhood can also lead to “neuronal death” (Lidsky and Schneider, 2003), and epigenetic inheritance also plays a part (Sen et al, 2015). How do blacks and whites differ in exposure to lead? How large is the difference between the two races in America, and how much would it contribute to crime? On the other hand, China has high rates of lead exposure but lower rates of crime, so how does this fit with the lead-crime relationship overall? Are the Chinese an outlier or is there something else going on?

The effects of lead on the brain are well known, and a great deal of effort has been put into lowering levels of lead in America (Gould, 2009). Higher exposure to lead is also found in poorer, lower-class communities (Hood, 2005). Since higher levels of lead exposure are found more often in lower-class communities, and blacks are overrepresented in those communities, blacks should have higher blood-lead levels than whites. This is what we find.

Blacks had a 27 percent higher concentration of lead in their tibia, while having significantly higher levels of blood lead, “likely because of sustained higher ongoing lead exposure over the decades” (Theppeang et al, 2008). Other data, coming out of Detroit, show the same relationships (Haar et al, 1979; Talbot, Murphy, and Kuller, 1982; “Lead poisoning in children under 6 jumped 28% in Detroit in 2016”; also see Maqsood, Stanbury, and Miller, 2017), while lead levels in the water contribute to high blood-lead levels in Flint, Michigan (Hanna-Attisha et al, 2016; Laidlaw et al, 2016). Cassidy-Bushrow et al (2017) also show that “The disproportionate burden of lead exposure is vertically transmitted (i.e., mother-to-child) to African-American children before they are born and persists into early childhood.”

Children exposed to lead have lower brain volumes as adults, specifically in the ventrolateral prefrontal cortex, which is the same region of the brain that is impaired in antisocial and psychotic persons (Cecil et al, 2008). The lead levels in the community that was tested were well within the ‘safe’ range set by the CDC (Raine, 2014: 224), though the CDC says that there is no safe level of lead exposure. There is a large body of studies which show that there is no safe level of lead exposure (Needleman and Landrigan, 2004; Canfield, Jusko, and Kordas, 2005; Barret, 2008; Rossi, 2008; Abelsohn and Sanborn, 2010; Betts, 2012; Flora, Gupta, and Tiwari, 2012; Gidlow, 2015; Lanphear, 2015; Wani, Ara, and Usmani, 2015; Council on Environmental Health, 2016; Hanna-Attisha et al, 2016; Vorvolakos, Aresniou, and Samakouri, 2016; Lanphear, 2017). So the data is clear that there is absolutely no safe level of lead exposure, and even small exposures can lead to deleterious outcomes.

Further, one brain study of 532 men who worked in a lead plant showed that those who had higher levels of lead in their bones had smaller brains, even after controlling for confounds like age and education (Stewart et al, 2008). Raine (2014: 224) writes:

The fact that the frontal cortex was particularly reduced is very interesting, given that this brain region is involved in violence. This lead effect was equivalent to five years of premature aging of the brain.

So we have good data that the parts of the brain that relate to violent tendencies are smaller in people exposed to more lead, indicating a relationship. But what about antisocial disorders? Are people with higher levels of lead in their blood more likely to be antisocial?

Needleman et al (1996) show that boys who had higher levels of lead in their blood had higher teacher ratings of aggressive and delinquent behavior, along with higher self-reported ratings of aggressive behavior. Even high blood-lead levels later in life are related to crime. One study in Yugoslavia showed that blood-lead levels at age three had a stronger relationship with destructive behavior than did prenatal blood-lead levels (Wasserman et al, 2008), with the same relationship being seen in America, where high blood-lead levels correlated with antisocial and aggressive behavior at age 7 and not age 2 (Chen et al, 2007).

Nevin (2007) showed a strong relationship between preschool lead exposure and subsequent increases in criminal cases in America, Canada, Britain, France, Australia, Finland, West Germany, and New Zealand. Reyes (2007) also shows that crime fell more quickly in states that had earlier seen larger decreases in lead levels, while variations in lead levels within cities correlate with variations in crime rates (Mielke and Zahran, 2012). Nevin (2000) showed a strong relationship between environmental lead levels from 1941 to 1986 and corresponding changes to violent crime twenty-three years later in the United States. Raine (2014: 226) writes (emphasis mine):

So, young children who are most vulnerable to lead absorption go on twenty-three years later to perpetrate adult violence. As lead levels rose throughout the 1950s, 1960s, and 1970s, so too did violence correspondingly rise in the 1970s, 1980s and 1990s. When lead levels fell in the late 1970s and early 1980s, so too did violence fall in the 1990s and the first decade of the twenty-first century. Changes in lead levels explained a full 91 percent of the variance in violent offending—an extremely strong relationship.

[…]

From international to national to state to city levels, the lead levels and violence curves match up almost exactly.

But does lead have a causal effect on crime? Due to the deleterious effects it has on the developing brain and nervous system, we should expect to find a relationship, and this relationship should become stronger with higher doses of lead. Fortunately, I am aware of one analysis, of a sample that’s 90 percent black, which shows that with every 5 microgram per deciliter increase in prenatal blood-lead levels, there was a 40 percent higher risk of arrest (Wright et al, 2008). This fits with the deleterious developmental effects of lead; we are aware of how and why people with high levels of lead in their blood show similar brain scans/brain volume in certain parts of the brain in comparison to antisocial/violent people. So this is yet more suggestive evidence for a causal relationship.

Jennifer Doleac discusses three studies that show that blood-lead levels in America need to be addressed, since they are related strongly to negative health outcomes. Aizer and Currie (2017) show that “A one-unit increase in lead increased the probability of suspension from school by 6.4-9.3 percent and the probability of detention by 27-74 percent, though the latter applies only to boys.” They also show that children who live nearer to roads have higher blood-lead levels, since the soil near highways was contaminated decades ago with leaded gasoline. Feigenbaum and Muller (2016) show that cities’ use of lead pipes increased murder rates between the years 1921 and 1936. Finally, Billings and Schnepel (2017: 4) show that their “results suggest that the effects of high levels of [lead] exposure on antisocial behavior can largely be reversed by intervention—children who test twice over the alert threshold exhibit similar outcomes as children with lower levels of [lead] exposure (BLL<5μg/dL).”

There is also a relationship between lead exposure in utero and arrests in adulthood. The sample was 90 percent black, with numerous controls. They found that prenatal and post-natal blood-lead exposure was associated with higher arrest rates, along with higher arrest rates for violent acts (Wright et al, 2008). To be specific, for every 5 microgram per deciliter increase in prenatal blood-lead levels, there was a 40 percent greater risk for arrest. This is direct causal evidence for the lead-causes-crime hypothesis.

One study showed that in post-Katrina New Orleans, decreasing lead levels in the soil caused a subsequent decrease in blood lead levels in children (Mielke, Gonzales, and Powell, 2017). Sean Last argues that, while lead does contribute to crime, the racial gaps have closed in recent decades and therefore blood-lead levels cannot be a source of some of the variance in crime between blacks and whites. He even cites the CDC ‘lowering its “safe” values’ for lead, even though there is no such thing as a safe level of lead exposure (references cited above). White, Bonilha, and Ellis Jr. (2015) also show that minorities, blacks in particular, have higher levels of lead in their blood. Either way, Last seems to downplay large differences in lead exposure between whites and blacks at young ages, even though that’s when critical development of the mind/brain and other important functioning occurs. There is no safe level of lead exposure, pre- or post-natal, nor are there safe levels at adulthood. Even a small difference in blood lead levels would have some pretty large effects on criminal behavior.

Sean Last also writes that “Black children had a mean BLL which was 1 ug/dl higher than White children and that this BLL gap shrank to 0.9 ug/dl in samples taken between 2003 and 2006, and to 0.5 ug/dl in samples taken between 2007 and 2010.” Though, still, there are problems here too: “After adjustment, a 1 microgram per deciliter increase in average childhood blood lead level significantly predicts 0.06 (95% confidence interval [CI] = 0.01, 0.12) and 0.09 (95% CI = 0.03, 0.16) SD increases and a 0.37 (95% CI = 0.11, 0.64) point increase in adolescent impulsivity, anxiety or depression, and body mass index, respectively, following ordinary least squares regression. Results following matching and instrumental variable strategies are very similar” (Winter and Sampson, 2017).
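To put the two quotes side by side, here is a rough sketch in Python that multiplies the per-unit impulsivity estimate quoted from Winter and Sampson (2017) by the black-white gaps quoted from Sean Last. The linear scaling is my own simplifying assumption, as are the names `gaps_ug_dl` and `implied_sd_difference`; neither source performs this calculation, and a difference in group means need not translate this cleanly.

```python
# Per-unit estimate quoted from Winter and Sampson (2017): SD change in
# adolescent impulsivity per 1 ug/dl increase in average childhood blood lead.
effect_per_ug_dl = 0.06
ci_low, ci_high = 0.01, 0.12  # 95% confidence interval from the same quote

# Black-white gaps in mean blood lead level (ug/dl), as quoted from Sean Last.
# The first figure is given without a sampling period in the quote.
gaps_ug_dl = {
    "earlier samples (period not stated)": 1.0,
    "2003-2006": 0.9,
    "2007-2010": 0.5,
}


def implied_sd_difference(gap_ug_dl, effect):
    """Assumed linear scaling: gap in ug/dl times the per-unit effect in SD."""
    return gap_ug_dl * effect


implied = {
    label: implied_sd_difference(gap, effect_per_ug_dl)
    for label, gap in gaps_ug_dl.items()
}

for label, gap in gaps_ug_dl.items():
    low = implied_sd_difference(gap, ci_low)
    high = implied_sd_difference(gap, ci_high)
    print(f"{label}: {implied[label]:.3f} SD (CI-scaled range {low:.3f} to {high:.3f})")
```

The same function can be reused with the anxiety/depression estimate (0.09 SD) from the quote by passing that value as `effect`.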

Naysayers may point to China and how it has higher blood-lead levels than America (two times higher) but lower rates of crime, some of the lowest in the world. The Hunan province in China has considerably lowered blood-lead levels in recent years, but they are still higher than in developed countries (Qiu et al, 2015). One study even shows ridiculously high levels of lead in Chinese children: “Results showed that mean blood lead level was 88.3 micro g/L for 3 – 5 year old children living in the cities in China and mean blood lead level of boys (91.1 micro g/L) was higher than that of girls (87.3 micro g/L). Twenty-nine point nine one per cent of the children’s blood lead level exceeded 100 micro g/L” (Qi et al, 2002), while Li et al (2014) found similar levels. Shanghai also has higher levels of blood lead than the rest of the developed world (Cao et al, 2014). Blood lead levels are also higher in Taizhou, China compared to other parts of the country, and the world (Gao et al, 2017). Blood lead levels are decreasing with time, but are still higher than in other developed countries (He, Wang, and Zhang, 2009).

Furthermore, Chinese women, compared to American women, had two times higher BLL (Wang et al, 2015). With transgenerational epigenetic inheritance playing a part in the DNA methylation patterns passed from mother to daughter and then to grandchildren (Sen et al, 2015), this is a public health threat to Chinese women and their children. So just by going off of this data, the claim that China is a safe country should be called into question.

Reality seems to tell a different story. It seems that the true crime rate in China is covered up, especially the murder rate:

In Guangzhou, Dr Bakken’s research team found that 97.5 per cent of crime was not reported in the official statistics.

Of 2.5 million cases of crime, in 2015 the police commissioner reported 59,985 — exactly 15 less than his ‘target’ of 60,000, down from 90,000 at the start of his tenure in 2012.

The murder rate in China is around 10,000 per year according to official statistics, 25 per cent less than the rate in Australia per capita.

“I have the internal numbers from the beginning of the millennium, and in 2002 there were 52,500 murders in China,” he said. Instead of 25 per cent less murder than Australia, Dr Bakken said the real figure was closer to 400 per cent more.

Guangzhou, for instance, doesn’t keep data for crime committed by migrants, who commit 80 percent of the crime in this city. Out of 2.5 million crimes committed in Guangzhou, only 59,985 crimes were reported in their official statistics, which was 15 crimes away from their target of 60,000. Weird… Either way, China doesn’t have a similar murder rate to Switzerland:
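As a quick sanity check on the figures in the quoted passage (2.5 million cases, 59,985 reported, a target of 60,000), the arithmetic can be sketched in a few lines of Python. The variable names are mine; the numbers are taken as quoted, and I have not verified them independently.

```python
# Figures as quoted from the report on Dr Bakken's research in Guangzhou.
total_crimes = 2_500_000   # estimated number of crime cases
reported_2015 = 59_985     # cases the police commissioner reported in 2015
target = 60_000            # the commissioner's stated target

reported_share = reported_2015 / total_crimes
unreported_share = 1 - reported_share
shortfall = target - reported_2015

print(f"share reported:   {reported_share:.1%}")
print(f"share unreported: {unreported_share:.1%}")
print(f"cases below target: {shortfall}")
```

The reported share works out to about 2.4 percent, so roughly 97.6 percent went unreported, in line with the 97.5 percent figure quoted above, and the reported count sits exactly 15 below the target.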

The murder rate in China does not equal that of Switzerland, as the Global Times claimed in 2015. It’s higher than anywhere in Europe and similar to that of the US.

China also ranks highly on the corruption index, higher than the US, which is more evidence indicative of a covered up crime rate. So this is good evidence that, contrary to the claims of people who would attempt to downplay the lead-crime relationship, these effects are real and they do matter in regard to crime and murder.

So it’s clear that we can’t trust the official Chinese crime stats, since much of their crime is not reported. Why should we trust crime stats from a corrupt government? The evidence is clear that China has a higher crime rate, and murder rate, than is seen on the Chinese books.

Lastly, epigenetic effects can and do have a lasting effect on even the grandchildren of mothers exposed to lead while pregnant (Senut et al, 2012; Sen et al, 2015). Sen et al (2015) showed that lead exposure during pregnancy affected the DNA methylation status of the fetal germ cells, which then led to altered DNA methylation in dried blood spots from the grandchildren of the mother exposed to lead while pregnant, though it’s indirect evidence. If this is true and holds in larger samples, then this could be big for criminological theory and could be a cause for higher rates of black crime (note: I am not claiming that lead exposure could account for all, or even most of the racial crime disparity. It does account for some, as can be seen by the data compiled here).

In conclusion, the relationship between lead exposure and crime is robust and replicated across many countries and cultures. No safe level of blood lead exists; even so-called trace amounts can have horrible developmental and life outcomes, which include higher rates of criminal activity. There is a clear relationship between lead increases/decreases in populations, even within cities, that then predict crime rates. Some may point to the Chinese as evidence against a strong relationship, though there is strong evidence that the Chinese do not report anywhere near all of their crime data. Epigenetic inheritance can play a role here too, mostly regarding blacks, since they’re more likely to be exposed to high levels of lead in the womb, in infancy, and in childhood. This could also exacerbate crime rates. The evidence is clear that lead exposure leads to increased criminal activity, and that there is a strong relationship between blood lead levels and crime.

Minimalist Races Exist and are Biologically Real

3050 words

People look different depending on where their ancestors came from; this is not a controversial statement, and any reasonable person would agree with that assertion. What most don’t realize, though, is that even if you assert that biological races do not exist, but allow for patterns of distinct visible physical features between human populations that correspond with geographic ancestry, then race, as a biological reality, exists, because what denotes the physical characters is biological in nature, and the geographic ancestry corresponds to physical differences between continental groups. These populations, then, can be shown to be real in genetic analyses, and they correspond to traditional racial groups. So we can then say that Eurasians, East Asians, Oceanians, black Africans, and Native Americans are continental-level minimalist races since they meet all of the criteria needed to be called minimalist races: (1) distinct facial characters; (2) distinct morphologic differences; and (3) they come from a unique geographic location. Therefore minimalist races exist and are a biological reality. (Note: There is more variation within races than between them (Lewontin, 1972; Rosenberg et al, 2002; Witherspoon et al, 2007; Hunley, Cabana, and Long, 2016), but this does not mean that the minimalist biological concept of race has no grounding in biology.)

Minimalist race exists

The concept of minimalist race is simple: people share a peculiar geographic ancestry unique to them, they have peculiar physiognomy (facial features like lips, facial structure, eyes, nose etc), other physical traits (hair/hair color), and a peculiar morphology. Minimalist races exist, and are biologically real since minimalist races can survive findings from population genetics. Hardimon (2017) asks, “Is the minimalist concept of race a social concept?” on page 62. He writes that a concept is socially constructed in a pernicious sense if and only if it “(i) fails to represent any fact of the matter and (ii) supports and legitimizes domination.” Of course, populations who derive from Africa, Europe, and East Asia have peculiar facial morphology/morphology unique to that isolated population. Therefore we can say that minimalist race does not satisfy criterion (i). Hardimon (2017: 63) then writes:

Because it lacks the nasty features that make the racialist concept of race well suited to support and legalize domination, the minimalist race concept fails to satisfy condition (ii). The racialist concept, on the other hand, is socially constructed in the pernicious sense. Since there are no racialist races, there are no facts of the matter it represents. So it satisfies (i). To elaborate, the racialist race concept legitimizes racial domination by representing the social hierarchy of race as “natural” (in a value-conferring sense): as the “natural” (socially unmediated and inevitable) expression of the talent and efforts of the individuals who stand on its rungs. It supports racial domination by conveying the idea that no alternative arrangement of social institutions could possibly result in racial equality and hence that attempts to engage in collective action in the hopes of ending the social hierarchy of race are futile. For these reasons the racialist race concept is also ideological in the pejorative sense.

Knowing what we know about minimalist races (they have distinct physiognomy, distinct morphology and geographic ancestry unique to that population), we can say that this is a biological phenomenon, since what makes minimalist races distinct from one another (skin color, hair color etc) are based on biological factors. We can say that brown skin, kinky hair and full lips, with sub-Saharan African ancestry, is African, while pale/light skin, straight/wavy/curly hair with thin lips, a narrow nose, and European ancestry makes the individual European.

These physical features between the races correspond to differences in geographic ancestry, and since they differ between the races on average, they are biological in nature and therefore it can be said that race is a biological phenomenon. Skin color, nose shape, hair type, morphology etc are all biological. So knowing that there is a biological basis to these physical differences between populations, we can say that minimalist races are biological, therefore we can use the term minimalist biological phenomenon of race, and it exists because there are differences in the patterns of visible physical features between human populations that correspond to geographic ancestry.

Hardimon then notes that eliminativist philosophers and others don’t deny the above premises about the minimalist biological phenomenon of race; they allow that these differences exist. Hardimon (2017: 68-69) then quotes a few prominent people who profess that there are, of course, differences in physical features between human populations:

… Lewontin … who denies that biological races exist, freely grants that “peoples who have occupied major geographic areas for much of the recent past look different from one another. Sub-Saharan Africans have dark skin and people who have lived in East Asia tend to have a light tan skin and an eye color and eye shape that is different from Europeans.” Similarly, population geneticist Marcus W. Feldman (final author of Rosenberg et al., “Genetic Structure of Human Populations” [2002]), who also denies the existence of biological races, acknowledges that “it has been known for centuries that certain physical features of humans are concentrated within families: hair, eye, and skin color, height, inability to digest milk, curliness of hair, and so on. These phenotypes also show obvious variation among people from different continents. Indeed, skin color, facial shape, and hair are examples of phenotypes whose variation among populations from different regions is noticeable.” In the same vein, eliminative anthropologist C. Loring Brace concedes, “It is perfectly true that long term residents of various parts of the world have patterns of features that we can identify as characteristic of the area from which they come.”

So even these people who claim not to believe in “biological races” do indeed believe in biological races, because what they are describing is biological in nature. They do not deny that people look different and that their ancestors came from different places; therefore they believe in biological races. We can then use the minimalist biological phenomenon of race to get to the existence of minimalist races.

Hardimon (2017: 69) writes:

Step 1. Recognize that there are differences in patterns of visible physical features of human beings that correspond to their differences in geographic ancestry.

Step 2. Observe that these patterns are exhibited by groups (that is, real existing groups).

Step 3. Note that the groups that exhibit these patterns of visible physical features corresponding to differences in geographical ancestry satisfy the conditions of the minimalist concept of race.

Step 4. Infer that minimalist race exists.

Those individuals mentioned previously who deny biological races but allow that people with ancestors from differing geographic locales look different do not disagree with step 1, nor does anyone really disagree with step 2. Step 4’s inference immediately flows from the premise in step 3. “Groups that exhibit patterns of visible physical features that correspond to differences in geographical ancestry satisfy the conditions of the minimalist concept of race. Call (1)-(4) the argument from the minimalist biological phenomenon of race” (Hardimon, 2017: 70). Of course, the argument does not identify which populations may be called races (see further below); it just shows that race is a biological reality. If minimalist races exist, then races exist, because minimalist races are races. Minimalist races exist, therefore biological races exist. Of course, no one doubts that people come from Europe, sub-Saharan Africa, East Asia, the Americas, and the Pacific Islands, even though the boundaries between them are ‘blurry’. They exhibit patterns of visible physical characters that correspond to their differing geographic ancestry, so they are minimalist races; therefore minimalist races exist.

Pretty much, the minimalist concept of race is just laying out what everyone knows and arguing for its existence. Minimalist races exist, but are they biologically real?

Minimalist races are biologically real

Of course, some who would assert that minimalist races do not exist would say that there are no ‘genes’ that are exclusive to one certain population; call them ‘race genes’. Of course, these types of genes do not exist. Whether or not an individual is a part of a race does not rest on his physical characters alone, but is determined by who his parents are, because one of the three premises for the minimalist race argument is ‘must have a peculiar geographic ancestry’. So it’s not that members of races share sets of genes that other races do not; it’s that they share a distinctive set of visible physical features that correspond with geographic ancestry. So of course if the minimalist concept of race is a biological concept then it entails more than ‘genes for’ races.

Of course, there is a biological significance to the existence of minimalist biological races. Consider that one of the physical characters that differ between populations is skin color. Skin color is controlled by genes (about half a dozen within and a dozen between populations). A lack of UV exposure in individuals with dark skin can contribute to diseases like prostate cancer, while darker skin is a protectant against UV damage to human skin (Brenner and Hearing, 2008; Jablonski and Chaplin, 2010). Since minimalist races are partly defined by differences in skin color between populations, and skin color has both medical and ecological significance, minimalist race is biologically significant.

(1) Consider light skin. People with light skin are more susceptible to skin cancer since they evolved in locations with low UVR (D’Orazio et al, 2013). The body needs vitamin D to absorb and use calcium for maintaining proper cell functioning. People who evolved near the equator don’t have to worry about this because the doses of UVB they absorb are sufficient for the production of enough previtamin D. East Asians and Europeans, on the other hand, became adapted to low-sunlight locations and therefore evolved lighter skin over time. This loss of pigmentation allowed for better UVB absorption in these new environments. (Also read my article on the evolution of human skin variation and also how skin color is not a ‘tell’ of aggression in humans.)

(2) Darker-skinned people, meanwhile, have a lower rate of skin cancer, “primarily a result of photo-protection provided by increased epidermal melanin, which filters twice as much ultraviolet (UV) radiation as does that in the epidermis of Caucasians” (Bradford, 2009). Dark skin is thought to have evolved to protect against skin cancer (Greaves, 2014a) but this has been contested (Jablonski and Chaplin, 2014) and defended (Greaves, 2014b). So, using (1) and (2), skin color has evolutionary significance.

So as humans became physically adapted to the new niches they found themselves in, they developed new features, distinct from those suited to the locations they previously came from, to better cope with the lifestyles their new environments demanded. For instance “Northern Europeans tend to have light skin because they belong to a morphologically marked ancestral group—a minimalist race—that was subject to one set of environmental conditions (low UVR) in Europe” (Hardimon, 2017: 81). Of course explaining how human beings survived in new locations falls into the realm of biology, while minimalist races can explain why this happened.

Minimalist races clearly exist since minimalist races constitute complex biological patterns between populations. Hardimon (2017: 83) writes:

It [minimalist race] also enjoys intrinsic scientific interest because it represents distinctive salient systematic dimension of human biological diversity. To clarify: Minimalist race counts as (i) salient because human differences of color and shape are striking. Racial differences in color and shape are (ii) systematic in that they correspond to differences in geographic ancestry. They are not random. Racial differences are (iii) distinctive in that they are different from the sort of biological differences associated with the other two salient systematic dimensions of human diversity: sex and age.

[…]

An additional consideration: Like sex and age, minimalist race constitutes one member of what might be called “the triumvirate of human biodiversity.” An account of human biodiversity that failed to include any one of these three elements would be obviously incomplete. Minimalist race’s claim to be biologically real is as good as the claim of the other members of the triumvirate. Sex is biologically real. Age is biologically real. Minimalist race is biologically real.

Real does not mean deep. Compared to the biological differences associated with sex (sex as contrasted with gender), the biological differences associated with minimalist race are superficial.

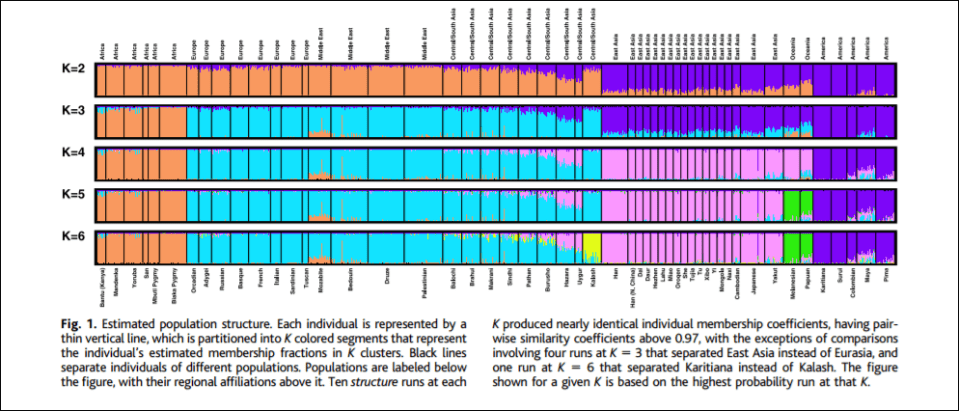

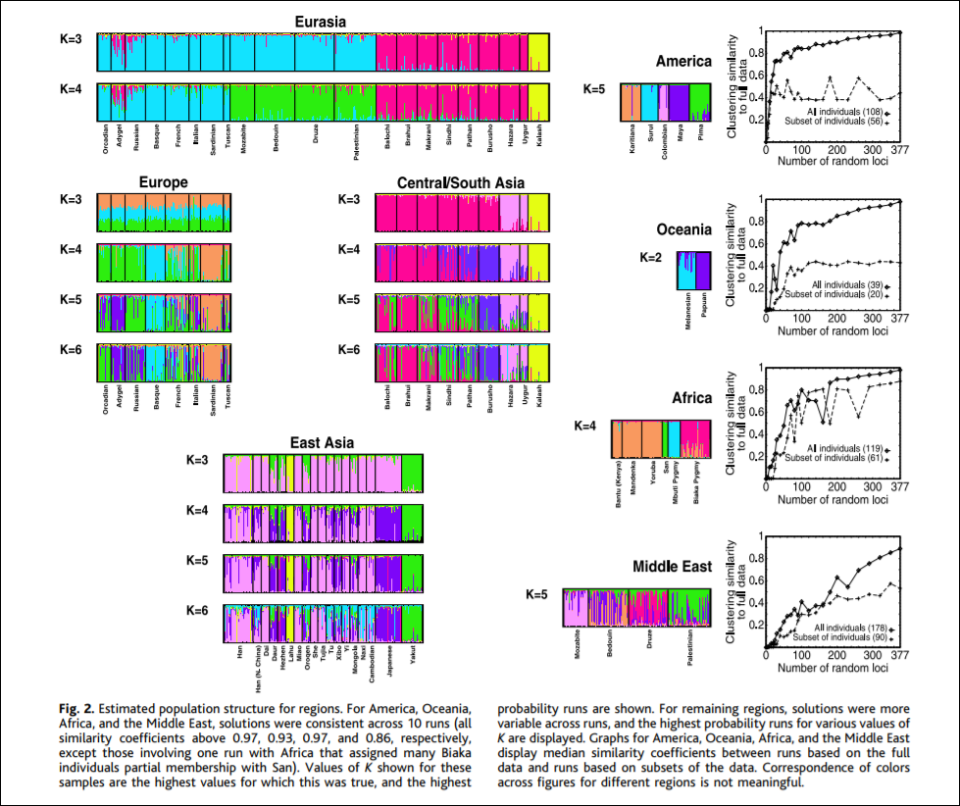

Of course, the five ‘clusters’ or ‘populations’ identified in Rosenberg et al’s (2002) K=5 graph, for which structure was told to produce 5 genetic clusters, correspond to Eurasia, Africa, East Asia, Oceania, and the Americas. They are great candidates for minimalist biological races since they correspond to geographic locations, and they even corroborate what Johann Friedrich Blumenbach said about human races back in the 18th century. Hardimon further writes (pg 85-86):

If the five populations corresponding to the major areas are continental-level minimalist races, the clusters represent continental-level minimalist races: The cluster in the mostly orange segment represents the sub-Saharan African continental-level minimalist race. The cluster in the mostly blue segment represents the Eurasian continental-level minimal race. The cluster in the mostly pink segment represents the East Asian continental-level minimalist race. The cluster in the mostly green segment represents the Pacific Islander continental-level minimalist race. And the cluster in the mostly purple segment represents the American continental-level minimalist race.

[…]

The assumption that the five populations are continental-level minimalist races entitles us to interpret structure as having the capacity to assign individuals to continental-level minimalist races on the basis of markers that track ancestry. In constructing clusters corresponding to the five continental-level minimalist races on the basis of objective, race-neutral genetic markers, structure essentially “reconstructs” those races on the basis of a race-blind procedure. Modulo our assumption, the article shows that it is possible to assign individuals to continental-level races without knowing anything about the race or ancestry of the individuals from whose genotypes the microsatellites are drawn. The populations studied were “defined by geography, language, and culture,” not skin color or “race.”

Of course, as critics note, the researchers predetermine how many populations structure demarcates; for instance, K=5 indicates that the researchers told the program to delineate 5 clusters. These objections do not matter, though, for the 5 populations that come out in K=5 “are genetically structured … which is to say, meaningfully demarcated solely on the basis of genetic markers” (Hardimon, 2017: 88). K=6 brings one more population, the Kalash, a group from northern Pakistan who speak an Indo-European language. Though “The fact that structure represents a population as genetically distinct does not entail that the population is a race. Nor is the idea that populations corresponding to the five major geographic areas are minimalist races undercut by the fact that structure picks out the Kalash as a genetically distinct group. Like the K=5 graph, the K=6 graph shows that modulo our assumption, continental-level races are genetically structured” (Hardimon, 2017: 88).

Though of course there are naysayers. Svante Pääbo and David Serre, Hardimon writes, state that when individuals are sampled homogeneously from around the world, allele frequencies form gradients that extend across the whole world rather than clustering discretely. Rosenberg et al responded by verifying that the clusters they found are not artifacts of sampling, as Pääbo and Serre imply, but reflect features of underlying human variation. Rosenberg et al do agree with Pääbo and Serre, though, that human genetic diversity consists of clines in variation in allele frequencies (Hardimon, 2017: 89). Other naysayers also state that all Rosenberg et al show is what we can “see with our eyes”. But a computer does not partition individuals into different populations based on something that can be done with eyes; it partitions them with an algorithm.

Hardimon also accepts that black Africans, Caucasians, East Asians, American Indians and Oceanians can be said to be races in the basic sense because “they constitute a partition of the human species”, and that they are distinguishable “at the level of the gene” (Hardimon, 2017: 93). And of course, K=5 shows that the 5 races are genetically distinguishable.

Hardimon finally discusses some medical significance for minimalist races. He states that if you are Caucasian, it is more likely that you have a polymorphism that protects against HIV compared to a member of another race. Meanwhile, East Asians are more likely to carry alleles that make them more susceptible to Stevens-Johnson syndrome or a related syndrome in which the skin peels off. Though instances where this would matter in a biomedical context are rare, they should still be at the back of everyone’s mind (as I have argued), since there are times when one’s race can be medically significant.

Hardimon finally states that this type of “metaphysics of biological race” can be called “deflationary realism.” Deflationary because it “consists in the repudiation of the ideas that racialist races exist and that race enjoys the kind of biological reality that racialist race was supposed to have” and realism which “consists in its acknowledgement of the existence of minimalist races and the genetically grounded, relatively superficial, but still significant biological reality of minimalist race” (Hardimon, 2017: 95-96).

Conclusion

Minimalist races exist. Minimalist races are a biological reality because distinct visible patterns show differences between geographically isolated populations. This is enough for the classification of the five classic races we know of to be called race, be biologically real, and have a medical significance—however small—because certain biological/physical traits are tied to different geographic populations—minimalist races.

Hardimon (2017: 97) shows an alternative to racialism:

Deflationary realism provides a worked-out alternative to racialism—it is a theory that represents race as a genetically grounded, relatively superficial biological reality that is not normatively important in itself. Deflationary realism makes it possible to rethink race. It offers the promise of freeing ourselves, if only imperfectly, from the racialist background conception of race.

It is clear that minimalist races exist and are biologically real. You do not need to speak about supposed mental traits between these minimalist races; they are irrelevant to the existence of these minimalist biological races. As Hardimon (2017: 67) writes: “No reference is made to normatively important features such as intelligence, sexuality, or morality. No reference is made to essences. The idea of sharp boundaries between patterns of visible physical features or corresponding geographical regions is not invoked. Nor again is reference made to the idea of significant genetic differences. No reference is made to groups that exhibit patterns of visible physical features that correspond to geographic ancestry.”

The minimalist biological concept of race stands up to numerous lines of argumentation, therefore we can say without a shadow of a doubt that minimalist biological race exists and is real.

Do pigmentation and the melanocortin system modulate aggression and sexuality in humans as they do in other animals? A Response to Rushton and Templer (2012)

2100 words

Rushton et al have kept me pretty busy over the last year or so. I’ve debunked many of their claims that rest on biology, such as testosterone causing crime and aggression. The last paper that Rushton published before he died in October of 2012 was an article with Donald Templer, another psychologist, titled Do pigmentation and the melanocortin system modulate aggression and sexuality in humans as they do in other animals? (Rushton and Templer, 2012), and they make a surfeit of bold claims that do not follow. They review animal studies on skin and fur pigmentation and show that the darker an animal’s skin or fur, the more likely they are to be aggressive and violent. They then conclude, of course (it wouldn’t be a Rushton article without it), that the long-debunked r/K ‘continuum’ explains the co-variation between human populations in birth rate, longevity, violent crime, infant mortality and rate of acquisition of AIDS/HIV.

In one of the very first articles I wrote on this site, I cited Rushton and Templer (2012) favorably (back when I had way less knowledge of biology and hormones). I was caught by biases and not knowing anything about what was discussed. After I learned more about biology and hormones over the years, I came to find out that the claims in the paper are wrong and that they make huge, sweeping conclusions based on a few correlations. Either way, I have seen the error of my ways and the biases that led me to the beliefs I held, and when I learned more about hormones and biology I saw how ridiculous some of the papers I have cited in the past truly were.

Rushton and Templer (2012) start off the paper by discussing Ducrest et al (2008), who state that within each species studied, darker-pigmented individuals exhibited higher rates of aggression, sexuality and social dominance (which is caused by testosterone) than lighter-pigmented individuals of that same species. They state that this is due to pleiotropy, which is when a single gene has two or more phenotypic effects. They then refer to Rushton and Jensen (2005) to support the claim that low IQ is correlated with skin color (skin color doesn’t cause IQ, obviously).

They then state that, within each of 40 vertebrate species, the darker-pigmented members had higher levels of aggression and sexual activity, along with a larger body size and better stress resistance, and were more physically active while grooming (Ducrest, Keller, and Roulin, 2008). Rushton and Templer (2012) then state that this relationship was ‘robust’ across numerous species, specifically 36 species of birds, 4 species of fish, 3 species of mammals, and 4 species of reptiles.

Rushton and Templer (2012) then discuss the “Validation of the pigmentation system as causal to the naturalistic observations was demonstrated by experimentally manipulating pharmacological dosages and by studies of cross-fostering”, citing Ducrest, Keller, and Roulin (2008). They even state that “Placing darker versus lighter pigmented individuals with adoptive parents of the opposite pigmentation did not modify offspring behavior.” Seems legit. Must mean that their pigmentation caused these differences. They then state something patently ridiculous: “The genes that control that balance occupy a high level in the hierarchical system of the genome.” Though, unfortunately for their hypothesis, there is no privileged level of causation (Noble, 2016; also see Noble, 2008), so this is a nonsense claim. Genes are not ‘blueprints’ or ‘recipes’ (Oyama, 1985; Schneider, 2007).

They then refer to Ducrest, Keller and Roulin (2008: 507) who write:

In this respect, it is important to note that variation in melanin-based coloration between human populations is primarily due to mutations at, for example, MC1R, TYR, MATP and SLC24A5 [29,30] and that human populations are therefore not expected to consistently exhibit the associations between melanin-based coloration and the physiological and behavioural traits reported in our study.

This quote, however, seems to be ignored by Rushton and Templer (2012) throughout the rest of their article. Even though they briefly mention the paper and note that one should be ‘cautious’ in interpreting the data in their study, they seem to just brush the warning under the rug so as not to have to contend with it. Rushton and Templer (2012) then cite the famous silver fox study, in which foxes were bred for tameness. The foxes lost their dark fur, became lighter and, apparently, were less aggressive than their darker-pigmented kin. These animal studies are, in my opinion, useless when attempting to link skin color and the melanocortin system to the modulation of aggressive behavior in humans, so let’s see what they write about human studies.

It’s funny, because Rushton and Templer (2012) cite Ducrest, Keller, and Roulin (2008: 507) to show that caution should be exercised when assessing any so-called differences in the melanocortin system between human races. They then disregard that caution by writing “A first examination of whether melanin based pigmentation plays a role in human aggression and sexuality (as seen in non-human animals), is to compare people of African descent with those of European descent and observe whether darker skinned individuals average higher levels of aggression and sexuality (with violent crime the main indicator of aggression).” This is a dumb comparison. Yes, African nations have higher crime rates than European nations, but does this mean that skin color (or whatever modulates skin color/the melanocortin system) is the cause? No. Not at all.

There really isn’t anything to discuss here, though, because they just run through how different African nations have higher levels of crime than European and East Asian nations, and how blacks report having more sex and feeling less guilty about it. Rushton and Templer (2012) then state that one study “asked married couples how often they had sex each week. Pacific Islanders and Native Americans said from 1 to 4 times, US Whites answered 2–4 times, while Africans said 3 to over 10 times.” They then switch over to their ‘replication’ of this finding, using the data from Alfred Kinsey (Rushton and Bogaert, 1988). Unfortunately for Rushton and Bogaert, there are massive problems with this data.

The Kinsey data can hardly be seen as representative (Zuckerman and Brody, 1988), and it is based on outdated, non-representative, non-random samples (Lynn, 1989). Rushton and Templer (2012) also discuss so-called differences in penis size between races. But I have written two response articles on the matter and shown that Rushton used shoddy sources like the ‘French Army Surgeon’, who contradicts himself: “Similarly, while the French Army surgeon announces on p. 56 that he once discovered a 12-inch penis, an organ of that size becomes “far from rare” on p. 243. As one might presume from such a work, there is no indication of the statistical procedures used to compute averages, what terms such as “often” mean, how subjects were selected, how measurements were made, what the sample sizes were, etc” (Weizmann et al, 1990: 8).

Rushton and Templer (2012) invoke, of course, Rushton’s (1985; 1995) r/K selection theory as applied to human races. I have written numerous articles on r/K selection and attempts at reviving it, but it is long dead, especially as a way to describe human populations (Anderson, 1991; Graves, 2002). The theory was refuted in the late 70s (Graves, 2002), and replaced with age-specific mortality (Reznick et al, 2002). Some of his larger claims I will cover in the future (like how r/K relates to criminal activity), but he just goes through the same old motions he’s been going through for years, bringing nothing new to the table. Testosterone is one of the pillars of Rushton’s r/K selection theory (e.g., Lynn, 1990; Rushton, 1997; Rushton, 1999; Hart, 2007; Ellis, 2017; extensive arguments against Ellis, 2017 can be found here). If testosterone doesn’t do what he believes it does, and the differences in testosterone levels between the races are smaller than believed or non-existent (Gapstur et al, 2002; read my discussion of Gapstur et al 2002; Rohrmann et al, 2007; Richard et al, 2014. Though see Mazur, 2016 and read my interpretation of the paper), then we can safely disregard their claims.

Rushton and Templer (2012: 6) write:

Another is that Blacks have the most testosterone (Ellis & Nyborg, 1992), which helps to explain their higher levels of athletic ability (Entine, 2000).

As I have said many times in the past, Ellis and Nyborg (1992) found a 3 percent difference in testosterone levels between white and black ex-military men. This is irrelevant. They then cite Jon Entine’s (2000) book Taboo: Why Black Athletes Dominate Sports and Why We’re Afraid to Talk About It, but this doesn’t make sense, because Entine literally cites Rushton, who cites Ellis and Nyborg (1992) and Ross et al (1986) (stating that blacks have 3-19 percent higher levels of testosterone than whites, citing Ross et al’s 1986 uncorrected numbers). I have specifically pointed out numerous flaws in their analysis, so Ross et al (1986) cannot seriously be used as evidence for large testosterone differences between the races. I have also cited Fish (2013), who wrote about Ellis and Nyborg (1992):

“These uncorrected figures are, of course, not consistent with their racial r- and K-continuum.”

Rushton and Templer (2012) then state that testosterone acts like a ‘master switch’ (Rushton, 1999), implicating testosterone as a cause of aggression. I’ve shown that this is not true: aggression causes testosterone production; testosterone doesn’t cause aggression. Testosterone does control muscle mass, of course. But Rushton’s claim that blacks have deeper voices due to higher levels of testosterone does not hold up in newer studies.

Rushton and Templer (2012) then shift gears to discuss Templer and Arikawa’s (2006) study on the correlation between skin color and ‘IQ’. However, there is something important to note here from Razib:

we know the genetic architecture of pigmentation. that is, we know all the genes (~10, usually less than 6 in pairwise between population comparisons). skin color varies via a small number of large effect trait loci. in contrast, I.Q. varies by a huge number of small effect loci. so logically the correlation is obviously just a correlation. to give you an example, SLC45A2 explains 25-40% of the variance between africans and europeans.

long story short: it’s stupid to keep repeating the correlation between skin color and I.Q. as if it’s a novel genetic story. it’s not. i hope don’t have to keep repeating this for too many years.

Rushton and Templer (2012: 7) conclude:

The melanocortin system is a physiological coordinator of pigmentation and life history traits. Skin color provides an important marker placing hormonal mediators such as testosterone in broader perspective.

I don’t have a problem with the claim that the melanocortin system is a physiological coordinator of pigmentation, because it’s true and we have a great understanding of the physiology behind the melanocortin system (see Cone, 2006 for a review). EvolutionistX also has a great article reviewing some studies (mouse studies and some others) showing that increasing melatonin appears to decrease melanin.

Rushton and Templer (2012) make huge assumptions not warranted by any data. For instance, Rushton states in his VDare article on the subject, J. Philippe Rushton Says Color May Be More Than Skin Deep, “But what about humans? Despite all the evidence on color, aggression, and sexuality in animals, there has been little or no discussion of the relationship in people. Ducrest & Co. even warned that genetic mutations may make human populations not exhibit coloration effects as consistently as other species. But they provided no evidence.” All Rushton and Templer (2012) do in their article is restate known relationships between crime and race, and then attempt to implicate the melanocortin system as a factor driving these relationships, literally off of a slew of animal studies. Even then, the claim that Ducrest, Keller, and Roulin (2008: 507) provide no evidence for their warning is incorrect, because just before they stated it, they wrote “In this respect, it is important to note that variation in melanin-based coloration between human populations is primarily due to mutations at, for example, MC1R, TYR, MATP and SLC24A5 [29,30]. . .” Melanin does not cause aggression, and it does not cause crime. Rushton and Templer just assume too many things with no evidence in humans, while their whole hypothesis is structured around a bunch of animal studies.

In conclusion, it seems like Rushton and Templer don’t know anything about the physiology of the melanocortin system if they believe that pigmentation and the melanocortin system modulate aggression and sexual behavior in humans. I know of no evidence for these assertions (actual studies, that is, not Rushton and Templer’s (2012) restated relationships with crime followed by the assertion that, because these relationships are seen in animals, the melanocortin system must modulate them in humans too). That they think restating relationships between crime and race, country of origin and race, and supposed correlations between testosterone and crime, along with blacks supposedly having higher testosterone than whites, among other things, shows that the proposed relationships are caused by the melanocortin system and Life History Theory only shows their ignorance of the human physiological system.

Height, Longevity, and Aging

1700 words

Humans reach their maximum height at around their mid-20s. It is commonly thought that taller people have better life outcomes and are, in general, healthier. This belief, though, stems from misconceptions about the human body. In reality, shorter people live longer than taller people. (Manlets of the world should be rejoicing; in case anyone is wondering I am 5’10”.) This flies in the face of what people think, and may be counter-intuitive to some, but the logic, and the data, is sound. I will touch on mortality differences between tall and short people and, at the end, talk a bit about shrinking with age (studies that show little or no decrease in height rely on self-reports and are therefore flawed).

One reason the misconception that taller people live longer, healthier lives than shorter people persists is the correlation between height and IQ: people assume that the two traits are ‘similar’ in that they become ‘stable’ at adulthood. But one way to explain that relationship is that IQ is correlated with height because higher-SES people can afford better food and are thus better nourished. Either way, it is a myth that taller people have lower rates of all-cause mortality.