Hereditarianism is not a Valid Science

1350 words

For years I have been arguing that hereditarianism just isn’t tenable due to the fact that the mental is irreducible to the physical. Since the mental is irreducible to the physical, then hereditarianism cannot possibly be true. I have given many conceptual arguments (here, here, here and here) which argue for (a form of) dualism, and so if dualism is true, then hereditarianism can’t possibly be true.

Here is another argument against hereditarianism:

P1: If hereditarianism is a valid science, then it must be based on a physicalist and reductionist theory of mind.

P2: The mental is irreducible to the physical.

P3: Hereditarianism is based on a physicalist and reductionist theory of mind.

C: Thus, hereditarianism is not a valid science.

Premise 1: The whole hereditarian programme assumes that psychology reduces to genes, which we can see from GWA studies of “intelligence” and other psychological traits. It’s a programme that attempts to show that differences in genes in populations lead to differences in psychological traits. However, this is merely a conceptual confusion.

Since hereditarianism attempts to reduce psychological traits to genes, it is necessarily a physicalist and reductionist theory of mind. Hereditarianism assumes that actions and behaviors can be reduced to genes, and that we can use the methods they propose to discover these relationships. Hereditarianism, though, is said to be a scientific hypothesis, and so it needs testable and falsifiable theories. But the assumption that psychology reduces to genes is a conceptual one, and so hereditarianism attempts to make the mind-body problem a scientific problem when it is in actuality a conceptual problem, to which empirical evidence is irrelevant.

Hereditarian theorists claim that standardized tests are measurement tools, and so we can then measure and quantify intelligence by administering these tests. However, there is no specified measured object, object of measurement and measurement unit for IQ (Nash, 1990), and for there to be, IQ and whatever other psychological trait the hereditarian claims to be measuring need to have those three things articulated. On another note, hereditarianism would seem to fall prey to a version of what Deacon (1990: 201) calls the numerology fallacy:

Numerology fallacies are apparent correlations that turn out to be artifacts of numerical oversimplification. Numerology fallacies in science, like their mystical counterparts, are likely to be committed when meaning is ascribed to some statistic merely by virtue of its numeric similarity to some other statistic, without supportive evidence from the empirical system that is being described.

Nonetheless, it is clear that when the hereditarian says that the mental can be measured and reduced to genes or brain structure/physiology, they are making a conceptual, not empirical, claim, and so hereditarianism would then fail on conceptual grounds. This is beside the point that (again, conceptually) there is no a priori privileged level of causation, meaning the gene isn’t a privileged cause over and above other developmental variables (Noble, 2012), and the fact that the model of heritability and the gene used in hereditarian heritability studies is conceptually flawed (Burt and Simon, 2015). The fact of the matter is, no empirical data can refute these two arguments; these two powerful arguments then combine to refute hereditarianism, making hereditarianism logically untenable.

Hereditarianism must be a physicalist, reductionist account of the mind, and as I have argued for before, this was inevitable. Hereditarianism seeks to either reduce mind to genes or brain structure/physiology, as evidenced by for example Jung and Haier’s (2007) P-FIT model.

Premise 2: I won’t spend much time on this since I have exhaustively argued this claim. But basically, since hereditarianism relies on a physicalist and reductionist account of the mind, then mind either reduces to genes or brain structure/physiology. However, this claim fails conceptually.

Premise 3: This premise states that hereditarianism is a physicalist and reductionist theory of mind. This is evidenced by the fact that since the 80s hereditarians like Richard Haier were attempting to reduce mind (IQ, thinking) to brain physiology using EEG.

Conclusion: It then follows that hereditarianism is not a valid science. No matter how many experiments are carried out by hereditarians, this won’t prove their ideas. The ultimate claim of hereditarianism—and of mind-brain, psychophysical reduction—is a conceptual, not scientific, one.

There is also the fact that the main evidence marshaled for hereditarianism relies on heritability estimates which derive mostly from twin studies. Here’s the argument:

P1: If hereditarianism is a valid science, then it must be based on reliable and valid evidence.

P2: Hereditarianism relies mainly on heritability estimates.

P3: Heritability estimates cannot account for GxE interactions, assume additivity, and can’t account for the complex interactions between G and E.

C: Therefore, hereditarianism cannot be considered a valid science.

Science is based on observation and empirical evidence. Since the advent of twin studies, hereditarianism has relied on heritability estimates, which are statistical measures of the variance in a trait which can be “explained” by genetic factors. Heritability estimates also assume a heterogeneous environment and that G and E don’t interact. So it then follows that if hereditarianism relies mainly on heritability estimates, then it cannot be a valid science. Heritability doesn’t inform us what the causes of a trait or of differences in it are, nor the relative influence of G and E on a trait (Moore and Shenk, 2016). There is also the fact that hereditarians have moved from these heritability estimates to using and championing GWA studies to find the genes that are causal for differences in IQ scores. However, they would then need to answer the challenge in this article on PGS and I don’t see how anyone can answer it. Nevertheless, “heritability studies attempt the impossible” because “the conceptual biological model on which heritability studies depend—that of identifiably separate effects of genes vs. the environment on phenotype variance—is unsound” (Burt and Simon, 2015).
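The variance bookkeeping behind this criticism can be written out. What follows is only a sketch in standard quantitative-genetics notation; the symbols V_P, V_G, V_E, V_{G×E} and Cov(G, E) are assumptions introduced here for illustration and are not taken from the works cited above.

```latex
% Textbook decomposition of phenotypic variance
V_P = V_G + V_E + V_{G \times E} + 2\,\mathrm{Cov}(G, E)

% Broad-sense heritability as a ratio of variances
H^2 = \frac{V_G}{V_P}

% Reading H^2 as "the genetic share" presupposes the additive special case
V_{G \times E} = 0, \quad \mathrm{Cov}(G, E) = 0
\;\Longrightarrow\; V_P = V_G + V_E
```

If the interaction or covariance terms are nonzero, the ratio V_G / V_P can still be computed, but it no longer splits phenotypic variance into separate genetic and environmental shares, which is the point pressed in the quote from Burt and Simon (2015).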

That hereditarians have shifted to brain imaging and the neurosciences (eg Kirkegaard and Fuerst, 2023) in attempting to validate hereditarianism means I can use the explanatory gap argument to put these newer claims to rest (which is basically the same as the argument I made here against the possibility of science being able to study first-personal subjective states):

P1: Mental states have a first-personal subjective aspect which cannot be captured by third-personal brain sciences.

P2: All physical states can be described in terms of their physical relations and properties.

C: So mental states cannot be reduced to third-personal descriptions of brain activity.

So if minds reduce to genes or brains, then we would be able to explain M in terms of P.

P1: If all mental phenomena can be fully explained in terms of physical phenomena, then there is no need for non-physical mental entities or processes.

P2: There are mental phenomena that cannot be fully explained in terms of physical phenomena.

C: Therefore, there are non-physical mental entities or processes.

If physicalism were true, then we would have no need to posit mental entities. But since there are mental phenomena that cannot be fully explained in terms of physical phenomena, then we should accept the existence of non-physical mental phenomena, which would therefore mean that dualism is true and that merely studying brain physiology and processes doesn’t mean that we are studying the mind.

Conclusion

Hereditarianism is hardly a scientific theory. It’s not a scientific theory since M doesn’t reduce to P. It’s not a scientific theory since science can’t study first-personal subjective states. The hereditarian hypothesis cannot be tested in a meaningful way—so it is therefore ad hoc. Hereditarianism should be laid to rest with other hypotheses like phlogiston. Hereditarianism makes no testable predictions. The hereditarian hypothesis is a scientific theory if and only if mind reduces to brain. But the mind doesn’t reduce to the brain. So, again, the hereditarian hypothesis isn’t a scientific theory and, therefore, the mind cannot be studied by science.

Hereditarianism should take its place in the annals of failed hypotheses. Hereditarians should stop claiming that hereditarianism is a scientific theory/hypothesis because it very clearly is not.

Empirical Evidence is Irrelevant to Conceptual Arguments

2050 words

Introduction

Empirical arguments rely on scientific data—data derived from the five senses. Conceptual arguments don’t rely on empirical evidence—they rely on thinking about concepts. So then we can say that it’s about a priori vs. a posteriori knowledge. A posteriori knowledge is empirical/scientific knowledge while a priori knowledge is conceptual/logical knowledge. An argument about concepts would be analyzing the concepts which make up a proposition while an empirical argument would be an argument derived from our senses and what we observe. In this article, I will articulate the distinction between empirical and conceptual argument and then provide arguments why empirical evidence is irrelevant to conceptual objections. This, then, has implications for things like the reducibility of the mental to the physical.

Empirical argument

An empirical argument is one in which scientific data is paramount, and in which observation through our five senses is key to gaining knowledge. A related idea is what philosopher of mind Markus Gabriel calls rampant empiricism in his book I am not a Brain. Rampant empiricism is the philosophical claim that all knowledge can be derived from our five senses. However, the claim that all knowledge derives from sense experience is a philosophical (conceptual) claim, and can’t be corrected with sense experience. In my article Against Scientism, I articulated an argument against the claim that scientism is true:

Premise 1: Scientism is justified.

Premise 2: If scientism is justified, then science is the only way we can acquire knowledge.

Premise 3: We can acquire knowledge through logic and reasoning, along with science.

Conclusion: Therefore scientism is unjustified since we can acquire knowledge through logic and reasoning.

While empirical evidence and argument do, of course, hold value—as evidenced with scientific inquiry—it is quite clear that we can nevertheless gain knowledge through thinking about concepts, through a priori reasoning. That is, we can acquire knowledge through logic and reasoning, so there is more than one way to gain knowledge. So evidence is empirical if it is derived through one of the five senses, that is if it is accessible to sense experience.

Empirical evidence is concerned with the physical world. That is, what we can see and measure. It is based on observation, experience, and measurement of physical quantities. So, for example, if X isn’t quantifiable, then X can’t be measured, therefore X wouldn’t be subject to empirical verification so it would be subject to conceptual argumentation.

Conceptual argument

A conceptual argument is an argument that doesn’t rely on empirical evidence—it is a priori (Bojanic, Laquinto, and Torrengo, 2018). It merely relies on logic and reasoning to gain knowledge. However, “logic without empirical supports can only be used to prove conceptual truths” (Icefield, 2020). Such arguments are based on abstract concepts, ideas, and principles, and claims are established based on a logical analysis of concepts.

Conceptual arguments are based on a priori knowledge such as mathematical proofs, conceptual definitions and logical principles. Knowledge like this can be established through reasoning alone, without appeal to empirical evidence. Since a priori knowledge is knowledge gained without appeal to empirical evidence or observation, empirical evidence is thus irrelevant to conceptual arguments. Concepts are general meanings of linguistic predicates, and so philosophy itself is an a priori, conceptual discipline, which relies on deduction.

Conclusions in an a priori, conceptual argument are established with logic and reasoning without appealing to empirical data. Such examples are, of course, the relationship between the mind and body, and the nature of causation. There could be no scientific experiments which would establish which theory in philosophy of mind would be true, nor could there be a scientific experiment which would establish the nature of causation.

For many, philosophy is essentially the a priori analysis of concepts, which can and should be done without leaving the proverbial armchair. We’ve already seen that in the paradigm case, an analysis embodies a definition; it specifies a set of conditions that are individually necessary and jointly sufficient for the application of the concept. For proponents of traditional conceptual analysis, the analysis of a concept is successful to the extent that the proposed definition matches people’s intuitions about particular cases, including hypothetical cases that figure in crucial thought experiments.

…

A related attraction is that conceptual analysis explains how philosophy could be an a priori discipline, as many suppose it is. If philosophy is primarily about concepts and concepts can be investigated from the armchair, then the a priori character of philosophy is secured (Jackson 1998). (SEP, Concepts)

The argument against empirical evidence being relevant to conceptual arguments

I have articulated a few arguments for the claim that empirical evidence is irrelevant to conceptual arguments.

P1: If empirical evidence is relevant to conceptual arguments, then empirical evidence can be used to support or refute conceptual claims.

P2: Empirical evidence cannot be used to support or refute conceptual claims.

C: Thus, empirical evidence is irrelevant to conceptual arguments.

Conceptual claims are based on a priori truths and so we can know things without experience, whereas empirical evidence is based on experience and observations and so they cannot refute conceptual claims. There is, though, no kind of empirical evidence that can refute conceptual claims from philosophical analysis.

P1: If empirical evidence is relevant to conceptual arguments, then all conceptual arguments would be subject to empirical verification.

P2: There are conceptual arguments that are not subject to empirical verification.

C: Therefore, empirical evidence is irrelevant to conceptual arguments.

A few conceptual arguments that aren’t subject to empirical verification include: Fodor and Piattelli-Palmarini’s (2009, 2010) argument against natural selection, the argument against the reducibility of the mental, the Berka/Nash measurement objection, a theory/definition of “intelligence”, the argument against localization of cognitive functions in the brain (Uttal, 2001, 2012, 2014), the argument against animal mentality (Davidson, 1982), the argument against machine intentionality/mindedness, the argument against the possibility of science being able to study subjective states, and Gettier’s argument against knowledge as justified true belief (Chalmers and Jackson, 2001). These all have one thing in common: There can never be any empirical evidence that would validate or invalidate these arguments, and so falsification would be irrelevant. One would disprove the arguments not by empirical means, if they could be disproved at all, but by dealing with the logic/concepts of the arguments. These arguments deal with abstract, theoretical concepts which cannot be observed or tested empirically; they rely on logical reasoning like deduction and inference and not on observation and measurement; many involve normative judgments; and they are subject to interpretation. Empirical evidence, by contrast, is concerned with the directly observable, measurable physical world.

Furthermore, arguments like those in Goriounova and Mansvelder (2019) fall prey to the facts: that there is no definition or theory of “intelligence”; that twin studies don’t show genetic influence (Joseph, 2014) since the “laws” of behavioral genetics don’t hold; and last but not least that neuroimaging studies don’t and can’t do what they set out to do (Uttal, 2001, 2012, 2014), since neuroreductionism is false along with the fact that neuroscience assumes mind is physical and reducible to the CNS.

P1: Empirical evidence relies on the observation and measurement of physical phenomena.

P2: Conceptual arguments are based on abstract concepts and logical deduction.

P3: Abstract concepts cannot be observed or measured through empirical means.

C: Thus, empirical evidence is irrelevant to conceptual arguments.

P1 asserts that empirical evidence is based on observation and measurement of physical phenomena. P2 establishes that conceptual arguments are based on logical deduction and abstract concepts which aren’t observable or measurable empirically. P3 follows from the definition of abstract concepts and logical deduction and how they aren’t directly observable or measurable phenomena. So the conclusion necessarily and logically follows from the premises—it’s impossible to directly measure or observe abstract concepts or logical deductions which are the basis of conceptual arguments, so empirical evidence is irrelevant to conceptual arguments.

P1: If a proposition is conceptual, then it is not based on empirical evidence.

P2: If a proposition is not based on empirical evidence, then it is not subject to empirical testing.

P3: So if a proposition is conceptual, then it is not subject to empirical testing.

P4: If a proposition is not subject to empirical testing, then empirical evidence is irrelevant to assessing the truth or validity of the proposition.

C: Thus, if a proposition is conceptual, then empirical evidence is irrelevant to assessing the truth or validity of the proposition.

This argument builds on the others, and using hypothetical syllogism, successfully concludes that if a proposition is conceptual then it isn’t subject to empirical verification. Thus, taken together, empirical evidence is irrelevant to conceptual arguments, and so falsification, too, is irrelevant. Since falsification is irrelevant, then testability of the conceptual argument is, too, irrelevant. Conceptual arguments are irrefutable using the methods of scientific inquiry and can only be refuted using philosophical inquiry.
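The chain of conditionals above can be laid out symbolically. This is only a sketch of the argument's form; the letters C, B, T and I are labels assumed here for illustration and do not appear in the original argument.

```latex
% C: the proposition is conceptual
% B: the proposition is based on empirical evidence
% T: the proposition is subject to empirical testing
% I: empirical evidence is irrelevant to assessing the proposition
\begin{align*}
\text{P1:}\quad & C \rightarrow \neg B \\
\text{P2:}\quad & \neg B \rightarrow \neg T \\
\text{P3:}\quad & C \rightarrow \neg T && \text{(hypothetical syllogism, P1, P2)} \\
\text{P4:}\quad & \neg T \rightarrow I \\
\text{C:}\quad  & C \rightarrow I && \text{(hypothetical syllogism, P3, P4)}
\end{align*}
```

Since the form is valid, anyone who wants to reject the conclusion has to dispute the truth of P1, P2 or P4 rather than the inference itself.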

P1: If empirical evidence is relevant to an argument, then the argument must be testable using empirical methods.

P2: Conceptual arguments aren’t testable using empirical methods.

C: So empirical evidence is irrelevant to conceptual arguments.

This argument is simple: If empirical evidence is relevant to an argument, but conceptual arguments aren’t testable through empirical methods, then empirical evidence isn’t relevant to conceptual arguments. This is due to the distinction between empirical and conceptual arguments/evidence.

Now I can use destructive dilemma to argue that empirical evidence is irrelevant to the mind-body problem.

P1: If an argument is conceptual, then it is based on abstract concepts and logical deduction.

P2: If an argument is based on abstract concepts and logical deduction, then it cannot be observed or measured through empirical means.

P3: If an argument cannot be observed or measured through empirical means, then empirical evidence is irrelevant to that argument.

P4: The mind-body problem is a conceptual argument.

C: Therefore, empirical evidence is irrelevant to the mind-body problem.

If the mind-body problem is a conceptual argument (P4), then it is based on abstract concepts and logical deduction (P1), so it cannot be observed or measured through empirical means (P2), thus empirical evidence is irrelevant to the mind-body problem (P3), ultimately meaning that the mind-body problem—along with the mental and first-personal subjective states—cannot be studied by science. This of course—as I have been arguing for years—has implications for the so-called hereditarian hypothesis and any kind of genetic or neuroimaging studies they attempt and assert that shows that the mental is reducible to genes or brain physiology. Indeed, a priori philosophical conceptual analysis shows that consciousness cannot be reduced to any material features—meaning it is outside of the bounds of scientific explanation, just like first-personal subjective states (Chalmers and Jackson, 2001).

Conclusion

I have articulated the distinction between empirical and conceptual arguments, and provided valid and sound arguments which distinguish between both types of argument. The nonidentity between how the types of argument work and gather support for premises shows why empirical evidence is irrelevant to conceptual arguments.

Quite clearly, there is evidence that isn’t empirical and so it isn’t subject to empirical verification/falsification/testing. This is because logical concepts/argumentation/reasoning aren’t subject to falsification by the scientific method. For one to be able to successfully reject conceptual arguments, they need to grapple with the logic and reasoning of the arguments. No empirical evidence would be able to show that a conceptual proposition is false, since empirical evidence wasn’t used to establish the proposition.

The most powerful arguments against so-called hereditarianism are conceptual, and no matter what kinds of studies the hereditarian conjures up, none of them will refute the arguments against the possibility of psychophysical reductionism. So if consciousness (mind) cannot be reductively explained by the scientific method, then hereditarianism fails and therefore, there cannot be a science of the mind.

Since empirical arguments rely on observation or experimentation, and conceptual arguments rely on logic and reasoning, they have different bases of evidence. Empirical arguments are concerned with observable phenomena, while conceptual arguments are concerned with abstract concepts. Empirical arguments rely on the scientific method and data collection, while conceptual arguments rely on philosophical frameworks, logic, and reasoning.

The validity of a conceptual argument relies on the logic of its inferences along with the consistency and coherence of its logical framework and the soundness of the logic underlying the premises of the argument. The distinction and non-identity between the two types of evidence allows us to rightly state that, for these reasons, empirical evidence is irrelevant to conceptual arguments.

The AR Gene, Aggression and Prostate Cancer: Yet Another Hereditarian Reduction-to-Biology Fails

2100 words

Due to the outright failure in linking testosterone to differences in racial genetics, prostate cancer (PCa) and aggression, those who would still claim genetic or biological causation for differences in PCa incidence and aggression/crime, which would then be linked to race, needed to search other avenues for their long-awaited discovery and mechanism for the proposed relationships. This is where the AR (androgen receptor) gene comes in. The AR gene allows the creation of androgen receptors, and this is where androgens “dock”, if you will, allowing the physiological system to use them to carry out what it needs to. In this article, I will discuss the AR gene, CAG repeats, aggression, PCa, a just-so story and finally what may explain the differences in PCa acquisition and aggression between races, not appealing to genes.

Hereditarianism is concerned with the so-called biological/genetic transmission of socially-desired traits and also genetic causation of socially-undesired traits. So, after the fall of the testosterone-causes-aggressive-behavior paradigm, surely some other biological mechanism could explain, along with testosterone, why blacks have higher rates of aggression, crime and PCa? Surely, if testosterone isn’t driving the relationship, it would somehow be implicated in it, through some other mechanism? This is where the androgen receptor gene (AR gene) comes into play. Since CAG repeat length is assumed to be related to androgen receptor sensitivity, and since one report states that fewer CAG repeats are associated with aggression (Simmons and Roney, 2011), this is where the hereditarian looks next for their proposed relationships between testosterone, aggression and PCa. Simmons and Roney also, similarly to Rushton’s r/K, claim that “shorter AR-CAG repeats would have been beneficial for males inhabiting tropical regions because this genetic trait would have encouraged an androgenic response, reportedly along with higher testosterone levels” (Oubre, 2020: 293). Just-so stories all the way down.

Racial differences in AR gene and aggression/PCa

Claims that the AR polymorphism followed racial lines and was correlated with PCa incidence began to appear in the late 1990s (eg, Giovannucci et al, 1997; Pettaway, 1999). It has been shown that the number of CAG repeats on the AR gene is related to heightened activity on the androgen receptor, and that blacks are more likely to have fewer CAG repeats on the AR gene (Sartor et al, 1997; Platz et al, 2000; Bennett et al, 2002; Gilligan et al, 2004; Ackerman et al, 2013). (But also see Gilligan et al (2004), Lange et al (2008), and Sun and Lee (2013) for contrary evidence to these claims.) African populations have shorter CAG repeats than non-African populations on the AR gene (Samtal et al, 2022), and since carriers of short CAG repeats had a higher incidence of PCa (Weng et al, 2017; Qin et al, 2022), this would be the next-best spot to look after the testosterone/PCa/aggression hypothesis failed so spectacularly. And since “Androgen receptor (AR) mediates the peripheral effects of testosterone” (Tirabassi et al, 2015), this has been a new haven for the hereditarian to go to and look for their relationship between aggression and biology.

Fewer CAG repeats have been linked to self-reported aggression (Mettman et al, 2014; Butovskaya et al, 2015; Fernandez-Castillo and Cormand, 2016). Though unfortunately for hereditarian theorists, they also need to look elsewhere, since the number of CAG repeats wasn’t related to aggressive behavior in men nor in women (Valenzuela et al, 2022). Vermeer (2010) found no relationship between CAG repeats and adolescent risk-taking, depression, dominance, or self-esteem. These findings are contrary to other claims, such as this from Geniole et al (2019): “Testosterone thus appears to promote human aggression through an AR-related mechanism”. Rajender et al (2008) showed that rapists and murderers had fewer CAG repeats than controls (18.44 repeats, 17.59 repeats, and 21.19 repeats respectively). This is significant due to what was referenced above about testosterone modulating human aggression through an androgen receptor mechanism. Butovskaya et al (2012) also found no relationship between the AR gene and any of the aggression subscales they used.

Since shorter CAG repeats on the AR gene were also related to the severity of PCa incidence (Giovannucci et al, 1997), then what explains the 2 times higher incidence of PCa in blacks compared to whites and 3 to 4 times higher incidence compared to Asians (Hinata and Fujisawa, 2022; Yamoah et al, 2022) should be related to the AR gene and CAG repeats. (Though shorter CGN repeats don’t increase PCa risk in whites and blacks; Li et al, 2017.) However, when blacks and whites had similar preventative care, differences almost entirely vanished (Dess et al, 2019; Yamoah et al, 2022). Lewis and Cropp (2020) have a good review of PCa incidence in blacks. Thus, external, not internal, factors influenced mortality rates, and even though there may be some biological factors that cause either a higher incidence of PCa or lower survival once it metastasizes, that doesn’t preclude the possibility of inequities in healthcare which cause this relationship (Reddick, 2018). But how can we explain this in an evolutionary context, either recently or in the deep past? Don’t worry, the just-so storytellers have us covered.

Just-so stories and androgen receptors

Urological surgeon William Aiken (2011), publishing in the prestigious journal Medical Hypotheses, “speculated” that slaves that survived the Middle Passage were more sensitive to androgens, which would then have protected them from the conditions they found themselves in on the slave ships during the Passage. This, he surmised, is why African descendants are disproportionately represented in sprinting records and why, then, blacks have a higher incidence of PCa than whites. Aiken (2011: 1122) explains the “reasoning” behind his hypothesis:

This hypothesis emerged from an exploration of the possible interplay between historical events and biological mechanisms resulting in the similarity in the disproportionate racial and geographic distributions in seemingly unrelated phenomena such as sprinting ability and prostate cancer. The hypothesis is equally a synthesis of the interpretations of observations of a disparate nature such as the high incidence and mortality rates of prostate cancer amongst men of African descent in the Americas while West Africans residing in urban West African centre’s have a lower prostate cancer incidence and mortality [2], the 3-fold greater prostate cancer incidence in Afro-Trinidadians compared to Indo Asian-Trinidadians despite exposure to largely similar environmental conditions [5], the improvement in athletic sprinting performance observed when athletes take anabolic steroids [3], the observation that both sprinting ability and prostate cancer are related to specific hand patterns which in turn are related to antenatal exposure to high testosterone levels [6,7], the observation that prostate cancer is androgen-dependent and undergoes involution when testosterone is inhibited or withdrawn [4], the observation that West Africans born in West Africa are under-represented amongst the elite sprinters [1] despite their relatively large populations and despite West Africa being the region of origin of the ancestors of today’s elite sprinters and finally the observation that prostate cancer is related to androgen receptor responsiveness which in turn is related to its CAG-repeat length [8].

One of Aiken’s predictions is that black Americans and Caribbean blacks should have shorter CAG repeats than the populations of descent in Africa. Unfortunately for him, West Africans seem to have shorter CAG repeats than descendants of the Middle Passage (Kittles et al, 2001). Not least, neither of the two predictions he proposed to explain the relationship is risky or novel. By risky prediction I mean a prediction that would disprove the overarching hypothesis should the relationship not hold under scrutiny. By novel fact I mean a predicted fact that’s not used in the construction of the hypothesis. Quite clearly, Aiken’s hypothesis doesn’t meet these criteria, and so it is a just-so story.

But such fantastical, selection-type stories have been in the media relatively recently. Like Oprah’s and Dr. Oz’s assertions that blacks that survived the Middle Passage did so in virtue of their ability to retain salt during the voyage which then, today, leads to higher incidences of hypertension. This is known as the slavery hypertension hypothesis (Lujan and DiCarlo, 2018) and is, of course, also a just-so story. Just like the just-so story cited above, those Africans who took the voyage across the sea had some kind of advantage which explained why they survived and, consequently, explained relationships between maladies in their descendants. These types of stories—no matter how well-crafted—are nothing more than stories that explain what they purport to explain with no novel evidence that would raise the probability of the hypothesis being true.

Aggression

Aggression is related to crime, in that it is surmised that more aggressive individuals would then commit more crimes. I’ve noted the failure of hereditarian explanations over the years, so what do I think best explains the relationship between aggression and crime and, ultimately, criminal activity? Well, since crime is an action, it is therefore irreducible. I would propose a kind of situationism in explaining this.

Situational action theory (SAT) (eg Wikstrom, 2010, 2019) is a cousin of situationism, and is a kind of moral action theory, placing the agent in situations (environments) which then would lead to criminal action as a course to take. “The core principle of SAT is that crime is ultimately the outcome of certain ‘kinds of people’ being exposed to certain ‘kinds of situations’” (Messner, 2012). A good example of this concerns black Americans. Mazur’s (2016) honor culture hypothesis states that blacks who are constantly vigilant for threats to their status and self have higher levels of testosterone in virtue of the fact that aggression increases testosterone.

So this would then be an example of the kind of relationship that SAT would look for. So SAT and the honor culture hypothesis are interactionist in that they recognize the interaction between the agent and the environment (situations) the agent finds themselves in. Violence is merely situational action (Wikstrom and Treiber, 2009), so to explain a violent crime, we need to know the status of the agent and the environment that the crime occurred in, along with the victim and motivating factors for the action in question. The fact of the matter is, actions are irreducible and what is irreducible isn’t physical, so physical (biological) explanations won’t work here. Further, the longer that people stay in criminogenic environments, the more likely they are to commit crime, due to the situations they find themselves in. Thus, a kind of analytic criminology should be employed to discover how and why crimes occur (Wikstrom and Kroneberg, 2022). Considerations in biology should not be looked at when talking about actions and their causes.

Prostate cancer

I have discussed this in the past: What best explains the differences in PCa incidence between races is diet. For instance, blacks have lower rates of vitamin D than other races (Guiterrez et al, 2022; Thamattoor, 2021). People with lower levels of vitamin D are more likely to acquire PCa, and those with the lowest levels of vitamin D were more likely to have aggressive PCa (Xie et al, 2017). Since consuming high IUs of vitamin D seems to stave off PCa (Khan and Parton, 2004; Naier-Shalliker et al, 2021), and since there seems to be a dose-response relationship between vitamin D consumption and PCa mortality (Song et al, 2018) along with vitamin D seeming to reverse low-grade PCa (Samson, 2015), it stands to reason that the higher incidences of PCa in blacks in comparison to whites are due to socio-environmental dietary factors. We don’t need any assumed biological/genetic factors in order to explain the relationship when we know the etiology of PCa.

Conclusion

Due to the old, 1980s and 1990s explanations from hereditarians on the etiology of PCa and aggression with its link to race and testosterone, researchers had to look to other avenues in order to find the “biological etiology” between the relationships. They then pivoted to the AR gene and CAG repeats to explain the relationship between PCa and testosterone when the original testosterone-causes-PCa-and-aggression claim was refuted (Tricker et al, 1996; Book, Starzyk, and Quinsey, 2001; O’Connor et al, 2002; Stattin et al, 2004; Archer, Graham-Kevan, and Davies, 2005; Book and Quinsey, 2005; Michaud, Billups, and Partin, 2015; Boyle et al, 2016).

But as can be seen, again, the explanations proposed in order to continue pushing their biological/genetic theories of PCa and aggression linked with testosterone and race also fail. Rushton’s r/K theory, for instance, implicated testosterone as a “master switch” (Rushton, 1999). Attempted reductions to biology were also seen in (the now-retracted) Rushton and Templer (2012) (see responses here and here). Reductions to biology quite clearly fail, but that doesn’t deter the hereditarian from pushing the racist theory that genes and biology explain the poor outcomes of blacks.

Vygotsky’s Socio-Historical Theory of Learning and Development, Knowledge, Social Class, and IQ

4050 words

Three of the main concepts that Soviet psychologist Lev Vygotsky is known for are cultural and psychological tools, private speech, and the zone of proximal development (ZPD). The ZPD is the distance between what a learner can do with and without help, that is, the gap between actual and potential development. Vygotsky’s socio-historical theory of learning and development states that human development and learning take place in certain social and cultural contexts. When one thinks about how knowledge acquisition occurs, quite obviously, one can surmise that knowledge acquisition (learning) and human development take place in specific cultural and social contexts and so knowledge is culture-dependent (Richardson, 2002).

In this article, I will discuss the intersection of culture and Vygotsky’s concepts of private speech, cultural and psychological tools, and the zone of proximal development along with how these relate to IQ. Basically, the argument will be that what one is exposed to in childhood and during development will dictate how one performs on a test, and that the ZPD predicts school performance better than “IQ.”

What is culture and where does it come from?

This question is asked a lot by “HBDers” and I think it is a loaded question. It is a loaded question because they are fishing for a specific kind of answer—they want you to answer that culture derives from a people’s genetic constitution. This, though, fails. It fails because of how culture is conceptualized. Culture is simply what is socially transmitted by groups of people. It is physically visible (public) though the meaning of each cultural thing is invisible—it is private to the people who espouse the certain culture.

The basic source of culture is values, beliefs, and norms. Cultures lay down strict norms of what is OK and what isn’t (for example, which foods are eaten), along with the beliefs and attitudes shared by the social group. So a basic definition of culture would be: beliefs and ways of life that a social group shares; it is a human activity which is socially transmitted. Knowing this, we can see how learning, and in some ways development, can be culturally-loaded. Since a culture dictates not only what is learned, but also how to think in a certain culture, we can then begin to see how different cultures lead people to think in different ways and along with it how different cultures lead to differences in not only knowledge but the acquisition of that knowledge.

UNESCO defines culture as “the set of distinctive spiritual, material, intellectual and emotional features of society or a social group, that encompasses, not only art and literature but lifestyles, ways of living together, value systems, traditions and beliefs” (UNESCO, 2001). (What is Culture?)

the term “culture” can refer to the set of norms, practices and values that characterize minority and majority groups (Stanford Encyclopedia of Philosophy, Culture)

Material culture consists of tangible objects that people create: tools, toys, buildings, furniture, images, and even print and digital media—a seemingly endless list of items. … Non-material culture includes such things as: beliefs, values, norms, customs, traditions, and rituals (Culture as Thought and Action)

Since society consists of individuals who then become a group living in a certain region, then it stands to reason that learning and human development are due to these kinds of cultural and social interactions between individuals which make up a certain society and therefore culture. The types of things that allow me to survive, learn, and grow in one culture won’t allow me to survive, learn, and grow to the same degree in another culture.

Now that I’ve touched on what culture is, where does it come from? Why are there different cultures? Quite simply, cultures are different because people are different and although different cultures are comprised of individuals, these individuals themselves comprise a group. These groups of people live in different environments/ecologies (physical environment), and so considerations of these ecologies not only lead a group to begin to construct a society that is necessarily in tune with the environment, they also lead to “mental environments” between the people that comprise the group in question. So then we can say that culture comes from the way that groups of people live their lives.

If we think about culture as thought and action, then we can begin to get at what culture really is. Values and beliefs influence our thought, attitudes, and behavior. “Culture influences action…by...shaping a repertoire or “toolkit” of habits, skills, and styles from which people construct “strategies of action“” (Swidler, 1986). Action is distinct from behavior, in that action is future- or goal-directed whereas behavior is due to antecedent conditions. That is, actions are done for reasons, to actualize a goal of the agent that is performing the action. Crudely, culture can be then said to be what a group of people does. Culture is “human-created environment, artifacts, and practices” (Vasileva and Balyasnikova, 2019).

How culture, then, comes into play in Vygotsky’s socio-historical theory of learning and development is now clear: the ways that people interact with others in a specific culture dictate the knowledge that they acquire, which then shapes their mental abilities. This theory is a purely developmental theory. The socio-historical theory makes three claims: social interaction plays a role in learning, knowledge acquisition, and development; language is an essential cultural/psychological tool in learning; and learning occurs within the zone of proximal development (ZPD). Now that I have shown how I will be using the term “culture”, its relevance to Vygotsky’s theory of human learning and development is clear. Now I will discuss cultural and psychological tools and then turn to those three aforementioned tenets that make up the theory.

Psychological and cultural tools

Psychological tools are symbols, signs, text and language, to name a few. They are internally oriented, but in their external appearance take their form in the aforementioned ways. Language and mathematics are two kinds of psychological tools, but we can also rightly say that they are cultural tools as well (in the case of language).

Cultural tools are tools specific to a culture which allow an individual to navigate that culture. Cultural tools don’t determine thinking but they do constrain it, since they convey “information about the expected or appropriate actions in relation to a particular performance in a community. This is indirectly social in that it is not interpersonal, though it nevertheless stems from the social context” (Gauvain, 2001:129). Language can be seen as both a cultural and psychological tool; humans are born into culturally- and linguistically-mediated environments, and so they are immediately immersed in culture from the day they are born (Vasileva and Balyasnikova, 2019).

Cultural tools include historically evolved patterns of co-action; the informal and institutionalized rules and procedures governing them; the shared conceptual representations underlying them; styles of speech and other forms of communication; administrative, management and accounting tools; specific hardware and technological tools; as well as ideologies, belief systems, social values, and so on (Vygotsky, 1988). (Richardson, 2002: 288)

Robbins (2005: 146) writes:

Another important concept within sociocultural theory, which we can highlight through Rogoff’s (1995, 1998) contextual or community focus of analysis, is the use of cultural tools (both material and psychological) in the development of understanding. As Lemke (2001) points out, we grow and live within a range of different contexts, and our lives within these communities and institutions give us tools for making sense of, and to, those around us. Vygotsky described psychological tools as those that can be used to direct the mind and behaviour, while technical tools are used to bring about changes in other objects (Daniels, 2001). Commonly cited examples of cultural tools include language, different kinds of numbering and counting, writing schemes, mnemonic technical aids, algebraic symbol systems, art works, diagrams, maps, drawings, and all sorts of signs (John-Steiner & Mahn, 1996; Stetsenko, 1999).

So cultural tools, then, become “internalized in individuals as the dominant ‘psychological tools’” (Richardson, 2002: 288).

Social interaction plays a role in learning

This seems quite intuitive. As a human develops, they begin to take cues from their overall environment and those that are rearing them. They are immersed in a specific culture immediately from birth. They then begin to internalize certain aspects of the environment around them, and then begin to internalize the specific cultural and psychological tools inherent to that specific culture.

Tomasello (2019: 13) states that his theory is that “uniquely human forms of cognition and sociality emerge in human ontogeny through, and only through, species-unique forms of sociocultural activity” and so it is not only Vygotskian, but neo-Vygotskian. So children are in effect scaffolded by the culture they are immersed in, which is how “more knowledgeable others” (MKO) affect the learning trajectory of the child. An MKO is an individual who has a better understanding of, or a higher ability than, the learner. So MKOs aren’t merely for teaching children, they are strewn throughout the world teaching less knowledgeable others (LKOs). These MKOs guide individuals in their ZPD: since the MKO has greater access to certain knowledge than the LKO, the MKO is able to guide the LKO in their learning and provide instruction so that the LKO can then perform a certain task. Learning to play baseball, ride a bike, or lift weights are but a few ways that MKOs guide the development and task-acquisition of children; these are perfect examples of the concept of “scaffolding.”

Although Vygotsky never used the term “scaffolding”, it’s a direct implication of his socio-historical theory of learning and development. The concept of scaffolding has been argued to be related to the ZPD, but see Shabani, Khatib, and Ebadi (2010) and Xi and Lantolf (2021) for criticism of this relationship. However, it has been experimentally shown that the concept of scaffolding along with the ZPD can be used to extend a student’s ZPD for critical thinking (Wass, Harland, and Mercer, 2011). That is, the students can better reach their potential and therefore become independent learners.

What this means is that culture is significant in learning, language is necessary for culture, and people learn from others in their communities. Interacting with other people while developing, and even after, is how humans develop. Since we are a social species, it stands to reason that these concepts like MKOs and the significance of the cultural context in the acquisition of certain skills and learning play a significant role in the development of all children and even adults. Thus, each stage of the development of a child builds upon a previous stage, and so, play could also be seen as a form of learning, a form of sociocultural learning. Imaginative play, then, allows the self-regulation of children and also challenges them just enough in their ZPD.

Private speech

“Private speech” is when a child talks to themselves while they are performing a task (Alderson-Day and Fernyhough, 2015). It is one’s “inner speech”, their own “voice” in their heads. It is the act of talking to oneself while performing a task, and this is ubiquitous around the world, implying that it is a hallmark of human cognizing (Vissers, Tomas, and Law, 2020). This is basically the “voice” you hear in your head as you live your daily life. It is, of course, a natural consequence of thinking and talking. Speech acts are a natural process of think acts, as Vygotsky argued, which is similar to Davidson’s (1982) argument against the possibility of animal mentality since for organisms to be thinking and rational they must be able to express numerous thoughts and interpret the speech of others. This kind of speech, furthermore, has been shown to be related to working memory and cognitive reflexivity (Skipper, 2022).

The zone of proximal development

The ZPD is what a learner can and cannot do without help. Vygotsky originally developed it to oppose the concept of “IQ” (Neugeurela, Garcia, and Buescher, 2015; Kazemi, Bagheri, and Rassei, 2020; Offori-Attah, 2021). This concept is perhaps the most-used and discussed concept that Vygotsky forwarded. Central to this concept, which is a part of Vygotsky’s overall theory of child development, is imitation. Imitation is a goal-directed activity, and so it is an action. There is intention behind the imitation because the imitator is copying what the MKO is doing. But Vygotsky was using “imitation” in a way that is not normally used. To be able to imitate, one has to be able to carry out the imitation of what they are seeing from the MKO. So Vygotsky’s concept of the ZPD is that a child can learn something that he doesn’t know how to do by imitating an MKO, having the MKO guide them through to complete the task. It has been argued that the ZPD can improve a learner’s thinking ability, along with making learning more relevant and efficient to the learner, since it gives the learner the ability to learn from instruction and to have an MKO guide them to complete a task, which then becomes internalized (Abdurrahman, Abdullah, and Osman, 2019).

So the ZPD indicates what a child can do independently, and then they are given harder, guided problems which they then imitate and further internalize. MKOs are able to recognize where a child is in their development and can help them then complete harder tasks. The ZPD is related to learning not only in school but also in play (Hakkarainen and Bredikyte, 2008). For instance, the Strong Museum of Play states that “Learners develop concepts and skills through meaningful play. Play supports physical, emotional, cognitive, and social development.” Children definitely learn from play, and this interactive kind of learning also has them better understand their body, since play is in part a physical activity (a guided, goal-directed, intentional one). Play is developmentally beneficial (Eberle, 2014; UNICEF, 2018), and it is related to the ZPD since a child can learn to do something from a peer or coach who knows how to do the action they want to learn, and then internalize it. An individual that is playing is an active participant in their own learning. Play, in effect, creates the ZPD (Hakkarainen and Bredikyte, 2014). Vygotsky’s conception of “play”, though, is different from how the word is used in common parlance. Play

is limited to the dramatic or make-believe play of preschoolers. Vygotsky’s play theory therefore differs from other play theories, which also include object-oriented exploration, constructional play, and games with rules. Real play activities, according to Vygotsky, include the following components: (a) creating an imaginary situation, (b) taking on and acting out roles, and (c) following a set of rules determined by specific roles (Bodrova & Leong, 2007). (Scharer, 2017: 63)

Further, “symbolic play may scaffold development because it facilitates infants’ communicative success by promoting them to ‘co-constructors of meaning’” (Creaghe and Kidd, 2022). “Play creates a zone of proximal development of the child. In play a child always behaves beyond his average age, above his daily behavior; in play it is as though he were a head taller than himself” (Vygotsky, 1978, 102 quoted in Gray and Feldman, 2004: 113).

The scaffolding occurs due to the relationship between play, the ZPD and what an individual then internalizes and then becomes embedded in their muscle memory. This is where MKOs come into play. When one is first learning to work out, they may seek out a person knowledgeable in the mechanics of the human body to learn how to lift weights. Through instruction, they then begin to learn and then internalize the movements in their heads, and then they can just perform the lift well after successive attempts of doing a certain motion. Or take baseball. Baseball coaches would be the MKOs, and they then teach children to play baseball and they learn how to hit pitches, catch balls, throw and how to be a part of a team. Through the action of play, then, one can reach their ZPD and even extend it.

ZPD and IQ

Further, Vygotsky showed that whether one has a large or small ZPD better “predicts” performance than does “IQ”, and he also noted that those who scored higher on IQ tests “did so at the cost of their zone of proximal development”, since they exhaust their ZPD earlier, leaving a smaller ZPD.

Vygotsky reported that not only did the size of the children’s ZPD turn out to correlate well with their success in school (large ZPD children were more successful than small ZPD children) but that ZPD size was actually a better predictor of school performance than IQ. (Poehner, 2008: 35; cf Smirni and Smirni, 2022)

It has even been experimentally demonstrated that children with high IQs have a smaller ZPD while children with low IQs have a larger ZPD (Kusmaryono and Kusmaningsih, 2021). It has also been shown that those who received ZPD scaffolding instruction improved more and even outperformed the other group on subsequent IQ tests after a first test was administered (Stanford-Binet and Mensa) (Ghelot, 2021). The responsiveness to remediation, and not “IQ”, was a better predictor of school performance (Amini, Hassaskhah, and Sibet, 2017) and the degree of responsiveness wasn’t related to high or low IQ, since some learners had a high responsiveness and low score while others had a high score but low responsiveness (Poehner, 2017: 156). When those who took a test in one year did not get better in subsequent years, Vygotsky argued, this merely meant that they were not pushed outside of what they already knew. So children with a large ZPD were more likely to be successful irrespective of IQ, while children with a small ZPD were less likely to be successful irrespective of IQ. Though the concepts of ZPD and IQ are sometimes seen as related rather than contradictory (Modarresi and Jeddy, 2021), quite clearly “IQ” isn’t a measure of learning ability: it merely shows what one has learned and so has been exposed to, while the ZPD shows how one would do in the future given how large their ZPD is. The ZPD shows not only where someone has reached, but also where they can reach. Thus, instead of the (undeserved) emphasis on IQ, we should put the ZPD in its place, since it is a dynamic (relational) assessment and not a standardized test (Din, 2017).

What’s class got to do with it?

Since children acquire knowledge and beliefs based on their class background (what they are exposed to in their daily lives as they grow), then it follows that children will be differentially prepared for taking certain kinds of tests. So if the content on the tests is biased toward a group, then it is biased against a group. It is biased against a group since they are not exposed to the relevant material and kinds of thinking needed to be able to perform the test in a sufficient manner. Knowing what we now know about the acquisition of cultural and psychological tools, we can state that “high IQ may simply be an accident of immersion in middle-class cultural tools (aspects of literacy, numeracy, cultural knowledge, and so on) … the environment is made up of socially structured devices and cultural tools, and in which development consists of the acquisition of such cultural tools” (Richardson, 1998: 163-164). It is due to these considerations that culture-fair IQ tests are an impossibility: people are immersed in different cultures (which amount to learning environments where they acquire knowledge and cultural and psychological tools), and since abilities are cultural devices, culture-free tests are an illusion (Cole, 2002; Richardson, 2002).

So if there are different cultural groups, then they by definition have different cultures. If they have different cultures, then they have different experiences (of course), and so, they acquire different kinds of knowledge and along with it cultural and psychological tools. We can then rightly state that different cultural groups would be differentially prepared for doing certain tasks. And so, if one culture is more dominant and its way of thinking is more prevalent, then it follows that people will come to a certain test at different stages of being able to perform the tasks or answer the questions. Social status, also, isn’t merely related to material things, it also influences how we think and act (Richardson and Jones, 2019), and so emotional and motivational (affective) factors would play a role in one’s test score, since IQ tests are constructed from a narrow range of test items, chosen to get the results that the test constructors assumed a priori. So since one’s class is related to affective factors, and since IQ tests reflect mere class-specific items, it follows that the “affective state is one of the most important aspects of learning” (Shelton-Strong and Maynard, 2018). It is then, by using the concepts of cultural and psychological tools (which occur in social relations), that we can rightly state that IQ tests are best looked at as mere class surrogates.

Conclusion

Basically, “in order to understand the individual, one must first understand the social relations in which the individual exists” (Wertsch, 1985: 63). Vygotsky’s theory is one in which the mind is formed and constructed through social and cultural interactions with those who are already immersed in the culture that the individual’s mind is developing in. And so, by using the concepts of cultural and psychological tools, we can then see how and why different classes are differentially prepared for taking tests, which is then reflected in the score outcomes. Since growing individuals learn what they are exposed to and they learn from those who are already immersed in the culture at large, then it follows that individuals learn culturally-specific forms of learning and thus acquire different “tool sets” with which they then navigate the social world they are in. The concepts of private speech, cultural and psychological tools, MKO, scaffolding and the ZPD all coalesce into a theory of learning and development in which the learner is an active participant in their development, and so, these things also combine to show how and why groups score differently on IQ tests.

Knowledge is the content of thought, and the ability to speak is how we convey thoughts to others and how we actualize the thoughts we have into action. Thus all higher human cognitive functioning is social in nature (van der Veer, 2009). Though it is wrongly claimed that IQ is shown to be a measure of learning potential, it is rightly said that the ZPD is social in nature (Khalid, 2015). IQ doesn’t show one’s learning potential, it merely shows what one was or was not exposed to in regard to the relevant test items (Lavin and Nakano, 2017). Culture is a fluid and dynamic experience (Rublik, 2017) in which one is engrossed in the culture they are born into, and so, by understanding this, we can then understand why different groups of people score differently on IQ tests, without the need for genes or biological processes.

There have, though, been good criticisms of Vygotsky’s socio-historical theory of learning and development, even though much of Vygotsky’s theorizing has led to predictions which do have some empirical support (Morin, 2012). One argument against the ZPD is that it doesn’t explain development or how it really occurs. If we think about development from a Vygotskian perspective, we see that it is as much a cultural and social activity as it is mere individual learning. By learning from people more knowledgeable than themselves, learners are then able to learn how to do something and, through repetition, to do it on their own without the MKO.

The fact of the matter is, IQ tests aren’t as good as either teacher assessment (Kaufman, 2019) or the ZPD in predicting where a learner will end up. It is for these reasons (and more) we should stop using IQ tests and we should use the relational ZPD. (One can also look at the ZPD as related to considerations from relational developmental systems theory as well; Lerner, 2011, 2013; Lerner, Johnson, and Buckingham, 2015; Ettekal et al, 2017; Bell, 2019.) It is for these reasons that standardized tests should not be used anymore, and we should use tests of dynamic assessment. The empirical research on the issue bears out this claim.

Who Believes in an Afterlife in America? If Heaven Exists, Will There Be Races?

2500 words

(Note: I don’t believe in an afterlife and I’m not a theist.)

What do Americans think about the existence of an afterlife and what are the differences between races?

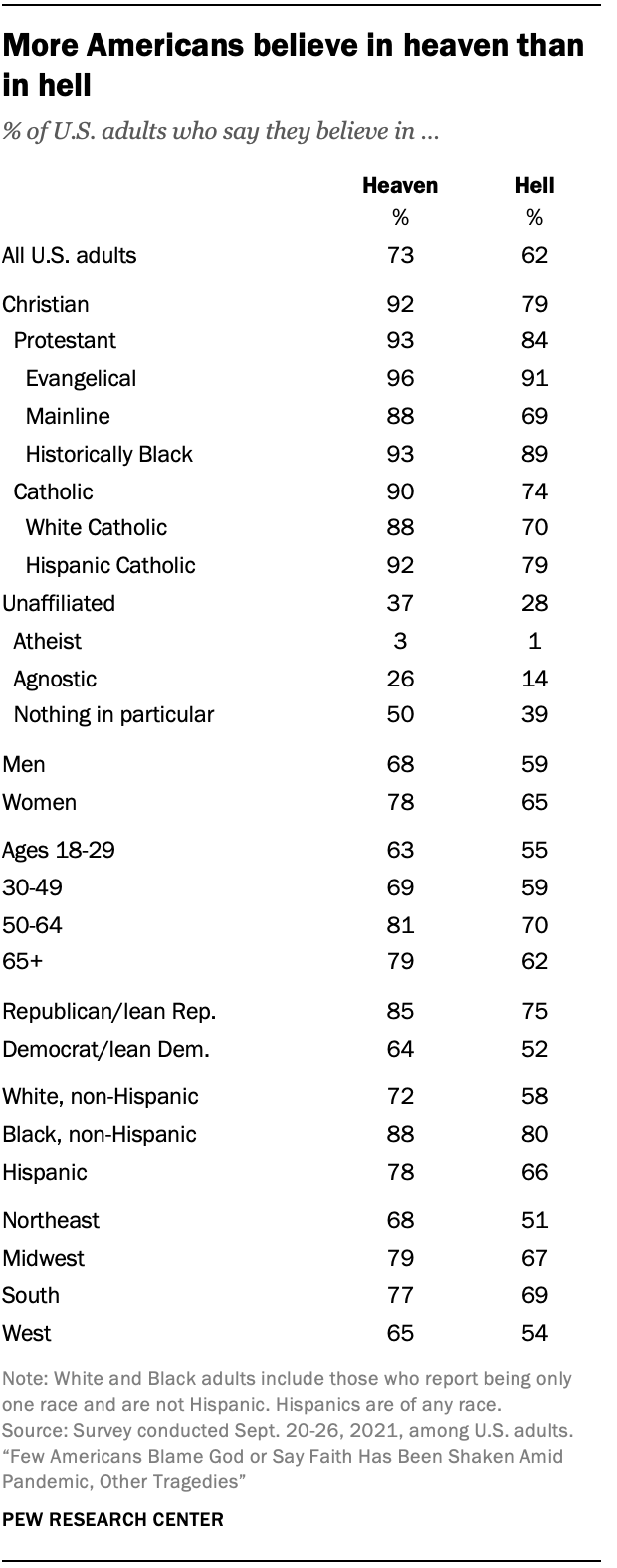

What do Americans think about the existence of an afterlife—of heaven and hell? The existence of an afterlife to American citizens is clear—more Americans believe in heaven but not in hell, per Pew. But 26% of the respondents didn’t believe in either heaven or hell. But those who did not believe in heaven or hell but did believe in an afterlife were asked to describe their views:

Respondents who believe in neither heaven nor hell but do still believe in an afterlife were given the opportunity to describe their idea of this afterlife in the form of an open-ended question that asked: “In your own words, what do you think the afterlife is like?”

Within this group, about one-in-five people (21%) express belief in an afterlife where one’s spirit, consciousness or energy lives on after their physical body has passed away, or in a continued existence in an alternate dimension or reality. One respondent describes their view as “a resting place for our spirits and energy. I don’t think it’s like the traditional view of heaven but I’m also not sure that death is the end.” And another says, “I believe that life continues and after my current life is done, I will go on in some other form. It won’t be me, as in my traits and personality, but something of me will carry on.”

Blacks were slightly more likely than whites to believe in heaven, though a supermajority of both races do believe in heaven, while far more blacks than whites believed in the existence of hell. Others professed less-widely-held views on the afterlife, like existing as a spirit, consciousness, or energy in the afterlife. Those who believe state that heaven is free from earthly matters, such as suffering, while in hell it is the opposite: hell is nothing but eternal suffering, not due to any fire and brimstone, but because it is eternal separation from God. America is, to my surprise, still a very superstitious country when it comes to God and Satan and the existence of heaven and hell. People believe that their prayers can be answered and that interactions between the living and the dead are possible. Black Americans are more likely to believe that their prayers can be directly answered in comparison to white Americans (83 percent compared to 65 percent, respectively), while 67 percent of Americans think it’s possible. Black Americans are also more likely than white Americans to believe that revelations from a higher power are possible (85 percent and 66 percent, respectively), and black Americans are more likely to believe that they have experienced contact from a higher power compared to white Americans (53 percent compared to 25 percent, respectively).

Blacks are also slightly more likely than whites to believe in near-death experiences (79 percent compared to 73 percent, respectively). Thus, blacks are more superstitious than whites. The Pew poll also tracks other studies: black Americans and Caribbean blacks were more likely to be religious than whites (Joseph et al, 1996; Franzini et al, 2005; Taylor, Chatters, and Jackson, 2007; Chatters et al, 2009). Men in general are less religious than women, and black men are less religious than black women but more religious than white women. But although blacks are more likely than whites to believe in an afterlife and be religious, there is an apparent shift away from religiosity in the black community (Americans in general seem to be shifting away from being religious ever so slightly, though 81 percent of Americans are still believers); still, blacks are more likely to pray, say grace and attend church than other racial groups.

Moreover, black men over age 50 who attended church had a 47 percent reduction in all-cause mortality compared to those who did not attend (Bruce et al, 2022), so there seems to be a protective effect of attending church services (Assari and Lankarani, 2018; Carter-Edwards et al, 2018; Majee et al, 2022). It has been found that blacks consistently report lower odds of having depression, and the explanation is probably attendance at religious services (Reese et al, 2012). However, when it comes to body mass, for white women the relationship with church attendance is either nonexistent or protective, while for black women consistent relations between church attendance and body mass have been shown (Godbolt et al, 2018). Given the fact that black women have been consistently more likely to be obese than white women since at least the late 80s and 90s (Gillum, 1987; Kumanyika, 1987; Allison et al, 1997) and remain so today (Tilghman, 2003; Johnson et al, 2012; Agyemang and Powell-Wiley, 2014; Tucker et al, 2021), this finding is not surprising. But the effects of racism could explain not only the higher rates of obesity in black women (Cozier et al, 2014) but also the higher rates of “weathering” of black women’s bodies (Geronimus et al, 2006).

Nevertheless, blacks are more likely than whites to be religious and to report religious experiences, and blacks are also more likely to be religious than the general US population. Why might blacks be more religious than whites? This is a question I will try to answer in the future.

Is it possible for races to exist in heaven?

Some Christians claim that there will be racial/ethnic diversity in both heaven and hell. The article Will heaven be multicultural and have different races? claims that:

The ultimate answer to your question is found in Revelation 21-22 which describes the new heaven and earth. In Revelation 21:24 we are told that people from the various nations will be in heaven. That is, those who believe in Jesus Christ and follow Him will live there for eternity. But the culture of heaven will be God’s culture. Everything is new. Heaven and earth will be new. The old will have disappeared and the new will have come. Sin will be gone and racial prejudices and alliances will be gone.

While the article Will There Be Ethnic Diversity in Heaven? claims that “ethnic diversity seems to be maintained and apparent in Heaven, for eternity”, the article Racial Diversity in Hell claims that:

The difference between heaven and hell is that in heaven—that is, in the new heaven and new earth—there will be perfect racial and ethnic harmony, but in hell, racial and ethnic animosities will reach their fullest fury and last forever.

So what is RACE? In my view, race is a suite of physical characteristics which are demarcated by geographic ancestry, as argued by Hardimon and Spencer. So if race is physical, then if a person is not physical (that is, if they are immaterial), there would be no way to identify which racial group they were a part of while they were alive. If we take the afterlife to be a situation in which a person has died but then exists again as a disembodied soul/mind, then there can’t possibly be races in heaven, since what identified the person as part of a racial group (the physical) doesn’t exist anymore.

In the book The Myth of an Afterlife, Drange (2015: 329-330) articulates what he calls the nonidentification argument, according to which it is inconceivable for a person to be identified if they are bodiless. If they are bodiless and race is a property of physical bodies, then it would follow that there wouldn’t be races in heaven since disembodied souls, by definition, lack physical bodies. There would be no way for the identities of people to be established, and if people’s identities cannot be established, then their racial identities cannot be established either.

- Bodiless people would have no sense organs and no body of any sort.

- Therefore, they could not feel anything by touch or see or hear anything (in the most common senses of “see” and “hear”).

- Thus, if they were to have any thoughts about who they are, then they would have no way to determine for sure that the thoughts are (genuine) memories, as opposed to mere figments of imagination.

- So, bodiless people would have no way to establish their own identities.

- Also, there would be no way for their identities to be established by anyone else.

- Hence, there would be no way whatever for the identities of bodiless people to be established.

- But for a person to be in an afterlife at all, it is conceptually necessary for his or her identity to be capable of being established.

- It follows that a totally disembodied personal afterlife is not conceivable.

Drange’s argument is against a certain conception of the afterlife, namely one in which souls are disembodied. If souls are disembodied, there would be no way to identify them, and so it follows that there would be no races in heaven, since race is a physical property of humans and their bodies. But there are different ways of looking at the possibility of races in heaven, depending on which theory of race one holds to.

Nathan Placencia (2021) argues that whether or not races exist in heaven depends on which philosophy of race you hold to, but he does make the positive claim that there may be racial identities in heaven. For racial constructivists, since race exists merely due to social conventions and racialization, race wouldn’t exist in heaven. For the racial skeptic, since race doesn’t exist as a biological category, races don’t exist at all. That is, since racial naturalism is false, races of any kind cannot exist, where racial naturalism is basically like the hereditarian conception (or non-conception, if you will) of race (see Kaplan and Winther, 2015). Racial naturalists argue that race is grounded in genetically-mediated biological differences. I am of course sympathetic to the view, though I do hold that race is a social construct of a biological reality and I am a pluralist about race. The last conception that Placencia discusses is that of deflationary realism, where race is genetically-grounded but not itself normatively important (Hardimon, 2017). So Placencia claims that for the racial constructivists and skeptics, races won’t exist in heaven, while for the deflationary realist, the “answer is maybe” on whether or not race will exist in heaven, which of course depends on what the resurrected heavenly bodies would look like.

Believers in heaven state that they will have new, physical bodies in heaven. But Jesus wasn’t immediately recognizable to his followers after his resurrection, though they did come to know that it was actually him after spending time with him. So theists believe that we get new physical bodies in heaven but that we would look different than we did while we had a physical, earthly existence. Certain verses in Revelation (21:4, 22:4) talk about God wiping away tears and a name appearing on their foreheads, which implies that there would be new, physical bodies in heaven. But now the question is, would heavenly bodies fall along racial lines as we currently understand them in this life? The question is obviously unanswerable, but certain texts in the Bible after Jesus’ resurrection state that he did look different than he did while he was alive on earth.

Baker-Hytch (2021: 182) argues that “the new creation is depicted as an everlasting reality whose human inhabitants from all nations will have resurrection bodies that—after the pattern of Jesus’ resurrection body—neither age nor die and that will partake in shared pleasures such as eating and drinking together.” So there is a trend in Christian and theistic thought that in heaven, we will all have new heavenly bodies and not exist as mere disembodied souls. But talk of new heavenly bodies faces an issue—if they are bodies in the sense that we think of bodies now, the bodies that we inhabit now, then would they grow old, decay and eventually die? Would God then give us new heavenly bodies? It would stand to reason that, if God is indeed all-powerful and all-knowing, then he would have thought these issues through and so heavenly bodies wouldn’t have the same properties as physical, earthly bodies and so they wouldn’t get older, die and eventually decay.

Conclusion

If the afterlife is completely disembodied, then it follows that race wouldn’t exist in the afterlife, since there would be no way for the identities of persons to be established, and thus there would be no way for the race of the disembodied soul to be established. Most theists contend that we will have new, heavenly bodies in heaven, but whether or not they would look the same as the former earthly bodies is up in the air, since the Bible states that it took some time for Jesus’ followers to recognize him after his resurrection, when he apparently looked different. So, if Heaven exists, will there be races? The concept RACE is a physical one. So if there are disembodied souls in heaven, and they have no physical bodies, then races won’t exist in heaven.

I obviously am a realist about race who holds to radical pluralism about racial kinds: there can be many concepts of race which are true and are context-dependent. Though I do not believe in an afterlife, I do believe that if an afterlife is nothing but disembodied souls living in heaven with God, then it follows that there won’t be races in heaven since there are no physical bodies on which to ground racial ontologies. On the other hand, if what most theists contend is true (that we get new heavenly bodies after our death and entrance into the afterlife), whether or not race would exist in heaven is questionable and it depends on which concept of RACE one holds to. If one is a constructivist or skeptic (AKA eliminativist or anti-realist) about race, then race wouldn’t exist in heaven as race is due to social conventions and the concept of racialization of groups as races. But if one is a deflationary realist about race (which I myself am), then the answer to the question of whether or not races would exist in heaven is maybe.

Nevertheless, whether or not one believes in the existence of an afterlife is slightly drawn on racial lines, with blacks being more likely than whites to believe in an afterlife; blacks are also more likely than whites to believe that their prayers can be directly answered and that they can talk to a higher power.

So depending on how races get squared away in heaven upon receiving new heavenly bodies, it is unknown whether or not races will exist in heaven.

The Argument from Causality and the Argument from Prediction for a Mind-Independent World

1200 words

How can we know that a mind-independent world exists outside of our senses if our senses are subjective? We have first-personal perspectives (FPP) and so, if our first-personal experience is subjective, how can we know that an objective world exists outside of our senses? Well, I have two arguments for the existence of a mind-independent world—what I call “the argument from prediction” and “the argument from causality.”

P1: If we can make consistent predictions about the world and perceive it, then there is a physical world independent of human minds.

P2: We can make consistent predictions about the world and perceive it.

C: So there is a physical world independent of human minds.

P1: If there is causality in the world, then there is a world independent of human minds.

P2: There is causality in the world.

C: Therefore there is a world independent of human minds.

In this article, I will justify each premise of both arguments.

For this article, I will be operating under this definition of mind-independence: X is mind-independent if the existence of X is not dependent on a thinking or perceiving thing. This is a form of metaphysical realism, which comprises two theses:

a. that physical objects do not depend for their existence on being perceived or conceived by mind, and

b. that there are physical objects.

The argument from prediction

Premise 1: Only if there were a world independent of our minds could we then make predictions about what occurs in the world. For if what we perceive weren’t independent of our minds, then we wouldn’t be able to make predictions about the world (ones that turn out to be true, of course). A mind-independent thing is a thing whose existence does not depend on anything that thinks or perceives it, so it would exist without an external observer. If humans weren’t here anymore, and if all animals went extinct while the earth was still intact, then the world would still exist.