Author Archives: RaceRealist

Is General Intelligence Domain-Specific?

1600 words

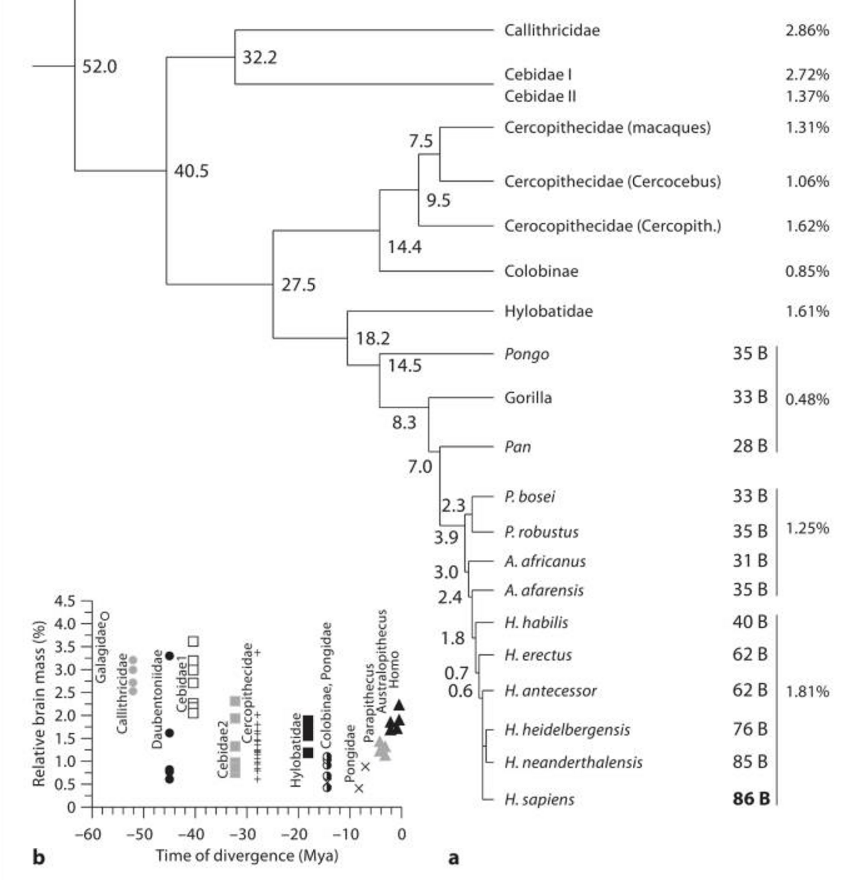

Is the human brain ‘special’? Not according to Herculano-Houzel; our brains are just linearly scaled-up primate brains. We have the number of neurons predicted for a primate of our body size. But what does this have to do with general intelligence? Evolutionary psychologists also contend that the human brain is not ‘special’; that it is an evolved organ just like the rest of our body. Satoshi Kanazawa (2003) proposed the ‘Savanna Hypothesis’, which states that more intelligent people are better able to deal with ‘evolutionarily novel’ situations (situations that we didn’t have to deal with in our ancestral African environment, for example), whereas general intelligence does not affect an individual’s ability to deal with evolutionarily familiar entities and situations. I don’t really have a stance on it yet, though I do find it extremely interesting, and it makes (intuitive) sense.

Kanazawa (2010) suggests that general intelligence may be both an evolved adaptation and an ‘individual-difference variable’. Evolutionary psychologists contend that evolved psychological adaptations are for the ancestral environment in which they evolved, not for any modern-day environment. Kanazawa (2010) writes:

The human brain has difficulty comprehending and dealing with entities and situations that did not exist in the ancestral environment. Burnham and Johnson (2005, pp. 130–131) referred to the same observation as the evolutionary legacy hypothesis, whereas Hagen and Hammerstein (2006, pp. 341–343) called it the mismatch hypothesis.

From an evolutionary perspective, this does make sense. A perfect example is Eurasian societies vs. African ones. you can see the evolutionary novelty in Eurasian civilizations, while African societies are much closer (though obviously not fully) to our ancestral environment. Thusly, since the situations found in Africa are not evolutionarily novel, it does not take high levels of g to survive in, while Eurasian societies (which are evolutionarily novel) take much higher levels of g to live and survive in.

Kanazawa rightly states that most evolutionary psychologists and biologists contend that there have been no changes to the human brain in the last 10,000 years, in line with his Savanna Hypothesis. However, as I’m sure all readers of my blog know, there were sweeping changes in the last 10,000 years in the human genome due to the advent of agriculture, and, obviously, new alleles have appeared in our genome, however “it is not clear whether these new alleles have led to the emergence of new evolved psychological mechanisms in the last 10,000 years.”

General intelligence poses a problem for evo psych since evolutionary psychologists contend that “the human brain consists of domain-specific evolved psychological mechanisms” which evolved specifically to solve adaptive problems such as survival and fitness. Thusly, Kanazawa proposes, in contrast to other evolutionary psychologists, that general intelligence evolved as a domain-specific adaptation to deal with evolutionarily novel problems. So, Kanazawa says, our ancestors didn’t really need to think in order to solve recurring problems. However, he talks about three major evolutionarily novel situations that needed reasoning and higher intelligence to solve:

1. Lightning has struck a tree near the camp and set it on fire. The fire is now spreading to the dry underbrush. What should I do? How can I stop the spread of the fire? How can I and my family escape it? (Since lightning never strikes the same place twice, this is guaranteed to be a nonrecurrent problem.)

2. We are in the middle of the severest drought in a hundred years. Nuts and berries at our normal places of gathering, which are usually plentiful, are not growing at all, and animals are scarce as well. We are running out of food because none of our normal sources of food are working. What else can we eat? What else is safe to eat? How else can we procure food?

3. A flash flood has caused the river to swell to several times its normal width, and I am trapped on one side of it while my entire band is on the other side. It is imperative that I rejoin them soon. How can I cross the rapid river? Should I walk across it? Or should I construct some sort of buoyant vehicle to use to get across it? If so, what kind of material should I use? Wood? Stones?

These are great examples of ‘novel’ situations that may have arisen, in which our ancestors needed to ‘think outside of the box’ in order to survive. Situations such as these may be why general intelligence evolved as a domain-specific adaptation for ‘evolutionarily novel’ situations. Clearly, when such situations arose, our ancestors who could reason better at the time these unfamiliar events happened would survive and pass on their genes, while the ones who could not died and were selected out of the gene pool. So general intelligence may have evolved to solve these new and unfamiliar problems that plagued our ancestors. What this suggests is that intelligent people are better than less intelligent people at solving problems only if they are evolutionarily novel. On the other hand, situations that are evolutionarily familiar to us do not take higher levels of g to solve.

For example, more intelligent individuals are no better than less intelligent individuals in finding and keeping mates, but they may be better at using computer dating services. Three recent studies, employing widely varied methods, have all shown that the average intelligence of a population appears to be a strong function of the evolutionary novelty of its environment (Ash & Gallup, 2007; D. H. Bailey & Geary, 2009; Kanazawa, 2008).

Who is more successful, on average, over another in modern society? I don’t even need to say it, the more intelligent person. However, if there was an evolutionarily familiar problem there would be no difference in figuring out how to solve the problem, because evolution has already ‘outfitted’ a way to deal with them, without logical reasoning.

Kanazawa then talks about evolutionary adaptations such as bipedalism (we all walk, but some of us are better runners than others); vision (we can all see, but some have better vision than others); and language (we all speak, but some people are more proficient in their language and learn it earlier than others). These are all adaptations, but there is extensive individual variation between them. Furthermore, the first evolved psychological mechanism to be discovered was cheater detection, to know if you got cheated while in a ‘social contract’ with another individual. Another evolved adaptation is theory of mind. People with Asperger’s syndrome, for instance, differ in the capacity of their theory of mind. Kanazawa asks:

If so, can such individual differences in the evolved psychological mechanism of theory of mind be heritable, since we already know that autism and Asperger’s syndrome may be heritable (A. Bailey et al., 1995; Folstein & Rutter, 1988)?

A very interesting question. Of course, since it’s #2017, we have made great strides in these fields and we know these two conditions to be highly heritable. Can the same be said for theory of mind? That is a question that I will return to in the future.

Kanazawa’s hypothesis does make a lot of sense, and there is empirical evidence to back his assertions. His hypothesis proposes that evolutionarily familiar situations do not take any higher levels of general intelligence to solve, whereas novel situations do. Think about that. Society is the ultimate evolutionary novelty. Who succeeds the most, on average, in society? The more intelligent.

Go outside. Look around you. Can you tell me which things were in our ancestral environment? Trees? Grass? Not really, as they aren’t the same exact kinds as we know from the savanna. The only thing that is constant is: men, women, boys and girls.

This can, however, be said in another way. Our current environment is an evolutionary mismatch. We are evolved for our past environments, and as we all know, evolution is non-teleological, meaning there is no direction. So we are not selected for possible future environments, as there is no knowledge of what the future holds due to contingencies of ‘just history’. Anything can happen in the future; we don’t have any knowledge of any future occurrences. These can be said to be mismatches, or novelties, and those who are more intelligent reason more logically due to the fact that they are more adept at surviving evolutionarily novel situations. Kanazawa’s theory provides a wealth of information and evidence to back his assertion that general intelligence is domain-specific.

This is yet another piece of evidence that our brain is not special. Why continue believing that our brain is special, even when there is evidence mounting against it? Our brains evolved and were selected for just like any other organ in our body, just like it was for every single organism on earth. Race-realists like to say “How can egalitarians believe that we stopped evolving at the neck for 50,000 years?” Well to those race-realists who contend that our brains are ‘special’, I say to them: “How can our brain be ‘special’ when it’s an evolved organ just like any other in our body and was subject to the same (or similar) evolutionary selective pressures?”

In sum, the brain has problems dealing with things that were not in its ancestral environment. However, those who are more intelligent will have an easier time dealing with evolutionarily novel situations in comparison to people with lower intelligence. Look at places in Africa where development is still low. They clearly don’t need high levels of g to survive, as it’s pretty close to the ancestral environment. Conversely, Eurasian societies are much more complex and thus, evolutionarily novel. This may be one reason that explains societal differences between these populations. It is an interesting question to consider, which I will return to in the future.

Fatty Acids and PISA Math Performance

1800 words

There are much more interesting theories of the evolution of hominin intelligence than the tiresome (yawn) cold winter theory. Last month I wrote on why men are attracted to a low waist-to-hip ratio in women. However, gluteofemoral fat (fat in the thighs and buttocks) is only part of the story on how DHA and fatty acids (FAs) drove our brain growth and our evolution as a whole. Tonight I will talk about how fatty acids predict ‘cognitive performance’ (it’s PISA, ugh) in a sample of 28 countries, particularly the positive relationship between n-3 (Omega-3s) and intelligence and the negative relationship between n-6 and intelligence. I will then talk about the traditional Standard American Diet (the SAD diet [apt name]) and how it affects American intelligence on a nation-wide level. Finally, I will talk about the best diet to maximize cognition in growing babes and women.

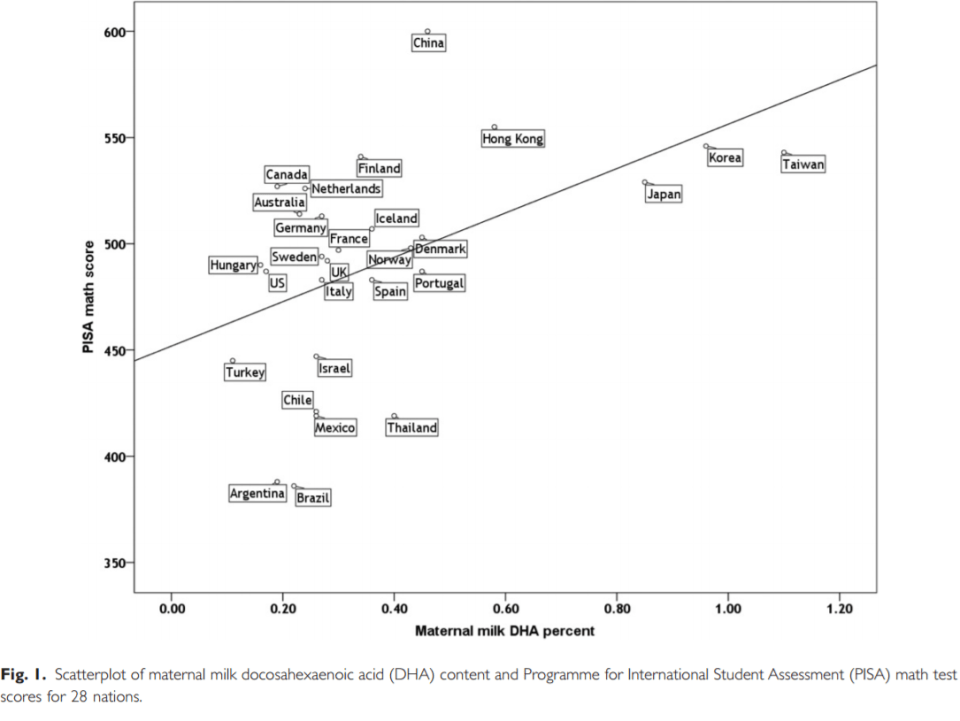

Lassek and Gaulin (2013) used the 2009 PISA data to infer cognitive abilities for 28 countries (ugh, I’d like to see a study like this done with actual IQ tests). They also searched for studies that showed data providing “maternal milk DHA values as percentages of total fatty acids in 50 countries”. Further, to control for SES influences on cognitive performance, they controlled for GDP/PC (gross domestic product per capita) and “educational expenditures per pupil.” To further control for the possible effect of macronutrients on maternal milk DHA levels, they included estimates for each country of the average amount of kcal consumed from protein, fat, and carbohydrates. To explore the relationship between DHA and cognitive ability, they included foodstuffs high in n-3 (fish, eggs, poultry, red meat, and milk), which also contain DPA depending on the type of feed the animal is given. There is also a ‘metabolic competition’ between n-3 and n-6 fatty acids, so they also included total animal and vegetable fat as well as vegetable oils.

Lassek and Gaulin (2013) found that GDP/PC, expenditures per student and DHA were significant predictors of (PISA) math scores, whereas macronutrient content showed no correlation.

The predictive value of milk DHA on cognitive ability is only weakened when either of the two SES variables is added to the multiple regression. When milk arachidonic acid (a type of Omega-6 fatty acid) is added to the regression, it is negatively correlated with math scores but not significantly (so it wasn’t added to the table below).

So countries with lower maternal milk levels of DHA score lower on the maths section of the PISA exam (not an IQ test, but it’s ‘good enough’). Consistent with what is known about the effects of DHA on cognitive abilities, countries that have higher maternal milk levels of DHA do score higher on the maths section of the PISA exam.

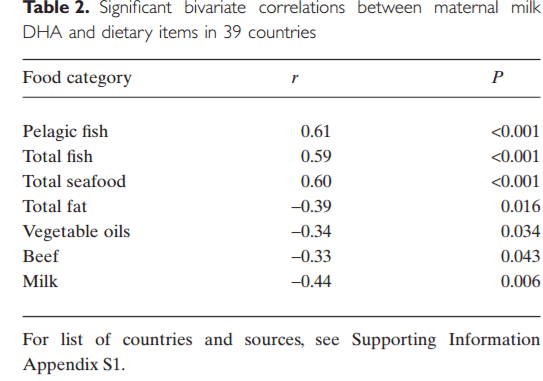

Table 2 shows the correlations between grams per capita per day of food consumption in the data set they used and maternal milk DHA. As you can see, total fish and seafood consumption are substantially correlated with total milk DHA, while foods that are high in n-6 show medium negative correlations with maternal milk DHA. The combination of foods that explain the most of the variance in maternal milk DHA is total fat consumed and total fish consumed. This explained 61 percent of the variance in maternal milk DHA across countries.

Not surprisingly, foodstuffs high in n-6 showed significant negative correlations on maternal milk DHA. “Any regression including total fish or seafood, and vegetable oils, animal fat or milk consistently explains at least half of the variance in milk DHA, with fish or seafood having positive beta coefficients and the remainder having negative beta coefficients.”

The study showed that a country’s balance of n-3 and n-6 was strongly related to the students’ math performance on the PISA. This relationship between milk DHA and cognitive performance remains significant even after controlling for national wealth, macronutrient intake and investment in education. The availability of DHA in populations is a better predictor of test scores than are SES factors (which I’ve covered here on Italian IQ); SES explains a considerable portion of the variance, but not as much as the overall DHA levels by country. Furthermore, maternal DHA levels are strongly correlated to per capita fish and seafood consumption, while a negative correlation was noticed with the intake of more vegetable oils, fat, and beef, which suggests ‘metabolic competition’ between the n-3 and n-6 fatty acids.

There are, of course, many possible errors with the study, such as maternal milk DHA values not reflecting the total DHA in that population as a whole; methods of extracting milk fatty acids differing between studies; test results being due to sampling error; and finally the per capita consumption of foods being based on food disappearance, not amount of food consumed. However, even with its faults (the main one being the use of PISA to test ‘cognitive abilities’), the study is still very interesting, and I hope that a similar study is eventually undertaken with actual measures of cognitive ability rather than PISA scores.

We now know that n-6 is negatively linked with brain performance, and that n-3 is positively linked. What does this say about America?

As I’m sure all of you are aware, America is one of the fattest nations in the world. Not surprisingly, Americans consume extremely low levels of seafood (very high in DHA) and more foods high in n-6 (Papanikolaou et al, 2014). Low levels of n-3 (which we do not get enough of in America) and high levels of n-6 are correlated with obesity (Simopoulos, 2016). So not only do we have a current dysgenic effect in America due to decreased fertility of the more intelligent (which is also part of the reason why we have the effect of dysgenic fertility in America), obesity is also driven by high levels of n-6 in the Western diet, which then causes obesity down the generations (Massiera et al, 2010).

I also previously wrote on agriculture and diseases of civilization. Our hunter-gatherer ancestors were all around healthier than we are. This, clearly, is due to the fact that they ate a more natural diet and not one full of processed, insulin-spiking carbohydrates, among other things. Our hunter-gatherer ancestors consumed n-3 and n-6 at equal amounts (1:1) (Kris-Etherton, et al 2000). As I documented in my article on agriculture and disease, HGs had low to nonexistent rates of the diseases that plague us in our modern societies today. However, around 140 years ago, we entered the Industrial Revolution. The paradigm shift that this caused was huge. We began consuming less n-3 (fish and other assorted seafood and nuts among other foods) while n-6 intake increased (beef, grains, carbohydrates) (Kris-Etherton, et al 2000). Moreover, the ratio of n-6 to n-3 from the years 1935 to 1939 was 8.4 to 1, whereas from the years 1935 to 1985, the ratio increased to about 10 percent (Raper et al, 2013). We Americans also consume 20 percent of our daily kcal from one ‘food’ source, soybean oil, with almost 9 percent of the total kcal coming from n-6 linoleic acids (United States Department of Agriculture, 2007). The typical American diet contains about 26 percent more n-6 than n-3, and people wonder why we are slowly getting dumber (which is, obviously, a side effect of civilization). So our n-6 consumption is about 26 percent higher than it was when we were still hunter-gatherers. Does anyone still wonder why diseases of civilization exist and why hunter-gatherers have low to nonexistent rates of the diseases that plague us?

The bioavailability of n-6 is dependent on the amount of n-3 in fatty tissue (Hibbeln et al, 2006). This goes back to the ‘metabolic competition’ mentioned earlier. N-3 also makes up 10 percent of the overall brain weight since the first neurons evolved in an environment high in n-3. N-3 fatty acids were positively related to test scores in both men and women, while n-6 showed the reverse relationship (with a stronger effect in females). Furthermore, in female children, the effect of n-3 intake was twice as strong in comparison to male children, which also exceeded the negative effects of lead exposure, suggesting that higher consumption of foods rich in n-3 while consuming fewer foods rich in n-6 will improve cognitive abilities (Lassek and Gaulin, 2011).

The preponderance of evidence suggests that if parents want to have the healthiest and smartest babes, a pregnant woman should consume a lot of seafood while avoiding vegetable oils, total fat and milk (fat, milk and beef more so from animals that are grain-fed). Grass-fed beef has higher levels of n-3, which will balance out the levels of n-6 in the beef. So if you want your family to have the highest cognition possible, eat more fish and less grain-fed beef and animal products.

In sum, if you want the healthiest, most intelligent family you can possibly have, the most important factor is…diet. Diets high in n-3 and low in n-6 are extremely solid predictors of cognitive performance, due to the ‘metabolic competition’ between the two fatty acids. This is because n-6 accumulates in the blood and tissue lipids, exacerbating the competition between linoleic acid (the most common form of n-6) and n-3 for metabolism and acylation into tissue lipids (Innis, 2014). Our HG ancestors had lower rates of n-6 in their diets than we do today, along with low to nonexistent disease rates. This is due to the availability of n-6 in the modern diet, which was unknown to our ancestors. Yes, seafood intake had the biggest effect on the PISA math scores, which, in my opinion (I need to look at the data), is due in part to poverty. I’m very critical of PISA, especially as a measure of cognitive abilities, but this study is solid, even though it has pitfalls. I hope a study using an actual IQ test is done (and not Richard Lynn IQ tests that use children; a robust adult sample is the only thing that will satisfy me) to see if the results will be replicated.

I also think it’d be extremely interesting to get a representative sample from each country studied and somehow make it so that all maternal DHA levels are the same and then administer the tests. This way, we can see how all groups perform with the same amounts of DHA (and to see how much of an effect that DHA really does have). Furthermore, nutritionally impoverished countries will not have access to the high-quality foods with more DHA and healthy fatty acids that lead to higher cognitive function.

It’s clear: if you want the healthiest family you could possibly have, consume more seafood.

Expounding On My Theory for Racial Differences In Sports

2300 words

In the past, I’ve talked about why the races differ at the extremes (and in the general population, but the extremes put the picture into focus) in terms of what sports they compete and do best in. These differences come down to morphology, somatotype, and physiology. People readily admit racial differences in sports and, rightly, say that these differences are largely genetic in nature. Why is it easier for people to accept racial differences in sports and not accept other truisms, like racial IQ differences?

I’ve covered muscle fiber typing and how the variances in fiber typing dictate which race/ethny performs best at which sport. I’ve also provided further evidence that blacks have type II fibers (responsible for explosive power), which leads to a reduced VO2 max. This lends yet more credence to my theory of racial differences in sports: black Americans (West African descendants) have the fiber typing that is associated with explosive power, less so with endurance activities. Since I’ve documented evidence on the differences in sports such as baseball, football, swimming and jumping, bodybuilding, and finally strength sports, tonight I will talk about the evolutionary reasons for muscle fiber and somatotype differences that will have us better understand the evolutionary conditions in which these traits evolved and why they got selected for.

Most WSM winners are from Nordic countries or have Nordic ancestry. There’s a higher amount of slow twitch fibers in Nordics and East Asians (and Kenyans) which is more conducive to strength and less conducive to ‘explosive’ sports. West Africans and their descendants dominate in sprinting competitions. Yea yea yea white guy won in 1960. So they will be less likely to be in strength comps and more likely to win BBing and physique comps. This is what we see.

Only three African countries have placed in the top ten in the WSM (Kenya, Namibia, and Nigeria; however, one competitor from Namibia I was able to find has European ancestry). Here is a video of a Nigerian Strongest Man competition (notice how his chest isn’t up and his hips rise before the bar reaches his knees, horrible form). Fadesere Oluwatofunmi is Nigeria’s Strongest Man, competing in the prestigious Arnold Classic, representing Nigeria. However, men such as Fadesere Oluwatofunmi are outliers.

Now for a brief primer on muscle fibers and which pathways they fire through. Understanding this aspect of the human body is paramount to understanding how and why the races differ in elite competition.

Life history and muscle fiber typing

Slow twitch fibers fire through aerobic pathways. Breaking down fats and proteins takes longer through aerobic respiration. Moreover, in cold temperatures, the body switches from burning fat to burning carbohydrates for energy, which will be broken down slower due to the aerobic respiration. Slow twitch (Type I) fibers fire through aerobic pathways and don’t tire nearly as quickly as type II fibers. Also, CHO reserves will be used more in cold weather. The body’s ability to use oxygen decreases in cold weather as well, so having slow twitch fibers is ideal (think about evolution thousands of years ago). Type I fibers fire off slower so they’ll be able to be more active for a longer period of time (studies have shown that Africans with Type II fibers hit a ‘wall’ after 40 seconds of explosive activity, which is why there are so few West-African descended marathon runners, powerlifters, Strongmen, etc). Those who possess these traits will have a higher chance to survive in these environments. Those with slow twitch fibers also have to use more oxygen. They have larger blood vessels, more mitochondria and higher concentrations of myoglobin, which gives the muscles their reddish color.

Each fiber fires off through different pathways, whether they be anaerobic or aerobic. The body uses two types of energy systems, aerobic or anaerobic, which then generate Adenosine Triphosphate, better known as ATP, which causes the muscles to contract or relax. The type of fibers an individual has dictates which pathway muscles use to contract, which then, ultimately, dictates whether there is high muscular endurance or whether the fibers will fire off faster for more speed.

Type I fibers lead to more strength and muscular endurance as they are slow to fire off, while Type II fibers fire quicker and tire faster. Slow twitch fibers use oxygen more efficiently, while fast twitch fibers do not burn oxygen to create energy. Slow twitch muscles delay firing, which is why endurance is so high in individuals with these fibers, whereas those with fast twitch fibers have muscles that fire more explosively. Slow twitch fibers don’t tire as easily while fast twitch fibers tire quickly. This is why West Africans and their descendants dominate in sprinting and other competitions where fast twitch muscle fibers dominate in comparison to slow twitch.

Paleolithic Europeans who had more stamina spread more of their genes to the next generation as their genotype was conducive to reproductive success in Ice Age Europe. Conversely in Africa, those who could get away from predators and could hunt prey more efficiently survived. Over time, frequencies of genes related to what needed to be done to survive in those environments increased, along with the frequencies of muscle fibers in the races.

Racial differences in anatomy and physiology

Along with muscle fiber differences, blacks and whites also have differences in fat-free body mass, bone density, distribution of subcutaneous fat, length of limbs relative to the trunk, and body protein contents (Wagner and Heyward, 2000). These differences are noticed and talked about in the scientific literature, even in University biology and anatomy textbooks. However, in terms of University textbooks, authors who recognize the concept of race do so in spite of what other authors write (Hallinan, 1994). Furthermore, Strkalj and Solyali (2010) analyzed 18 English textbooks on anatomy and concluded that discussion of race was ‘superficial’ and the content ‘outdated’, i.e., using the ‘Mongoloid, Caucasoid, Negroid’ terminology (I still do out of habit). They conclude that race is either not mentioned in anatomy textbooks or is only ‘superficially accounted for in textbooks’. Clearly, though they are outdated, some textbooks do talk about human biological diversity, though the information needs to be updated (especially now). The center of mass in blacks is 3 percent higher than in whites, meaning whites have a 1.5 percent speed advantage in swimming while blacks have a 1.5 percent speed advantage in sprinting. East Asians that are the same height as whites are even more favored in swimming; however, they are shorter on average, so that’s why they do not set world records (Bejan, Jones, and Charles, 2010).

For another hand grip strength (HGS) test, see Leong et al (2016). Most studies on HGS are done on Caucasian populations with little data for non-Caucasoid populations. They found that HGS values were higher for North America and Europe, intermediate in China, South America and the Middle East, and the lowest in South Asia, Southeast Asia, and Africa. These are, most likely, average Joes and not elite BBers or strength trainers. This is one of the best papers I’ve come across on this matter (though I would kill to have a nice study done on the three classical races and their performance on the Big 3 lifts: squat, bench press and deadlift; I’m positive it would be Asian/white and blacks as the tail end).

Among other physical differences is brain size and hip width. Blacks have narrower hips than whites who have narrower hips than Asians (Rushton, 1997: 163). Bigger-brained babes are more than likely born to women who have wider hips. If you think about athletic competition, one with wide hips will get decimated by one with narrower hips as he will be better able to move. People with big crania, in turn, have the hip structure to birth big brains. This causes further division between racial groupings in sports competition.

Some people may dispute a genetic causation for the success of, say, Kenyan marathoners (the Kalenjin people) and attribute it to the immediate environment (not ancestral), training and willpower (see here for discussion). This Kenyan subpopulation also has the morphology conducive to success in marathons (tall and lengthy), as well as type II muscle fibers (which is why Kenya placed in the WSM).

I would also like to see a study of men in their prime (age 21 to 24) from European, Africans, and East Asian backgrounds with a high n (for a study like this it’d be 20 to 30 participants for each race), with good controls. You’d see, in my opinion, East Asians slightly nudge out whites who destroy blacks. The opposite would occur in sports that use type II fibers. West Africans also have the gene variant ACTN3 which is associated with explosive sports.

For a better (less ethical) study, we can use a thought experiment.

We take two babes fresh out of the womb (one European, the other West African) and keep them locked in a metabolic chamber for their whole entire lives. We keep them in the chamber until they die and monitor them, feeding them the same exact diet and giving them the same amount of attention. They start training at age 15. Who will be stronger in their prime (the European man)? Who will have more explosive power (the West African man)? A simple thought experiment can have one thinking about intrinsic racial differences in things the average American watches in their everyday lives. The subject of racial differences in sports is a non-taboo subject, however, the subject of racial differences in intelligence is a big no-no.

Think about that for a second. People obviously accept racial differences in sports, yet they have some kind of emotional attachment to the blank slate narrative. We don’t hear that you can nurture athletic success. We do, however, hear that ‘we can succeed at anything we put our minds to’. That’s not in dispute; that’s a fact. But it’s twisted in a way that genetics and ancestry have no bearing on it, when they explain a lot of the variance. People accept racial differences when they’re cheering on their favorite football team or basketball team. For instance, NFL announcer Gus Johnson said during a broadcast of a Titans and Jaguars game “He’s [Chris Johnson] got gettin’ away from the cops speed!”

Pro-sports announcers, as well as college recruiters, know what the average person doesn’t who is not exposed to these differences daily for decades on end. People in these types of professions, especially collegiate sports recruiters, must get the low-down on average racial differences and then use what they know to make their choices on who to draft for their team.

For more (anecdotal) evidence, you can look up the race/ethnicity of the winners in competitions where peoples from all over the world compete in. More West African descendants place higher in physique, BBing comps, etc; more Caucasians and East Asians (and Kenyans) place higher in strength comps. A white man has won the WSM every year since its inception. West African descended blacks dominate BBing and physique comps. Eurasians (and Kenyans) dominate in marathon running.

All of this talk of racial differences in sports (which largely has to do with whites vs. blacks, though Asians are included in my overall analysis), I’ve hardly cited anything on East Asians directly. In regards to sports that take extreme dexterity or flexibility (and high reaction), East Asians shine. They shine in diving, ping-pong, skating and gymnastics events. They usually have long torsos and small limbs. I theorize that this was an evolutionary adaptation for the East Asians, as shorter people have less surface area to keep warm. Taller people would have died out quicker than one who’s smaller and can cover up and get warmer faster. They also have quicker reaction times (Rushton and Jensen, 2005) and it has been hypothesized that this is why they dominate in ping pong.

Conclusion

We don’t need any tests to show that there are racial differences in sports; the test is the competition itself. On average, A white will be stronger than an Asian who will be stronger than a black. Conversely, a Kenyan will be a better marathoner than a West African, European or Asian. West Africans will be more likely to beat all three groups in a sprint. These differences come down to morphology, but they start inside the muscles with the muscle fibers. Some anatomy textbooks acknowledge the existence of race, however, they have old and outdated information. It’s a good thing that anatomy textbooks talk about racial differences in physiology and anatomy, now we need to start doing heavy research into racial differences in the brain. The races evolved their fiber typing depending on what they had to do to survive along with their immediate environment, i.e., high elevation like the Kalenjin people.

The evolution of differing muscle fiber types in different races is easily explainable: Europeans have slow twitch fibers. In cold temperatures, the body switches from burning fat to burning carbs for energy. Furthermore, the average person would need to have a higher lung capacity and not tire out during long hunts on megafauna. Over time, selection occurred on the individuals with more type I fibers. Conversely, West Africans and their descendants have the ACTN3 gene, which is associated with elite human athletic performance (Yang et al, 2003). Africans who could get away from predators survived and passed on the genes and fiber typing for elite athletic performance.

In sum, the races differ in terms of entrants to elite athletic competition. These differences are largely genetic in nature. Evolutionary processes explain racial differences in sports. These same selection processes that explain racial differences in elite sports competitions also explain racial differences in intelligence. I await the day that we can freely speak about racial differences in intelligence just like we speak about racial differences in sports. Denying human variation makes no sense, especially in today’s world where we have state of the art testing.

Agriculture and Diseases of Civilization

2350 words

It is assumed that, since the advent of agriculture, we’ve been better nourished than our hunter-gatherer ancestors. This assumption stems from the past 130 years since the advent of the Industrial Revolution and the increase in the quality of life of those who had the benefit of the Revolution. However, over a longer period of time, the advent of agriculture is linked to poorer health, vectors of disease and lower quality of life (in terms of intractable disease). Despite what I have claimed in the past about hunter-gatherer societies, they do have lower or nonexistent rates of the diseases that currently plague our first-world societies. Why do we have such extremely high rates of diseases that they don’t?

Contrary to popular belief, agriculture has caused decreases in many facets of our lives. These diseases, more aptly termed ‘diseases of civilization’, are directly caused by agricultural and societal ways of living. Disease rates increase because it’s easier for diseases to spread through bigger populations. Moreover, we haven’t had time to evolve to the diet we now eat in first-world countries, which has led to what is termed an ‘evolutionary mismatch’ between genes and environment. We evolved to eat a certain diet, and the introduction of easily digestible carbohydrates, which spike insulin the highest, departs from it. Since insulin causes weight gain, and carbohydrate intake has dramatically increased since the 70s, obesity has increased as countries industrialize and more processed foods become available to the populace.

However, since the Industrial Revolution, height has increased along with IQ. Researchers argue that in first-world countries, high rates of obesity are not preventable due to the excess amounts of highly refined and processed foods. There is data for this theory. In first-world countries, the heritability of BMI is between .76 and .85. Since first-world countries are industrialized, we would expect them to hit their ‘genetic height and weight’ along with having the ability to reach their IQ potential. However, with the excess amount of highly processed and refined foods, this would also, in theory, have the population hit their ‘genetic weights’. This is what we see in first-world countries.

To see how first-world, industrialized societies cause these gene-environment mismatches, we can compare the disease acquisition rate (or lack thereof) of hunter-gatherers to that of Europeans eating an industrialized, first-world diet (high in carbohydrates).

In his 2013 book The Story of the Human Body: Evolution, Health, and Disease, paleoanthropologist Daniel Lieberman talks at length about evolutionary mismatches. The easiest way to think about this is to think about how an organism evolved to fit its environment and then about the processes that alter that environment. A perfect example is African farmers. They may dig a trench to divert water to better irrigate their crops, but this would bring more mosquitoes due to the increase in still water, and genes that protect against malaria would then be selected for. This is one example of an evolutionary mismatch turning into an advantage for a population. Most mismatch diseases are caused by changes in the environment which change how the body functions. In other words, the current first-world diet is correlated very highly with diseases of civilization and drives most of the mismatch diseases. Most likely, you will die from one of these mismatch diseases.

If you’re born in a hotter environment, you will have more sweat glands than if you were born in a cooler environment. If you grow up eating soft, processed food, your face will be smaller than if you ate harder foods. These are two ways in which ‘cultural evolution’ (cultural change) has an effect on how the human body grows and adapts to certain stimuli based on the environment around it.

The largest cause of the difference in disease rates between industrialized peoples and those in hunter-gatherer societies is shifts in life history. As our life spans increased through modernization, so too did our chance of acquiring more diseases. Of course living longer affects how many children you have, but it also raises your chances of acquiring an evolutionary mismatch disease and your chances of dying from one.

Daniel Lieberman writes on page 190 of his book The Story of the Human Body:

A typical hunter-gatherer adult female will manage to collect 2,000 calories a day and a male can hunt between 3,000 and 6,000 calories a day. (24) A hunter-gatherer group’s combined efforts yield just enough food to feed small families. In contrast, a household of early Neolithic farmers from Europe using solely manual labor before the invention of the plow could produce an average of 12,800 calories per day over the course of a year, enough to feed families of six. (25) In other words, the first farmers could double their family size.

Thusly, you can see how evolutionary mismatches would occur with the advent of an agricultural diet that we didn’t evolve to be accustomed to. This is one of the biggest examples of the negative effects of agriculture, our inability to adapt quickly to our new diets which then accelerated after the Industrial Revolution. Further, hunter-gatherers will eat anything edible while agricultural societies will largely eat only what they grow. This would have huge implications for farmers if a few pests ruined their crops since they relied on a few crops to survive.

The thing about farming is that the Agricultural Revolution increased population size and also made that population largely sedentary. This, then, led to higher rates of disease, as larger populations foster new kinds of infectious diseases. Large populations didn’t happen until the advent of farming, and with it came the first plagues. The first farming villages were small, but “as the Reverend Malthus pointed out in 1798, even modest increases in a population’s birthrate will cause rapid increases in overall population size in just a few generations.” (Lieberman, 2013: 197) So even small increases in population size would cause a boom in future generations, which in turn would drive disease acquisition and plagues in that new and stationary society.
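To make the Malthus point concrete, here is a minimal sketch of compound growth per generation. The village size and growth rates are hypothetical numbers picked only for illustration; they are not figures from Lieberman or Malthus, and the function names are my own.

```python
import math


def project_population(initial, growth_per_generation, generations):
    """Return the population size at each generation under constant
    compound growth (hypothetical inputs, illustration only)."""
    sizes = [float(initial)]
    for _ in range(generations):
        # Each generation is the previous one scaled by (1 + growth rate).
        sizes.append(sizes[-1] * (1.0 + growth_per_generation))
    return sizes


def generations_to_double(growth_per_generation):
    """Number of generations needed for the population to double."""
    return math.log(2) / math.log(1.0 + growth_per_generation)


if __name__ == "__main__":
    # Assumed example: a village of 100 people growing 10% per generation.
    for generation, size in enumerate(project_population(100, 0.10, 10)):
        print(generation, round(size))
    print(round(generations_to_double(0.10), 1), "generations to double")
```

Even a modest per-generation increase compounds, which is the mechanism the quote describes: the growth rate stays small while the absolute population keeps climbing.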

Lieberman further writes on pages 199-200:

Not surprisingly, farming ushered in an era of epidemics, including tuberculosis, leprosy, syphilis, plague, smallpox and influenza. (44) This is not to say that hunter-gatherers did not get sick, but before farming, human societies primarily suffered from parasites such as lice, pinworms they acquired from contaminated food, and viruses or bacteria, such as herpes simplex, which they got from contact with mammals. (45) Diseases such as malaria and yaws (the nonvenereal precursor of syphilis) were probably also present among hunter-gatherers, but at much lower rates than in farmers. In fact, epidemics could not exist prior to the Neolithic because hunter-gatherer populations are below one person per square kilometer, which is below the threshold necessary for virulent diseases to spread. Smallpox, for example, is an ancient viral disease that humans apparently acquired from monkeys or rodents (the disease’s origins are unresolved) that was not able to spread appreciably until the growth of large, dense settlements. (46)

Moreover, another evolutionary mismatch is the lack of sanitation that comes with stationary societies. Hunter-gatherers could just go and defecate in a bush, whereas with the advent of civilization, waste and refuse began to pile up in the area. As noted above, when farmers clear space for irrigation to plant crops, this introduces mosquitoes into the area which then causes more disease. Furthermore, we have also acquired about 50 diseases from living near animals (Lieberman, 2013: 201). There are more than 100 evolutionary mismatch diseases that agriculture has brought to humanity.

We can compare disease rates of people in industrialized societies and people in modern-day hunter-gatherer societies. In his 2008 book Good Calories, Bad Calories, Gary Taubes documents numerous instances of hunter-gatherer societies that have no to low rates of the same modern diseases that we have:

In 1914, Hoffman himself had surveyed physicians working for the Bureau of Indian Affairs. “Among some 63,000 Indians of all tribes,” he reported, “there occurred only 2 deaths from cancer as medically observed from the year 1914.” (Taubes, 2008: 92)

“There are no known reasons why cancer should not occasionally occur among any race of people, even though it be below the lowest degree of savagery and barbarism,” Hoffman wrote. (Taubes, 2008: 92)

“Granting the practical difficulties of determining with accuracy the causes of death among the non-civilized races, it is nevertheless a safe assumption that the large number of medical missionaries and other trained medical observers, living for years among native races throughout the world, would long ago have provided a substantial basis of fact regarding the frequency of malignant disease among the so-called “uncivilized” races, if cancer were met with among them to anything like the degree common to practically all civilized countries. Quite the contrary, the negative evidence is convincing that in the opinion of qualified medical observers cancer is exceptionally rare among the primitive peoples.” (Taubes, 2008: 92)

These reports, often published in the British Medical Journal, The Lancet or local journals like the East African Medical Journal, would typically include the length of service the author had undergone among the natives, the size of the local native population served by the hospital in question, the size of the local European population, and the number of cancers involved in both. F.P. Fouch, for instance, district surgeon of the Orange Free State in South Africa, reported to the BMJ in 1923 that he had spent six years at a hospital that served fourteen thousand natives. “I never saw a single case of gastric or duodenal ulcer, colitis, appendicitis, or cancer in any form in a native, although these diseases were frequently seen among the white or European population.” (Taubes, 2008: 92)

As a result of these modern processed foods, noted Hoffman, “far-reaching changes in bodily functioning and metabolism are introduced which, extending over many years, are the causes or conditions predisposing to the development of malignant new growths, and in part at least explain the observed increase in cancer death rate of practically all civilized and highly urbanized countries.” (Taubes, 2008: 96)

The preponderance of evidence shows that these people have low rates of disease that are endemic to our societies due to the advent of agriculture. There is one large difference between hunter-gatherer societies and industrialized ones: the type and amount of food we eat.

Along with the boom of agriculture, we see a slight decrease in height the longer people live in these types of societies. As the Neolithic began 11,500 years ago, height increased about 1.5 inches for males and slightly less for females. But around 7,500 years ago, stature began to decrease and we begin to see evidence of nutritional stress and skeletal markers of disease. There is evidence that as maize was introduced into eastern Tennessee about 1,000 years ago, a decrease of .87 inches in men and 2.4 inches in women was seen. Further, the height of early farmers in China and Japan decreased by 3.1 inches as rice farming progressed, with similar height decreases being seen in Mesoamerica in men (2.2 inches) and women (3.1 inches).

Anti-hereditarian Jared Diamond asks the question “Was farming worth it?” in which he writes:

With agriculture came the gross social and sexual inequality, the disease and despotism, that curse our existence.

The first two things he brings up are pretty Marxist in nature, though they are true. He implies that agriculture causes so-called ‘sexual inequalities’ in which women are made ‘beasts of burden’, made to do the work while men walk by ’empty handed’. This seems to be one negative to a society that is, supposedly, smarter than Europeans.

Regular readers may remember me criticizing Andrew Anglin and his stance on the paleo diet, with how it’s ‘how European man evolved to eat’. However, I am a data-driven person and I try to not let any bias get involved in my thought processes. I now do believe that we should eat a diet that closely mimics that of our hunter-gatherer ancestors. Though we shouldn’t go overboard like certain people in the paleo community, we should be mindful of the quality of the food we eat, as doing so will greatly increase our life expectancy along with our quality of life. Indeed, researchers have proposed that we should adopt diets that are close in composition to what our hunter-gatherer ancestors ate in order to battle diseases of civilization. Based on what I’ve read over the past few months, I am inclined to agree. Indeed, evidence for this is seen in a sample of ten Australian Aborigines who were introduced back to their traditional lifestyle (O’Dea, 1984). In a 7 week period, they showed improvement in carbohydrate and lipid metabolism, effectively becoming diabetes-free in almost 2 months.

In sum, there were obviously both positive and negative effects on human life due to the advent of agriculture (leaning more towards negative). These range from diseases to increased population size, to ‘social inequalities’ to higher rates of obesity (this evolutionary mismatch will be extensively covered in the future) to a whole myriad of other diseases. These then lower the quality of life of the individual afflicted. However, the rates of these diseases are low to non-existent in hunter-gatherer societies due to them being nomadic and eating more plentiful foods. Agricultural societies become dependent on a few staple crops, so when an epidemic occurs, there is mass death since they do not know how to subsist on anything but what they have become accustomed to. The advent of agriculture leads to a decrease in stature as well as brain size. Further, agriculture and the processed foods that came with it caused us to become more susceptible to obesity, which was further exacerbated by the industrial revolutions and the ‘nutritional guidelines’ of the 60s and 70s that led to higher rates of coronary heart disease. It is the lifestyle change from agriculture that we have not adapted to yet that causes these diseases of civilization that shorten our life expectancies. I do now believe that all people should eat a diet as close to a hunter-gatherer diet as possible, as that’s what the preponderance of evidence shows.

By the way, to my knowledge, contrary to what The Alternative Hypothesis says, there are no differences in carbohydrate metabolism between races (save for a few populations such as the Pima).

Why Are Humans Here?

1600 words

Why are humans here? No, I’m not going to talk about any gods being responsible for our placement on this planet, though some extraterrestrial phenomena do play a part in why we are here today. The story of how and why we are here is extremely fascinating, because we are here only by chance, not by any divine purpose.

To understand why we are here, we first need to know what we evolved from and where this organism evolved. The Burgess Shale is a limestone quarry formed after the events of the Cambrian explosion. In the Shale are the remnants of an ancient sea that had more varieties of life than today’s modern oceans. The Shale is the best record of Cambrian fossils that we currently have. Preserved in the Shale are a wide variety of creatures. One of these creatures is our ancestor, the first chordate. Its name: Pikaia gracilens.

Pikaia is the only fossil from the Burgess Shale we have found that is a direct ancestor of humans. Now think about the Burgess decimation and the odds of Pikaia surviving. If this one little one and a half inch organism didn’t survive the Burgess decimation, everything you see around you today would not be here. By chance, we humans are here today due to the very unlikely survival of Pikaia. Stephen Jay Gould wrote a whole book on the Burgess Shale and ended his book Wonderful Life: The Burgess Shale and the Nature of History (1989: 323) as follows:

And so, if you wish to ask the question of the ages—why do humans exist?—a major part of that answer, touching those aspects of the issue that science can touch at all, must be: because Pikaia survived the Burgess decimation. This response does not cite a single law of nature; it embodies no statement about predictable evolutionary pathways, no calculation of probabilities based on general rules of anatomy or ecology. The survival of Pikaia was a contingency of “just history.” I do not think that any “higher” answer can be given, and I cannot imagine that any resolution could be more fascinating.

The survival of organisms during a mass extinction may be strongly predicated on chance (Mayr, 1964: 121). The Burgess decimation is but one of five mass extinction events in earth’s history. Let’s say we could wind back life’s tape to the very beginning and let it play out again. At the end of the tape, would we see something familiar or completely ‘alien’? I’m betting on it being something ‘alien’, since we know that the survival of certain organisms is paramount to why Man is here today. Indeed, biochemist Nick Lane, author of the book The Vital Question: Evolution and the Origins of Complex Life (2015), agrees and writes on page 21:

Given gravity, animals that fly are more likely to be lightweight, and possess something akin to wings. In a more general sense, it may be necessary for life to be cellular, composed of small units that keep their insides different from the outside world. If such constraints are dominant, life elsewhere may closely resemble life on earth. Conversely, perhaps contingency rules – the make-up of life depends on the random survivors of global accidents such as the asteroid impact that wiped out the dinosaurs. Wind back the clock to Cambrian times, half a billion years ago, when animals first exploded into the fossil record, and let it play forwards again. Would that parallel world be similar to our own? Perhaps the hills would be crawling with giant terrestrial octopuses.

I believe contingency does rule: we are the survivors of global accidents. Even survival during the asteroid impact that killed the dinosaurs 65 million years ago, and its ensuing effects, was based on chance: the chance that the mammalian critters were small enough and could find enough sustenance to survive while the big-bodied dinosaurs died out.

Let’s say one day someone discovers how to build a lab model that mimics the conditions of the early earth to a tee. Let’s also say that 1 month is equal to 1 billion years. In close to 5 months, the experiment will be finished. Will what we see in this experiment mirror what we see today, or will it be something completely different, completely alien? Stephen Jay Gould writes on page 323 of Wonderful Life:

Wind the tape of life back again to Burgess times, and let it play again. If Pikaia does not survive in the replay, we are wiped out of future history—all of us, from shark to robin to orangutan. And I don’t think that any handicapper, given Burgess evidence known today, would have granted very favorable odds for Pikaia.

Why should life play out the exact same way if we had the ability to wind back the tape of life?

Another aspect of our evolution and why we are here is the tiktaalik, the best representative for a “transitional species between fish and land-dwelling tetrapods”. Tiktaalik had the unique ability to prop itself up out of the water to scout for food and predators. Tiktaalik had the beginnings of arms, which it used to prop itself up out of the water. Due to the way its fins were structured, it had the ability to walk on the seabed, and eventually land. This one ancestor of ours began to gain the ability to breathe air and transition to living on land. If all tiktaaliks had died out in a mass extinction, we, again, would not be here. The exclusion of certain organisms from history then excludes us from the future.

And now, of course, with talk of how and why we are here, I must discuss the notion of ‘evolutionary progress’. Surely, to say that there is any type of ‘progress’ to evolution, given what we know about certain organisms’ chance survival, seems ludicrous. The commonly held notion of the ‘ladder of progress’, the scala naturae, is still prominent both in evolutionary biology and modern-day life. There is an implicit assumption that there must be some straight line from single-celled organisms to Man, and that we are the eventual culmination of the evolutionary process. However, if Pikaia had not survived the Burgess decimation, a lot of the animals you see around you today, including us, would not be here.

If dinosaurs had not died out, we would not be here today. That chance survival of small shrew-like mammals during the extinction event 65 mya is another reason why we are here. Stephen Jay Gould (1989) writes on page 318:

If mammals had arisen late and helped to drive dinosaurs to their doom, then we could legitimately propose a scenario of expected progress. But dinosaurs remained dominant and probably became extinct only as a quirky result of the most unpredictable of all events—a mass dying triggered by extraterrestrial impact. If dinosaurs had not died in this event, they would probably still dominate the large-bodied vertebrates, as they had for so long with such conspicuous success, and mammals would still be small creatures in the interstices of their world. This situation prevailed for one hundred million years, why not sixty million more? Since dinosaurs were not moving towards markedly larger brains, and since such a prospect may lay outside the capability of reptilian design (Jerison, 1973; Hopson, 1977), we must assume that consciousness would not have evolved on our planet if a cosmic catastrophe had not claimed the dinosaurs as victims. In an entirely literal sense, we owe our existence, as large reasoning mammals, to our lucky stars.

He also writes on page 320:

Run the tape again, and let the tiny twig of Homo sapiens expire in Africa. Other hominids may have stood on the threshold of what we know as human possibilities, but many sensible scenarios would never generate our level of mentality. Run the tape again, and this time Neanderthal perishes in Europe, and Homo erectus in Asia (as they did in our world). The sole surviving stock, Homo erectus in Africa, stumbles along for a while, even prospers, but does not speciate and therefore remains stable. A mutated virus then wipes Homo erectus out, or a change in climate reconverts Africa into an inhospitable forest. One little twig on the mammalian branch, a lineage with interesting possibilities that were never realized, joins the vast majority of species in extinction. So what? Most possibilities are never realized, and who will know the difference?

Arguments of this form led me to the conclusion that biology’s most profound insight to human nature, status and potential lies in the simple phrase, the embodiment of contingency: Homo sapiens is an entity, not an idea.

In any type of rewind scenario, any little nudge, any little difference in the rewind would change the fate of the planet. Thusly, contingency rules.

So the answer to the question of why humans are here doesn’t have any mystical or religious answer. It’s as simple as “No Pikaia, no us.” Why we are here is highly predicated on chance and if any of our ancestors had died in the past, Homo sapiens would not be here today. Knowing what we know about the Burgess Shale shows how the concept of ‘progress’ in biology is ridiculous. Rewinding the tape of life will not lead to our existence again, and some other organism will rule the earth but it would not be us. The answer to why we are here is “just history”. I don’t think any other answer to the question is as interesting as cosmic and terrestrial accidents. That just makes our accomplishments as a species even more special.

How Does the Increasingly Diverse American Landscape Affect White Americans’ Racial Attitudes?

1700 words

Last month I wrote about how Trump won the election because white Americans’ exposure to diversity caused them to support Trump and his anti-immigration policies over Clinton and Sanders. That is, whites high in racial/ethnic identification who were exposed to more diversity, irrespective of political leaning, would vote for Trump for President and not Clinton or Sanders. It is commonly said that more diversity will increase tolerance for the out-group, and all will be well. But is this true?

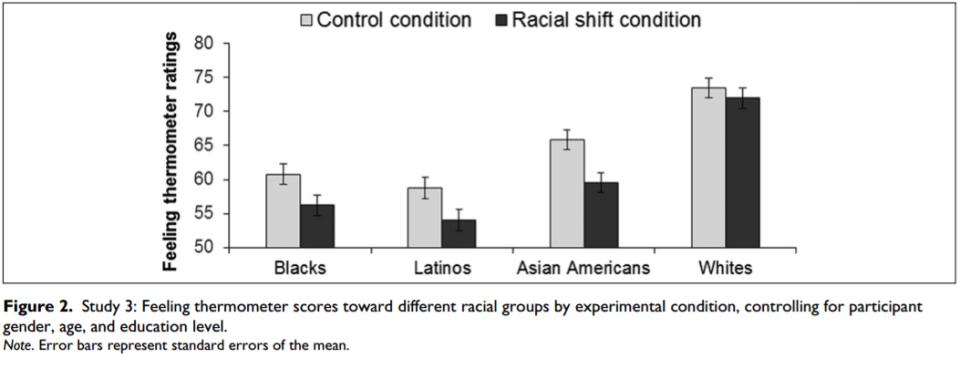

Craig and Richeson (2014) explored how the racial shift in America affects whites’ feelings towards the peoples replacing whites (‘Hispanic’/Latino populations) as well as the feelings of whites towards other minority groups that are not replacing them in the country. Interestingly, whites in the group exposed to the racial shift showed more pro-white, anti-minority bias as well as a preference for spaces and interactions with their own kind over others. Moreover, negative feelings towards blacks and Asians were seen, two groups that are not replacing white Americans.

White Canadians who were exposed to a graph showing that whites would be a projected minority “perceived greater in-group threat” leading to the expression of “somewhat more anger toward and fear of racial minorities.” East Asians are showing the most population growth in Canada. Relaying this information to whites has them express less warmth towards East Asian Canadians.

In their first study (n=86, 44 shown the racial shift and 42 shown current U.S. demographics), participants read the title of a newspaper article provided to them. One article was titled “In a Generation, Ethnic Minorities May Be the U.S. Majority”, whereas the other was titled “U.S. Census Bureau Releases New Estimates of the US Population by Ethnicity.” They were then asked to respond to statements such as “I would rather work alongside people of my same ethnic origin,” and “It would bother me if my child married someone from a different ethnic background.” Whites who read the newspaper article showing ethnic replacement showed more racial bias than those who read about current U.S. demographics. Whites exposed to projected demographics were more likely to prefer settings and interactions with other whites compared to the group who read current demographics.

In study 2a (n=28, 14 Dutch participants and 14 American participants, 14 exposed to the U.S. racial shift, 14 exposed to the Dutch racial shift), those in the U.S. racial shift category showed more pro-white/anti-Asian bias than participants in the Dutch racial shift category. Those who were exposed to the changing U.S. ethnic landscape were more likely to show pro-white/anti-black bias than participants exposed to the Dutch racial shift (study 2b, n=25, 14 U.S. racial shift, 11 Dutch racial shift). In other words, when the changing U.S. racial/ethnic population was made salient, whites were, again, more likely to be pro-white and anti-minority than when exposed to a racial demographic shift in a foreign country (the Netherlands). Whites, then, exposed to more racial diversity will show more automatic bias towards minorities, especially whites who live around a lot of blacks and ‘Hispanics’. Making whites aware of the changing racial demographics in America had them express automatic racial bias towards all minority groups, even minority groups not responsible for the racial shift.

In study 3 (n=620, 317 women, 76.3% White, 9.0% Black, 10.0% Latino, 4.7% other race), the researchers tested whether attitudes toward different minority groups may be affected by exposure to the racial shift. Study 3 specifically focused on whites (n=415, 212 women, median age 48.8, a nationally representative sample of white Americans). Half of the participants were shown information about the projected ethnic shift in America while the other half were given a news article on geographic mobility in America (individuals who move in a given year). They were asked their feelings on the following statements:

“the American way of life is seriously threatened” and were asked to indicate their view of the trajectory of American society (1 = American society is getting much worse every year, 5 = American society is getting much better every year); these two items were standardized and averaged to create an index of system threat (r = .64). To assess system justification, we asked participants to indicate their agreement (1 = strongly agree, 7 = strongly disagree) to the statement “American society is set up so that people usually get what they deserve.”

They were also asked the following questions on how certain they were of America’s social future:

“If they increase in status, racial minorities are likely to reduce the influence of White Americans in society.” The racial identification question asked participants to indicate their agreement (1 = strongly agree, 7 = strongly disagree) with the following statement, “My opportunities in life are tied to those of my racial group as a whole.”

The researchers had the participants read the article about the impending racial shift in America and had them fill out “feeling thermometers” on how they felt about differing racial groups in America (blacks, whites, Asians and ‘Hispanics’) with 1 being cold and 100 being hot. Whites reported the most positivity towards their own group, followed by Asians, blacks and showing the least positivity towards ‘Hispanics’ (the group projected to replace whites in 25 years). Figure 2 also shows that whites don’t show the same negative biases they would towards other minorities in America, most likely due to the ‘model minority‘ status.

So the researchers showed that making the racial shift salient led more white Americans to show negative attitudes towards minorities, specifically ‘Hispanics’. This was brought about by whites’ “concerns of lose of societal status.” When whites begin to notice demographic changes, their attitudes towards minorities will change, most notably their attitudes towards blacks and ‘Hispanics’ (which is due to the amount of crime committed by both groups, and is why whites show favoritism towards Asians, in my opinion). Overall, it was shown in a nationally representative sample of whites that showing the changing demographics in the country leads to more negative responses towards minority groups. This is due to the perceived threat to whites’ group status, which leads to more out-group bias.

These four studies report empirical evidence that contrary to the belief of liberals et al—that an increasingly diverse America will lead to more acceptance—more exposure to diversity and the changing racial demographics will have whites show more negative attitudes towards minority groups, most notably ‘Hispanics’, the group projected to become the majority by 2042. The authors write:

Consistent with this prior work, the present research offers compelling evidence that the impending so-called “majority-minority” U.S. population is construed by White Americans as a threat to their group’s position in society and increases their expression of racial bias on both automatically activated and selfreport attitude measures.

Interestingly, the authors also write:

That is, the article in the U.S. racial shift condition accurately attributed a large percentage of the population shift to increases in the Latino/Hispanic population, yet, participants in this condition expressed more negative attitudes toward Black Americans and Asian Americans (Study 3) as well as greater automatic bias on both a White-Asian and a White-Black IAT (Studies 2a and 2b). These findings suggest that the information often reported regarding the changing U.S. racial demographics may lead White Americans to perceive all racial minority groups as part of a monolithic non-White group.

You can see this from the rise of the alt-right. Whites, when exposed to the reality of the demographic shift in America, will begin to show more pro-white attitudes while derogating minority out-groups. It is important to note the implications of these studies. One could look at these studies, and rightly say, that as America becomes more diverse that ethnic tensions will increase. Indeed, this is what we are now currently seeing. Contrary to what people say about diversity “being our strength“, it will actually increase ethnic hostility in America and lead towards evermore increasing strife between ethnic groups in America (that is ever-rising due to the current political and social climate in the country). Diversity is not our “strength”—it is, in fact, the opposite. It is our weakness. As the country becomes more diverse we can expect more ethnic strife between groups, which will lower the quality of life for all ethnies, while making whites show more negative attitudes towards all minority groups (including Asians and blacks, but less so than ‘Hispanics’) due to group status threat. The authors write in the discussion:

That is, these studies revealed that White Americans for whom the U.S. racial demographic shift was made salient preferred interactions/settings with their own racial group over minority racial groups, expressed more automatic pro-White/antiminority bias, and expressed more negative attitudes toward Latinos, Blacks, and Asian Americans. The results of these latter studies also revealed that intergroup bias in response to the U.S. racial shift emerges toward racial/ethnic minority groups that are not primary contributors to the dramatic increases in the non-White (i.e., racial minority) population, namely, Blacks and Asian Americans. Moreover, this research provides the first evidence that automatic evaluations are affected by the perceived racial shift. Taken together, these findings suggest that rather than ushering in a more tolerant future, the increasing diversity of the nation may actually yield more intergroup hostility.

Thinking back to Rushton’s Genetic Similarity Theory, we can see why this occurs. Our genes are selfish and want to replicate with other similar genes. Thus, whites would become less tolerant of minority groups since they are less genetically similar to them. This would then be expressed in their attitudes towards minority groups, specifically ‘Hispanics’, as that ethny will most likely become the majority and overtake the white majority in 25 years. This is GST on steroids. Once whites realize the reality of the situation of increasing diversity in America, along with their status in the country as a whole, they will then show more negative bias towards minority out-groups.

All in all, the more whites are exposed to diversity in the social context, as well as to the reality of the ethnic demographic shift in 25 years, the more likely they will be to show negative attitudes towards all American ethnies (though less negative attitudes towards Asians, due to being less criminal, in my opinion). As the country becomes less white, so too will the whites in America become less tolerant of all minorities and start banding together for pro-white interests, showing that diversity is not our strength. This, in reality, is exactly what liberals do not want: whites banding together and showing less favoritism towards the out-group. However, this is what occurs in countries that increasingly become diverse.

Neurons By Race

1100 words

With all of my recent articles on neurons and brain size, I’m now asking the following question: do neurons differ by race? The races of man differ on most all other variables, why not this one?

As we would have it, there are racial differences in total brain neurons. In 1970, an anti-hereditarian (Tobias) estimated the number of “excess neurons” available to different populations for processing bodily information, which Rushton (1988; 1997: 114) averaged to find: 8,550 for blacks, 8,660 for whites and 8,900 for Asians (in millions of excess neurons). A difference of 100-200 million neurons would be enough to explain away racial differences in achievement, for one. Two, these differences could also explain differences in intelligence. Rushton (1997: 133) writes:

This means that on this estimate, Mongoloids, who average 1,364 cm³ have 13.767 billion cortical neurons (13.767 × 10⁹). Caucasoids who average 1,347 cm³ have 13.665 billion such neurons, 102 million less than Mongoloids. Negroids who average 1,267 cm³, have 13.185 billion cerebral neurons, 582 million less than Mongoloids and 480 million less than Caucasoids.

Of course, Rushton’s citation of Jerison, I will leave alone now that we know that the encephalization quotient has problems. Rushton (1997: 133) writes:

The half-billion neuron difference between Mongoloids and Negroids are probably all “excess neurons” because, as mentioned, Mongoloids are often shorter in height and lighter in weight than Negroids. The Mongoloid-Negroid difference in brain size across so many estimation procedures is striking

Of course, small differences in brain size would translate to differences in neuronal count (in the hundreds of millions), which would then affect intelligence.

Moreover, whites are reported to have a greater volume in their prefrontal cortex (Vint, 1934). Consider Herculano-Houzel’s favorite definition of intelligence, from MIT physicist Alex Wissner-Gross:

The ability to plan for the future, a significant function of prefrontal regions of the cortex, may be key indeed. According to the best definition I have come across so far, put forward by MIT physicist Alex Wissner-Gross, intelligence is the ability to make decisions that maximize future freedom of action—that is, decisions that keep most doors open for the future. (Herculano-Houzel, 2016: 122-123)

You can see the difference in behavior and action in the races; how one race has the ability to make decisions to maximize future ability of action—and those peoples with a smaller prefrontal cortex won’t have this ability (or it will be greatly hampered due to its small size and amount of neurons it has).

With a smaller, less developed frontal lobe and fewer overall neurons in it than a brain belonging to a European or Asian, this may then account for overall racial differences in intelligence. The few hundred million difference in neurons may be the missing piece to the puzzle here. Neurons transmit information to other nerves and muscle cells. Neurons have cell bodies, axons and dendrites. The more neurons in the brain (especially when packed into a smaller brain, that is, a higher neuron packing density), the better connectivity you have between different areas of the brain, allowing for fast reaction times (Asians beat whites who beat blacks, Rushton and Jensen, 2005: 240).

Remember how I said that the brain uses a certain amount of watts; well, I’d assume that the different races would use differing amounts of power for their brains due to the differing number of neurons in them. Their brain is not as metabolically expensive. Larger brains are more intelligent than smaller brains ONLY BECAUSE there is a higher chance for there to be more neurons in the larger brain than the smaller one. The average cranial capacities and estimated neuron counts are as follows: blacks, 1,267 cc and 13,185 million neurons; whites, 1,347 cc and 13,665 million neurons; and Asians, 1,364 cc and 13,767 million neurons (Rushton and Jensen, 2005: 265, table 3). So as you can see, these differences are enough to account for racial differences in achievement.

A bigger brain would mean, more likely, more neurons which would then be able to power the brain and the body more efficiently. The more neurons one has, the more likely it is that they are intelligent as they have more neuronal pathways. The average cranial capacities of the races show that there are neuronal differences between them, and these neuronal differences are then the cause for racial differences, with the brain size itself being only a proxy, not an actual indicator of intelligence. The brain size doesn’t matter as much as the amount of neurons in the brain.

A difference in the brain of 100 grams is enough to account for 550 million cortical neurons (!!) (Jensen, 1998b: 438). But that ignores sex differences and neuronal density. However, I’d assume that there will be at least small differences in neuron count, especially from Rushton’s data from Race, Evolution and Behavior. Jensen (1998) also writes on page 439:

I have not found any investigation of racial differences in neuron density that, as in the case of sex differences, would offset the racial difference in brain weight or volume.

So neuronal density by brain weight is a great proxy.

Racial differences in intelligence don’t come down to brain size; they come down to total neuron amount in the brain; differences in size in certain parts of the brain critical to intelligence and amount of neurons in those critical portions of the brain. I’ve yet to come across a source talking about the different number of neurons in the brain by race, but when I do I will update this article. From what we know, we can make the assumption that blacks have less packing density as well as a smaller number of neurons in their PFC and cerebral cortex. Psychopathy is associated with abnormalities in the PFC; maybe, along with less intelligence, blacks would be more likely to be psychopathic? This also echoes what Richard Lynn says about Race and Psychopathic Personality:

There is a difference between blacks and whites—analogous to the difference in intelligence—in psychopathic personality considered as a personality trait. Both psychopathic personality and intelligence are bell curves with different means and distributions among blacks and whites. For intelligence, the mean and distribution are both lower among blacks. For psychopathic personality, the mean and distribution are higher among blacks. The effect of this is that there are more black psychopaths and more psychopathic behavior among blacks.

Neuronal differences and size of the PFC more than account for differences in psychopathy rates as well as differences in intelligence and scholastic achievement. This could, in part, explain the black-white IQ gap. Since the total number of neurons in the brain dictates, theoretically speaking, how well an organism can process information, and blacks have a smaller PFC (related to future time preference), and since blacks have fewer cortical neurons than whites or Asians, this is one large reason why blacks are less intelligent, on average, than the other races of Man.

How Intelligent Were Our Hominin Ancestors?

3000 words