Category Archives: IQ

“Definitions” of ‘Intelligence’ and its ‘Measurement’

1750 words

What ‘intelligence’ is and how, and if, we can measure it has puzzled us for the better part of 100 years. A few surveys have been done on what ‘intelligence’ is, and there has been little agreement on what it is and even if IQ tests measure ‘intelligence.’ Richardson (2002: 284) noted that:

Of the 25 attributes of intelligence mentioned, only 3 were mentioned by 25 per cent or more of respondents (half of the respondents mentioned ‘higher level components’; 25 per cent mentioned ‘executive processes’; and 29 per cent mentioned ‘that which is valued by culture’). Over a third of the attributes were mentioned by less than 10 per cent of respondents (only 8 per cent of the 1986 respondents mentioned ‘ability to learn’).

As can be seen, even IQ-ists today cannot agree upon a definition. Indeed, even Ian Deary admits that “There is no such thing as a theory of human intelligence differences—not in the way that grown-up sciences like physics or chemistry have theories” (quoted in Richardson, 2012). (Also note that attempts at establishing validity are circular, relying on correlations with other, similar tests; Richardson and Norgate, 2015; Richardson, 2017b.)

Linda Gottfredson, University of Delaware sociologist and well-known hereditarian, is a staunch defender of JP Rushton (Gottfredson, 2013) and the hereditarian hypothesis (Gottfredson, 2005, 2009). Her ‘definition’ of intelligence is one of the most often cited ones; e.g., Gottfredson et al (1993: 13) note that (my emphasis):

Intelligence is a very general mental capability that, among other things, involves the ability to reason, plan, solve problems, think abstractly, comprehend complex ideas, learn quickly and learn from experience. It is not merely book learning, a narrow academic skill, or test-taking smarts. Rather, it reflects a broader and deeper capability for comprehending our surroundings-“catching on,” “making sense” of things, or “figuring out” what to do.

So ‘intelligence’ is “a very general mental capability”, its main ‘measure’ IQ tests (knowledge tests), but ‘intelligence’ “is not merely book learning, a narrow academic skill, or test-taking smarts.” Here’s some more hereditarian “reasoning” (which you can contrast with the hereditarian “reasoning” on race—just assume it exists). Gottfredson also argues that ‘intelligence’ or ‘g’ is learning ability. But, as Richardson (2017a: 100) notes, “it will always be quite impossible to measure ability with an instrument that depends on learning in one particular culture“—which he terms “the g paradox, or a general measurement paradox.”

Gottfredson (1997) also argues that the “active ingredient” in IQ testing is the “complexity” of the items, that is, what makes one item more difficult than another. A 3×3 matrix item, for example, is more complex than a 2×2 matrix item, and she gives some examples of analogies which she believes require a higher, more complex type of cognition to solve. (Also see Richardson and Norgate, 2014 for further critiques of Gottfredson.) Richardson responds:

The trouble with this argument is that IQ test items are remarkably simple in their cognitive demands compared with, say, the cognitive demands of ordinary social life and other activities that the vast majority of children and adults can meet adequately every day.

For example, many test items demand little more than rote reproduction of factual knowledge most likely acquired from experience at home or by being taught in school. Opportunities and pressures for acquiring such valued pieces of information, from books in the home to parents’ interests and educational level, are more likely to be found in middle-class than in working-class homes. So the causes of differences could be causes in opportunities for such learning.

The same could be said about other frequently used items, such as “vocabulary” (or word definitions); “similarities” (describing how two things are the same); “comprehension” (explaining common phenomena, such as why doctors need more training). This helps explain why differences in home background correlate so highly with school performance—a common finding. In effect, such items could simply reflect the specific learning demanded by the items, rather than a more general cognitive strength. (Richardson, 2017a: 91)

IQ-ists, of course, would then state that there is utility in such “simple-looking” test items, but we have to remember that items on IQ tests are not selected based on a theoretical cognitive model, but are selected to give the desired distributions that the test constructors want (Mensh and Mensh, 1991). “… those items in IQ tests have been selected because they help produce the expected pattern of scores. A mere assertion of complexity about IQ test items is not good enough” (Richardson, 2017a: 93). “The items selected for inclusion [on Binet’s test] were those that in the judgment of the teachers distinguished bright from dull students” (Castles, 2012: 88). It seems that all hereditarians do is “assert” or “assume” things—like the equal environments assumption (EEA), the existence of race, and now, the existence of “intelligence”. Just presuppose what you want and, unsurprisingly, you get what you wanted. The IQ-ist then triumphs that the test did its job—sorting high- and low-quality thinkers on the basis of their IQ scores. But that’s exactly the problem: prior assumptions on the nature of ‘intelligence’ and its distribution dictate the construction of the tests in question.

Mensh and Mensh (1991: 30) state that “The [IQ] tests do what their construction dictates; they correlate a group’s mental worth with its place in the social hierarchy.” That is, who is or is not “intelligent” is already presupposed. There has been ample admission of such presumptions affecting the distribution of scores, as some critics have documented (e.g., Hilliard, 2012’s documentation of test norming for two different white cultural groups in South Africa and that Terman equalized scores on his 1937 revision of the Stanford-Binet).

Herrnstein and Murray (1994: 1) write that:

That the word intelligence describes something real and that it varies from person to person is as universal and ancient as any understanding about the state of being human. Literate cultures everywhere and throughout history have had words for saying that some people are smarter than others. Given the survival value of intelligence, the concept must be still older than that. Gossip about who in the tribe is cleverest has probably been a topic of conversation around the fire since fires, and conversation, were invented.

Castles (2012: 83) responds to these assertions, stating that “the concept of intelligence is indeed a ‘brashly modern notion.’” Herrnstein and Murray, of course, are in the “Of COURSE intelligence exists!” camp, for, to them, it conferred survival advantages and so, it must exist and we can, therefore, measure it in humans.

Howe (1997), in his book IQ in Question, asks us to imagine someone being asked to construct a vanity test. Vanity, like ‘intelligence’, has no agreed-upon definition which states how it should be measured, nor anything that makes it possible to check that we are measuring the supposed construct correctly. So the one who wants to assess vanity needs to construct a test with questions he presumes test vanity. If the questions he asks relate to how others perceive vanity, then the ‘vanity test’ has been successfully constructed and the test constructor can then believe that he’s measuring “differences in” vanity. But, of course, selecting items on a test is a subjective matter; there is no objective way for this to occur. We can say, with length for instance, that line A is twice as long as line B. But we could not, then, state that person A is twice as vain as person B, nor could we say that person A is twice as intelligent as person B (on the basis of IQ scores). For what would it mean for someone to be twice as vain as someone else, or twice as intelligent as someone else?

Howe (1997: 6) writes:

The measurement of intelligence is bedeviled by the same problems that make it virtually impossible to measure vanity. It is of course possible to construct intelligence tests, and the tests can be useful in a number of ways for assessing human mental abilities, but it is wrong to assume that such tests have the capability of measuring an underlying quality of intelligence, if by ‘measuring’ we have in mind the same operations that are involved in the measurement of a physical quality such as length. A psychological test score is no more than an indication of how well someone has performed at a number of questions that have been chosen for largely practical reasons. Nothing is genuinely being measured.

But if “A psychological test score is no more than an indication of how well someone has performed at a number of questions that have been chosen for largely practical reasons”, then it follows that knowledge exposure explains outcomes in psychological test scores. Richardson (1998: 127) writes:

The most reasonable answer to the question “What is being measured?”, then, is ‘degree of cultural affiliation’: to the culture of test constructors, school teachers and school curricula. It is (unconsciously) to conceal this that all the manipulations of item selection, evasions about test validities, and searches for post hoc theoretical underpinning seem to be about. What is being measured is certainly not genetically constrained complexity of general reasoning ability as such,

Mensh and Mensh (1991: 73) note that “In reality — which is precisely the opposite of what Jensen claims it to be — test discrimination among individuals within any group is the incidental by-product of tests constructed to discriminate between groups. Because the tests’ class and racial bias ensures that some groups will be higher and others lower in the scoring hierarchy, the status of an individual member of a group is as a rule predetermined by the status of that group.”

In sum, what these tests test is what the test constructors presume (mainly, class and racial bias), so they get what they want to see. If the test does not match their presuppositions, the test gets discarded or reconstructed to fit with their biases. Thus, definitions of ‘intelligence’ will always be culturally bound; as Castles (2012: 29) puts it, “intelligence is a cultural construct, specific to a certain time and place.” The definition from Gottfredson doesn’t make sense, as the “test-taking smarts” is the main “measure” of ‘intelligence’, and so intelligence’s “main measure” is the IQ test, which presupposes the distribution of scores as developed by the test constructors (Mensh and Mensh, 1991). Herrnstein and Murray’s definition does not make sense either, as the concept of “intelligence” is a modern notion.

At best, IQ test scores measure the degree of cultural acquisition of knowledge; they do not, nor can they, measure ‘intelligence’—which is a cultural concept which changes with the times. The tests are inherently biased against certain groups; looking at the history and construction of IQ testing will make that clear. The tests are middle-class knowledge tests; not tests of ‘intelligence.’

The “World’s Smartest Man” Christopher Langan on Koko the Gorilla’s IQ

1500 words

Christopher Langan is purported to have the highest IQ in the world, at 195—though comparisons to Wittgenstein (“estimated IQ” of 190), da Vinci, and Descartes on their “IQs” are unfounded. He and others are responsible for starting the high IQ society the Mega foundation for people with IQs of 164 or above. For a man with one of the highest IQs in the world, he lived on a poverty wage at less than $10,000 per year in 2001. He has also been a bouncer for the past twenty years.

Koko is one of the world’s most famous gorillas, best known for crying when she was told her cat got hit by a car and for being friends with Robin Williams; she also apparently expressed sadness upon learning of his death. Koko’s IQ, as measured by an infant IQ test, was said to be on par with or higher than some of the (shoddy) national IQ scores from Richard Lynn (Richardson, 2004; Morse, 2008). This then prompted white nationalist/alt-right groups to compare Koko’s IQ scores with those of certain nationalities and proclaim that Koko was more ‘intelligent’ than those nationalities on the basis of her IQ score. But, unfortunately for them, the claims do not hold up.

The “World’s Smartest Man” Christopher Langan is one who falls prey to this kind of thinking. He was “banned from” Facebook for writing a post comparing Koko’s IQ scores to that of Somalians, asking why we don’t admit gorillas into our civilization if we are letting Somalian refugees into the West:

“According to the “30 point rule” of psychometrics (as proposed by pioneering psychometrician Leta S. Holingsworth), Koko’s elevated level of thought would have been all but incomprehensible to nearly half the population of Somalia (average IQ 68). Yet the nation’s of Europe and North America are being flooded with millions of unvetted Somalian refugees who are not (initially) kept in cages despite what appears to be the world’s highest rate of violent crime.

Obviously, this raises the question: Why is Western Civilization not admitting gorillas? They too are from Africa, and probably have a group mean IQ at least equal to that of Somalia. In addition, they have peaceful and environmentally friendly cultures, commit far less violent crime than Somalians…”

I presume that Langan is working off the assumption that Koko’s IQ is 95. I also presume that he has seen memes such as this one floating around:

There are a few problems with Langan’s claims, however: (1) the notion of a “30-point IQ communication” rule, that one’s own IQ, plus or minus 30 points, denotes the range within which two people can understand each other; and (2) bringing up Koko’s IQ and then comparing it to “Somalians.”

It seems intuitive to the IQ-ist that a large, 2 SD gap in IQ between people will mean that, more often than not, there will be little understanding between them if they talk, and little overlap in the kinds of interests they have. Neuroskeptic looked into the origins of the claim of the communication gap in IQ and found it to be attributed to Leta Hollingworth and elucidated by Grady Towers. Towers noted that “a leadership pattern will not form—or it will break up—when a discrepancy of more than about 30 points comes to exist between leader and led.” Neuroskeptic comments:

This seems to me a significant logical leap. Hollingworth was writing specifically about leadership, and in childen [sic], but Towers extrapolates the point to claim that any kind of ‘genuine’ communication is impossible across a 30 IQ point gap.

It is worth noting that although Hollingworth was an academic psychologist, her remark about leadership does not seem to have been stated as a scientific conclusion from research, but simply as an ‘observation’.

[…]

So as far as I can see the ‘communication range’ is just an idea someone came up with. It’s not based on data. The reference to specific numbers (“+/- 2 standard deviations, 30 points”) gives the illusion of scientific precision, but these numbers were plucked from the air.

The notion that Koko had an “elevated level of thought [that] would have been all but incomprehensible to nearly half the population of Somalia (average IQ 68)” (Langan) is therefore laughable, not only because the so-called communication gap is false, but for the simple fact that Koko’s IQ was tested using the Cattell Infant Intelligence Scales (CIIS) (Patterson and Linden, 1981: 100). It seems to me that Langan has not read the book that Koko’s handlers wrote about her, The Education of Koko (Patterson and Linden, 1981), since they describe why Koko’s score should not be compared with those of human infants, so it follows that her score cannot be compared with those of human adults.

The CIIS was developed as “a downward extension of the Stanford-Binet” (Hooper, Conner, and Umansky, 1986), and so, it must correlate highly with the Stanford-Binet in order to be “valid” (the psychometric benchmark for validity being to correlate a new test with the most up-to-date test which had assumed validity; Richardson, 1991, 2000, 2017; Howe, 1997). Hooper, Conner, and Umansky (1986: 160) note in their review of the CIIS, “Given these few strengths and numerous shortcomings, salvaging the Cattell would be a major undertaking with questionable yield. . . . Nonetheless, without more research investigating this instrument, and with the advent of psychometrically superior measures of infant development, the Cattell may be relegated to the role of an historical antecedent.” Item selection for the CIIS, as for all IQ tests, “followed a quasi-statistical approach with many items being accepted and rejected subjectively.” They state that many of the items on the CIIS need to be updated with “objective” item analysis, but, as Jensen notes, items emerge arbitrarily from the heads of the test’s constructors.

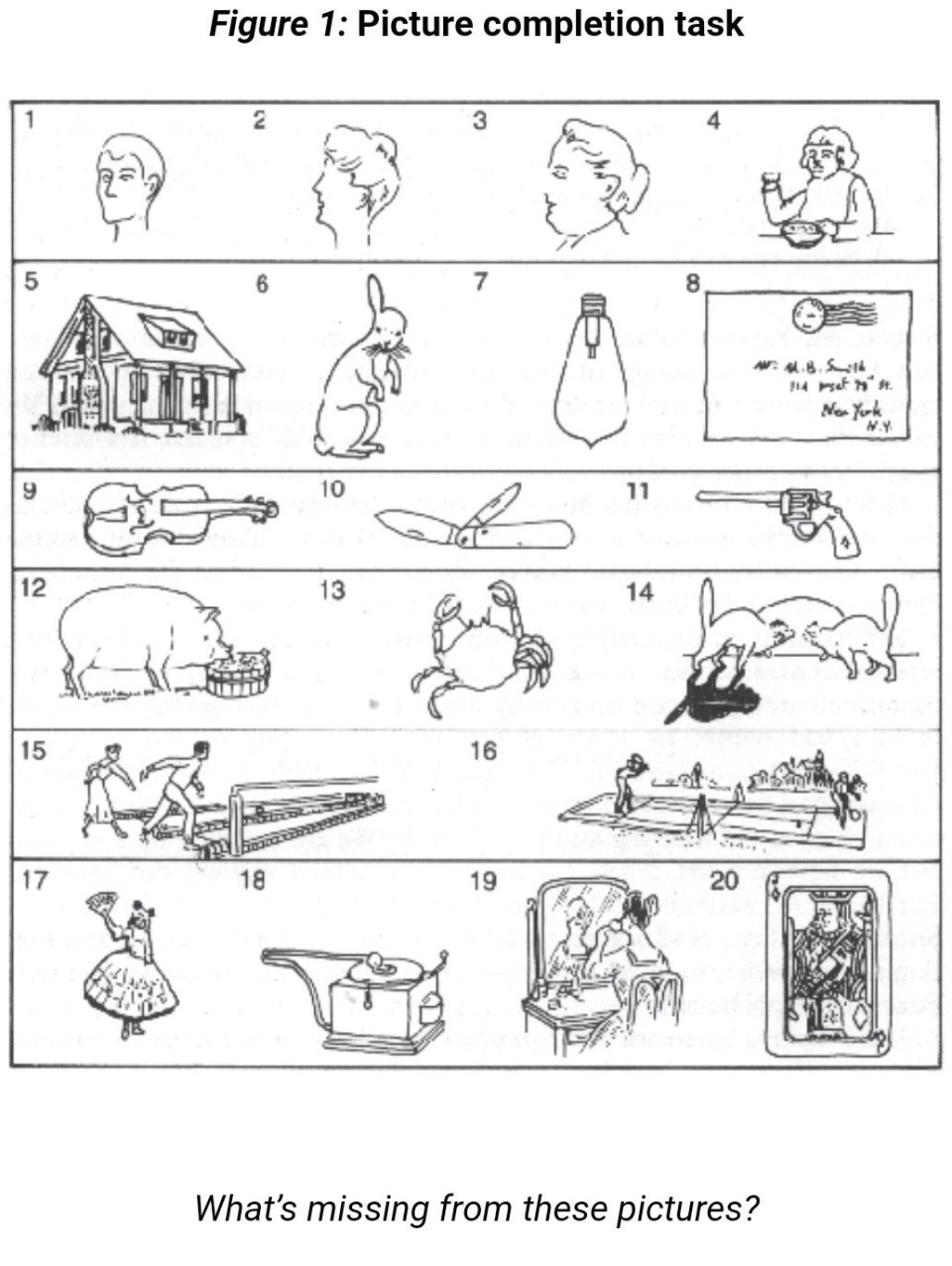

Patterson—the woman who raised Koko—notes that she “tried to gauge [Koko’s] performance by every available yardstick, and this meant administering infant IQ tests” (Patterson and Linden, 1981: 96). Patterson and Linden (1981: 100) note that Koko did better than human counterparts of her age in certain tasks over others, for example “her ability to complete logical progressions like the Ravens Progressive Matrices test” since she pointed to the answer with no hesitation.

Koko generally performed worse than children when a verbal rather than a pointing response was required. When tasks involved detailed drawings, such as penciling a path through a maze, or precise coordination, such as fitting puzzle pieces together, Koko’s performance was distinctly inferior to that of children.

[…]

It is hard to draw any firm conclusions about the gorilla’s intelligence as compared to that of the human child. Because infant intelligence tests have so much to do with motor control, results tend to get skewed. Gorillas and chimps seem to gain general control over their bodies earlier than humans, although ultimately children far outpace both in the fine coordination required in drawing or writing. In problems involving more abstract reasoning, Koko, when she is willing to play the game, is capable of solving relatively complex problems. If nothing else, the increase in Koko’s mental age shows that she is capable of understanding a number of the principles that are the foundation of what we call abstract thought. (Patterson and Linden, 1981: 100-101)

They conclude that “it is specious to compare her IQ directly with that of a human infant” since gorillas develop motor skills earlier than human infants. So if it is “specious” to compare Koko’s IQ with an infant, then it is “specious” to compare Koko’s IQ with the average Somalian—as Langan does.

There have been many critics of Koko, and similar apes, of course. Criticisms range from the claim that Koko was coaxed into signing particular words by the questions she was asked, to Robert Sapolsky stating that Patterson corrected Koko’s signs. She, therefore, would not actually know what she was signing; she was just doing what she was told. Of course, caregivers of primates with the supposed extraordinary ability for complex (humanlike) cognition will defend their interpretations of their observations since they are emotionally invested in the interpretations. Patterson’s Ph.D. research was on Koko and her supposed capabilities for language, too.

Perhaps the strongest criticism of these kinds of interpretations of Koko comes from Terrace et al (1979). Terrace et al (1979: 899) write:

The Nova film, which also shows Ally (Nim’s full brother) and Koko, reveals a similar tendency for the teacher to sign before the ape signs. Ninety-two percent of Ally’s, and all of Koko’s, signs were signed by the teacher immediately before Ally and Koko signed.

It seems that Langan has never done any kind of reading on Koko, the tests she was administered, or the problems in comparing her scores to those of humans (infants). The fact that Koko seemed to be influenced by her handlers to “sign” what they wanted her to sign, too, makes interpretations of her IQ scores problematic. For if Koko was influenced in what to sign, then we cannot trust her scores on the CIIS. The false claims of Langan are laughable knowing the truth about Koko’s IQ, what her handlers said about her IQ, and what critics have said about Koko and her sign language. In any case, Langan did not show his “high IQ” with such idiotic statements.

The History and Construction of IQ Tests

4100 words

The [IQ] tests do what their construction dictates; they correlate a group’s mental worth with its place in the social hierarchy. (Mensh and Mensh, 1991, The IQ Mythology, pg 30)

We have been attempting to measure “intelligence” in humans for over 100 years. Mental testing began with Galton and then shifted over to Binet, and from there developed into the best-known IQ tests today: the Stanford-Binet and the WAIS/WISC. But the history of IQ testing is rife with unethical conclusions derived from the tests’ use, and with those conclusions actually being carried out (e.g., the sterilization of “morons”; see Wilson, 2017’s The Eugenic Mind Project).

History of IQ testing

Any history of ‘intelligence’ testing will, of course, include Francis Galton’s contributions to the creation of psychological tests (statistical analyses and the construction of some tests, among other things). Galton was, in effect, one of the first behavioral geneticists.

Galton (1869: 37) asked “Is reputation a fair test of natural ability?”, to which he answered, “it is the only one I can employ.” Galton, for example, stated that, theoretically or intuitively, there is a relationship between reaction time and intelligence (Khodadi et al, 2014). Galton then devised tests of “reaction time, discrimination in sight and hearing, judgment of length, and so on, and applied them to groups of volunteers, with the aim of obtaining a more reliable and ‘pure’ measure of his socially judged intelligence” (Richardson, 1991: 19). But there was little to no relationship between Galton’s proposed proxies for intelligence and social class.

In 1890, Cattell, publishing in the journal Mind, coined the term “mental test” (Castles, 2012: 85). Cattell then moved to Columbia and got permission to administer his “mental tests” to all of the entering students. This was about two decades before Goddard brought the test to America; Galton and Cattell were just getting America warmed up for the testing process.

Yet others still attempted to create tests that were purported to measure intelligence, using similar kinds of parameters as Galton. For instance, Miller, 1962 provides a list (quoted in Richardson, 1991: 19):

1. Dynamometer pressure: How tightly can the hand squeeze?

2. Rate of movement: How quickly can the hand move through a distance of 30 cms?

3. Sensation areas: How far apart must two points be on the skin to be recognised as two rather than one?

4. Pressure causing pain: How much pressure on the forehead is necessary to cause pain?

5. Least noticeable difference in weight: How large must the difference be between two weights before it is reliably detected?

6. Reaction-time for sound: How quickly can the hand be moved at the onset of an auditory signal?

7. Time for naming colours: How long does it take to name a strip of ten colored papers?

8. Bisection of a 10 cm line: How accurately can one point to the centre of an ebony rule?

9. Judgment of 10 sec time: How accurately can an interval of 10 secs be judged?

10. Number of letters remembered on once hearing: How many letters, ordered at random, can be repeated exactly after one presentation?

Individuals differed on these measures, but when they were used to compare social classes, Cattell stated that they were “disappointingly low” (quoted in Richardson, 1991: 20). So-called mental tests, Richardson (1991: 20) states, were “not [a] measurement for a straightforward, objective scientific investigation. The theory was there, but it was hardly a scientific one, but one derived largely from common intuition; what we described earlier as a popular or informal theory. And the theory had strong social implications. Measurement was devised mainly as a way of applying the theory in accordance with the prejudices it entailed.”

It wasn’t until 1903 that Alfred Binet was tasked with constructing a test that identified slow learners in grade school. In 1904, Binet was appointed a member of a commission on special classes in schools (Murphy, 1949: 354). In fact, Binet constructed his test in order to limit the role of psychiatrists in making decisions on whether or not healthy but ‘abnormal’ children should be excluded from the standard material used in regular schools (Nicolas et al, 2013). (See Nicolas et al, 2013 for a full overview of the history of intelligence in Psychology and a fuller overview of Binet and Simon’s test and why they constructed it. Also see Fancher, 1985 and )

Binet constructed his tests to identify children who were not learning what the average child their age knew. But the tests had to distinguish the lazy from the mentally deficient. So in 1905, Binet teamed up with Simon, and they published their first IQ test, with items arranged from the simplest to the most difficult (but with no standardization). A few of these items include: naming objects, completing sentences, comparing lines, comprehending questions, and repeating digits. Their test consisted of 30 items, which increased in difficulty from easiest to hardest. The items were chosen on the basis of teacher assessment: the constructors checked which items discriminated between children in a way that also agreed with their presuppositions.

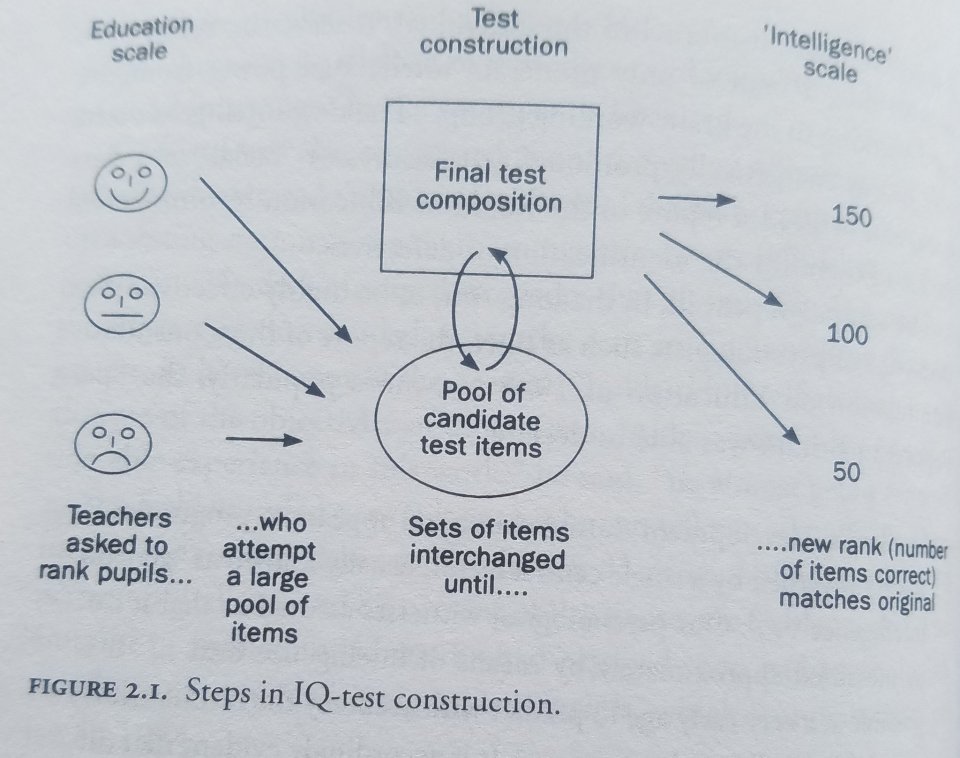

Richardson (2000: 32) discusses how IQ tests are constructed:

In this regard, the construction of IQ tests is perhaps best thought of as a reformatting exercise: ranks in one format (teachers’ estimates) are converted into ranks in another format (test scores, see figure 2.1).

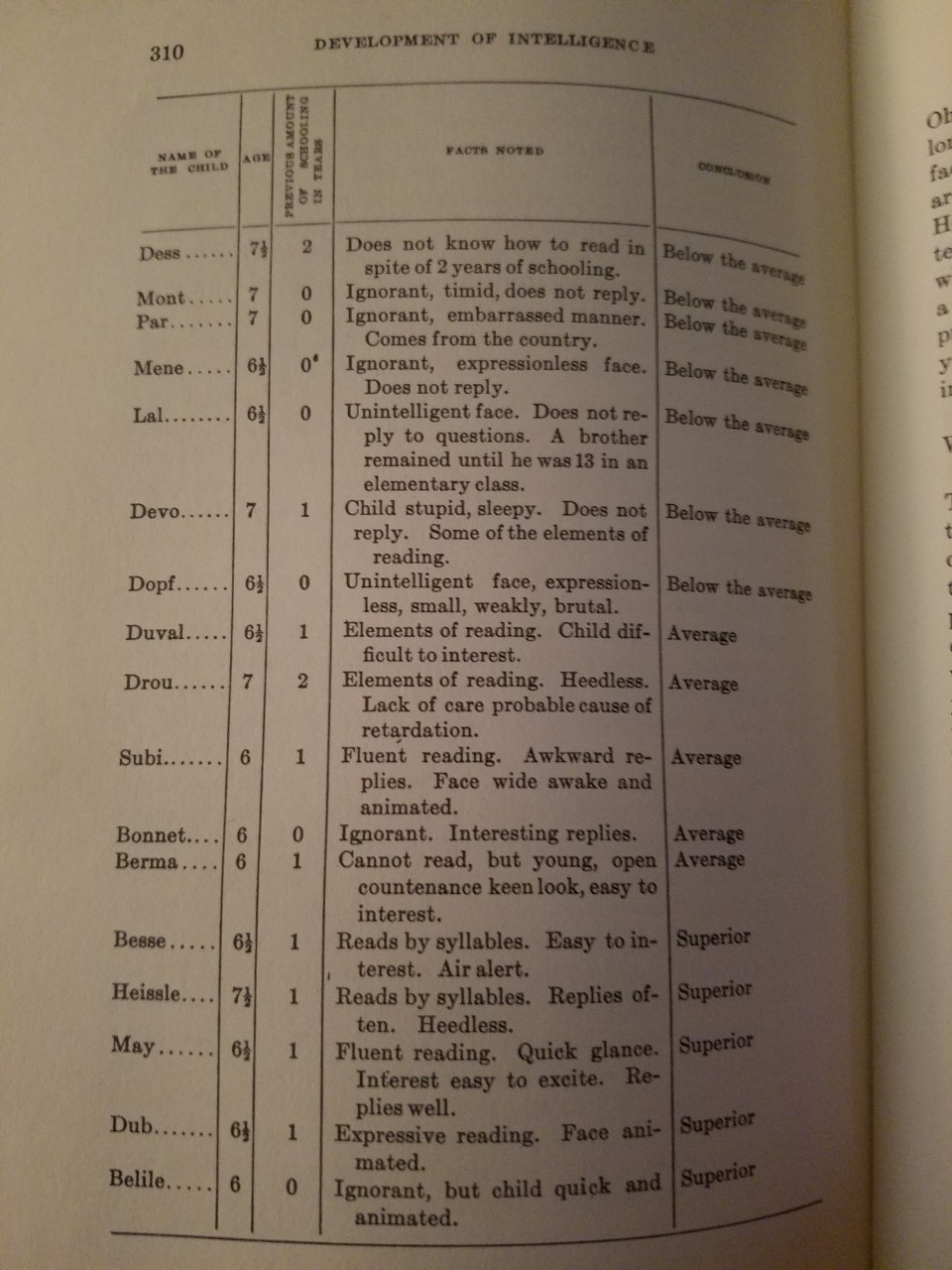

In The Development of Intelligence in Children, Binet and Simon (1916: 309) discuss how teachers assessed students:

A teacher, whom I know, who is methodical and considerate, has given an account of the habits he has formed for studying his pupils; he has analysed his methods, and sent them to me. They have nothing original, which makes them all the more important. He instructs children from five and a half to seven and a half years old; they are 35 in number; they have come to his class after having passed a preparatory course, where they have commenced to learn to read. For judging each child, the teacher takes account of his age, his previous schooling (the child may have been one year, two years in the preparatory class, or else was never passed through the division at all), of his expression of countenance, his state of health, his knowledge, his attitude in class, and his replies. From these diverse elements he forms his opinion. I have transcribed some of these notes on the following page.

In reading his judgments one can see how his opinion was formed, and of how many elements it took account; it seems to us that this detail is interesting; perhaps if one attempted to make it precise by giving coefficients to all of these remarks, one would realize still greater exactitude. But is it possible to define precisely an attitude, a physiognomy, interesting replies, animated eyes? It seems that in all this the best element of diagnosis is furnished by the degree of reading which the child has attained after a given number of months, and the rest remains constantly vague.

Binet chose the items used on his tests for practical, not theoretical, reasons. He and Simon then learned that some of their tests were harder, and others were easier, so they arranged their tests by age levels: how well the average child of that age could complete the test in question. For example, if the average child could complete 10/20 for their age group, then a child who did so was average for that age. If they scored below that, they were below average, and if they scored above that, they were above average. The IQ for the child in question is then calculated with the following formula: IQ = MA/CA × 100. So if one’s MA (mental age) was 13 and their CA (chronological age) was 9, then their IQ would be 144.
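The ratio formula above can be written out as a few lines of Python. This is only a sketch of the arithmetic: the function name `ratio_iq` and the rounding to the nearest whole number are assumptions made here for illustration, not something the sources specify.

```python
def ratio_iq(mental_age: float, chronological_age: float) -> int:
    """Ratio IQ: mental age divided by chronological age, times 100.

    Rounding to a whole number is an assumption made for readability.
    """
    if chronological_age <= 0:
        raise ValueError("chronological age must be positive")
    return round(mental_age / chronological_age * 100)


# Worked example from the text: MA = 13, CA = 9.
print(ratio_iq(13, 9))  # 144

# Equal mental and chronological age gives the reference value.
print(ratio_iq(9, 9))   # 100
```

Note that the score is nothing more than a ratio of two ages expressed as a percentage; everything substantive is hidden in how the "mental age" was assigned in the first place.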

Before his death in 1911, Binet revised his and Simon’s previous test. Intelligence, to Binet, is “the ability to understand directions, to maintain a mental set, and to apply “autocriticism” (the correction of one’s own errors)” (Murphy, 1949: 355). Binet measured subnormality by subtracting mental age from chronological age. (If mental and chronological age are equal, then IQ is 100.) To Binet, absolute retardation was important. But William Stern, in 1912, thought that absolute retardation was not important, but relative retardation was, and so he proposed to divide the mental age by the chronological age and multiply by 100. This, he showed, was stable in most children.
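The contrast between subtracting the two ages and dividing them can be made concrete with a small sketch. The ages below are made-up illustrative values and the function names are hypothetical; the only point is that a fixed gap in years corresponds to very different ratios at different ages, while a fixed ratio corresponds to a gap that grows with age.

```python
def age_gap(mental_age, chronological_age):
    """Difference measure: chronological age minus mental age, in years."""
    return chronological_age - mental_age


def ratio_iq(mental_age, chronological_age):
    """Ratio measure: mental age over chronological age, times 100 (rounded)."""
    return round(mental_age / chronological_age * 100)


ages = (4, 8, 12)

# Hypothetical children who are each exactly two years "behind".
# Each row is (chronological age, gap in years, ratio IQ).
fixed_gap_rows = [(ca, age_gap(ca - 2, ca), ratio_iq(ca - 2, ca)) for ca in ages]
print(fixed_gap_rows)    # [(4, 2, 50), (8, 2, 75), (12, 2, 83)]

# A hypothetical child whose mental age is always three quarters of
# chronological age: the ratio stays put while the gap keeps growing.
fixed_ratio_rows = [
    (ca, age_gap(0.75 * ca, ca), ratio_iq(0.75 * ca, ca)) for ca in ages
]
print(fixed_ratio_rows)  # [(4, 1.0, 75), (8, 2.0, 75), (12, 3.0, 75)]
```

On the subtraction measure the first three children look identical and the last child looks progressively worse; on the ratio measure it is the other way around. Which of the two counts as "the same" level of subnormality is a choice made by the test constructor, not something read off the children.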

Binet termed his new scale a test of intelligence. It is interesting to note that the primary connotation of the French term l’intelligence in Binet’s time was what we might call “school brightness,” and Binet himself claimed no function for his scales beyond that of measuring academic aptitude.

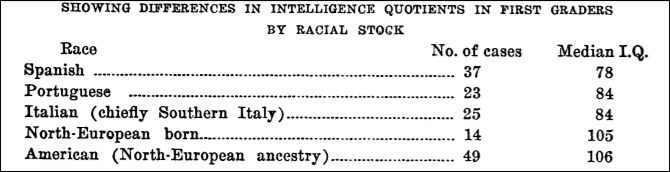

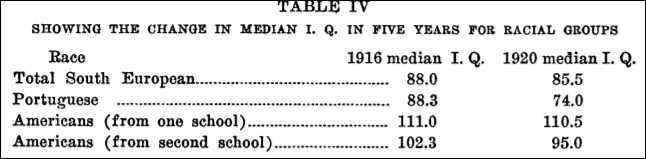

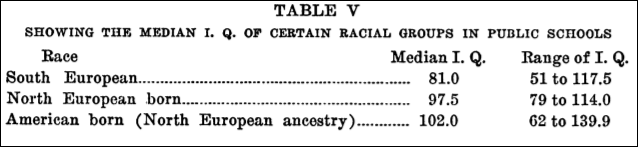

In 1908, Henry Goddard went on a trip to Europe, heard of Binet’s test, and brought home an original version to try out on his students at the Vineland Training School. He translated Binet’s 1908 edition of his test from French to English in 1909. Castles (2012: 90) notes that “American psychology would never be the same.” Goddard was also the one who coined the term “moron” (Dolmage, 2018) for any adult with a mental age between 8 and 13. In 1912, Goddard administered tests to immigrants who landed at Ellis Island and found that 87 percent of Russians, 83 percent of Jews, 80 percent of Hungarians, and 79 percent of Italians were “feebleminded.” Deportations soon picked up, with Goddard reporting a 350 percent increase in 1913 and a 570 percent increase in 1914 (Mensh and Mensh, 1991: 26).

Then, in 1916, Terman published his revision of the Binet-Simon scale, which he termed the Stanford-Binet intelligence scale, based on a sample of 1,000 subjects and standardized for ages ranging from 3-18; the tests for 16-year-olds were for adults, whereas the tests for 18-year-olds were for ‘superior’ adults (Murphy, 1949: 355). (Terman’s test was revised in 1937, when the question of sex differences came up, see below, and in 1960.) Murphy (1949: 355) goes on to write:

Many of Binet’s tests were placed at higher or lower age levels than those at which Binet had placed them, and new tests were added. Each age level was represented by a battery of tests, each test being assigned a certain number of month credits. It was possible, therefore, to reckon the subject’s intelligence quotient, as Stern had suggested, in terms of the ratio of mental age to chronological age. A child attaining a score of 120 months, but only 100 months old, would have an IQ of 120 (the decimal point omitted).

It wasn’t until 1917 that psychologists devised the Army Alpha test for literate test-takers and the Army Beta test for illiterate test-takers and non-English speakers. Examples for items on the Alpha and the Beta can be found below:

1. The Percheron is a kind of

(a) goat, (b) horse, (c) cow, (d) sheep.

2. The most prominent industry of Gloucester is

(a) fishing, (b) packing, (c) brewing, (d) automobiles.

3. “There’s a reason” is an advertisement for

(a) drink, (b) revolver, (c) flour, (d) cleanser.

4. The Knight engine is used in the

(a) Packard, (b) Stearns, (c) Lozier, (d) Pierce Arrow.

5. The Stanchion is used in

(a) fishing, (b) hunting, (c) farming, (d) motoring. (Heine, 2017: 187)

Mensh and Mensh (1991: 31) tell us that

… the tests’ very lack of effect on the placement of [army] personnel provides the clue to their use. The tests were used to justify, not alter, the army’s traditional personnel policy, which called for the selection of officers from among relatively affluent whites and the assignment of whites of lower socioeconomic status to lower-status roles and African-Americans at the bottom rung.

Meanwhile, as Binet was devising his scales at the beginning of the 20th century, Spearman was devising his theory of g. Spearman noted in 1904 that children who did well or poorly on certain types of tests did well or poorly on all of them; that is, the scores were correlated. He concluded that correlated scores reflect a common ability, which he called ‘general intelligence’ or ‘g’ (a conclusion that has been widely criticized).

In sum, the conception of ‘intelligence tests’ began as a way to attempt to justify the class/race hierarchy by constructing the tests in a way to agree with the constructors’ presuppositions of who is or is not intelligent—which will be covered below.

Test construction

When tests are standardized, a whole slew of candidate items are pooled together and used in the construction of the test. For an item to be used for the final test, it must agree with the a priori assumptions of the test’s constructors on who is or is not “intelligent.”

Andrew Strenio, author of The Testing Trap, states exactly how IQ tests are constructed, writing:

We look at individual questions and see how many people get them right and who gets them right. … We consciously and deliberately select questions so that the kind of people who scored low on the pretest will score low on subsequent tests. We do the same for middle or high scorers. We are imposing our will on the outcome. (pg 95, quoted in Mensh and Mensh, 1991)
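The selection step Strenio describes can be sketched in a few lines. This is only an illustration under stated assumptions: the item names, the made-up 0/1 responses, and the `min_gap` cutoff are all hypothetical, and real test construction uses more elaborate statistics than a simple difference in pass rates.

```python
from typing import Dict, List


def pass_rate(responses: List[int]) -> float:
    """Fraction of test-takers who answered the item correctly (1 = right)."""
    return sum(responses) / len(responses)


def select_items(
    items: Dict[str, Dict[str, List[int]]],
    min_gap: float = 0.2,  # assumed cutoff, chosen only for illustration
) -> List[str]:
    """Keep items that pretest high scorers pass more often than low scorers."""
    kept = []
    for name, groups in items.items():
        gap = pass_rate(groups["high"]) - pass_rate(groups["low"])
        if gap >= min_gap:
            kept.append(name)
    return kept


# Made-up responses for three hypothetical candidate items.
candidates = {
    "item_a": {"high": [1, 1, 1, 0], "low": [0, 0, 1, 0]},  # gap +0.50
    "item_b": {"high": [1, 0, 1, 0], "low": [1, 0, 1, 0]},  # gap  0.00
    "item_c": {"high": [0, 0, 1, 0], "low": [1, 1, 1, 0]},  # gap -0.50
}

print(select_items(candidates))  # ['item_a']
```

Only the item that reproduces the pretest ordering survives, so the later test repeats the ranking that was assumed at the start.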

Richardson (2017a: 82) writes that IQ tests—and the items on them—are:

still based on the basic assumption of knowing in advance who is or is not intelligent and making up and selecting items accordingly. Items are invented by test designers themselves or sent out to other psychologists, educators, or other “experts” to come up with ideas. As described above, initial batches are then refined using some intuitive guidelines.

This is strange… I thought that IQ tests were “objective”? Well, this shows that they are anything but objective. They are, very clearly, subjective in their construction, and that construction produces exactly what the constructors assumed: their score hierarchy. The test’s constructors assume that their preconceptions about who is or is not intelligent are true and that differences in intelligence are the cause of differences in social class, so the IQ test was created to justify the existing social hierarchy. (Never mind the fact that IQ scores are an index of social class; Richardson, 2017b.)

Mensh and Mensh (1991: 5) write that:

Nor are the [IQ] tests objective in any scientific sense. In the special vocabulary of psychometrics, this term refers to the way standardized tests are graded, i.e., according to the answers designated “right” or “wrong” when the questions are written. This definition not only overlooks that the tests contain items of opinion, which cannot be answered according to universal standards of true/false, but also overlooks that the selection of items is an arbitrary or subjective matter.

Nor do the tests “allocate benefits.” Rather, because of their class and racial biases, they sort the test takers in a way that conforms to the existing allocation, thus justifying it. This is why the tests are so vehemently defended by some and so strongly opposed by others.

When it comes to Terman and his reconstruction of the Binet-Simon—which he called the Stanford-Binet—something must be noted.

There are negligible differences in IQ between men and women. In 1916, Terman thought that the sexes should be equal in IQ. So he constructed his test to mirror his assumption. Others (e.g., Yerkes) thought that whatever differences materialized between the sexes on the test should be kept and boys and girls should have different norms. Terman, though, to reflect his assumption, specifically constructed his test by including subtests in which sex differences were eliminated. This assumption is still used today. (See Richardson, 1998; Hilliard, 2012.) Richardson (2017a: 82) puts this into context:

It is in this context that we need to assess claims about social class and racial differences in IQ. These could be exaggerated, reduced, or eliminated in exactly the same way. That they are allowed to persist is a matter of social prejudice, not scientific fact. In all these ways, then, we find that the IQ testing movement is not merely describing properties of people—it has largely created them.
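The way a group difference can be built into or out of a test by item choice alone can be shown with a small sketch. The per-item gaps below are invented numbers, and `mean_gap` is a hypothetical helper; the only point is that the test-level gap is an average over whichever items the constructor includes.

```python
from statistics import mean
from typing import Dict, List

# Hypothetical per-item gaps: pass rate of group A minus pass rate of group B.
ITEM_GAPS: Dict[str, float] = {
    "q1": 0.25,
    "q2": 0.125,
    "q3": -0.25,
    "q4": -0.125,
    "q5": 0.0,
}


def mean_gap(chosen: List[str]) -> float:
    """Average A-minus-B gap over the items included in the test."""
    return mean(ITEM_GAPS[name] for name in chosen)


favor_a = ["q1", "q2", "q5"]               # keep items group A does better on
balanced = ["q1", "q2", "q3", "q4", "q5"]  # offsetting items cancel out
favor_b = ["q3", "q4", "q5"]               # keep items group B does better on

print(mean_gap(favor_a))   # 0.125
print(mean_gap(balanced))  # 0.0
print(mean_gap(favor_b))   # -0.125
```

The same item pool yields a gap in either direction, or none at all, depending only on which items are retained.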

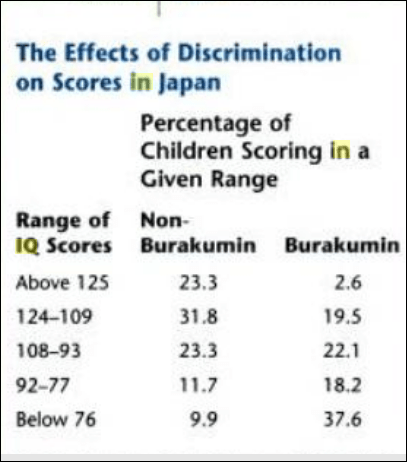

This is an outright admission from the test’s constructors themselves that IQ differences can be built into and out of the test. It further shows that these tests are not “objective”, as they claim. In reality, they are subjective, based on prior assumptions. Take what Hilliard (2012: 115-116) noted about two white South African groups and differences in IQ between them:

A consistent 15- to 20-point IQ differential existed between the more economically privileged, better educated, urban-based, English-speaking whites and the lower-scoring, rural-based, poor, white Afrikaners. To avoid comparisons that would have led to political tensions between the two white groups, South African IQ testers squelched discussion about genetic differences between the two European ethnicities. They solved the problem by composing a modified version of the IQ test in Afrikaans. In this way they were able to normalize scores between the two white cultural groups.

The SAT suffers from the same problems. Mensh and Mensh (1991: 69) note that “the SAT has been weighted to widen a gender scoring differential that from the start favored males.” They note that, since the SAT’s inception, men have scored higher than women, but the gap was due primarily to men’s scores on the math subtest “which was partially offset until 1972 by women’s higher scores on the verbal subtest.” But by 1986 men outscored women on the verbal portion, with the ETS stating that they created a “better balance for the scores between sexes” (quoted in Mensh and Mensh, 1991: 69). What they did, though, was exactly what Terman did: they added items where the context favored men and eliminated those that favored women. This prompts Hilliard (2012: 118) to ask “How then could they insist with such force that no cultural biases existed in the IQ tests given blacks, who scored 15 points below whites?”

When it comes to test bias, Mensh and Mensh (1991: 51) write that:

From a functional standpoint, there is no distinction between crassly biased IQ-test items and those that appear to be non-biased. Because all types of test items are biased (if not explicitly, then implicitly, or in some combination thereof), and because the tests’ racial and class biases correspond to the society’s, each element of a test plays its part in ranking children in the way their respective groups are ranked in the social order.

This, then, returns to the normal distribution—the Gaussian distribution or bell curve.

The normal distribution is assumed. Items are selected to conform with the normal curve after the fact by trying out a whole slew of items; as Jensen (1980: 147-148) states, “items must simply emerge arbitrarily from the heads of test constructors.” Items that show little correlation with the testers’ expectations are then removed from the final test. Fischer et al (1996), Simon (1997), and Richardson (1991; 1998; 2017) also discuss the myth of the normal distribution and how it is constructed by IQ test-makers. Jensen’s point about items emerging “arbitrarily from the heads of test constructors” is an important one: test constructors have an idea in their heads of who is or is not ‘intelligent’, they then try out a whole slew of items, and, unsurprisingly, they get the type of score distribution they want! Howe (1997: 20) writes that:

However, it is wrongly assumed that the fact that IQ scores have a bell-shaped distribution implies that differing intelligence levels of individuals are ‘naturally’ distributed in that way. This is incorrect: the bell-shaped distribution of IQ scores is an artificial product that results from test-makers initially assuming that intelligence is normally distributed, and then matching IQ scores to differing levels of test performance in a manner that results in a bell-shaped curve.

Richardson (1991) notes that the normal distribution “is achieved in the IQ test by the simple decision of including more items on which an average number of the trial group performed well, and relatively fewer on which either a substantial majority or a minority of subjects did well.” Richardson (1991) also states that “if the bell-shaped curve is the myth it seems to be—for IQ as for much else—then it is devastating for nearly all discussion surrounding it.” Even Jensen (1980: 71) states that “It is claimed that the psychometrist can make up a test that will yield any kind of score distribution he pleases. This is roughly true, but some types of distributions are much easier to obtain than others.”
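How item choice shapes the score distribution can be illustrated with a toy calculation. The sketch below is an assumption-laden simplification: it treats every test-taker as having the same chance of passing each item and treats items as independent, which real tests do not satisfy, and the pass rates and the 20-item length are invented. It computes the exact distribution of total scores and its skewness (zero for a symmetric curve).

```python
from typing import List


def score_distribution(pass_rates: List[float]) -> List[float]:
    """Exact distribution of total score for independent items.

    Entry k is the probability of getting exactly k items right.
    Independence between items is a simplifying assumption.
    """
    dist = [1.0]
    for p in pass_rates:
        nxt = [0.0] * (len(dist) + 1)
        for k, prob in enumerate(dist):
            nxt[k] += prob * (1 - p)   # item answered wrong
            nxt[k + 1] += prob * p     # item answered right
        dist = nxt
    return dist


def skewness(dist: List[float]) -> float:
    """Standardized third moment: 0 for a symmetric distribution."""
    mu = sum(k * pr for k, pr in enumerate(dist))
    var = sum((k - mu) ** 2 * pr for k, pr in enumerate(dist))
    third = sum((k - mu) ** 3 * pr for k, pr in enumerate(dist))
    return third / var ** 1.5


mid_items = [0.5] * 20   # items about half the trial group passes
easy_items = [0.9] * 20  # items nearly everyone passes
hard_items = [0.1] * 20  # items almost no one passes

for label, rates in [("mid", mid_items), ("easy", easy_items), ("hard", hard_items)]:
    print(label, round(skewness(score_distribution(rates)), 2))
```

Under these assumptions the mid-difficulty items give a symmetric curve (skewness of essentially zero), while the easy and hard sets give lopsided curves with skewness of roughly -0.6 and +0.6. The shape follows from which items are kept, not from anything about the test-takers.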

The [IQ test] items are, after all, devised by test designers from a very narrow social class and culture, based on intuitions about intelligence and variation in it, and on a technology of item selection which builds in the required degree of convergence of performance. (Richardson, 1991)

Micceri (1988) examined score distributions from 400 tests administered all over the US in workplaces, universities, and schools. He found significant non-normal distributions of test scores. The same can be said about physiological processes, as well.

Candidate items are administered to a sample population. To be selected for the final test, an item must help establish the scoring norm for the whole group, along with subtest norms, which are supposed to replicate when the test is released for general use. So an item must play its role in creating a distribution of scores that places each subgroup (of people) in its predetermined place on the normal curve, which is itself an artifact of test construction. As Hilliard (2012: 118) notes:

Validating a newly drawn-up IQ exam involved giving it to a prescribed sample population to determine whether it measured what it was designed to assess. The scores were then correlated, that is, compared with the test designers’ presumptions. If the individuals who were supposed to come out on top didn’t score highly or, conversely, if the individuals who were assumed would be at the bottom of the scores didn’t end up there, then the designers would scrap the test.

Howe (1997: 6) states that “A psychological test score is no more than an indication of how well someone has performed at a number of questions that have been chosen for largely practical reasons. Nothing is genuinely being measured.” Howe (1997: 17) also noted that:

Because their construction has never been guided by any formal definition of what intelligence is, intelligence tests are strikingly different from genuine measuring instruments. Binet and Simon’s choice of items to include as the problems that made up their test was based purely on practical considerations.

IQ tests are ‘validated’ against older tests such as the Stanford-Binet, but the older tests were never validated themselves (see Richardson, 2002: 301). Howe (1997: 18) continues:

In the case of the Binet and Simon test, since their main purpose was to help establish whether or not a child was capable of coping with the conventional school curriculum, they sensibly chose items that seemed to assess a child’s capacity to succeed at the kinds of mental problems that are encountered in the classroom. Importantly, the content of the first intelligence test was decided by largely pragmatic considerations rather than being constrained by a formal definition of intelligence. That remains largely true of the tests that are used even now. As the early tests were revised and new assessment batteries constructed, the main benchmark for believing a new test to be adequate was its degree of agreement with the older ones. Each new test was assumed to be a proper measure of intelligence if the distributions of people’s scores at it matched the pattern of scores at a previous test, a line of reasoning that conveniently ignored the fact that the earlier ‘measures’ of intelligence that provided the basis for confirming the quality of the subsequent ones were never actually measures of anything. In reality … intelligence tests are very different from true measures (Nash, 1990). For instance, with a measure such as height it is clear that a particular quantity is the same irrespective of where it occurs. The 5 cm difference between 40 cm and 45 cm is the same as the 5 cm difference between 115 cm and 120 cm, but the same cannot be said about differing scores gained in a psychological test.

Conclusion

This discussion of the construction of IQ tests and the history of IQ testing leads us to three conclusions: differences in scores can be built into and out of the tests based on the prior assumptions of the test’s constructors; the history of IQ testing is rife with these same assumptions; and all newer tests are ‘validated’ on their agreement with older (still non-valid!) tests. That IQ testing began with social prejudices, and that the tests were constructed to agree with the current hierarchy, does indeed damn the conclusions drawn from the tests. That group A outscores group B does not mean that A is more ‘intelligent’ than B; it only means that A was exposed to more of the knowledge on the test.

The normal distribution, too, is a result of the same item addition/elimination to get the expected scores, the scores that then agree with the constructors’ racial and class biases. Bias in mental testing does exist, contra Jensen (1980). It exists because items are carefully selected to distinguish between different racial groups and social classes.

This critique of IQ testing that I have mounted is not an ‘environmentalist’ critique, either. It is a methodological one.

Jews, IQ, Genes, and Culture

1500 words

Jewish IQ is one of the most-talked-about things in the hereditarian sphere. Jews have higher IQs, Cochran, Hardy, and Harpending (2006: 2) argue, because “the unique demography and sociology of Ashkenazim in medieval Europe selected for intelligence.” To IQ-ists, IQ is influenced/caused by genetic factors, while environment accounts for only a small portion.

In The Chosen People: A Study of Jewish Intelligence, Lynn (2011) discusses one explanation for higher Jewish IQ—that of “pushy Jewish mothers” (Marjoribanks, 1972).

“Fourth, other environmentalists such as Marjoribanks (1972) have argued that the high intelligence of the Ashkenazi Jews is attributable to the typical “pushy Jewish mother”. In a study carried out in Canada he compared 100 Jewish boys aged 11 years with 100 Protestant white gentile boys and 100 white French Canadians and assessed their mothers for “Press for Achievement”, i.e. the extent to which mothers put pressure on their sons to achieve. He found that the Jewish mothers scored higher on “Press for Achievement” than Protestant mothers by 5 SD units and higher than French Canadian mothers by 8 SD units and argued that this explains the high IQ of the children. But this inference does not follow. There is no general acceptance of the thesis that pushy mothers can raise the IQs of their children. Indeed, the contemporary consensus is that family environmental factors have no long term effect on the intelligence of children (Rowe, 1994).”

The inference is a modus ponens:

P1 If p, then q.

P2 p.

C Therefore q.

Let p be “Jewish mothers scored higher on “Press for Achievement” by X SDs” and let q be “then this explains the high IQ of the children.”

So now we have:

Premise 1: If “Jewish mothers scored higher on “Press for Achievement” by X SDs”, then “this explains the high IQ of the children.”

Premise 2: “Jewish mothers scored higher on “Press for Achievement” by X SDs.”

Conclusion: Therefore, “this explains the high IQ of the children.”

Vaughn (2008: 12) notes that an inference is “reasoning from a premise or premises to … conclusions based on those premises.” The conclusion follows from the two premises, so how does the inference not follow?
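That the modus ponens form is valid can be checked mechanically with a truth table. The sketch below is a generic illustration with hypothetical function names; it checks that no assignment of truth values makes both premises true and the conclusion false.

```python
from itertools import product


def implies(p: bool, q: bool) -> bool:
    """Material conditional: false only when p is true and q is false."""
    return (not p) or q


def modus_ponens_is_valid() -> bool:
    """Check every truth assignment: whenever both premises hold, so does q."""
    for p, q in product([True, False], repeat=2):
        premises_hold = implies(p, q) and p
        if premises_hold and not q:
            return False
    return True


print(modus_ponens_is_valid())  # True
```

The check concerns only the form of the argument; the case for Premise 1 itself is made in the discussion that follows.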

IQ tests are tests of specific knowledge and skills. It therefore follows that if, for example, a mother is “pushy” and being pushy leads to more studying, then the IQ of the child can be raised.

Lynn’s claim that “family environmental factors have no long term effect on the intelligence of children” is puzzling. Rowe relies heavily on twin and adoption studies, which have false assumptions underlying them, as noted by Richardson and Norgate (2005), Moore (2006), Joseph (2014), Fosse, Joseph, and Richardson (2015), and Joseph et al (2015). The equal environments assumption (EEA) is false, so we cannot accept the genetic conclusions from twin studies.

Lynn and Kanazawa (2008: 807) argue that their “results clearly support the high intelligence theory of Jewish achievement while at the same time provide no support for the cultural values theory as an explanation for Jewish success.” They are positing “intelligence” as an explanatory concept, though Howe (1988) notes that “intelligence” is “a descriptive measure, not an explanatory concept.” “Intelligence,” says Howe (1997: ix), “is … an outcome … not a cause.” More specifically, it is an outcome of development from infancy all the way up to adulthood and of being exposed to the items on the test. Lynn has claimed for decades that high intelligence explains Jewish achievement. But whence came intelligence? Intelligence develops throughout the life cycle, from infancy to adolescence to adulthood (Moore, 2014).

Ogbu and Simon (1998: 164) note that Jews are “autonomous minorities”, groups that are small in number. They note that “Although [Jews, the Amish, and Mormons] may suffer discrimination, they are not totally dominated and oppressed, and their school achievement is no different from the dominant group (Ogbu 1978)” (Ogbu and Simon, 1998: 164). Jews are voluntary minorities, and Ogbu (2002: 250-251; in Race and Intelligence: Separating Science from Myth) suggests five reasons for good test performance from these types of minorities, including:

- Their preimmigration experience: Some do well since they were exposed to the items and structure of the tests in their native countries.

- They are cognitively acculturated: They acquired the cognitive skills of the white middle-class when they began to participate in their culture, schools, and economy.

- The history and incentive of motivation: They are motivated to score well on the tests as they have this “preimmigration expectation” in which high test scores are necessary to achieve their goals for why they emigrated along with a “positive frame of reference” in which becoming successful in America is better than becoming successful at home, and the “folk theory of getting ahead in the United States”, that their chance of success is better in the US and the key to success is a good education—which they then equate with high test scores.

So if ‘intelligence’ tests are tests of culturally-specific knowledge and skills, and if certain groups are exposed more to this knowledge, it then follows that certain groups of people are better prepared for test-taking, specifically for IQ tests.

The IQ-ists attempt to argue that differences in IQ are due, largely, to differences in ‘genes for’ IQ, and this explanation is supposed to explain Jewish IQ, and, along with it, Jewish achievement. (See also Gilman, 2008 and Ferguson, 2008 for responses to the just-so storytelling from Cochran, Hardy, and Harpending, 2006.) Lynn, purportedly, is invoking ‘genetic confounding’—he is presupposing that Jews have ‘high IQ genes’ and this is what explains the “pushiness” of Jewish mothers. The Jewish mothers then pass on their “genes for” high IQ—according to Lynn. But the evolutionary accounts (just-so stories) explaining Jewish IQ fail. Ferguson (2008) shows how “there is no good reason to believe that the argument of [Cochran, Hardy, and Harpending, 2006] is likely, or even reasonably possible.” The tall-tale explanations for Jewish IQ, too, fail.

Prinz (2014: 68) notes that Cochran et al have “a seductive story” (aren’t all just-so stories seductive since they are selected to comport with the observation? Smith, 2016), while continuing (pg 71):

The very fact that the Utah researchers use to argue for a genetic difference actually points to a cultural difference between Ashkenazim and other groups. Ashkenazi Jews may have encouraged their children to study maths because it was the only way to get ahead. The emphasis remains widespread today, and it may be the major source of performance on IQ tests. In arguing that Ashkenazim are genetically different, the Utah researchers identify a major cultural difference, and that cultural difference is sufficient to explain the pattern of academic achievement. There is no solid evidence for thinking that the Ashkenazim advantage in IQ tests is genetically, as opposed to culturally, caused.

Nisbett (2008: 146) notes other problems with the theory—most notably Sephardic over-achievement under Islam:

It is also important to the Cochran theory that Sephardic Jews not be terribly accomplished, since they did not pass through the genetic filter of occupations that demanded high intelligence. Contemporary Sephardic Jews in fact do not seem to have unusually high IQs. But Sephardic Jews under Islam achieved at very high levels. Fifteen percent of all scientists in the period AD 1150-1300 were Jewish—far out of proportion to their presence in the world population, or even the population of the Islamic world—and these scientists were overwhelmingly Sephardic. Cochran and company are left with only a cultural explanation of this Sephardic efflorescence, and it is not congenial to their genetic theory of Jewish intelligence.

Finally, Berg and Belmont (1990: 106) note that “The purpose of the present study was to clarify a possible misinterpretation of the results of Lesser et al’s (1965) influential study that suggested the existence of a “Jewish” pattern of mental abilities. In establishing that Jewish children of different socio-cultural backgrounds display different patterns of mental abilities, which tend to cluster by socio-cultural group, this study confirms Lesser et al’s position that intellectual patterns are, in large part, culturally derived.” Cultural differences exist; cultural differences have an effect on psychological traits; if cultural differences exist and cultural differences have an effect on psychological traits (with culture influencing a population’s beliefs and values) and IQ tests are culturally-/class-specific knowledge tests, then it necessarily follows that IQ differences are cultural/social in nature, not ‘genetic.’

In sum, Lynn’s claim that the inference does not follow is ridiculous. The argument provided is a modus ponens, so the inference does follow. Similarly, Lynn’s claim that “pushy Jewish mothers” don’t explain the high IQs of Jews doesn’t follow. If IQ tests are tests of middle-class knowledge and skills, and children are exposed to the structure and items on them, then it follows that being “pushy” with children (that is, getting them to study and whatnot) would explain higher IQs. Lynn’s and Kanazawa’s assertion that “high intelligence is the most promising explanation of Jewish achievement” also fails, since intelligence is not an explanatory concept, a cause; it is a descriptive measure that develops across the lifespan.

Knowledge, Culture, Logic, and IQ

5050 words

… what IQ tests actually assess is not some universal scale of cognitive strength but the presence of skills and knowledge structures more likely to be acquired in some groups than in others. (Richardson, 2017: 98)

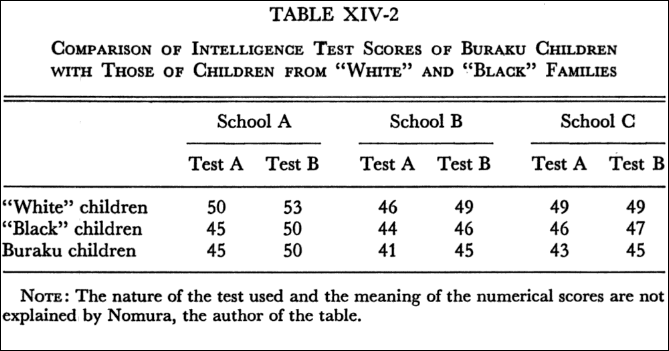

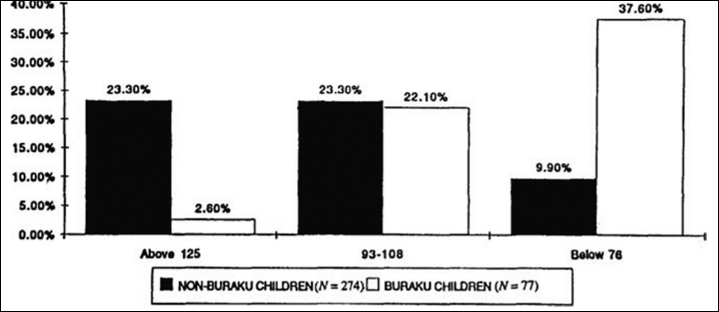

For the past 100 years, the black-white IQ gap has puzzled psychometricians. There are two camps—hereditarians (those who believe that individual and group differences in IQ are due largely to genetics) and environmentalists/interactionists (those who believe that individual and group differences in IQ are largely due to differences in learning, exposure to knowledge, culture and immediate environment).

Knowledge

However, one of the most forceful arguments for the environmentalist side (i.e., that differences in IQ are due to the cultural and social environment; note that an interactionist framework can be used here, too) comes from Fagan and Holland (2007). They show that half of the questions on IQ tests had no racial bias, whereas other problems on the test were solvable only with a specific type of knowledge: knowledge that is found specifically in the middle class. So if blacks are more likely to be lower class than whites, then what explains lower test scores for blacks is differential exposure to knowledge, specifically the knowledge needed to complete the items on the test.

But some hereditarians say otherwise: they claim that since knowledge is easily accessible to everyone, everyone who wants to learn something will learn it, and thus access to information has nothing to do with cultural/social effects.

A hereditarian can, for instance, state that anyone who wants to can learn the types of knowledge that are on IQ tests and that they are widely available everywhere. But racial gaps in IQ stay the same, even though all racial groups have the same access to the specific types of cultural knowledge on IQ tests. Therefore, differences in IQ are not due to differences in one’s immediate environment and what they are exposed to—differences in IQ are due to some innate, genetic differences between blacks and whites. Put into premise and conclusion form, the argument goes something like this:

P1 If racial gaps in IQ were due specifically to differences in knowledge, then anyone who wants to and is able to learn the stuff on the tests can do so for free on the Internet.

P2 Anyone who wants to and is able to learn stuff can do so for free on the Internet.

P3 Blacks score lower than whites on IQ tests, even though they have the same access to information if they would like to seek it out.

C Therefore, differences in IQ between races are due to innate, genetic factors, not any environmental ones.

This argument is strange. (1) One would have to assume that blacks and whites have the same access to knowledge, yet we know that lower-income people have less access to knowledge in virtue of the environments they live in. For instance, they may have libraries with low funding or bad schools with teachers who do not care enough to teach the students what they need to succeed on these standardized tests (IQ tests, the SAT, etc are all different versions of the same test). (2) One would have to assume that everyone has the same type of motivation to learn what amounts to answers for questions on a test that have no real-world implications. And (3) the type of knowledge that one is exposed to dictates what one can tap into while attempting to solve a problem. All three of these factors can cascade in causing racial differences in IQ performance.

Familiarity with the items on the tests leads to faster processing of information, allowing one to correctly identify an answer in a shorter period of time. If we look at IQ tests as tests of middle-class knowledge and skills, and we rightly observe that blacks are more likely to be lower class than whites, who are more likely to be middle class, then it logically follows that the cause of differences in IQ between blacks and whites is cultural, and not genetic, in origin. This paper, along with others, resolves the century-old debate on racial IQ differences: what accounts for differences in IQ scores is differential exposure to knowledge. Claiming that people have the same type of access to knowledge, and that those who lack it simply did not seek it out, does not make sense.

Differing experiences lead to differing amounts of knowledge. If differing experiences lead to differing amounts of knowledge, and IQ tests are tests of culturally-specific knowledge, then those who are not exposed to the knowledge on the test will score lower than those who are exposed to it. Therefore, Jensen’s Default Hypothesis is false (Fagan and Holland, 2002). Fagan and Holland (2002) compared blacks and whites on their knowledge of the meanings of words, a measure which is highly “g”-loaded and shows black-white differences. They review research showing that blacks have lower exposure to words and are therefore unfamiliar with certain words (keep this in mind for the end). They mixed in novel words with previously-known words to see if there was a difference.

Fagan and Holland (2002) picked out random words from the dictionary, then put them into sentences to give the testee some context. They carried out five experiments in all, and each one showed that, when equal opportunity was given to the groups, they were “equal in knowledge” (IQ). So, whites were more likely to know the items more likely to be found on IQ tests. Thus, there were no racial differences between blacks and whites when looked at from an information-processing point of view. Therefore, to explain racial differences in IQ, we must look to differences in the cultural/social environment. Fagan (2000), for instance, states that “Cultures may differ in the types of knowledge their members have but not in how well they process. Cultures may account for racial differences in IQ.”

The results of Fagan and Holland (2002) are completely at odds with Jensen’s Default Hypothesis, which holds that the 15-point gap in IQ is due to the same genetic and environmental factors that underlie individual differences within a group. However, Fagan and Holland (2002: 382) show that:

Contrary to what the default hypothesis would predict, however, the within racial group analyses in our study stand in sharp contrast to our between racial group findings. Specifically, individuals within a racial group who differed in general knowledge of word meanings also differed in performance when equal exposure to the information to be tested was provided. Thus, our results suggest that the average difference of 15 IQ points between Blacks and Whites is not due to the same genetic and environmental factors, in the same ratio, that account for differences among individuals within a racial group in IQ.

Exposure to information is critical, in fact. For instance, Ceci (1996) shows that familiarity with words dictates the speed of processing used in identifying the correct answer to a problem. In regard to differences in IQ, Ceci (1996) does not deny the role of biology; indeed, it’s a part of his bio-ecological model of IQ, a theory that treats the development of intelligence as an interaction between biological dispositions and the environment in which those dispositions manifest themselves. Ceci (1996) does note that there are biological constraints on intelligence, but that “… individual differences in biological constraints on specific cognitive abilities are not necessarily (or even probably) directly responsible for producing the individual differences that have been reported in the psychometric literature.” That such potentials may be “genetic” in origin does not, of course, license the claim that genetic factors contribute to variance in IQ. “Everyone may possess them to the same degree, and the variance may be due to environment and/or motivations that led to their differential crystallization.” (Ceci, 1996: 171)

Ceci (1996) further shows that people can differ in intellectual performance for three reasons: (1) the efficiency of underlying cognitive potentials that are relevant to the cognitive ability in question; (2) the structure of knowledge relevant to the performance; and (3) contextual/motivational factors relevant to crystallizing the underlying potentials gained through one’s knowledge. Thus, if someone lacks knowledge of the items on the test because of what they learned in school, then the test will be biased against them, since they never learned the relevant information.

Cahan and Cohen (1989) note that nine-year-olds in fourth grade had higher IQs than nine-year-olds in third grade. This is to be expected if we take IQ scores as indices of culture-specific knowledge and skills: fourth-graders have been exposed to more information than third-graders of the same age, and so they score higher on IQ tests.

Cockroft et al (2015) studied South African and British undergrads on the WAIS-III. They conclude that “the majority of the subtests in the WAIS-III hold cross-cultural biases”, while this is “most evident in tasks which tap crystallized, long-term learning, irrespective of whether the format is verbal or non-verbal” so “This challenges the view that visuo-spatial and non-verbal tests tend to be culturally fairer than verbal ones (Rosselli and Ardila, 2003)”.

IQ tests “simply reflect the different kinds of learning by children from different (sub)cultures: in other words, a measure of learning, not learning ability, and are merely a redescription of the class structure of society, not its causes … it will always be quite impossible to measure such ability with an instrument that depends on learning in one particular culture” (Richardson, 2017: 99-100). This is the logical position to hold: if IQ tests test class-specific types of knowledge and certain classes are not exposed to the items, then those classes will score lower. Since IQ tests are tests of a certain kind of knowledge, they cannot be “a measure of learning ability.” So, contra Gottfredson, ‘g’ or ‘intelligence’ (IQ test scores) cannot be called “basic learning ability”: we cannot create culture-free (that is, knowledge-free) tests, because all human cognizing takes place in a cultural context from which it cannot be divorced.

Since all human cognition takes place through the medium of cultural/psychological tools, the very idea of a culture-free test is, as Cole (1999) notes, ‘a contradiction in terms . . . by its very nature, IQ testing is culture bound’ (p. 646). Individuals are simply more or less prepared for dealing with the cognitive and linguistic structures built in to the particular items. (Richardson, 2002: 293)

Heine (2017: 187) gives some examples of the World War I Alpha Test:

1. The Percheron is a kind of

(a) goat, (b) horse, (c) cow, (d) sheep.

2. The most prominent industry of Gloucester is

(a) fishing, (b) packing, (c) brewing, (d) automobiles.

3. “There’s a reason” is an advertisement for

(a) drink, (b) revolver, (c) flour, (d) cleanser.

4. The Knight engine is used in the

(a) Packard, (b) Stearns, (c) Lozier, (d) Pierce Arrow.

5. The Stanchion is used in

(a) fishing, (b) hunting, (c) farming, (d) motoring.

Such test items are similar to those on modern-day IQ tests. See, for example, Castles (2013: 150), who writes:

One section of the WAIS-III, for example, consists of arithmetic problems that the respondent must solve in his or her head. Others require test-takers to define a series of vocabulary words (many of which would be familiar only to skilled-readers), to answer school-related factual questions (e.g., “Who was the first president of the United States?” or “Who wrote the Canterbury Tales?”), and to recognize and endorse common cultural norms and values (e.g., “What should you do if a sale clerk accidentally gives you too much change?” or “Why does our Constitution call for division of powers?”). True, respondents are also given a few opportunities to solve novel problems (e.g., copying a series of abstract designs with colored blocks). But even these supposedly culture-fair items require an understanding of social conventions, familiarity with objects specific to American culture, and/or experience working with geometric shapes or symbols. [Since these are questions found on the WAIS-III, go back and read Cockroft et al, 2015, since they used the British version, which, of course, is similar.]

If one is not exposed to the structure of the test along with the items and information on them, how, then, can we say that the test is ‘fair’ to other cultural groups (social classes included)? For, if all tests are culture-bound and different groups of people have different cultures, histories, etc, then they will score differently by virtue of what they know. This is why it is ridiculous to state so confidently that IQ tests, however imperfectly, test “intelligence.” They test certain skills and knowledge more likely to be found in certain groups/classes than in others, specifically in the dominant group. So what dictates IQ scores is differential access to knowledge (i.e., cultural tools) and to knowing how to use such cultural tools (which then become psychological tools).

Lastly, take an Amazonian people called the Pirahã. Their counting system differs from ours in the West; it is called the “one-two-many system, where quantities beyond two are not counted but are simply referred to as “many”” (Gordon, 2005: 496). A Pirahã adult was shown an empty can. Then the investigator put six nuts into the can and took five out, one at a time. The investigator then asked the adult if there were any nuts remaining in the can, and the man answered that he had no idea. Everett (2005: 622) notes that “Piraha is the only language known without number, numerals, or a concept of counting. It also lacks terms for quantification such as “all,” “each,” “every,” “most,” and “some.””

(hbdchick, quite stupidly, on Twitter wrote “remember when supermisdreavus suggested that the tsimane (who only count to s’thing like two and beyond that it’s “many”) maybe went down an evolutionary pathway in which they *lost* such numbers genes?” Riiiight. Surely the Tsimane “went down an evolutionary pathway in which they *lost* such numbers genes.” This is the idiocy of “HBDers” in action. Of course, I wouldn’t expect them to read the actual literature beyond knowing something basic (Tsimane numbers beyond “two” are known as “many”) and then positing a just-so story for why they don’t count above “two.”)

Non-verbal tests

Take a non-verbal test, such as the Bender-Gestalt test. There are nine index cards with different geometrical designs on them, and the testee needs to copy what he saw before the next card is shown. The testee is then scored on how accurate his recreation of the design is. Seems culture-fair, no? It’s just shapes and other similar things, so how would that be influenced by class and culture? On a cursory look, one would claim that such tests have no basis in knowledge structure and exposure and so would rightly be called “culture-free.” But while the shapes on tests like the Raven’s are novel, the rules governing them are not.

Hoffman (1966) studied 80 children (20 Kickapoo Indians (KIs), 20 low SES blacks (LSBs), 20 low SES whites (LSWs), and 20 middle-class whites (MCWs)) on the Bender-Gestalt test. The Kickapoo were selected from 5 urban schools; the 20 blacks from majority-black elementary schools in Oklahoma City; the 20 low SES whites from low SES areas of Oklahoma; and the 20 middle-class whites from majority-white schools in Oklahoma. All of the children were 8-10 years old and in the third grade, and all had IQs in the range of 90-110. They were matched on a whole slew of different variables. Hoffman (1966: 52) states “that variations in cultural and socio-economic background affect Bender Gestalt reproduction.”

Hoffman (1966: 86) writes that:

since the four groups were shown to exhibit no significant differences in motor, or perceptual discrimination ability it follows that differences among the four groups of boys in Bender Gestalt performance are assignable to interpretative factors. Furthermore, significant differences among the four groups in Bender performance illustrates that the Bender Gestalt test is indeed not a so called “culture-free” test.

Hoffman concluded that MCWs, KIs, LSBs, and LSWs did not differ in copying ability, nor did they differ significantly in discrimination across the different phases of the Bender-Gestalt; there also was no bias in figures that had two of the different sexes on them. They did differ in their reproductions of the Bender-Gestalt designs, and that differing performance can, of course, be interpreted differently by different people. If we start from the assumption that all IQ tests are culture-bound (Cole, 2004), then living in a culture different from the majority culture will lead one to score differently by virtue of having differing, culture-specific knowledge and experience. The four groups also looked at the test in different ways. Thus, the main conclusion is that:

The Bender Gestalt test is not a “culture-free” test. Cultural and socio-economic background appear to significantly affect Bender Gestalt reproduction. (Hoffman, 1966: 88)

Drame and Ferguson (2017) and Dutton et al (2017) also show that there is bias in the Raven’s test in Mali and Sudan. This, of course, is due to differences in exposure to the types of problems that appear in the items (Richardson, 2002: 291-293): people whose cultures give them little exposure to such items will, therefore, score lower. Richardson (1991) took 10 of the hardest Raven’s items and couched them in familiar terms with familiar, non-geometric objects. Twenty eleven-year-olds performed far better on the new items than on the original ones, even though the problems Richardson (1991) devised used exactly the same logic. This shows that the Raven is not a “culture-free” measure of inductive and deductive logic.

The Raven is administered in a testing environment, which is itself a cultural device. Testees are then handed a paper with black and white figures ordered from left to right. (Note that Abdel-Khalek and Raven (2006: 171) write that Raven’s items “were transposed to read from right to left following the custom of Arabic writing.” So this is another way that the tests are biased and therefore not “culture-free.”) Richardson (2000: 164) writes that:

For example, one rule simply consists of the addition or subtraction of a figure as we move along a row or down a column; another might consist of substituting elements. My point is that these are emphatically culture-loaded, in the sense that they reflect further information-handling tools for storing and extracting information from the text, from tables of figures, from accounts or timetables, and so on, all of which are more prominent in some cultures and subcultures than others.

Richardson (1991: 83) quotes Keating and Maclean (1987: 243) who argue that tests like the Raven “tap highly formal and specific school skills related to text processing and decontextualized rule application, and are thus the most systematically acculturated tests” (their emphasis). Keating and Maclean (1987: 244) also state that the variation in scores between individuals is due to “the degree of acculturation to the mainstream school skills of Western society” (their emphasis). That’s the thing: all types of testing are biased towards a certain culture in virtue of the kinds of things its members are exposed to. Not being exposed to the items and structure of the test means that it is, in effect, biased against certain cultural/social groups.

Davis (2014) studied the Tsimane, a people from Bolivia, on the Raven. Average eleven-year-olds answered 78 percent or more of the questions correctly, whereas lower-performing individuals answered 47 percent correctly. The eleven-year-old Tsimane, though, answered only 31 percent correctly. Another group of Tsimane went to school and lived in villages rather than in the rainforest like the first group. They scored 72 percent correct, compared to only 31 percent for the unschooled Tsimane. “… the cognitive skills of the Tsimane have developed to master the challenges that their environment places on them, and the Raven’s test simply does not tap into those skills. It’s not a reflection of some kind of true universal intelligence; it just reflects how well they can answer those items” (Heine, 2017: 189). Thus, what measures of “intelligence” capture is not an innate skill but something learned through experience, that is, what we learn from our environments.

Heine (2017: 190) discusses as-yet-unpublished results on the Hadza, who are known as “the most cognitively complex foragers on Earth.” So, “the most cognitively complex foragers on Earth” should be pretty “smart”, right? Well, the Hadza were given six-piece jigsaw puzzles to complete, the kinds of puzzles that American four-year-olds do for fun. They had never seen such puzzles before and so were stumped as to how to complete them. Even those who managed to complete them took several minutes to do so. Is the conclusion then licensed that “Hadza are less smart than four-year-old American children?” No! The jigsaw puzzle is a specific cultural tool that the Hadza had never seen before, and so their performance mirrored their unfamiliarity with the test.

Logic

The term “logical” comes from the Greek term logos, meaning “reason, idea, or word.” So, “logical reasoning” is based on reason and sound ideas, irrespective of bias and emotion. A simple syllogistic structure could be:

If X, then Y

X

∴ Y

We can substitute terms, too, for instance:

If it rains today, then I must bring an umbrella.

It’s raining today.

∴ I must bring an umbrella.
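The form above (often called modus ponens) is valid in the formal sense that no assignment of true/false to X and Y makes both premises true and the conclusion false. A minimal sketch of that check by truth table follows; the helper names `is_valid` and `implies` are illustrative assumptions, not anything drawn from the sources cited here, and the second argument form is included only as a contrast case.

```python
from itertools import product

def implies(a, b):
    # Material conditional: "if a then b" is false only when a is true and b is false.
    return (not a) or b

def is_valid(premises, conclusion, n_vars):
    # An argument form is valid if the conclusion is true in every
    # truth assignment that makes all of the premises true.
    for values in product([True, False], repeat=n_vars):
        if all(p(*values) for p in premises) and not conclusion(*values):
            return False
    return True

# If X, then Y; X; therefore Y.
modus_ponens = is_valid(
    premises=[lambda x, y: implies(x, y), lambda x, y: x],
    conclusion=lambda x, y: y,
    n_vars=2,
)

# Contrast case: If X, then Y; Y; therefore X (affirming the consequent).
affirming_consequent = is_valid(
    premises=[lambda x, y: implies(x, y), lambda x, y: y],
    conclusion=lambda x, y: x,
    n_vars=2,
)

print(modus_ponens, affirming_consequent)
```

This only checks the bare form. It says nothing about whether a given reasoner accepts the premises as stated.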

Richardson (2000: 161) notes how cross-cultural studies show that what is or is not logical thinking is neither objective nor simple, but “comes in socially determined forms.” He notes how cross-cultural psychologist Sylvia Scribner showed some syllogisms to Kpelle farmers, which were couched in terms that were familiar to them. One syllogism given to them was:

All Kpelle men are rice farmers

Mr. Smith is not a rice farmer

Is he a Kpelle man? (Richardson, 2000: 162)