Formalizing the Heritability Fallacy

2050 words

Introduction

Claims about the genetic determination of psychological traits, like intelligence, continue to be advanced in the academic literature on the basis of twin and adoption studies and, more recently, GWAS and PGSs. High heritability is frequently taken to indicate that a trait is “largely genetic”, that genetic differences are explanatorily primary, or that environmental interventions are comparatively limited in their effects. These inferences capture both soft and strong hereditarianism, ranging from explicit genetic determinism to probabilistic genetic influence.

But at the same time, critics like Lewontin (1974), Block and Dworkin, Oyama (2000), Rose (2006), and Moore and Shenk (2016), among others, have emphasized that heritability is a population- and environment-relative statistic describing the proportion of phenotypic variance associated with genetic variance under specific background conditions. It does not, by itself, identify causal mechanisms, degrees of genetic control, or the developmental sources of individual traits. Moore and Shenk have labeled the inference from high heritability to genetic determination the “heritability fallacy.” Despite widespread agreement that heritability is often misinterpreted, genetic conclusions continue to be drawn from heritability estimates, suggesting that the underlying inferential error has yet to be clearly identified.

I will argue that the persistence of hereditarian interpretations of heritability reflects a logical error rather than a merely empirical one. I will show that any attempt to infer genetic determination from heritability must take one of two forms. Either high heritability is treated as evidence for genetic determination, in which case the inference is logically invalid, committing the fallacy of affirming the consequent; or high heritability is treated as entailing genetic determination, in which case the inference rests on a false account of what heritability measures. These two options exhaust the plausible inferential routes from heritability to hereditarian conclusions, and in neither case does the inference succeed.

So by formalizing the dilemma, I will show the logical structure of the heritability fallacy, and show that it is not confined to outdated arguments. This dilemma applies equally to contemporary and cautious forms of hereditarianism that emphasize probabilistic causation, polygenicity, or gene-environment interaction. Because heritability does not track causal contribution, developmental control, or counterfactual stability, no refinement of statistical technique can bridge the inferential gap that hereditarianism requires.

This argument doesn’t deny that genes are necessary for development, that genetic variation exists or that genetic differences may correlate with phenotypic differences under certain conditions. Rather, it shows that heritability cannot bear the explanatory weight that hereditarianism requires of it. Thus, hereditarianism fails not because of missing data or insufficiently sophisticated models, but because the central inference on which it relies cannot be made in principle.

The argument

This argument has two horns: (a) high heritability entails genetic determination or (b) high heritability merely correlates with genetic determination.

P1: If heritability licenses inferences about genetic determination, then either: (a) high heritability entails genetic determination or (b) high heritability merely correlates with genetic determination.

P2: If (a), the inference from high heritability to genetic determination is valid only if heritability measures genetic determination, which it does not.

P3: If (b), the inference commits the fallacy of affirming the consequent.

P4: Heritability measures only population-level variance partitioning, not individual-level causal genetic determination.

C: Therefore, heritability cannot license inferences about genetic determination.

C1: Therefore, hereditarianism fails insofar as it relies on heritability.

When it comes to (a), the argument is valid (H -> G; H; ∴ G), but heritability doesn’t measure or capture genetic determination, so the conditional premise is false. For it to do that, it would have to measure causal genetic control, track developmental mechanisms, generalize across environments, and distinguish genes from correlated environments. So horn (a) rests on a category mistake: a misinterpretation of what heritability is.

When it comes to (b), the inference is invalid since it affirms the consequent. The form is (G -> H; H; ∴ G). The reasoning is that, since genetic determination would produce high heritability, high heritability must imply genetic determination. This doesn’t follow because there are causes of high heritability other than G: environmental uniformity, social stratification, canalized developmental pathways, institutional sorting, and shared rearing conditions, among other things. Thus, the inference is invalid since H does not uniquely imply G. The failure of horn (b) is structural, not empirical.
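The difference between the two horns can be checked mechanically. Below is a minimal sketch that brute-forces the truth table for both argument forms, treating H (“heritability is high”) and G (“the trait is genetically determined”) as plain propositions. The helper names `implies` and `is_valid` are my own, introduced only for illustration.

```python
from itertools import product

def implies(p, q):
    """Material conditional: false only when p is true and q is false."""
    return (not p) or q

def is_valid(premises, conclusion):
    """An argument form is valid if no truth assignment makes every
    premise true while the conclusion is false."""
    for h, g in product([True, False], repeat=2):
        if all(premise(h, g) for premise in premises) and not conclusion(h, g):
            return False  # found a counterexample
    return True

# Horn (a): H -> G; H; therefore G (modus ponens).
horn_a = is_valid(
    premises=[lambda h, g: implies(h, g), lambda h, g: h],
    conclusion=lambda h, g: g,
)

# Horn (b): G -> H; H; therefore G (affirming the consequent).
horn_b = is_valid(
    premises=[lambda h, g: implies(g, h), lambda h, g: h],
    conclusion=lambda h, g: g,
)

print("horn (a) valid:", horn_a)  # True
print("horn (b) valid:", horn_b)  # False
```

The counterexample for horn (b) is the row where H is true and G is false: both premises hold, yet the conclusion fails. Validity is all the check establishes; horn (a) passes it and still fails because its first premise is false.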

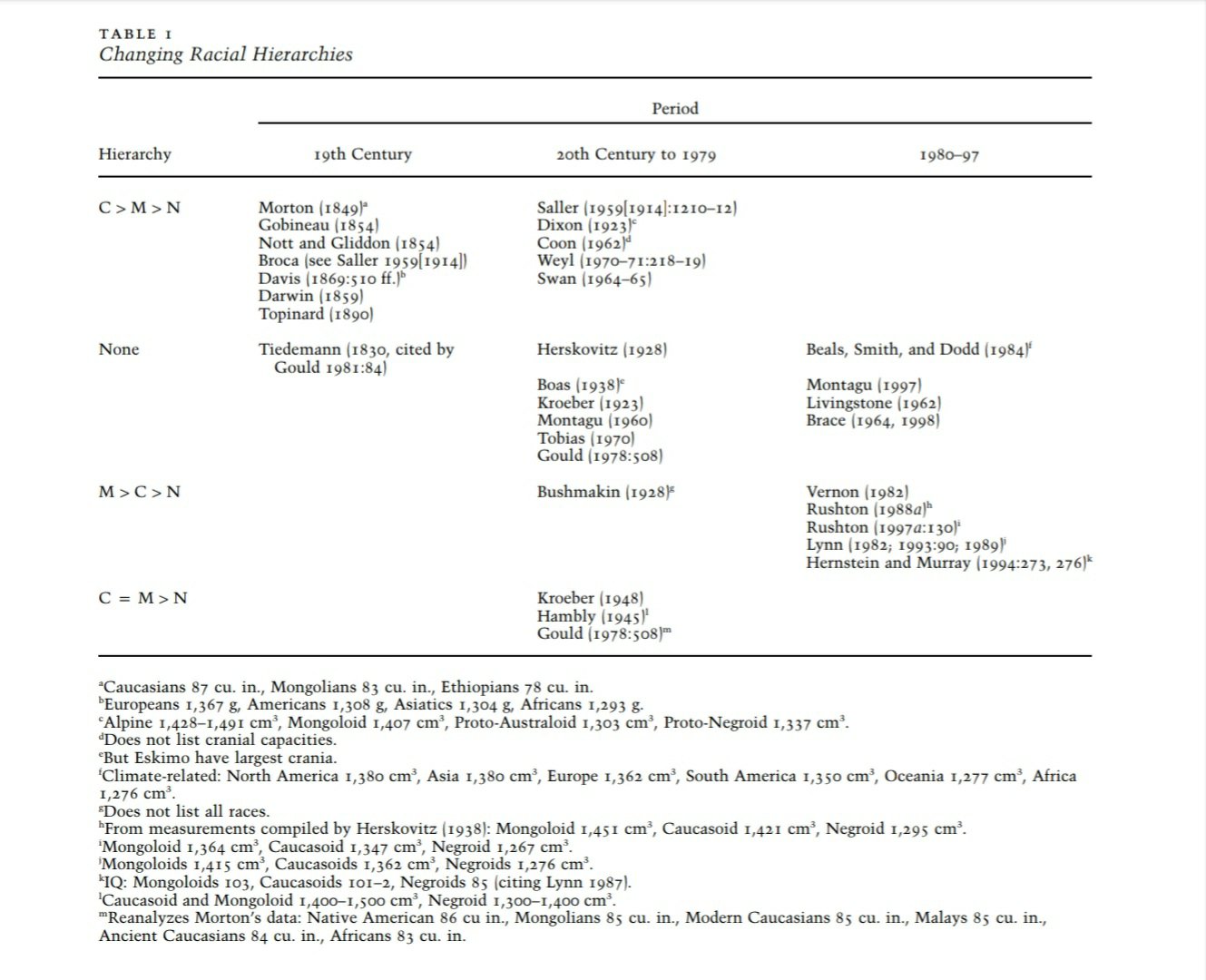

The hereditarian group differences argument is basically: (1) Within-group heritability -> individual genetic causation; (2) individual causation -> group mean difference; (3) group differences -> biological explanation. This is something that Herrnstein and Murray argued for, but Joseph and Richardson (2025) show that there is no evidence for the claim that they made.

Possible responses

“We don’t claim determination, just strong genetic influence.” “Strong genetic influence” can mean a multitude of things: large causal contribution, constraint on developmental outcomes, stability across environments, or explanatory primacy. But heritability doesn’t entail any of those options. High heritability is compatible with large environmental causal effects, massive developmental plasticity, complete reversibility under intervention, and zero mechanistic understanding. The dilemma still applies.
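A small arithmetic sketch makes the compatibility claim concrete. It assumes the simplest additive decomposition, in which phenotypic variance is genetic variance plus environmental variance with no covariance or interaction terms. All of the numbers are hypothetical and chosen only for illustration.

```python
from statistics import mean, pvariance

def heritability(var_genetic, var_environment):
    """Proportion of phenotypic variance associated with genetic variance,
    assuming the simple additive model V_P = V_G + V_E."""
    return var_genetic / (var_genetic + var_environment)

# Same genetic variance under two hypothetical environmental regimes.
h2_varied_env = heritability(var_genetic=1.0, var_environment=1.0)
h2_uniform_env = heritability(var_genetic=1.0, var_environment=0.25)

# A hypothetical uniform intervention adds the same amount to every phenotype.
phenotypes_before = [90.0, 95.0, 100.0, 105.0, 110.0]
phenotypes_after = [p + 15.0 for p in phenotypes_before]

print(h2_varied_env)   # 0.5
print(h2_uniform_env)  # 0.8
print(mean(phenotypes_after) - mean(phenotypes_before))  # 15.0
print(pvariance(phenotypes_after) == pvariance(phenotypes_before))  # True
```

Making the environment more uniform raises the heritability estimate without any change in the genes, and an intervention that shifts everyone by the same amount changes the trait mean while leaving the variance, and so the heritability estimate, untouched.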

“Genes set limits, environments only operate within them.” This is similar to the “genes load the gun and environment pulls the trigger” claim. Unfortunately, for this claim to be meaningful, hereditarians must provide: a non-circular account of “genetic limits”, counterfactual stability across environments, and a developmental mechanism mapping genes -> phenotypes. However, no such limits are specified, reaction norms are unknown, and developmental systems theory shows that genes are necessary enabling conditions, not boundary-setting sufficient causes (e.g., Oyama, 2000; Noble, 2012). This claim just assumes what needs to be proven.

“Polygenic traits are different; heritability is about many small causes.” Unfortunately, polygenicity increases context-sensitivity, developmental underdetermination, and environmental mediation. GWAS hits lack mechanistic understanding and are environment- and population-specific. Therefore, polygenicity undermines, rather than supports, claims of causal genetic determination.

“Heritability predicts outcomes so it must be causal.” This just confuses prediction with explanation. Weather predicts umbrella use, and barometers predict storms, but a barometer reading does not cause the storm. Prediction doesn’t entail causation. Heritability predicts variance under strict, fixed background conditions. It says nothing about why the trait exists, how the trait develops, or what would happen under intervention.

Population stratification and the misattribution of genetic effects

GWAS is heritability operationalized without theory. It assumes additive genetic effects, stable phenotype measurement, linear genotype-phenotype mapping, and environment as noise. But association studies don’t identify causal mechanisms, and more data won’t save the claim, since correlations are inevitable (Richardson, 2017; Noble, 2018). For traits like IQ (since that seems to be the favorite “trait” of the hereditarian GWAS proponent), measurement is rule-governed and non-ratio, development is socially scaffolded, and gene effects covary (non-causally; Richardson, 2017) with institutions, practices, and learning. So GWAS findings are statistically real but explanatorily inert and overinterpreted. PGSs reify population stratification, encode social stratification, and fail under environmental change. Therefore, GWAS falls under the same argument that is mounted here. Richardson (2017) has a masterful argument on how these differences arise, which I have reconstructed below.

High heritability within stratified societies does not indicate that observed group differences are genetically caused. Rather, social stratification simultaneously produces (i) persistent genetic stratification across social groups and (ii) systematic environmental differentiation that directly shapes cognitive development. As a result, between-group differences in cognitive test performance will necessarily mirror the structure of stratification, even when no trait differences are genetically determined.

In such contexts, heritability estimates capture covariance within an already-structured population, not causal genetic contribution. The same stratification processes that maintain patterned mating, residential segregation, and ancestry clustering also produce differential schooling quality, resource exposure, discrimination, and developmental opportunities. These environmental processes directly affect cognitive performance while remaining statistically aligned with ancestry. So the resulting group differences reflect the pattern of stratification rather than the action of genes.

P1: In socially stratified populations, social stratification produces both (a) persistent genetic stratification, and (b) systematic environmental differentiation relevant to cognitive development.

P2: Persistent environmental differentiation directly contributes to cognitive performance outcomes.

P3: Because genetic stratification and environmental differentiation are jointly generated by the same stratification processes, they are necessarily aligned across groups.

C1: Therefore, between-group differences in cognitive test performance in stratified populations necessarily reflect the pattern of ongoing social stratification.

C2: Therefore, the alignment of cognitive differences with genetic population structure does not imply genetic causation, but follows from the way stratification co-produces genetic clustering and environmental differentiation.
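The structure of this argument can be illustrated with a toy simulation. It is a minimal sketch under loudly stated assumptions: two hypothetical groups, a genetic marker that has no effect on the score by construction, and an environment whose average quality differs by group. The group labels, allele frequencies, and effect sizes are all invented for illustration and are not taken from Richardson or from any dataset.

```python
import random

def pearson(xs, ys):
    """Pearson correlation coefficient for two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5

def simulate(n_per_group=5000, seed=0):
    rng = random.Random(seed)
    # Hypothetical groups: (marker allele frequency, mean environment quality).
    groups = {"A": (0.8, 1.0), "B": (0.2, -1.0)}
    pooled_markers, pooled_scores, within = [], [], {}
    for name, (allele_freq, env_mean) in groups.items():
        markers, scores = [], []
        for _ in range(n_per_group):
            # Copies (0, 1, or 2) of a marker that has no effect on the score.
            marker = sum(rng.random() < allele_freq for _ in range(2))
            environment = rng.gauss(env_mean, 1.0)
            # The score depends on environment and noise only.
            score = 100 + 10 * environment + rng.gauss(0, 5)
            markers.append(marker)
            scores.append(score)
        within[name] = pearson(markers, scores)
        pooled_markers += markers
        pooled_scores += scores
    return pearson(pooled_markers, pooled_scores), within

pooled_r, within_r = simulate()
print("pooled marker-score correlation:", round(pooled_r, 2))
for name, r in within_r.items():
    print(f"within group {name}:", round(r, 2))
```

In the pooled sample the inert marker correlates substantially with the score, while within each group the correlation is close to zero. The marker “predicts” the outcome only because stratification has aligned allele frequencies with environments, which is the pattern C2 describes.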

A discerning eye can see what I did here. The heritability pincer argument leads hereditarians into one of two inferences. A hereditarian can now say “Even if heritability isn’t causal, it still tracks genetic causation.” But the Richardson stratification argument blocks this by showing that social stratification sorts both genomes and environments, so cognitive differences can arise without genes being a causal mechanism of them.

Conclusion

The heritability fallacy consists in inferring genetic determination from high heritability, an inference that is either invalid (affirming the consequent) or valid only by presupposing the false premise that heritability measures causal genetic determination rather than population-relative variance.

The argument I have developed shows that hereditarian interpretations of heritability cannot license claims of genetic determinism. If heritability is taken to entail genetic causation, then the inference presupposes that heritability measures causal genetic influence, which it doesn’t. If heritability is instead treated as merely correlating with genetic causation, then the inference from high heritability to genetic causation commits the fallacy of affirming the consequent. In either case, heritability is a statistic of variance partitioning at the level of populations, not a measure of individual-level or developmental causation. It does not identify what produces, sustains, or structures phenotypic differences.

The population stratification argument clarifies why this logical failure is also an empirical one. In socially stratified societies, historical and ongoing processes of segregation, assortative mating, migration sorting, institutional selection, and unequal exposure to developmental environments generate joint covariance between socially structured genetic ancestry and socially structured environmental conditions. Persistent group differences in cognitive test performance in these contexts are therefore not evidence of genetic causation. Rather, they necessarily reflect the underlying pattern of stratification that simultaneously produces genetic clustering and environmental differentiation. Apparent “genetic signals” in behavioral or cognitive outcomes can therefore emerge even when the operative causal mechanisms are environmental and developmental.

Together, these arguments converge on a single conclusion. Heritability estimates do not, and cannot, discriminate between causal pathways that arise from genes and those that arise from socially reproduced environments that covary with genetic structure. To infer genetic determination from heritability is therefore doubly unwarranted: it is a fallacy in form and is unreliable in stratified populations where social structure organizes both gene frequencies and developmental conditions along the same lines. Hereditarianism fails insofar as it treats heritability, or stratification-aligned outcome differences, as evidence of intrinsic genetic causation rather than as reflections of the socially constructed systems that produce and maintain them.

The way that heritability is interpreted, along with how genes are viewed as causal for phenotypes, leads to the conclusion that DNA is a blueprint—Plomin claimed in his 2018 book that DNA is a “fortune-teller”, while genetic tools like GWAS/PGSs are “fortune-telling devices.” Genes don’t carry context-independent information, so genes aren’t a blueprint or recipe (Schneider, 2007; Noble, 2024).

This pincer argument, along with the social stratification argument, shows one thing: hereditarianism fails as an explanation, and environment can explain these observations. Ultimately, genes don’t do anything on their own and don’t act in the way hereditarians need them to for their conclusions to be true and for their research programme to be valid.

The hereditarian has no out here. Due to the assumptions they hold about how traits are fixed and how traits are caused, they are married to certain beliefs. And if those assumptions are false, then the beliefs built on them don’t hold under scrutiny. Heritability is a way to give an air of scientific-ness to their arguments by claiming measurement and putting numbers to things, but the argument I’ve mounted here shows yet again that hereditarianism isn’t a valid science. Hereditarian beliefs about the causation of traits belong in an age where we don’t have the current theoretical, methodological and empirical knowledge on the physiology of genes and developmental systems. Hereditarians need to join the rest of us in the new millennium; their beliefs and arguments belong in the 1900s.

The Conceptual Impossibility of Hereditarian Intelligence

3650 words

Introduction

For more than 100 years, from Galton and Spearman to Burt, Jensen, Rushton, Lynn and today’s polygenic score enthusiasts, hereditarian thinkers have argued that general intelligence is a unitary, highly heritable biological trait and that observed individual and group level differences in IQ and its underlying “g” factor primarily reflect genetic causation. The Bell Curve brought such thinking into the mainstream from obscure psychology journals, and today hereditarian behavioral geneticists claim that 10 to 20 percent of the variance in education and cognitive performance has been explained by GWA studies (see Richardson, 2017). The consensus is that intelligence within and between populations is largely genetic in nature.

While hereditarianism is empirically contested and morally wrong, the biggest kill-shot is that it is conceptually impossible, and one can use many a priori arguments from philosophy of mind to show this. Donald Davidson’s argument against the possibility of psychophysical laws, Kripke’s reading of Wittgenstein, and Nagel’s argument from indexicality can be used to show that hereditarianism is a category error. Ken Richardson’s systems theory can then be used to show that g is an artifact of dynamic systems (along with test construction), and Vygotsky’s cultural-historical psychology shows that higher mental functions (which hereditarians try to explain biologically) originate as socially scaffolded, inter-mental processes mediated by cultural tools and interactions with more knowledgeable others, not individual genetic endowment.

Thus, these metaphysical, normative, systemic, developmental and phenomenological refutations show that hereditarianism is based on a category mistake. Ultimately, what hereditarianism lacks is a coherent object to measure—since psychological traits aren’t measurable at all. I will show here how hereditarianism can be refuted with nothing but a priori logic, and then show what really causes differences in test scores within and between groups. Kripke’s Wittgenstein and the argument against the possibility of psychophysical laws, along with a Kim-Kripke normativity argument against hereditarianism show that hereditarianism just isn’t a logically tenable position. So if it’s not logically tenable, then the only way to explain gaps in IQ is an environmental one.

I will begin by showing that no strict psychophysical laws can link genes/brain states to mental kinds, and then demonstrate that even the weaker functional-reduction route collapses at the very first step because no causal-role definition of intentionality (intelligence) is possible. After that I will add the general rule-following considerations from Kripke’s Wittgenstein to my definition of intelligence, showing that rule-following is irreducibly normative and cannot be fixed by any internal state and that no causal-functional definition is possible. Then I will show that the empirical target of hereditarianism (the g factor) is nothing more than a statistical artifact of historically contingent, culturally-situated rule systems and not a biological substrate. These rule systems do not originate internally, but they develop as inter-mental relations mediated by cultural tools. Each of these arguments dispenses with attempted hereditarian escapes: the very notion of a genetically constituted, rank-orderable general intelligence is logically impossible.

We don’t need “better data”—I will demonstrate that the target of hereditarian research does not and cannot exist as a natural, measurable, genetically-distributed trait. IQ scores are not measurements of a psychological magnitude (Berka, 1983; Nash, 1990); no psychophysical laws exist that can bridge genes to normative mental kinds (Davidson, 1979), and the so-called positive manifold is nothing more than a cultural artifact due to test construction (Richardson, 2017). Thus, what explains IQ variance is exposure to the culture in which the right rules are used regarding the IQ test.

Psychophysical laws don’t exist

Hereditarianism implicitly assumes a psychophysical law like “G -> P.” Psychophysical laws are universal, necessary mappings between physical states and mental states. To reduce the mental to the physical, you need lawlike correlations: whenever physical state P occurs, mental state M occurs. These laws must be necessary, not contingent. They must bridge the explanatory gap from the third-personal to the first-personal. We have correlations, but correlations don’t entail identity. If correlations don’t entail identity, then the correlations aren’t evidence of any kind of psychophysical law. So if there are no psychophysical laws, there is no reduction and there is no explanation of the mental.

Hereditarianism assumes type-type psychophysical reduction. Type-type identity posits that all instances of a mental type correspond to all instances of a physical type. But hereditarians need bridge laws—they imply universal mappings allowing reduction of the mental to the measurable physical. But since mental kinds are anomalous, type-type reduction is impossible.

Hereditarians claim that genes cause g, which then causes intelligence. This requires type-type reduction: intelligence kind = g kind = physical kind. But g isn’t physical; it’s a mathematical construct, the first principal component (PC1). Only physical kinds can be influenced by genes; nonphysical kinds cannot. Even if g correlates with brain states, correlation isn’t identity. Basically, no psychophysical laws means no reduction and therefore no mental explanation.

If hereditarianism is true, then intelligence is type-reducible to g/genes. If type-reduction holds, then strict psychophysical laws exist. So if hereditarianism is true, then strict psychophysical laws exist. But no psychophysical laws exist, due to multiple realizability and Davidson’s considerations. So hereditarianism is false.

We know that the same mental kind can be realized in different physical kinds, meaning that no physical kind correlates one-to-one necessarily with a mental kind. Even if we generously weaken the demand from strict identity to functional laws, hereditarian reduction still fails (see below).

The Kim-Kripke normativity argument

Even the only plausible route to mind-body reduction that most physicalists still defend collapses a priori for intentional/cognitive states because no causal-functional definition can ever capture the normativity of meaning and rule-following (Heikenhimo, 2008). Identity claims like water = H2O only work because the functional profile is already reducible. Since the functional profile of intentional intelligence is not reducible, there is no explanatory bridge from neural states to the normativity of thought. So identity claims fail, which just strengthens Davidson’s conclusions. Therefore, every reductionist strategy that could possibly license the move from “genetic variance -> variation in intelligence” is blocked a priori.

(1) If hereditarianism is true, then general intelligence as a real cognitive capacity must be reducible to the physical domain (genes, neural states, etc).

(2) The only remaining respectable route to mind-body reduction of cognitive/intentional processes is Kim’s three-step functional-reduction model.

(C1) So if hereditarianism is true, then general intelligence must be reducible via Kim’s three-step functional-reduction model.

(3) Kim-style reduction requires—as its indispensable first step—an adequate causal-functional definition of the target property (intelligence, rule-following, grasping meaning, etc) that preserves the established normative meaning of the concept without circularly using mental/intentional vocabulary in the definiens.

(4) Any causal-functional definition of intentional/cognitive states necessarily obliterates the normative distinction between correct and incorrect application (Kripke’s normativity argument applied to mental content).

(C2) Therefore, no adequate causal-functional definition of general intelligence is possible, even in principle.

(5) If no adequate causal-functional definition is possible, then Kim-style functional reduction of general intelligence is impossible.

(C3) So Kim-style functional reduction of general intelligence is impossible.

(C4) So hereditarianism is false.

A hereditarian can resist Kim-Kripke in 4 ways but each fails. (1) They can claim intelligence need not be reducible, but then genes cannot causally affect it, dissolving hereditarianism into mere correlation. (2) They can reject Kim-style reduction in favor of non-reductive or mechanistic physicalism, but these views still require functional roles and collapse under Kim’s causal exclusion argument. (3) They can insist that intelligence has a purely causal-functional definition (processing efficiency or pattern recognition), but such definitions omit the normativity of reasoning and therefore do not capture intelligence at all. (4) They can deny that normativity matters, but removing correctness conditions eliminates psychological content and makes “intelligence” unintelligible, destroying the very trait hereditarianism requires. Thus, all possible routes collapse into contradiction or eliminativism.

The rule-following argument against hereditarianism

Imagine a child who is just learning to add. She computes 68+57=125, and we say that she is correct. Why is 125 correct and 15 incorrect? It isn’t correct because she feels sure, because someone who writes 15 could be just as sure. It isn’t correct because her brain lit up in a certain way, because the neural pattern could also belong to someone following a different rule. It isn’t correct because of all of her past answers, because all past uses were finite and are compatible with infinitely many bizarre rules that only diverge now. It isn’t correct because of her genes or any internal biological state, because DNA is just another finite physical fact inside of her body.

There is nothing inside of her head, body or genome that reaches out and touches the difference between correct and incorrect. But the difference is real. So where does it lie? It lives outside of her in the shared community practices. Correctness is a public status, not a private possession. Every single thing that IQ tests reward—series completion, analogies, classification, vocabulary, matrix reasoning—is exactly this kind of going on correctly. So every single point on an IQ test is an act whose rightness is fixed in the space of communal practice. What we call “intelligence” exists only between us—between the community, society and culture in which an individual is raised.

Intelligence is a normative ability. To be intelligent is to go on in the same way, to apply concepts correctly, to get it right when solving new problems, reasoning, understanding analogies, etc. So intelligence = rule-following (grasping and correctly applying abstract patterns).

Rule-following is essentially normative: there is a difference between seeming right and being right. Any finite set of past performances is compatible with infinitely many rules. No fact about an individual, neither physical nor mental content, uniquely determines the rule they are following. So no internal state fixes the norm. Thus, rule-following cannot be constituted by internal/genetic states. No psychophysical law can connect G to correct rule-following (intelligence).

Therefore rule-following is set by participation in a social practice. Therefore, normative abilities (intelligence, reasoning, understanding) are socially, not genetically, constituted. So hereditarianism is logically impossible.

At its core, intelligence is the ability to get it right. Getting it right is a social status conferred by participation in communal practices. No amount of genetic or neural causation can confer that status—because no internal state can fix the normative fact. So the very concept of “genetically constituted general intelligence” is incoherent. Therefore, hereditarianism is logically impossible.

(1) H -> G -> P

Hereditarianism -> genes/g -> normative intelligence

(2) P -> R

Normative intelligence -> correct rule-following.

(3) R -> ~G

Rule following cannot be fixed by internal physical/mental states.

So ~(G -> P)

So ~H.

The Berka-Nash measurement objection

This is a little-known critique of psychology and IQ. It was first put forth in Karel Berka’s 1983 book Measurement: Its Concepts, Theories, and Problems, and then elaborated on in Roy Nash’s (1990) Intelligence and Realism: A Materialist Critique of IQ.

If hereditarianism is true, then intelligence must be a measurable trait (with additive structure, object, and units) that genes can causally influence via g. If intelligence is measurable, then psychophysical laws must exist to map physical causes to mental kinds. But no such measurability or laws exist. Thus, hereditarianism is false.

None of the main, big-name hereditarians have ever addressed this type of argument. (Although Brand et al., 2003 did attempt to, their critique didn’t work and they didn’t even touch the heart of the matter.) Clearly, hereditarian psychology is vulnerable to such critique. The above argument shows that IQ is quasi-quantification, without an empirical object, structure, or lawful properties.

The argument for g is circular

“Subtests within a battery of intelligence tests are included on the basis of them showing a substantial correlation with the test as a whole, and tests which do not show such correlations are excluded.” (Tyson, Jones, and Elcock, 2011: 67)

g is defined as the common variance of pre-selected subtests that must correlate. Subtests are included only if they correlate. A pattern guaranteed by construction cannot be evidence of a pre-existing biological unity. So g is a tautological artifact, not a natural kind that genes can cause.
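The selection effect described here can be sketched with a toy simulation. It assumes a pool of 20 hypothetical candidate subtests, half of which draw on some shared influence (left deliberately unspecified, for example familiarity with test-like tasks) and half of which do not, and it screens the pool by item-total correlation with an arbitrary cutoff of 0.4. The pool, the cutoff, and the variable names are all assumptions for illustration, not a reconstruction of how any real battery was built.

```python
import random

def pearson(xs, ys):
    """Pearson correlation coefficient for two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5

def mean_pairwise_correlation(tests):
    """Average correlation over all distinct pairs of subtests."""
    pairs = [(a, b) for i, a in enumerate(tests) for b in tests[i + 1:]]
    return sum(pearson(a, b) for a, b in pairs) / len(pairs)

rng = random.Random(1)
n_people = 2000
# Hypothetical shared influence on some candidate subtests.
shared = [rng.gauss(0, 1) for _ in range(n_people)]

candidates = []
for i in range(20):
    if i < 10:
        # Subtests that draw on the shared influence plus their own noise.
        test = [s + rng.gauss(0, 1) for s in shared]
    else:
        # Subtests unrelated to the shared influence.
        test = [rng.gauss(0, 1.4) for _ in range(n_people)]
    candidates.append(test)

# Total score per person across the whole candidate pool.
totals = [sum(person_scores) for person_scores in zip(*candidates)]

# Screening rule: keep a subtest only if it correlates with the total.
selected = [t for t in candidates if pearson(t, totals) >= 0.4]

pool_r = mean_pairwise_correlation(candidates)
battery_r = mean_pairwise_correlation(selected)
print("subtests kept:", len(selected), "of", len(candidates))
print("mean correlation, full pool:", round(pool_r, 2))
print("mean correlation, screened battery:", round(battery_r, 2))
```

The screened battery shows uniformly positive intercorrelations because the screening rule discards everything that does not correlate. The sketch says nothing about what the shared influence is; it only shows that the pattern in the retained battery is produced by the inclusion rule, so the pattern cannot by itself count as evidence for a biological unity.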

Hereditarians need g to be a natural kind trait that genes can act upon. But g is an epiphenomenal artifact due to test construction produced by current covariation of culturally specific cognitive tasks in modern school societies. Artifacts of historically contingent cultural ecologies are not natural kind traits. So g is not a natural kind. So hereditarianism is false.

The category error argument

Intelligence is a first-person indexical act. g is a third-person statistical abstraction. There can be no identity between a phenomenal act and a statistical abstraction. So g cannot be intelligence, and no reduction is possible.

There is no such thing as genetically constituted general intelligence since intelligence is a rational normative competence, the g factor is an epiphenomenal artifact of a historically contingent self-organizing cultural-cognitive ecology, and higher psychological functions originate as social relations mediated by cultural tools which only later appear individual. Hereditarianism tries to explain a normative status with causal mechanisms, a dynamic cultural artifact with a fixed trait, and an inter-mental function with intra-cranial genetics.

g is a third-person statistical construct. Intelligence, as a psychological trait, consists of first-person indexical cognitive acts. Category A is third-person and impersonal (g, PGS, allele frequencies, brain scans). Category B is first-person, subjective, and experiential.

Genetic claims assert that differences in g (category A) are caused by differences in genes and that this then explains differences in intelligence (category B). For such claims to be valid, g (category A) must be identical to intelligence (category B). But g has no first-person phenomenology meaning no one experiences using g, while intelligence does. So g (category A) cannot be identical to intelligence (category B).

Thus, claiming genes cause differences in g which then explain group differences in intelligence commits a category error, since a statistical artifact is equated with a lived, psychological reality.

A natural-kind trait must be individuated independent of the measurement procedure. g is individuated only by the procedure (PC1 extracted from tests chosen for their intercorrelations). Therefore, g is not a natural-kind trait. Only natural kinds can plausibly be treated as biological traits. Thus, g is not a biological trait.

Combining this argument with the Kim-Kripke normativity argument shows that hereditarians don’t just reify a statistical abstraction, they try to reduce a normative category into a descriptive one.

Vygotsky’s social genesis of higher functions

Higher psychological functions originate as social relations mediated by cultural tools which only later appear individual. If hereditarianism is true, then higher psychological functions originate as intra-individual genetic endowments. A function cannot originate both as inter-mental social relations and as intra-individual genetic endowments. So hereditarianism is false.

Intelligence is not something a sole individual possesses; it is something a person achieves within a cultural-historical scaffold. Intelligence is not an individual possession that can be ranked by genes; it is a first-person indexical act that is performed within, and made possible by, that social scaffold.

Ultimately, Vygotsky’s claim is ontological, not merely developmental. Higher mental functions are constituted by social interaction and cultural tools. Thus, their ontological origin cannot be genetic because the property isn’t intrinsic, it’s relational. No amount of intra-individual genetic variation can produce a relational property.

Possible counters

“We don’t need reduction, we only need prediction/causal inference. We’re only showing genes -> brains -> test scores.” If genes or polygenic scores causally explain the intentional-level fact that someone got question 27 right, there must be a strict law covering the relation. There is none. All they have is physical-physical causation—DNA -> neural firing -> finger movement. The normative fact that the movement was the correct one is never touched by any physical law.

“Intelligence is just whatever enables success on complex cognitive tasks; we can functionalize it that way and avoid normativity.” This is the move that Heikenhimo (2008) takes out. Any causal-role description of “getting it right on complex tasks” obliterates the distinction between getting it right and merely producing behavior that happens to match. The normativity argument shows you can’t define “correct application” in purely causal terms without eliminativism or circularity.

“g is biologically real because it correlates with brain volume, reaction time, PGSs, etc.” Even if every physical variable perfectly correlated with getting every Raven item right, it still wouldn’t explain why one pattern is normatively correct and another isn’t. The normative status is anomalous and socially constituted. Correlation isn’t identity and identity is impossible.

“Heritability is just a population statistic.” Heritability presupposes that the trait is well-defined and additive in the relevant population. The Berka-Nash measurement objection shows that IQ (and any psychological trait) is not a quantitatively-structured trait with a conjoint measurement structure. Without that, h2 is either undefined or meaningless.

Even then, the hereditarian can agree with the overall argument I’ve mounted here and say something like: “Psychometrics and behavioral genetics have replaced the folk notion of intelligence with a precise, operational successor concept: general cognitive ability as indexed by the first principal component of cognitive test variance. This successor concept is quantitative, additive, biologically real and has non-zero heritability. We aren’t measuring the irreducibly normative thing you’re talking about; we’re measuring something else that is useful and genetically influenced.” Unfortunately, this concept fails once you ask what justifies treating the first PC as a causal trait. As soon as hereditarians claim it causes anything at the intentional level (higher g causes better reasoning, genetic variance causes higher g which causes higher life success), they are back to needing psychophysical laws or a functional definition that bridges the normative gap. If they then retreat to pure physical prediction, they have abandoned the claim that genes cause intelligence differences. Therefore, this concept is either covertly normative (and therefore irreducible), or purely descriptive/physical (and therefore irrelevant to intelligence).

A successor concept can replace a folk concept if and only if it preserves the explanatorily relevant structure. But replacing “intelligence” with “PC1 of test performance” destroys the essential normative structure of the concept. Therefore, g cannot serve as a scientific successor to the concept of intelligence.

“We don’t need laws, identity, or functional definitions. Intelligence is a real pattern in the data. PGSs, brain volume, reaction time, educational attainment and job performance all compress onto a single and robust predictive dimension. That dimension is ontologically real in exactly the same way as temperature is real in statistical mechanics even before we had microphysical reduction. The heritability of the pattern is high. Therefore genes causally contribute to the pattern. g, the single latent variable, compresses performance across dozens of cognitive tests, predicts school grades, job performance, reaction time, brain size, PGSs with great accuracy. This compression is identical across countries, decades, and test batteries. So g is as real as temperature.” This “robust, predictive pattern” is real only as conformity to culturally dominant rule systems inside modern test-taking societies. The circularity of g still rears its head.

Conclusion

Hereditarianism rests on the unspoken assumption that general intelligence is a natural-kind, individual-level, biologically-caused property that can be lawfully tied to, or functionally defined in terms of, genes and brain states. Davidson shows there are no psychophysical laws; Kim-Kripke show even functional definitions are impossible; Kripke-Wittgenstein show that intelligence is irreducibly normative and holistic; Richardson/Vygotsky show that g is a cultural artifact and that higher mental faculties are born inter-mental.

Because IQ doesn’t measure any quantitatively-structured psychological trait (Berka-Nash), and no psychophysical laws exist (Davidson), the very notion of additive genetic variance contributing to variance in IQ is logically incoherent – h2 is therefore 0.

Hereditarianism requires general intelligence to be (1) a natural-kind trait located inside the skull (e.g., Jensen’s g), (2) quantitatively-structured so that genetic variance components are meaningful, (3) reducible, whether by strict laws or functional definition, to physical states that genes can modulate, and (4) the causal origin of correct rule-following on IQ tests. Every one of these requirements is logically impossible: no psychophysical laws exist (Davidson), no functional definition of intentional states is possible (Heikenhimo), rule-following is irreducibly normative and socially constituted (Kripke-Wittgenstein), IQ lacks additive quantitative structure (Berka, Nash, Michell, Richardson), and higher mental functions originate as social relations (Vygotsky).

Now I can say that: Intelligence is the dynamic capacity of individuals to engage effectively with their sociocultural environment, utilizing a diverse range of cognitive abilities (psychological tools), cultural tools, and social interactions, and realized through rule-governed practices that determine the correctness of reasoning, problem solving and concept application.

Differences in IQ, therefore, aren’t due to differences in genes/biology (no matter what the latest PGS/neuroimaging study tells you). They show an individual’s proximity to the culturally and socially defined practices on the test. So from a rule-following perspective, each test item has a normatively correct solution, determined by communal standards. So IQ scores show the extent to which someone has internalized the relevant, culturally-mediated rules, not a fixed, heritable mental trait.

So the object that hereditarians have been trying to measure and rank by race doesn’t and cannot exist. There is no remaining, respectable position for the hereditarian to turn to. They would all collapse into the same category error: trying to explain a normative, inter-mental historically contingent status with intra-cranial causation.

No future discovery (no better PGSs, no perfect brain scan, no new and improved test battery) can ever rescue the core hereditarian claim, because the arguments here are conceptual. Hereditarianism is clearly a physicalist theory, but because physicalism cannot accommodate the normativity and rule following that constitute intelligence, the hereditarian position inherits physicalism’s failure, making it untenable. Hereditarianism needs physicalism to be true. But since physicalism is false, so is hereditarianism.

(1) If hereditarianism is true then general intelligence must be a quantitatively-structured, individual-level, natural-kind trait that is either (a) linked by strict psychophysical laws or (b) functionally reducible to physical states genes can modulate.

(2) No such trait is possible since no psychophysical laws exist (Davidson), no functional reduction of intentional/normative states is possible (Kim-Kripke normativity argument), and rule-following correctness is irreducibly social and non-quantitative (Wittgenstein/Kripke, Berka, Nash, Michell, Richardson, Vygotsky).

(C) Therefore, hereditarianism is false.
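
The form of this argument is modus tollens, and its validity can be checked mechanically. What follows is a minimal Lean 4 sketch, in which H and T are placeholder propositions of my own choosing: H stands for “hereditarianism is true” and T for “general intelligence is a trait of the kind described in (1).” The proof only establishes that (C) follows from (1) and (2); whether the premises are true rests on the arguments given above, not on the formalization.

```lean
-- H : hereditarianism is true (placeholder proposition)
-- T : general intelligence is a trait of the kind premise (1) requires
theorem hereditarianism_false (H T : Prop)
    (p1 : H → T)   -- premise (1): if H, then T
    (p2 : ¬T)      -- premise (2): no such trait is possible
    : ¬H :=        -- conclusion (C)
  fun h => p2 (p1 h)
```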

The Arbitrariness of “IQ/Intelligence”

2200 words

…but the question “What is intelligence?” has only ever been answered by a shifting social consensus. So perhaps, like the stuff of dreams and nightmares, it too belongs in the realm of mere appearances. (Goodey, 2011)

IQ groupings/cutoffs are arbitrary. What I mean by “arbitrary” is something without reason or justification; something that is not supported by facts or reasons. What reasons or facts justify the groupings? The arbitrariness of IQ is also seen historically when we look at how score distributions were changed when different assumptions were held about the “nature” of “intelligence” (e.g., Terman, 1916; Hilliard, 2012). In this article, I will argue that IQ cutoffs are arbitrary and have no rational justification; test constructors use them simply because they yield the distributions they want.

The arbitrariness of such cutoffs and groupings has been known since the first tests were being created by American test constructors, after Binet and Simon’s test was brought over from France by Goddard in 1910. (See here for a history of the testing movement and how they construct the test.) Terman (1916: 89) warned “That the boundary lines between such groups [feebleminded, dull, superior, genius etc.] are arbitrary.” It is also in this same book, The Measurement of Intelligence, that Terman adjusted the scores of men and women, adding and subtracting the items that men and women most often got right or wrong in order to even out their scores. Terman did this by putting items on the test that men were good at (“arithmetical reasoning, giving differences between a president and a king, solving the form board, making change, reversing hands of a clock, finding similarities, and solving “the induction test.”” [Terman, 1916: 81]) while also putting items on the test that women were good at (“drawing designs from memory, aesthetic comparison, comparing object from memory, answering the “comprehension questions”, repeating digits and sentences, tying a bow-knot, and finding rhymes” [Terman, 1916: 81]). This can also be seen in SAT differences between men and women, as Rosser (1989) points out. It is a matter of item selection/analysis and of what distribution of scores is desired.

Such arbitrary IQ cutoffs for these “groups”, on which Terman made value judgments, reflect the need of IQ-ists to conceptualize “intelligence” as normally distributed, with most people falling in the middle and fewer on the tails, where “geniuses” and above are on the right and the “mildly impaired and delayed”, per the 5th edition of the Stanford-Binet, are on the left. But the normal distribution for “IQ” is a myth (Richardson, 2017: chapter 2). The construction of normally distributed IQ tests means that any and all “group distinctions” and “cutoffs” are arbitrary. The test was created first, AND THEN they attempt to deduce what it “measures” on the basis of correlations with other tests and with academic achievement. Further, even showing that there is a relationship between IQ scores and academic achievement is irrelevant, because they are different versions of the same test, meaning that the item content is similar between the tests (Schwartz, 1975; Beaujean et al, 2018). The distribution is a creation of the test’s constructors, not something that we just so happened to find when these tests were created.

Thus, the “bell curve” is an artifact, not a fact, of test construction (Simon, 1997). Items are added and removed on a sample population until the desired distribution is reached. And it is this artificial distribution that all IQ theorizing rests on, and it is this artificial distribution that IQ-ists attempt to use for their cutoffs between different “grades” of “intelligence”. On the constructed bell curve, about 2.3% of people fall below 70, because the test was constructed to get this result. So, if the bell curve is an artificial production created by humans, then so is the classification system (“intelligence”). If the classification system is an artificial creation, then so too is the concept of “learning disability.” Bazemore, Shinaprayoon, and Martin write that:
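
The share of people falling under a cutoff on the constructed curve is just the tail area of a normal distribution, so it follows from the norming parameters and nothing else. The sketch below assumes the conventional norming of mean 100 and standard deviation 15; those parameters, the function name normal_cdf, and the extra cutoffs of 75 and 85 are my assumptions for illustration. Under that assumption the share below 70 comes out near 2.3%, close to the figure quoted above.

```python
import math

def normal_cdf(x, mean=100.0, sd=15.0):
    """Cumulative probability of a normal distribution at x.

    mean=100 and sd=15 are assumed norming conventions, not facts
    about any particular test edition.
    """
    return 0.5 * (1.0 + math.erf((x - mean) / (sd * math.sqrt(2.0))))

# Share of the norming population that falls below each cutoff.
shares = {cutoff: normal_cdf(cutoff) for cutoff in (70, 75, 85)}

for cutoff, share in shares.items():
    print(f"below {cutoff}: {share * 100:.1f}% of the constructed distribution")
```

Moving the line by a few points multiplies the number of people who fall under it, which is why the choice of where to draw it matters so much for those being classified.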

By developing an exclusion-inclusion criteria that favored the aforementioned groups, test developers created a norm “intelligent” (Gersh, 1987, p.166) population “to differentiate subjects of known superiority from subjects of known inferiority” (Terman, 1922, p. 656).

So basically, test constructors had in mind—before they developed the test—who was or was not “intelligent” and then built the test to fit their desires. I can see someone saying “Why does this matter if it happened 100 years ago?” Well, it matters because there is no conceptual support for hereditarian thinking for psychological traits and if there is no support, then the only reason they persist is due to prejudice (Mensh and Mensh, 1991). Furthermore, newer IQ tests use similar items as older ones, and newer tests are “validated” against older tests (like the Stanford-Binet), and so, biases in those tests carry over, without conscious bias toward groups being an ultimate goal (Richardson, 2002: 287,

The arbitrariness of IQ can also be seen with the cutoff for learning disability: a score of 70 or below is taken to mean that the individual needs remedial help, and so the IQ test is seen as a good instrument for these purposes. But IQ tests are arbitrary in their use to reflect deficits in everyday functioning (Arvidsson and Granlund, 2016). Cutoffs for learning disabilities have fluctuated between IQ 70 and 85 over the years. Someone in the US is defined as “learning disabled” if there is a discrepancy between their academic achievement and their “intelligence” (i.e., IQ test score). But is there any justification for either criterion, or for a cutoff such that anyone under a certain magic number is thereby “learning disabled”?

The answer is no, because IQ is irrelevant to the definition of learning disabilities (Siegel, 1988, 1989, 1993). It is absolutely unnecessary to give IQ tests to identify the learning disabled and the existence of a discrepancy is not a necessary condition (Gunderson and Siegel, 2001). People under IQ 70 frequently do not need specialist services whereas people with IQs over 70 frequently do (Whitaker, 2004). Such tests only see WHAT a person has learned, they DO NOT estimate one’s intellectual “capability”; since IQ tests are tests of a certain type of knowledge, it then follows that exposure to the items on the test and test structure, along with other non-cognitive variables (Richardson, 2002), explains test score differences and that these differences can be built into and out of the test based on certain a priori assumptions. It further follows that if one has a low score, they were not exposed to the item content and structure of the test and that it is not a “deficit of intelligence” like IQ-ists claim.

Webb and Whitaker (2012) describe the double think employed by many clinical psychologists, privately acknowledging the limitations of IQ tests and the arbitrary nature of the cut-off score of 70 IQ points that defines learning disability, whilst publicly and professionally talking about learning disabilities ‘as if it were a real, naturally occurring condition’ (p. 440). Thus the diagnostic procedure involving IQ tests can be seen as a way of passing off culturally specific norms of competence (measured through arcane rituals of assessment) as if they were universal and incontrovertible. (Chinn, 2021: 137-138)

The arbitrariness of IQ 70 as the cutoff for mental disability also rears its head in the courtroom, when defendants are on trial for murder. In Atkins v. Virginia, SCOTUS ruled that it was unconstitutional to execute intellectually disabled people. Then in Hall v. Florida, it was ruled that an IQ score was not, by itself, useful in the justification of sentencing; courts needed to use other medical/diagnostic criteria. Some people may cry something like “But IQ only matters to the people who say it does not matter when a defendant facing execution is spared because he is found to have an IQ below 70!” Never mind the ethical debate on the death sentence; the arbitrary cutoff of 70 for mental retardation (which, as has been shown, does not hold) has numerous legal and societal consequences for the individual so unluckily deemed “disabled.”

Kanaya and Ceci (2007) argue that when an individual took a test (that is, whether they took it at the beginning or the end of the test’s norming cycle) could dictate whether or not they fell under the arbitrary IQ 70 cutoff and so could not be executed. So the year in which a defendant on trial for murder was tested is literally a life or death issue. Prosecutors in many US states have successfully argued for “ethnic adjustments” for IQ. Sanger (2015) reviews many US cases in which prosecutors have done so. Arguing that “ethnic adjustments” for IQ are “logically, clinically, and unconstitutionally unsound”, he reviews studies that show that abuse, neglect, poverty, and trauma decrease test scores and that the abuse, neglect, poverty, and trauma can be epigenetically passed on through multiple generations. Sanger (2015: 148-149) concludes:

Furthermore, any correlations between the average IQ test scores of racial cohorts (or average scores of cohorts to the overall community norm) are not attributable to race and are heavily influenced by race-neutral environmental factors. Those race-neutral environmental factors include the effects of the environment of childhood abuse, stress, poverty, and trauma. Such adverse environmental (but race-neutral) factors likely result in phenotypic manifestations, which include epigenetic changes affecting intellectual ability and result in greater numbers of persons with intellectual disabilities within that population. The individuals whose intellectual ability is adversely affected by those harmful environmental factors are disproportionately represented by minority groups and among those facing the death penalty in the United States.

Therefore, the actual recipients of death sentences—the people on death row—are poor, of color, and have disproportionately been subjected to stress, poverty, abuse, and trauma. These very people are likely to suffer from actual phenotypic/biological impairment in intellectual functioning that can be passed down by way of programmed epigenetic gene expression through generations.

Quite clearly, this arbitrary IQ 70 cutoff for “intellectual disability” has real-life implications for some people, and in some cases it is a life or death matter based on “ethnic adjustments” and on when an individual took a specific test in that test’s lifecycle before renorming. Sanger showed that the IQ scores of blacks and “Hispanics” are routinely adjusted upwards so that they can face the death penalty: the adjustment pushes them above the “cutoff” so they can be executed.

In my view, such distinctions between “IQ groups” like those created by Terman, which continue into the present day, are an attempt at naturalizing “intellectual disability”; an attempt at saying that these are “natural kinds.” But “intelligent people and intellectually disabled people are not natural kinds but historically contingent forms of human self-representation and social reciprocity, of relatively recent historical origin” (Goodey, 2011: 13). So, intellectual disability, learning disability, and intelligence are all social constructs (which do not denote natural kinds) and they change with the times.

But Herrnstein and Murray (1994: 1) argued that “the word intelligence describes something real and…it varies from person to person is as universal and ancient as any understanding about the state of being human. Literate cultures everywhere and throughout history have words for saying that some people are smarter than others.” But unfortunately for Herrnstein and Murray, “Intelligence as currently and conventionally understood by psychologists is a brashly modern notion” (Daston, 1992: 211).

Conclusion

The arbitrariness of the designation of “intelligence” means that “IQ/intelligence” is not a “thing”, nor is it a “natural kind”, but it is indeed a socially constructed historical notion (Goodey, 2011), as is the concept of “giftedness” (Borland, 1997). The creation of these tests and indeed the label “intellectually disabled” is completely racialized (Chinn, 2021). The arbitrariness and socially constructed notion of what “intelligence” is can be seen just by analyzing the test items: they are heavily classed and racialized, specifically white middle-class. When it comes to the death penalty and IQ, there are very serious issues, as when an individual was given a test may be the deciding factor between life and death. Minorities are also more likely to be on death row, and they are more likely to experience abuse, trauma, etc., which can then be passed on generationally and influence test scores. And in test construction there is no justification for a certain set of items; whatever gets the desired distribution is what is “right”. That’s why “IQ” is arbitrary.

We need to dispense with the idea that there is a “thing” called “intelligence” and that it is biological; we need to understand that what we do call “intelligence” is socially constructed, since what psychologists call “intelligent” is answering items right and getting a higher score on tests which are heavily biased toward certain races/classes in America. Once we understand that this concept is socially constructed and is not biological, maybe we won’t repeat past mistakes, like sterilizing tens of thousands of people in the name of eugenics.

Reductionism, Natural Selection, and Hereditarianism

3400 words

Assertions derived from genetic reductionist ideas also ignore the abundant and burgeoning evidence that genes are outcomes of evolutionary processes and not bases of them. (Lerner, 2021: 449)

Genetic reductionism places (social) problems “in the genes” and so if these problems are “in the genes” then we can either (1) use gene therapy, (2) reduce the frequency of “the bad genes” in the population (eugenics) or (3) just live with these genetically caused problems. Social groups differ materially and they also differ genetically. To the gene-determinists, social positioning is genetically determined and it is due to a genetically determined intelligence. (See here for arguments against the claim.)

On the basis of heritability estimates derived from flawed methodologies like twin and adoption studies (Richardson and Norgate, 2005; Joseph, 2014; Burt and Simons, 2015; Moore and Shenk, 2016), hereditarians claim that traits like “IQ” (“intelligence”) are strongly genetically determined and if a trait is strongly genetically determined, then environmental interventions are doomed to fail (Jensen, 1969). Since IQ is said to have a heritability of .8, it is then claimed by the reductionist that environmental interventions are useless or near useless. Indeed, this was the conclusion of Jensen’s (1969) (infamous) paper—compensatory education has failed (an environmental intervention) and so the differences are genetic in nature.

Arguments like those have been forwarded for the better part of 100 years, and the arguments are false because they rely on false assumptions. The false assumptions are (1) that natural selection has caused trait differences between populations and (2) that genes are active, not passive, causes. (1) and (2) here can be combined for (3): genes that cause differences between groups were naturally selected and eventually fixed in the populations. This article will review some hereditarian thinking on natural selection and human variation, show how the theorizing is false, show how the theory of natural selection itself cannot possibly be true (Fodor and Piattelli-Palmarini, 2010) and finally will show that by accepting genetic reductionism we cannot achieve social justice since the causes of the social problems reduce to genes.

The ultimate claim from hereditarians is that human behavior, social life, and development can be reduced to, and explained by, genes. Social inequities are the target for social justice. Inequities refer to differences between groups that are avoidable and unjust. So the hereditarian attempts to reduce social ills to genes, thereby getting around what social justice activists want. They just reduce it to genes, leading to possibilities (1)-(3) above. This has the possibility of being disastrous, for if we can fix the problems the hereditarians deem “genetic” but do not try because they are deemed genetic, then countless lives will not be made better.

Hereditarianism and natural selection

The crucial selection pressure responsible for the evolution of race differences in intelligence is identified as the temperate and cold environments of the northern hemisphere, imposing greater cognitive demands for survival and acting as selection pressures for greater intelligence. (Lynn, 2006: 135)

Hereditarians are neo-Darwinians and since they are neo-Darwinians, they hold that natural selection is the most powerful “mechanism” of evolution, causing trait changes by culling organisms with “bad” traits, which then decreases the frequency of the genes that supposedly cause the trait. But (1) natural selection cannot possibly be a mechanism as there is no agent of selection (that is, no mind selecting organisms with fitness-enhancing traits for a certain environment), nor are there laws of selection for trait fixation that hold across all ecologies (Fodor and Piattelli-Palmarini, 2010); and (2) genes aren’t causes of traits on their own; they are caused to give the information in them by and for the physiological system (Noble, 2011).

In his article Epistemological Objections to Materialism, in The Waning of Materialism, Koons (2010: 338) has an argument against natural selection with the same force as Fodor and Piattelli-Palmarini (2010):

The materialist must suppose that natural selection and operant conditioning work on a purely physical basis (without presupposing any prior designer or any prior intentionality of any kind). According to anti-Humean materialism, only microphysical properties can be causally efficacious. Nature cannot select a property unless that property is causally efficacious (in particular, it must causally contribute to survival and reproduction). However, few, if any, of the biological features that we all suppose to have functions (wings for flying, hearts for pumping bloods) constitute microphysical properties in a strict sense. All biological features (at least, all features above the molecular level) are physically realized in multiple ways (they consist of extensive disjunctions of exact physical properties). Such biological features, in the world of the anti-Humean materialist, don’t have effects—only their physical realizations do. Hence, the biological features can’t be selected. Since the exact physical realizations are rarely, if ever repeated in nature, they too cannot be selected. If the materialist responds by insisting that macrophysical properties can, in some loose and pragmatically useful way of speaking, be said to have real effects, the materialist has thereby returned to the Humean account, with the attendant difficulties described in the last sub-section. Hence, the materialist is caught in the dilemma.

We can grant that “nature” cannot select a trait if it isn’t causally efficacious. But combining Fodor’s argument with Koons’, if traits are linked then the fitness-enhancing trait cannot be directly selected-for since when you have one, you have the other. In any case, “natural selection” is part of the bedrock of hereditarian theorizing. It was natural selection—according to the hereditarian—that caused racial differences in behavior and “intelligence.” And so, if the hereditarian has no response to these two arguments against natural selection, then they cannot logically claim that the differences they describe are due to “natural selection.”

So the hereditarian theorist asserts that those with genes that conferred a fitness advantage had more children than those that didn’t which led to the selection of the genes that became fixed in certain populations. This is a familiar story—and the hereditarian uses this as a basis for the claim that racial differences in traits are the outcome of natural selection. These views are noted in Rushton (2000: 228-231), Jensen (1998: 170, 434-436) and Lynn (2006: Chapters 15, 16, and 17). But as Noble (2012) noted, there is no privileged level of causation—that is, before performing the relevant experiments, we cannot state that genes are causes of traits so this, too, refutes the hereditarian claim.

Consider Rushton’s “Differential K” theory, in which Mongoloids, Caucasians, and Africans differ on a suite of traits, which is influenced by their life histories and whether they are r- or K-strategists. Rushton (2000: 27) also claimed that “different environments cause, via natural selection, biological differences”, and by this he means that the environment acts as a filter. But the claim that the environment is the filter that causes variation in traits due to genes being “selected against” fails, too. When traits are correlated, the environmental filters (the mechanism by which selection theory purportedly works) cannot distinguish between causes of fitness and mere correlates of causes of fitness. So appealing to environments causing biological differences fails.

But unfortunately for hereditarians, a new analysis by Kevin Bird refutes the claim that natural selection is responsible for racial differences in “IQ” (Bird, 2021). So now, even assuming that genes can be selected-for their contribution to fitness and assuming that psychological traits can be genetically transmitted (which is false), hereditarianism still fails.

Hereditarianism and genetic reductionism

The ideology of IQ-ism is inherently reductionist. Behavioral geneticists, although they claim to be able to partition the relative contributions of genes and environment into neat little percentages, are also reductionists about “traits” such as “IQ.” Further, if one is an IQ-ist then there is a good chance that they would fall into the reductionist camp of attempting to explain “intelligence” as being reducible to physiological brain states, and parts of the brain (such as Deary, 1996; Deary, Penke and Johnson, 2010; Jung and Haier, 2007; Haier, 2016; Deary, Cox, and Hill, 2021).

Reductionism can be simply stated as the view that the parts have a sort of causal primacy over the whole. When it comes to psychological reduction, it is often assumed that genes would be the ultimate thing that it is reduced to, thereby explaining how and why psychological traits differ between individuals, most importantly to the IQ-ist, “intelligence.” Behavioral geneticists have been reductionists since the field’s inception, which has carried over to the present day (Panofsky, 2014). Even now, in the third decade of the 21st century, reductionist accounts of behavior and psychology are still being pushed and the attempted reduction is reduction to genes. Now, this does not mean that environmental reduction has primacy, although we can and have identified environmental insults that do impede the ontogeny of certain traits.

Deary, Cox, and Hill (2021) argue for a “systems biology” approach to the study of “intelligence.” They review GWAS and neuroimaging studies and attempt to lay the groundwork for a “mechanistic account” of intelligence, picking up where Jung and Haier (2007) left off. Unfortunately, the claims they make about GWAS fail (Richardson and Jones, 2020; Richardson, 2017b, 2021) and so do the claims they make about neuroreduction (Uttal, 2012).

This kind of genetic reductionism about psychological traits, along with social ills such as addiction and violence, then becomes ideological, in holding that genes can explain how and why we have these kinds of problems. Indeed, this was why the first “IQ” tests were translated and brought to America: to screen and bar immigrants the IQ-ists saw as “feebleminded” (Richardson, 2003, 2011; Allen, 2006; Dolmage, 2018). Such tests were also used to sterilize people in the name of a eugenic ideology that was said to be for the betterment of society (Wilson, 2017). Thus, when such kinds of reductionism are applied to society and become an ideology, we can see how such pseudoscientific beliefs manifest themselves in negative outcomes for the populace.

Ladner (2020: 10) “constructed an economic analysis grounded in evolutionary biology.” Ladner claims that “Natural Selection is the main force that determines economic behavior.” Ladner claims that socialism will always fail since authoritarian regimes stifle our selfish proclivities while capitalism is grounded in selfishness and greed and so will always prevail over socialism. This is quite the unique argument… Of course Dawkins gets cited since Ladner is talking about selfishness, and these selfish genes are what cause the selfish behavior that allows capitalism to flourish. But the claim that genes are selfish is not a physiologically testable hypothesis (Noble, 2011) and DNA can’t be regarded as a replicator that’s independent from the cell (Noble, 2018). In any case, the argument in this book is that inequality is due to natural selection and there isn’t much we can do about capitalism since genes make us selfish and capitalism is all about selfishness. But being too selfish leads to the huge wealth inequalities we see in America today. The argument is pretty novel but it fails since it is a just-so story and the claims about “natural selection” are false.

(See Kampourakis, 2017 and Richardson, 2017a for a great overview on what genes are and what they really do.)

Hereditarianism and mind-brain identity

Physicalism about the brain is an implicit assumption of hereditarianism. Ever since the power of our neuroimaging methods increased at the beginning of the new millennium, many studies have come out correlating different psychological traits with different brain states. Processes of the mind, to the mind-brain identity theorist, are identical to states and processes of the brain (Smart, 2000). And in the past two decades, studies correlating physiological brain states and psychological traits have increased in number.

The leading theorists here are Haier and Jung with their P-FIT model. P-FIT stands for the Parieto-Frontal Integration Theory, which was first proposed by Jung and Haier (2007), who analyzed 37 neuroimaging studies. This, they claim, will “articulate a biology of intelligence.” (Also see Colom et al, 2009.) Again, correlations are expected but we can’t then claim that the brain states cause the trait (in this case, “IQ”). (See Klein, 2009 for a primer on the philosophical issues in neuroimaging.)

But in 2012 psychologist William Uttal published his book Reliability in Cognitive Neuroscience: A Meta-meta Analysis where he argues that pooling these kinds of studies for a meta-analysis (exactly what Jung and Haier (2007) did) “could lead to grossly distorted interpretations that could deviate greatly from the actual biological function of an individual brain.” Pooling multiple studies from different individuals taken at different times of the day under different conditions would lead to a wide variation in physiologies, never mind the fact that motion artifacts can influence neuroimages, and that emotion and cognition are intertwined (Richardson, 2017a: 193).

The point is, we cannot pool together these types of studies in an attempt to localize cognitive processes to states of the brain. This is exactly what P-FIT does (or attempts to do). In any case, the correlations found by Jung and Haier (2007) can be explained by experience. IQ tests are experience-dependent (that is, one must be exposed to the knowledge on the test and must be familiar with test-taking), and so too are parts of the brain that change based on what the person experiences. We cannot say that the physiological states are the cause of the IQ score: since the items on the test are more likely to be encountered in middle-class environments, middle-class test-takers would be more prepared for the test.

Socially disastrous claims

Views from the likes of Robert Plomin, that there’s “not much we can do” about “environmental effects” (Plomin, 2018: 174), are socially disastrous. If such ideas become mainstream then we may desist with programs that actually help people, on the basis that “it doesn’t work.” But this claim, that environmental effects are “unsystematic and unstable”, is derived from conclusions based largely on twin studies, in which whatever variance is left over is attributed to the environment. (Do note, though, that Plomin’s claim that DNA is a blueprint is false.)

Hereditarians like Plomin then claim that environmental effects derive from one’s genotype so in actuality environmental effects are genetic effects—this is called “genetic nurture.” By using this new concept, the reductionist can skirt around environmental effects and claim that the effect itself is genetic even though it’s environmental in nature. Genes, in this concept, are active causes, actively causing parental behavior. So genes cause parental behavior which then influences how parents treat/parent their children. In this way, behavioral geneticists can claim that environmental effects are genetic effects too. (This is like Joseph’s (2014) Argument A in its circularity.)

By applying and accepting genetic reductionist claims, we rob people of certain life chances and we don’t commit ourselves to social justice. Of course, to the hereditarian, since the environment doesn’t matter then genes do. So we need to look at society from the gene-view. But this view betrays how and why our current social structures are the way they are. “IQ” tests were originally created to show that the current social hierarchy is the “right one”, and hereditarians believe they have shown that the hierarchy is “genetic”, so that each group has its place on the social hierarchy on the basis of IQ scores which reduce to genes (Mensh and Mensh, 1991).

But humans are social creatures and although hereditarians attempt to reduce human social life to genes (in a circular manner), they fail. And their failing has led to the destruction of thousands of lives (see the sterilizations in America during the 1900s and around the world, e.g., in Cohen, 2016 and Wilson, 2017). Attempts to reduce social behavior to genes have been made over the past 20 years (e.g., Jensen, 1998; Rushton, 2000; Lynn, 2006; Hart, 2007) but they all fail (Lerner, 2018, 2021). On the hereditarian view, social (environmental) changes cannot undo what the genes have “set” in individuals and so we need not pour money into social programs.

For instance, many hereditarians and criminologists have espoused eugenic views, like Jensen’s claim that welfare could lead to the genetic enslavement of a part of the population (Jensen, 1969: 95) and that we can “estimate a person’s genetic standing on intelligence” based on their IQ score (Jensen, 1970: 13), to name two. It is no surprise to me that people who hold such reductionist views of genes and society would also hold eugenic views like these. It is, in fact, a logical endpoint of hereditarianism: “phasing out” populations, as Lynn described in his review of Cattell’s Beyondism (see Tucker, 2009).

The answer to hereditarianism

Since we have to reject hereditarianism, the answer to hereditarian dogma is relational developmental systems (RDS) theory, which emphasizes the actions of all developmental resources rather than reducing development to one primary developmental resource as hereditarians do. Similar things have been noted by other developmental systems theorists, most notably Oyama (1985/2000). What is selected aren’t genes or behaviors. What is actually selected is the whole developmental system. Genes aren’t active causes. So if we look at development as a dance with music, as Noble (2006, 2016) does, there are no sufficient causes for development, but there are necessary causes, of which genes are but one part of the whole system.

The answer to hereditarianism is to simply show that it fails conceptually, that its “causal” framework for explaining the differences (“natural selection”) is unsound, and that multiple interacting factors are responsible for human development in the womb and throughout the life course. “Theories derived from RDS meta-theory focus on the “rules,” the processes that govern, or regulate, exchanges between (the functioning of) individuals and their contexts” (Lerner, 2021: 457). Hereditarianism relies on gene-selectionism. But genes are not leaders in evolution; development is inherently holistic, not reductionist.

Conclusion

The hereditarian program began with Francis Galton. After the first “IQ” test was made (Binet’s), American eugenicists used it to “show” who was a “moron” (meaning, who had a low “IQ”, taken to mean low “intelligence”). Tens of thousands of sterilizations were soon carried out on the grounds that the causes of these problems were in these people’s genes, and so negative eugenics needed to be practiced in order to cull the population of the genes that supposedly lead to socially undesirable traits.

The hereditarian hypothesis is, therefore, a racist hypothesis, contra Carl (2019) who argued the hereditarian hypothesis is not racist while citing many arguments from critics. I won’t get into that here, as I have many articles on the matters that Carl (2019) discusses. But what I will say is that the hereditarian hypothesis is racist in virtue of (1) not being logically plausible (reductionism about the mind and physicalism are both false) and (2) ranking races on a scale of “higher to lower” (that is, a hierarchy). Racism “is a system of ranking human beings for the purpose of gaining and justifying an unequal distribution of political and economic power” (Lovechik, 2018). Therefore, the hereditarian hypothesis is a racist hypothesis, contra Carl’s protestations. Hereditarians may claim that their claims are stifled in the public debate, but for behavioral genetics at large, this is false (see Kampourakis, 2017). Carl (2018) claims that “stifling” the debate around race, genes, and IQ can do harm but he is sadly mistaken! When differences that can be changed are believed to be “genetic”, they are deemed to be unfixable, and the groups who have a higher frequency of whichever genes are (supposedly) causally efficacious for IQ will then be treated differently.

If neuroreduction (mind-brain reduction) is false, if genetic reduction is false, and if natural selection isn’t a mechanism, then hereditarianism cannot possibly be true, and if heritability . The arguments given here complement my conceptual arguments against hereditarianism and add force to the case against the hereditarian hypothesis. Just like with my argument to ban IQ tests, we must ban hereditarian research too, since the outcomes can be socially disastrous (Lerner, 2021 part VI, Developmental Theory and the Promotion of Social Justice). By now, these kinds of “theories” and claims have been refuted to hell and back, and so the only reason to hold these kinds of beliefs is racist attitudes (combined with some mental gymnastics).

So for these, and many more, reasons, we must outright reject genetic reductionism (not least because these claims derive from flawed studies that rest on false assumptions, like twin studies) along with its partner “natural selection.” We therefore must commit ourselves to social justice to ameliorate the effects of racist attitudes and views.

Binet and Simon’s “Ideal City”

1500 words

Ranking human worth on the basis of how well one compares in academic contests, with the effect that high ranks are associated with privilege, status, and power, does suggest that psychometry is best explored as a form of vertical classification and attending rankings of social value. (Garrison, 2009: 36)

Binet and Simon’s (1916) book The Development of Intelligence in Children is something of a Bible for IQ-ists. The book chronicles the methods Binet and Simon used to construct their tests for children to identify those children who needed more help at school. In the book, they describe the anatomic measures they used. Indeed, before becoming a self-taught psychologist, Binet measured skulls and concluded that skull measurements did not correlate with teachers’ assessments of their students’ “intelligence” (Gould, 1995, chapter 5).

In any case, despite Binet’s protestations that Gould discusses, he wanted to use his tests to create what Binet and Simon (1916: 262) called an “ideal city.”

It now remains to explain the use of our measuring scale which we consider a standard of the child’s intelligence. Of what use is a measure of intelligence? Without doubt one could conceive many possible applications of the process, in dreaming of a future where the social sphere would be better organized than ours; where every one would work according to his own aptitudes in such a way that no particle force should be lost for society. That would be the ideal city. It is indeed far from us. But we have to remain among the sterner and matter-of-fact realities of life, since we here deal with practical experiments which are the most commonplace realities.